Posts posted by joerghampel


Dear community and network,

At HSE, we are big believers in Inner Source (https://en.wikipedia.org/wiki/Inner_source) and apply it to our customer projects. By releasing our code to the public as open source, free of charge, we make sure we stay on top of the tools and processes needed for that, and we give back to the awesome LabVIEW community. You can browse our open-source offerings at https://code.hampel-soft.com.

We constantly work to give back to the community and widen our offerings. But we need your help! Please let us know how you use our open-source LabVIEW and DQMH code by taking this quick (promise!) survey: https://bit.ly/HSE-OS-Survey-2022

This survey is targeted at those of you who use our tools. But even if you don't use any HSE offerings, we have some questions for you. We want to hear your feedback so we can keep improving our offerings and our logistics.

Thank you in advance!!

Joerg

PS: This has been posted on the dark side, too.

-

3 hours ago, bjustice said:

I just go to see all of you guys "dance" at the Spazmatics band night

Oh man I really really miss that!!

-

On 7/22/2020 at 12:07 AM, Neil Pate said:

Sorry you are right. Bug report submitted.

Hey Neil, can you share the CAR number for this (LV not releasing closed projects from memory)?

-

6 hours ago, Rolf Kalbermatter said:

However I have to say that this trend seems to have been reversed in recent times.

I can confirm this trend; I've had very good experiences over the last year or so.

-

Generally, once the project is set up, it usually doesn't change much for us. If you're using .lvlib's and/or LVOOP to organise your code, ongoing development will not touch the .lvproj file much.

Yet another option... Have two .lvproj files: One for the developer, and another one for the installer.

-

2 minutes ago, ShaunR said:

I wouldn't have passed your interview.

I'd have happily helped you along those steps 😍 so you can get to the actual work!

But seeing as git is integral to our way of working, that's an important piece of information for me.

-

For the last few candidates I interviewed, I had prepared the following process:

- a ~1-hour conversation to get a feeling for the person (in person until the pandemic hit, then via MS Teams), chatting about their past experience, current work, personal interests, involvement in the community etc.

- a sample project to work on, which they had to clone from GitLab (via git), get the problem statement (requirements) from the readme, and describe in words how to solve the problem

- another short conversation about their reply and suggested solution

- implement part of the requirements in the sample project and submit the solution via GitLab (git)

- another conversation about how they solved it, why, etc.

Regarding certifications, I recently posted to LinkedIn about my opinion on them.

Edit: The project mentioned above is an actual project of ours, where we had to implement some small changes to a very badly written piece of legacy code with no encapsulation, documentation, etc.

-

On 8/2/2021 at 9:36 PM, infinitenothing said:

Find your local integrator and poach anyone that's been there for a few years. Basically, the integrators like to "train on the job" so the customers are effectively paying for the training. You can usually out pay the integrator for talent because they have to maintain margins on their contract hourly rates.

So you're saying that because a customer pays for a solution they get, they buy the rights to the people who worked on it? I don't agree with that notion.

Also, while people can't be kept from switching jobs, and companies can't be kept from advertising their vacancies, "poaching" your local integrator's employees doesn't sound like the right thing to do to me. Good thing my employees are in Germany and thus not local to you.

-

Tom McQuillan offers a tool that helps with updating VI descriptions and icons: https://github.com/TomsLabVIEWExtensions/Documentation-Tool

AntiDoc by wovalab (Olivier Jourdan) generates very nice and extensive documentation for DQMH modules, LV classes and .lvlibs. Here's an example PDF of our open-source application template.

-

-

Our next meeting takes place on Thursday, 29 July, from 17:00 to 20:00:

Certifications in the LabVIEW and NI world

NI's certification program offers the opportunity to demonstrate your knowledge and experience in working with NI products. Beyond that, the certifications can also be an occasion to broaden your horizons together with colleagues or other LabVIEW friends.

In our meeting, we will learn about NI's course and certification offerings. Of course, we will also take a close look at the sample exams and hear tips and tricks "straight from the source". In addition, we will start a study and preparation group and work together towards the next exam dates.

The meeting will be held in German. You'll find the meeting details further below. Please make sure to register on Eventbrite!

I'm looking forward to seeing you again soon, at least virtually!!

Best regards

Jörg

When:

Thursday, 29 July, 17:00 CEST

Where:

Virtually via Microsoft Teams (link via Eventbrite)

As always, there will be a test meeting beforehand so that all participants can test their setup. You can find some help on virtual meetings and on MS Teams in our Dokuwiki. Please don't forget to register via Eventbrite!

Agenda:

- Overview of NI training and certifications (Adrienn Nagy)

- Possibly: Certification Details (NI team USA, in English)

- Tips, tricks and insights into certification exams (Andreas Kreiseder)

- Sample exams, open discussion

Registration:

https://wuelug13.eventbrite.de

-

@The Q started such a thing on the LabVIEW Wiki:

-

-

Another reason for git (not LabVIEW!) reporting changes where there don't seem to be any is file permissions.

If your repository is on a filesystem where executable bits are unreliable (like FAT), git should be configured to ignore differences between the file modes recorded in the index and the file modes on the filesystem if they differ only in the executable bit:

git config --local core.filemode false

and/or

git config --global core.filemode false

And while we're at configuring git for Windows: Due to a Windows API limitation that restricts file paths to 260 or fewer characters, Git checkouts fail on Windows with “Filename too long error: unable to create file” errors if a path is longer than 260 characters. To resolve this issue, run the following command from GitBash or the Git CMD prompt:

git config --system core.longpaths true

(Taken from our public Dokuwiki)
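To check whether a working copy is affected by the path length limit at all, a small script can list the offending paths. The following is a minimal Python sketch, not part of git or of our tools: the function name `find_long_paths` and the constant `WINDOWS_MAX_PATH` are made up for illustration, and the threshold of 260 is simply the number quoted above.

```python
import os

# Threshold taken from the text above; name chosen for this sketch only.
WINDOWS_MAX_PATH = 260


def find_long_paths(root, limit=WINDOWS_MAX_PATH):
    """Return (length, path) tuples for entries whose absolute path exceeds limit.

    The result is sorted with the longest paths first.
    """
    long_paths = []
    for dirpath, dirnames, filenames in os.walk(os.path.abspath(root)):
        # Do not descend into git's own metadata directory.
        if ".git" in dirnames:
            dirnames.remove(".git")
        for name in dirnames + filenames:
            full = os.path.join(dirpath, name)
            if len(full) > limit:
                long_paths.append((len(full), full))
    return sorted(long_paths, reverse=True)
```

Calling `find_long_paths(".")` from the repository root returns an empty list if no path exceeds the limit. Lowering `limit` a little leaves headroom for colleagues who clone into a deeper folder.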

-

18 hours ago, Matt_AM said:

I thought the EHL was the "top loop" that listens to user events or button presses via the event loop. The EHL would then send a message via queue to the "lower loop" of the MHL which would then be the primary place where you "do the work" of the module.

Exactly, what you say here is correct. I was confused by your suggestion to "use the MHL timeout" - but I realise now you actually meant to change the timeout setting for reading from the message queue in Delacor_lib_QMH_Dequeue Message.vi, right?

That could be done by implementing your own child class for the default DQMH Message Queue class and overriding that method. While there definitely are proper use cases for implementing your own child class of the Message Queue, I don't think this is one. I would advise against going down that rather unusual road when there are simpler solutions available.

18 hours ago, Matt_AM said:

Regrading the helper loop reccomendation, it sounds like the helper loop would be triggered by the user events of a different module...? The way that I have been imagining the helper loop, it would set some timeout/flag to start and stop the module via the EHL getting a request to start broadcasting which sends a message to the MHL to set the flag to initialize the helper loops. Likewise when the code needs to stop, the EHL sends a message to MHL which sends the flag to the helper loop. This feels a bit cumbersome, but thats how I am currently visualizing the process.

You can make your helper loop private (then the only way to control it from outside the module is to go through EHL and MHL, just as you describe).

Or, you can design your helper loop to contain an event structure, and make it publicly available by registering for (some of the) DQMH module's request events directly in the helper loop's event structure. This is the design we describe in our blog post on helper loops.
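For readers who think in text-based code, the idea of a helper loop that receives requests directly can be sketched in Python. This is only an analogy, not DQMH or LabVIEW code: the class `HelperLoop`, its methods and the request strings are all made up for illustration. A queue stands in for the event structure, and the sketch assumes the loop is switched on and off by changing its wait timeout, as hinted at in the quoted question.

```python
import queue
import threading


class HelperLoop:
    """Python analogy of a helper loop that is enabled/disabled via requests."""

    def __init__(self, period_s, on_tick):
        self._requests = queue.Queue()  # stands in for the registered request events
        self._period_s = period_s
        self._on_tick = on_tick         # the periodic work, e.g. sending a broadcast
        self._thread = threading.Thread(target=self._run, daemon=True)

    def start(self):
        self._thread.start()

    def enable(self):
        self._requests.put("enable")

    def disable(self):
        self._requests.put("disable")

    def stop(self):
        self._requests.put("stop")
        self._thread.join()

    def _run(self):
        enabled = False
        while True:
            # Disabled: wait forever for the next request.
            # Enabled: wake up after period_s if no request arrives.
            timeout = self._period_s if enabled else None
            try:
                request = self._requests.get(timeout=timeout)
            except queue.Empty:
                self._on_tick()  # timeout elapsed, do the periodic work
                continue
            if request == "enable":
                enabled = True
            elif request == "disable":
                enabled = False
            elif request == "stop":
                break
```

Because `enable()`, `disable()` and `stop()` put requests straight into the helper loop's own queue, callers do not need to go through a second loop first, which mirrors the "public" variant described above.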

-

Hey Matt, great that you're looking into using DQMH!

Regarding your first question, I think you're using the term MHL (Message Handling Loop, the one in the bottom with the case structure) to describe the EHL (Event Handling Loop, the one on top with the event structure).

You're saying that "by having my helper loop broadcast, I feel like I'm taking away from the main design that the MHL is supposed to broadcast". I don't think that by design only the MHL or EHL should do broadcasting. I would most definitely go with a helper loop. If you haven't seen it yet, feel free to take a look at our blog post on helper loops. It shows how to create helper loops that can be enabled/disabled.

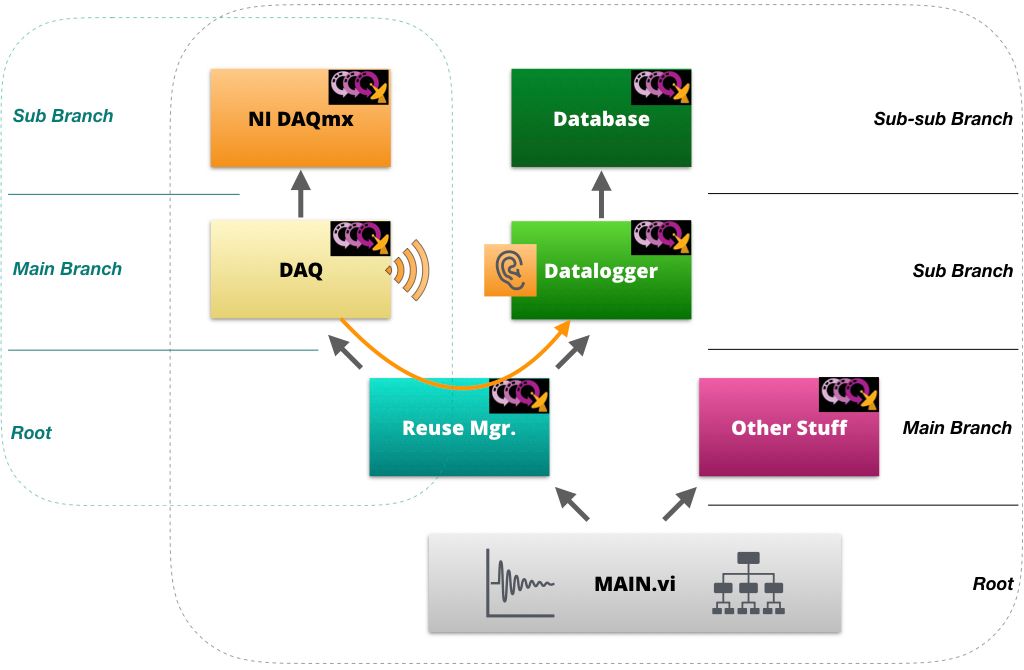

Regarding your second issue, many people advocate structuring your modules like a tree, for exactly those reasons you already mentioned. You will find that as you remove the static dependencies, the reusability of Modules B and C will make up for a little "cumbersomeness".

Here's a graph illustrating that tree structure (I created it for another thread some time ago, so please excuse that the naming is different to yours):

Let me know if this helps!

-

-

Yes, sorry, and thanks for pointing that out. It seems the editor thought the dot would make a nice addition to the URL, and I didn't catch it. I just fixed it.

-

...and for what it's worth, we use G-CLI, which sets the "App.UnattendedMode" property. That seems to be all it needs. G-CLI also lets you kill the LabVIEW process after some timeout in case it should hang.
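The "kill after a timeout" idea is useful for any unattended build step, not just LabVIEW. As a generic illustration, here is a minimal Python sketch of such a watchdog. It does not use or reproduce G-CLI's options; the function name `run_with_timeout` is made up, and the command to run is whatever your build server would start.

```python
import subprocess


def run_with_timeout(command, timeout_s):
    """Run command; return its exit code, or None if it was killed on timeout."""
    try:
        completed = subprocess.run(command, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        # subprocess.run() has already killed the child process at this point.
        return None
    return completed.returncode
```

A build script could treat a `None` result as "the process hung" and fail the job instead of blocking the runner indefinitely.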

-

19 hours ago, ThomasGutzler said:

I guess, in theory, my stuff works too. It's just the pain of debugging when things don't go as they should because LabVIEW decided to corrupt its compiled objects cache, or that build error that we get when we have the build folder open while it's building that sometimes happens even that folder isn't open, or solar flares...

I don't disagree. I've been working on our tools for ~ 10 years now, maybe 5 years running them automatically on a server. Some kinks could be ironed out, others worked around. It's definitely still a pain sometimes (add to the LabVIEW woes some other troubles like VMs losing network connectivity etc.).

All in all, the whole experience has taught us so much process-wise. And of course, once you've got used to something, you don't want to do without it anymore.

I believe that's the actual USP of our tools for our customers: The process we teach while integrating our tools into their environment.

-

Have you seen Sam Sharp's presentation on Test Complete?

-

-

Answering the question in this post's title: Yes!

We're very happy with our solution: we can apply .vipc's, validate DQMH modules, run VI Analyzer and Unit Tests, create documentation from source code, execute build specifications, create .vip's, package results into zip archives and deploy (move the result files somewhere). We have also created plugins for our Dokuwiki which automatically compile a release list with links to the artefacts. At the moment, we're working on adding FPGA compilation to the list of features.

It's a commercial product, but you might get some inspiration from the information on the product website at https://rat.hampel-soft.com.

-

I've only seen/used TOML for GitLab CI's gitlab-runner configuration (i.e. not in LabVIEW)...

-

1. What type of source control software you are using?

Git on GitLab with Git Fork or Git Tower

2. You love it, or hate it?

LOVE it!!

3. Are you forced to use this source control because it's the method used in your company, but you would rather use something else

We get to choose, and git is the scc system of our choice.

4. Pro's and Con's of the source control you are using?

Pro: Decentralised, very popular (i.e. many teams use it), very flexible, very fast, feature branches, tagging, ...

Con: Complicated to set up if using SSH, exotic use cases/situations can be very hard to solve and sometimes need turning to the command line

5. Just how often does your source control software screw up and cause you major pain?

Never. It's always me (or another user) doing something wrong. For our internal team of 5, it never happens. For our customers, the occasional problem occurs.

As mentioned above, we use Git Fork and/or Git Tower as our graphical UIs to git. IMHO, using a graphical client is soo superior to working with git on the command line (but I'm posting to a LabVIEW forum, so who am I telling this?).

In order to avoid having to merge VIs (we actually do not allow that in our internal way of working), we use the gitflow workflow. It helps us with planning who's working on which parts of an application (repo). I can honestly count the number of unexpected merge conflicts in the last few years on one hand.

On top of git, many management systems like GitHub, GitLab, Bitbucket etc. offer lots of functionality like merge requests, integration with issue tracking, and other project-management-type features.

-

-

I wrote a blog post about separating compiled code a while ago. It might be interesting for you:

https://www.hampel-soft.com/blog/separate-compiled-code-from-source/

-

-

For those of you interested in exploring CI/CD with git (and especially with GitLab), please take 5 minutes to visit our Release Automation Tools for LabVIEW website.

At the very least, you'll get an impression of (and maybe some inspiration from) what we've been doing for ourselves and for some of our customers for quite some time now. A ready-made solution might save you a lot of development time. If you want more details, I can share recordings of webinars we did a while back. I'd also be happy to show you around myself.

Spoiler(?) alert: This is a commercial tool.

-

+1 for allowing GitHub as a GPM repo (and GitLab, too).

I watched Derek's CLA Summit presentation on What's new in GPM and was intrigued by the local/private repositories! Don't get me wrong, we already share our own libs and might also do so via GPM, but this probably makes it viable to use it for our customer projects. I will definitely have a play with it in the holidays (and am looking forward to it 🙂 )

-

Hey Thomas, feel free to contact us via our website. We have CPIs on our team and offer both NI courses and customised training, consulting and workshops (see https://www.hampel-soft.com/services/). If we're not the right match, I'm sure I can forward your request to other consultants.