Posts: 81

Days Won: 9


Posts posted by Youssef Menjour

-


Good morning,

Ah, I hadn't seen this answer! I don't understand the point of putting NI Linux RT online. What is it for?

I simply want to deploy my code to this target, which has a GPU.

In fact, what I want is to deploy LabVIEW code on my Jetson target.

I don't want to install LabVIEW RT on my target; I just want to deploy my code as I would on a regular machine. Is this possible on an Ubuntu ARM target, as was done on the Raspberry Pi?

The idea is to use the Jetson GPU for HAIBAL deployments. I asked NI, but they didn't answer me.

Who could be our contact at NI?

-

As I understand it, LabVIEW RT can be installed anywhere, provided you know how to modify the Linux kernel source code (which is open source).

It's possible, but it seems to require strong knowledge of Linux OS development.

We will start working on deploying LabVIEW RT on the Jetson.

New challenge accepted!

-

Hi everyone,

Can someone explain to me what this repository is for? Starting from the NI Linux RT source code, could we imagine, after a lot of hard development work, a path to, for example, deploying LabVIEW RT code on a Jetson Nano?

I would really like to understand what it is. Thank you.

-

Hi everyone,

I would like to modify a project build specification using only the path of the .lvproj as input.

Is it possible? I would like to change the destination directory of a Build Specification with a VI.

Thank you for your help

-

Good morning

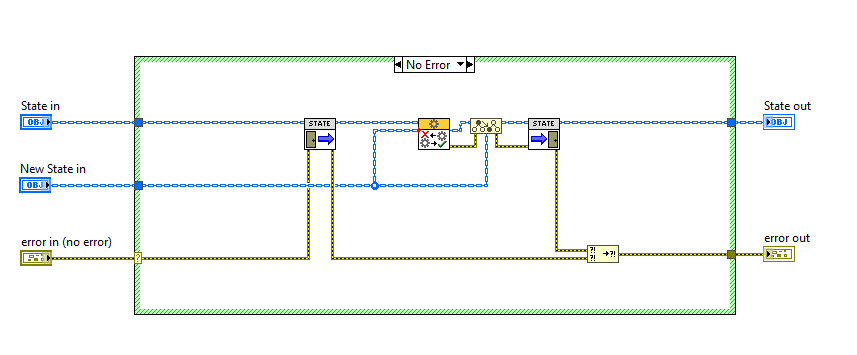

I downloaded the famous state actor pattern and, clearly, it explains absolutely nothing.

Where can I find an example? What is it for? Does anyone really know? There are no examples anywhere in the NI examples, and this VI shows absolutely nothing.

How do we launch this switch actor? Where? I don't understand the point of this VI.

When hitchhiking, is the switch actor useful? (Obviously not, since the FIFO connections are never cut and the nested actors are not deleted in the actor that is going to be switched.)

-

OK, I'll check.

Thank you.

-

It's quite frustrating not to get a direct answer to my question and to be forced to dissect a package to understand it.

The simplest thing would have been to explain this override better.

I'll go and look, but it's really not practical.

-

I really need to understand how it works.

Unfortunately, there is little information on the Substitute Actor override.

Is anyone able to help me?

-

Hi everybody,

I'm trying to use the Substitute Actor override in the Actor Framework, but there is no example on the internet.

Could someone provide a simple example to help me understand it? Thank you.

-

Here are some examples of semantic segmentation with the 𝐓𝐈𝐆𝐑 𝗟𝗮𝗯𝗩𝗜𝗘𝗪 𝐯𝐢𝐬𝐢𝐨𝐧 𝐭𝐨𝐨𝐥𝐤𝐢𝐭 (available now for testing).

This is accomplished by using a U-Net model together with a basic 𝗟𝗮𝗯𝗩𝗜𝗘𝗪 state machine architecture, showing how the capabilities of HAIBAL, the LabVIEW deep learning toolkit by Graiphic, enable seamless integration of any model into practical applications with minimal effort.

Visit us now --> www.graiphic.io

𝐓𝐈𝐆𝐑 website --> https://tigr.graiphic.io/

Download GIM and try 𝐓𝐈𝐆𝐑 𝗟𝗮𝗯𝗩𝗜𝗘𝗪 𝐯𝐢𝐬𝐢𝐨𝐧 𝐭𝐨𝐨𝐥𝐤𝐢𝐭 --> https://lnkd.in/eUtVumG2

Get started with TIGR --> https://lnkd.in/dssB-MS4

-


This video may not look like it, but for us it represents an enormous amount of effort, difficulty, sacrifice and financial means. It is with special emotion that we proudly unveil the upcoming major update for HAIBAL, the LabVIEW deep learning toolkit by Graiphic.

In a few weeks, we will introduce a significant enhancement to our deep learning toolkit for LabVIEW. This update takes our tool to a new dimension by integrating a range of reinforcement learning algorithms: 𝐃𝐐𝐍, 𝐃𝐃𝐐𝐍, 𝐃𝐮𝐚𝐥 𝐃𝐐𝐍, 𝐃𝐮𝐚𝐥 𝐃𝐃𝐐𝐍, 𝐃𝐏𝐆, 𝐏𝐏𝐎, 𝐀𝟐𝐂, 𝐀𝟑𝐂, 𝐒𝐀𝐂, 𝐃𝐃𝐏𝐆 𝐚𝐧𝐝 𝐓𝐃𝟑.

Naturally, this update will include practical, easy-to-use examples such as DOOM, MARIO and Atari games, and many more surprises will come along.

(StarCraft or not StarCraft?)

👉🏼 Visit us now

www.graiphic.io

👉🏼 Get started with TIGR vision toolkit

https://lnkd.in/dssB-MS4

👉🏼 Get started with HAIBAL deep learning toolkit

https://lnkd.in/e6cPn4Fq-


-

Thanks!

It's very simple, in fact...

-

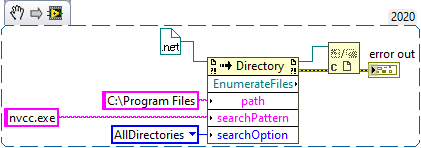

Hi,

I'm using .NET with LabVIEW and I'm having some difficulty opening a reference.

I use mscorlib (4.0.0.0) as the assembly (System.IO.Directory class). The method I'm testing is EnumerateFiles, which returns a reference representing "an enumerable collection of full file names that meet specified criteria."

Now my problem is finding a method that takes this reference and gives me back the information in a form LabVIEW can read.

Can someone help me?

Thank you
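For reference, the object behind that reference is a .NET IEnumerable<string>. It is consumed by obtaining an enumerator (GetEnumerator), calling MoveNext until it returns false, and reading Current after each successful call; the enumerator starts positioned before the first element. The C sketch below models only that protocol with a hypothetical StringEnumerator type. It is not .NET or LabVIEW code, just an illustration of the loop a LabVIEW While Loop built from Invoke and Property Nodes would presumably mirror.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical stand-in for an IEnumerator<string>: the position starts
   before the first element, MoveNext advances and reports whether an
   element is available, and Current holds that element. */
typedef struct {
    const char *const *items; /* backing data (hypothetical) */
    size_t count;
    size_t next;              /* index MoveNext will move to */
    const char *current;      /* NULL before the first / after the last */
} StringEnumerator;

static StringEnumerator enumerator_create(const char *const *items, size_t count) {
    StringEnumerator e = { items, count, 0, NULL };
    return e;
}

/* Equivalent of MoveNext(): false once the collection is exhausted. */
static bool enumerator_move_next(StringEnumerator *e) {
    assert(e != NULL);
    if (e->next >= e->count) {
        e->current = NULL;
        return false;
    }
    e->current = e->items[e->next++];
    return true;
}

/* Drain the enumerator into a caller-supplied array with the
   MoveNext/Current loop. Returns the number of names copied (<= cap). */
static size_t collect_all(StringEnumerator *e, const char **out, size_t cap) {
    size_t n = 0;
    while (n < cap && enumerator_move_next(e)) {
        out[n++] = e->current;
    }
    return n;
}
```

If lazy enumeration is not needed, Directory.GetFiles returns a plain string array instead, which may be simpler to bring into LabVIEW.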

-

On 4/22/2023 at 5:12 PM, Antoine Chalons said:

Really?

I haven't done any vision application in the last 4 years, but before that I was using IMAQ daily and I never had performance issues with the acquisition part.

And I had a few industrial applications that were quite intense in terms of image acquisition and processing.

The processing performance was also acceptable, except maybe for pattern matching. Can you describe an application in which IMAQ performance is too low for you?

I phrased it wrong.

Vision poses a problem for us because it requires an additional installation. The philosophy of our package is to be all-in-one so that it is as simple as possible for our users.

So we want to do without Vision while keeping the same performance. What also causes us problems is that we don't understand NI's offering at all: what is free, what is not, what is an add-on... nothing is clear. As a user, I don't feel I should have to go looking for information; it should be simple, which is not the case. (Well, that's our philosophy.)

To do this, we have to do the same thing the Alliance Vision team did at the time: a low-level switch (pointer).

We should be able to do that in the next few days.

-

We don't want to use IMAQ because its performance is bad for our application.

We would like to use .NET with pointers. Does anyone know how?

17 hours ago, Antoine Chalons said:

I guess you don't care about supporting non-Windows OSes, right?

For now we are working on Windows. In the future we'll work on Linux, but for now we are focusing on making it work well on Windows.
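A minimal sketch of the low-level copy that working "with pointers" usually implies, assuming the image source exposes a base pointer and a row stride in bytes (for example, System.Drawing's BitmapData exposes Scan0 and Stride). Rows are often padded beyond width × bytes-per-pixel, so the copy has to go row by row rather than as one block. The function name and parameters are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Copy an image whose rows are padded to src_stride bytes into a tightly
   packed destination of width * bytes_per_pixel bytes per row.
   Padding bytes at the end of each source row are skipped. */
static void copy_strided_image(const uint8_t *src, size_t src_stride,
                               uint8_t *dst, size_t width, size_t height,
                               size_t bytes_per_pixel) {
    size_t row_bytes = width * bytes_per_pixel;
    assert(src != NULL && dst != NULL);
    assert(src_stride >= row_bytes); /* padding can only add bytes */
    for (size_t y = 0; y < height; ++y) {
        memcpy(dst + y * row_bytes, src + y * src_stride, row_bytes);
    }
}
```

With a 2 × 2 grayscale image stored with a 4-byte stride, the two padding bytes per row are dropped and the destination holds the 4 pixels contiguously.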

-


In fact, we would like to compare image acquisition methods in terms of optimization (display speed).

.NET seems very interesting.

20 hours ago, hooovahh said:

I posted my Image Manipulation code over on VIPM.IO

https://www.vipm.io/package/hooovahh_image_manipulation/

Here is a video demonstrating some of its functionality, including loading an image into a picture box and manipulating it.

I looked at your example and it is a really good starting point!

The problem with .NET is the large number of functions; you have to be able to find your way around. I'm trying to display an image from an array of values in .NET and I haven't found how yet. What method should I use? I have not found a simple example that does it. 😱
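Displaying an array of values as an image generally comes down to converting each value into the pixel format the display object expects. The C sketch below assumes the target is a 32 bpp ARGB bitmap (for example .NET's PixelFormat.Format32bppArgb), where each pixel is one 32-bit integer laid out as 0xAARRGGBB, and that the values are already scaled to 0 to 255. The function name is hypothetical; getting the packed buffer into the bitmap is a separate step not shown here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Pack 8-bit grayscale values into fully opaque 0xAARRGGBB pixels by
   copying the gray level into the red, green and blue channels. */
static void gray_to_argb(const uint8_t *gray, uint32_t *argb, size_t count) {
    assert(gray != NULL && argb != NULL);
    for (size_t i = 0; i < count; ++i) {
        uint32_t g = gray[i];
        argb[i] = 0xFF000000u | (g << 16) | (g << 8) | g;
    }
}
```

For example, a value of 0x80 becomes 0xFF808080 (mid gray, alpha 255).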

23 hours ago, Antoine Chalons said:

Are you asking that in order to avoid the NI Vision Development Module license for your vision product?

If yes, you can install Vision Acquisition Software (no license needed for that, at least as of 4 years ago) and use the image display without needing a VDM license.

I wasn't aware of that! I'll test whether it's enough.

We already do it with our own solution, but we would like to try other methods because it lacks optimization.

I also think there is high potential in using .NET and I would like to try it.

-

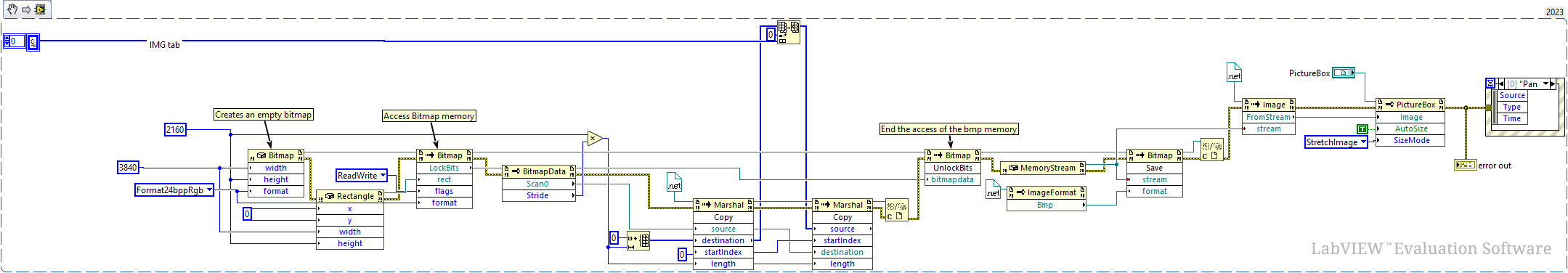

Hi everybody,

I'm discovering .NET in LabVIEW and I would simply like to display a JPG or BMP image.

Is it possible to have a simple example that does it? (I started with PictureBox and the ImageLocation property, but it doesn't seem to be the right approach.)

Thank you

-

It's working now; it was an installation problem.

-

Strange, I tested it on another machine and it works.

On my main computer it crashes 🤔

-

8 minutes ago, Antoine Chalons said:

Silly question: does it work if you don't use a cluster in the DLL but independent indicators (2 strings, a float and an int)?

This example is intentionally a cluster, for another application. I need a "complex" structure organized as a cluster.

Do you know how to do it?

-

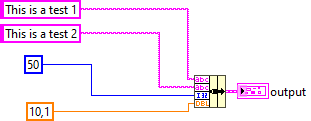

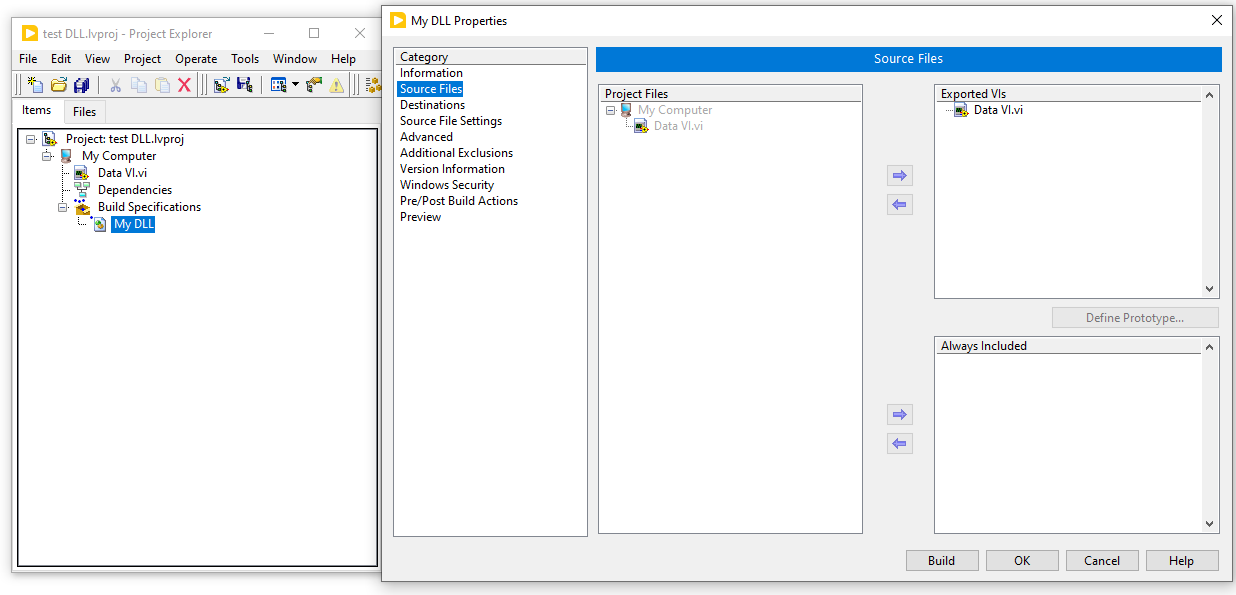

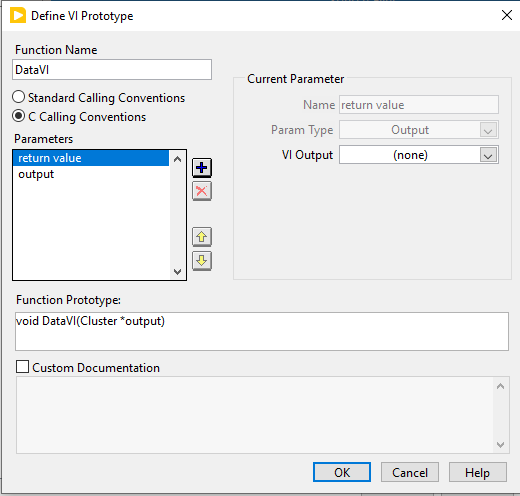

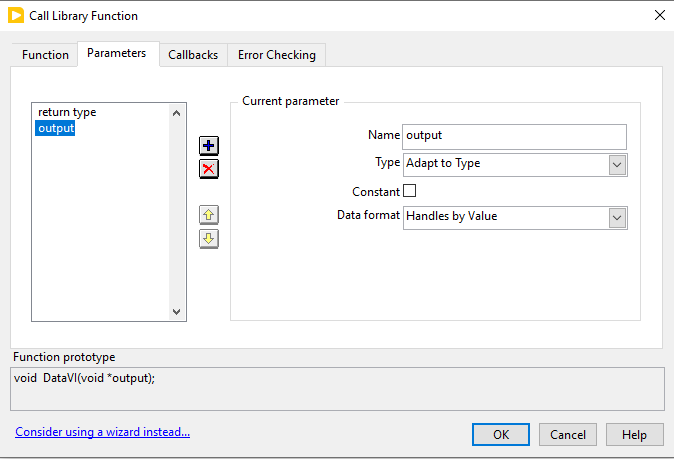

Hi everybody,

I'm generating a DLL with this simple function.

I want to retrieve the cluster through a DLL function, so I did it in a project.

I build the DLL and when I call it: crash!

To retrieve my cluster, I assumed the Type is "Adapt to Type" and the Data format is "Handles by Value".

Of course it's not!

Does anyone know what the Type and Data format of my output should be?

Thank you,

(I attached the VI and the project.)
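One workaround, in the spirit of Antoine's suggestion to use independent outputs: flatten the cluster into separate parameters and let the caller allocate every buffer, so no LabVIEW-managed string handles have to cross the DLL boundary. The sketch below is a hypothetical C equivalent of such an export for a cluster of two strings, a floating-point value and an integer; the function name, parameter names and placeholder values are all assumptions, not what LabVIEW generates.

```c
#include <assert.h>
#include <stdint.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical flat export: each former cluster element is its own
   parameter. Strings are caller-allocated buffers with explicit lengths,
   numerics are returned through pointers.
   Returns 0 on success, -1 if a string buffer is too small. */
int32_t GetMeasurement(char *name, int32_t name_len,
                       char *unit, int32_t unit_len,
                       double *value, int32_t *count) {
    const char *src_name = "sensor"; /* placeholder data */
    const char *src_unit = "V";      /* placeholder data */
    assert(name && unit && value && count);
    /* Need room for the characters plus the terminating NUL. */
    if ((int32_t)strlen(src_name) >= name_len ||
        (int32_t)strlen(src_unit) >= unit_len) {
        return -1;
    }
    snprintf(name, (size_t)name_len, "%s", src_name);
    snprintf(unit, (size_t)unit_len, "%s", src_unit);
    *value = 3.3; /* placeholder */
    *count = 42;  /* placeholder */
    return 0;
}
```

The caller, such as a Call Library Function Node, then only has to pre-allocate the string buffers and pass their sizes, which avoids any question of who owns or frees the memory.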

-

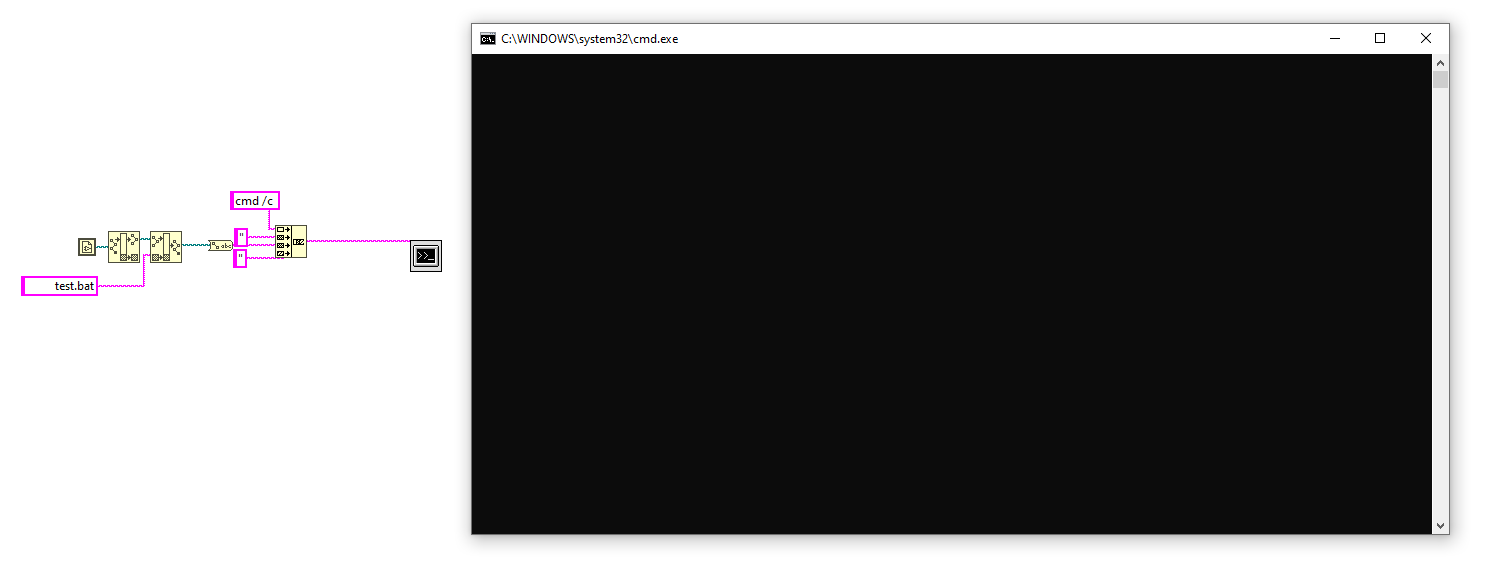

On 2/4/2023 at 1:44 PM, ShaunR said:

Start is an extension. Remove "start" or enable cmd extensions (/e:on). I'm guessing you think the "/x" is enabling extensions. It's not. There is no "/x".

I have removed start, but the bat file still does not launch. Why?

How do I launch a .bat file with cmd from LabVIEW?
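Following ShaunR's point, one common pattern is to hand the batch file to cmd.exe explicitly: /C runs the command and then exits, and /E:ON enables command extensions if the batch file relies on them. The sketch below only builds that command string (the helper name is hypothetical); the result is what would go into System Exec's command line input. cmd.exe quoting rules get more involved once arguments that themselves contain quotes are added.

```c
#include <assert.h>
#include <stdio.h>

/* Build: cmd /E:ON /C "<bat_path>"
   The path is quoted so that spaces in it survive.
   Returns the command length, or -1 if out is too small. */
static int build_bat_command(const char *bat_path, char *out, size_t out_len) {
    assert(bat_path != NULL && out != NULL);
    int n = snprintf(out, out_len, "cmd /E:ON /C \"%s\"", bat_path);
    return (n < 0 || (size_t)n >= out_len) ? -1 : n;
}
```

If the batch file uses relative paths, setting System Exec's working directory input to the batch file's folder may also be necessary.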

Deep Learning toolkit beta test is now

in Code In-Development

Posted · Edited by Youssef Menjour

We are still looking for beta testers to join the ongoing testing phase of SOTA, our unified development environment for deep learning in LabVIEW. Now in its 36th version, SOTA is designed for developers interested in exploring deep learning using graphical programming. If you're passionate about innovation and eager to shape the future of graphical deep learning, we would love to hear from you!

🚀 𝐉𝐨𝐢𝐧 𝐭𝐡𝐞 𝐋𝐚𝐛𝐕𝐈𝐄𝐖 𝐒𝐎𝐓𝐀 𝐁𝐞𝐭𝐚 𝐓𝐞𝐬𝐭𝐞𝐫 𝐏𝐫𝐨𝐠𝐫𝐚𝐦 𝐚𝐧𝐝 𝐒𝐡𝐚𝐩𝐞 𝐭𝐡𝐞 𝐅𝐮𝐭𝐮𝐫𝐞 𝐨𝐟 𝐀𝐈 𝐃𝐞𝐯𝐞𝐥𝐨𝐩𝐦𝐞𝐧𝐭!

Are you passionate about artificial intelligence? Do you want to be part of a groundbreaking journey to revolutionize AI workflows? SOTA, the unified AI development platform, is looking for beta testers to explore its latest capabilities and provide valuable feedback.

𝐖𝐡𝐲 𝐉𝐨𝐢𝐧 𝐭𝐡𝐞 𝐒𝐎𝐓𝐀 𝐁𝐞𝐭𝐚 𝐏𝐫𝐨𝐠𝐫𝐚𝐦?

Be the First to Explore: Get early access to the 64th version of SOTA, featuring powerful tools like the Deep Learning Toolkit and Computer Vision Toolkit.

Collaborate and Innovate: Share your insights and ideas to help us refine and improve the platform.

Experience Unmatched Simplicity: Discover how SOTA’s graphical programming language, ONNX interoperability, and optimized runtime simplify complex AI workflows.

⭐ 𝐖𝐡𝐚𝐭’𝐬 𝐢𝐧 𝐢𝐭 𝐟𝐨𝐫 𝐘𝐨𝐮?

𝐄𝐱𝐜𝐥𝐮𝐬𝐢𝐯𝐞 𝐀𝐜𝐜𝐞𝐬𝐬: Be part of an exclusive group shaping the next generation of AI tools.

𝐄𝐚𝐫𝐥𝐲 𝐈𝐧𝐧𝐨𝐯𝐚𝐭𝐢𝐨𝐧𝐬: Test cutting-edge features before they’re released to the public.

𝐃𝐢𝐫𝐞𝐜𝐭 𝐈𝐦𝐩𝐚𝐜𝐭: See your feedback implemented as part of SOTA’s evolution.

💡𝐖𝐡𝐨 𝐂𝐚𝐧 𝐀𝐩𝐩𝐥𝐲?

Whether you're an engineer, researcher, or AI enthusiast, your voice matters. If you're curious, innovative, and ready to explore, we want you on board!

👉Contact us if you want to join the open beta!

https://lnkd.in/dWrckRJV or hello@graiphic.io or PM

Stay informed and follow us on our YouTube channel!

👉 https://lnkd.in/dmP49rCa

Stay informed on our website!

👉 https://www.graiphic.io