Posts posted by Rolf Kalbermatter

-

-

20 hours ago, AjayMV said:

I guess it's an issue with VIPM 2026. I tried on another machine with VIPM 2023 and it installed fine. Now I'm looking for licensing details for Lua, and it looks like CIT Engineering is not there any more. Any leads on this, @Rolf Kalbermatter?

Thanks for the feedback. Will try to check what the problem with VIPM 2026 might be.

As to commercial information I saw that you have sent an email to info (at) citengineering.nl and will make sure that the person in question will respond to that.

-

2 hours ago, AjayMV said:

Hey Rolf, one such project popped up on our side and has old scripts in LuaVIEW. Unfortunately VIPM is not happy to open Lua4LabVIEW_Toolkit-2.0.5-2.ogp downloaded from https://www.luaforlabview.com/download.htm. It seems it's not listed on VIPM.io either. Do you by any chance know the last LabVIEW version it installed successfully on? We are trying with LV2023 64 bit.

-BR-

Ajay.

Can you tell me more about what the problem is with VIPM? Which VIPM version is that? And what error if any do you get?

-

5 hours ago, viSci said:

Just wanted to add another voice to the LuaVIEW fan club. Lua is an excellent scripting language and LuaVIEW is an excellent integration with LV. Keep hoping you might see the advantages of open sourcing it to the LV community.

Thanks for your feedback. I'm not the legal owner of Lua for LabVIEW, only the maintainer. It is unfortunately not my decision how it is distributed/sold.

But even if it was, I don't think I would actually open source it. But I would probably make it free.

-

On 3/6/2026 at 12:10 PM, ShaunR said:

It will eat away at you slowly...at first. Then every time you see the link you will know [it doesn't work]. Drip, drip, drip. It's like those crossed wires on your diagram - you tell yourself it doesn't matter, that it's just cosmetic, that there is no change in function. But eventually you have to do something about it.

Send that request to the admin, you know you want to

I tried hard to ignore your poisonous whisperings but eventually succumbed to them. 🤫

-

8 minutes ago, ShaunR said:

Your fastidiousness with code tells me this is an outright lie.

Hey, I didn't talk about code! This was about advertisement and commerce.

(And my lost privilege, which indeed hurts my sensitive soul a little 😁. It's soul crushing to read an old post of mine and discover typos in it.)

-

On 3/4/2026 at 6:36 AM, Vandy_Gan said:

RTK LVButtonGX Installer.exe

This is the installation package

Are you seriously expecting anyone to install a random executable on their system from an unknown publisher, provided by an anonymous person on the web, where one can't even get a proper link in Google to the actual company page?

Sorry, but anyone doing that should not be allowed within 5 m of a computer system!

-

1 hour ago, ShaunR said:

The link is broken for lazy people like me

F*ck! 😁

And I lost my privilege of being allowed to edit posts indefinitely some years ago for some unexplained reason.

Ohh well! Not sure I care at this point very much. I just suck at commercial promotional stuff and am admitting it.

-

On 3/5/2026 at 4:08 PM, Neil Pate said:

You resurrecting LuaVIEW?

It's still maintained and sold, although not actively marketed.

-

Not starting but want to try to do a cRIO variant of a few things such as the OpenG ZIP library, Lua for LabVIEW, and especially my OPC UA Toolkit.

-

19 minutes ago, jmog said:

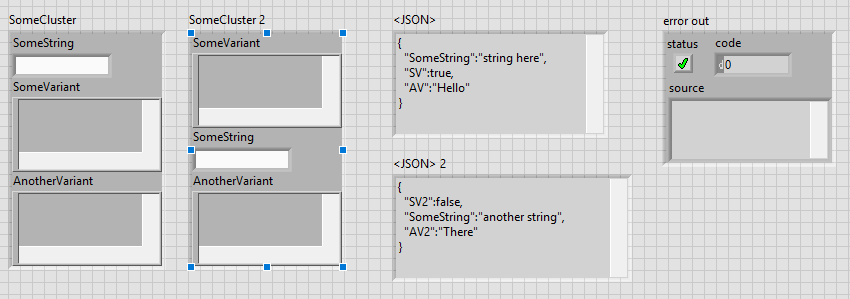

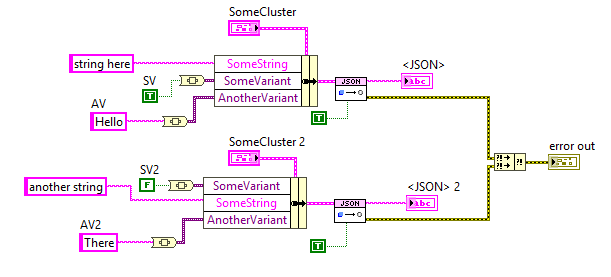

Can't find a way to edit my previous post, so continuing here. After posting, I found the related discussion on page 7 of this thread. So apparently the variant names that get used in the resulting JSON string are not the name fields of the cluster, but rather the names of the pieces of data inside the variant. This feels very counterintuitive to me, but in case someone is running into the same issue, at least one solution is to name the data used inside the variant (see image below).

Would it be possible to use the names of the fields in the cluster instead of the names inside the variant? That would feel more intuitive to me and apparently several others as well.

Nothing is impossible, but it is often so painful that not doing it is the better option in almost every case.

The label of the data in the variant is the label of the data element that was used to create the variant. The variant has also a label but that is one level higher. You could of course modify JSON to Text, but that is a lot of work. And I think it is up to James if he feels like this would be a justified effort. Personally I absolutely would understand if he feels like "Why bother".

-

5 hours ago, Vandy_Gan said:

Francois,

Indeed, as you mentioned, I came across a Chinese video while surfing the internet earlier. It demonstrated examples of generating buttons in both development environment and runtime engine. The video link is provided below. I'm not sure if you can see it

I can't understand Chinese and the video only shows some highlights, not how it works. My assumption is that they might use Windows Controls. It's a possibility, but the effort needed to create a toolkit like that for use in LabVIEW is ENORMOUS. If it exists and you really absolutely want to do this at any price, buy the toolkit no matter how expensive. Trying to do that yourself is an infinite project! Believe me!

-

6 hours ago, Adnene007 said:

Hi,

My project is to translate an application into multiple languages,

However, I am facing an issue with the display of the TabControl and the TreeMenu. When I translate them into French, I have spacing problems, as you can see in the picture.

I am using:

LabVIEW 2024

Input file format: .json

Encoding/Conversion: Unicode

Thanks for help,

A bit confusing. You talk about French but show an English front panel. But what you see is a typical Unicode string displayed as ASCII. On Windows, Unicode is so-called UTF-16LE. For all of the 7-bit ASCII characters this means that each character results in two bytes, with the first byte being the ASCII code and the second byte being 0x00. LabVIEW displays a space for non-printable characters, and 0x00 is just another non-printable character there, unlike in C where it is the end-of-string indicator.
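The effect is easy to reproduce outside of LabVIEW. The following is a minimal Python sketch, assuming a display that renders every byte as one character and shows a blank for anything non-printable; the helper name `show_bytes_as_ascii` is made up for this illustration and has nothing to do with LabVIEW's actual rendering code.

```python
def show_bytes_as_ascii(text: str) -> str:
    """Encode text as UTF-16LE, then render every byte as if it were one 8-bit character."""
    raw = text.encode("utf-16-le")
    # Printable ASCII bytes are shown as-is, everything else (including 0x00) as a blank.
    return "".join(chr(b) if 0x20 <= b < 0x7F else " " for b in raw)


label = "Page 1"
print(label.encode("utf-16-le"))         # every ASCII character is followed by a 0x00 byte
print(repr(show_bytes_as_ascii(label)))  # the same bytes with a blank after each character
```

The extra blank after every character is exactly the kind of spacing that shows up in a control that is not Unicode enabled.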

So you will have to make sure the control is Unicode enabled. Now Unicode support in LabVIEW is still experimental (present but not a released feature) and there are many areas where it doesn't work as desired. You do need to add a special keyword to the INI file to enable it and in many cases also enable a special (normally non-visible) property on the control. It may be that the Tab labels need to have this property enabled separately or that Unicode support for this part of the UI is one of the areas that is not fully implemented.

Use of Unicode UI in LabVIEW is still an unreleased feature. It may or may not work, depending on your specific circumstances and while NI is currently actively working on making this a full feature, they have not made any promises when it will be ready for the masses.

-

On 2/27/2026 at 4:06 AM, Vandy_Gan said:

Francois,

Is it possible to generate an application that runs in a runtime environment only?

First, attaching your question to random (multiple) threads is not a very efficient way of seeking help.

I'm not sure I understand your question correctly. But there is the Application Builder, which is included in the Professional Development License of LabVIEW, that lets you create an executable. It still requires the LabVIEW Runtime Engine to be installed on computers to be able to run it, but you can also create an Installer with the Application Builder that includes all the necessary installation components in addition to your own executable file.

-

On 2/24/2026 at 2:31 PM, Neon_Light said:

Hello Rolf,

thank you for the help. I did download the source files from HDF5 and HDF5Labview. You are right, it can take some time to get it working. For that reason I'll start with making a datastream from the cRIO to the Windows GUI PC and use that for now. At least I'll be able to reduce some risk.

Meanwhile I'll try to get the cross compiler working. I should at least be able to get a "hello world" running on the cRIO and take some steps if I can find some time. I did find the following site:

https://nilrt-docs.ni.com/cross_compile/cross_compile_index.html

Do you think this site is still up to date or is it a good point to start? I am only targeting a Ni-9056 for now.

I haven't recently tried to use that information but will have to soon for a few projects. From a quick cursory glance it would seem still relevant. There is of course the issue of computer technology in general and Linux especially being a continuous moving target, so I would guess there might be slight variations nowadays to what was the latest knowledge when that document was written, but in general it seems still accurate.

-

4 hours ago, ManuBzh said:

I would find it useful (maybe it already exists) to choose the growing direction of an array when editing. It used to be from left to right and/or top to bottom, but other directions could be useful.

Yes, I can do it programmatically, but it's cumbersome and it's a useless calculation since it could be done directly by the graphical engine.

Possible (and I forgot about it) or already asked on the wishlist? (I'm quite sure it's one of the two!)

Emmanuel

I can't really remember ever having seen such a request. And to be honest never felt the need for it.

Thinking about it, it makes some sense to support it and it would probably not be that much work for the LabVIEW programmers, but development priorities are always a bitch. I can think of several dozen other things that I would rather like to have and that have been pushed down the priority list by NI for many years.

The best chance to have something like this ever considered is to add it to the LabVIEW Idea Exchange https://forums.ni.com/t5/LabVIEW-Idea-Exchange/idb-p/labviewideas. That is unless you know one of the LabVIEW developers personally and have some leverage to pressure them into doing it. 😁

-

3 hours ago, ManuBzh said:

For sure, this must have been debated here over and over... but : what are the reasons why X-Controls are banned ?

It is because :

- it's bugged,

- people do not understand their purpose, or philosophy, and code them incorrectly, leading to problems.

Absolutely echo what Shaun says. Nobody banned them. But most who tried to use them have, after a more or less short time, run from them with many hairs ripped out of their heads, a few nervous tics from too much caffeine consumption, and swearing never to try them again.

The idea is not really bad and if you are willing to suffer through it you can make pretty impressive things with them, but the execution of that idea is anything but ideal and feels in many places like a half thought out idea that was eventually abandoned when it was kind of working but before it was a really easily usable feature.

-

It's a little more complicated than that. You do not just need a single *.o file, but in fact an *.o file for every c(pp) source file in that library, which you then link into a *.so file (the Linux equivalent of a Windows DLL). Also there are two different cRIO families: the 906x, which runs Linux compiled for an ARM CPU, and the 903x, 904x, 905x and 908x, which all run Linux compiled for a 64-bit Intel x86 CPU. Your *.so needs to be compiled for one of these two, depending on the cRIO chassis you want to run it on. Then you need to install that *.so file onto the cRIO.

In addition you would have to review all the VIs to make sure that they still match the functions as exported by this *.so file. I haven't checked the h5F library, but there is always a chance that the library differs between platforms because of the features that each platform provides.

The thread you mentioned already showed that alignment was obviously a problem. But if you haven't done any C programming it is not very likely that you get this working in a reasonable time.

-

Many functions in LabVIEW that are related to editing VIs are restricted to only run in the editor runtime. That generally includes almost all VI Server functions that modify LabVIEW objects, except for UI properties (so editing the boolean text of your UI control is safe), but saving such a control is not supported.

And all the brown nodes are private anyway. This means they may work, or not, or stop working, or get removed in a future version of LabVIEW at NI's sole discretion. Use of them is fun in your trials and exercises, but a no-go in any end user application unless you want to risk breaking your app for several possible reasons, such as building an executable of it, upgrading the LabVIEW version, or simply bad luck.

-

On 2/4/2026 at 5:26 PM, JBC said:

@Bryan Thanks for the info, I tried multiple methods to get CE going again but none worked. This is CE on a Mac (my personal computer) which as far as I can tell is no longer supported. It sucks they force you to update the CE version and will not let you simply use the CE version you have. I have a work Windows PC that I have a LV2021 perpetual license for, though I have kept CE off that computer since that one is my commercial use dev machine and it works. I don't want NI to update packages (during CE install) on it that break my 2021 dev environment.

Actually LabVIEW for Mac OS is again an official thing since 2025 Q3. It is Community Edition only, you can't really buy it as a Professional version, but it's officially downloadable and supported. 2024 Q3 and 2025 Q1 were only a semi-official thing that you had to download from the makerhub website.

-

On 1/27/2026 at 12:56 AM, Mefistotelis said:

This is clearly the assert just after `DSNewPClr()` call.

Anyone knows what packer tool was used to decrease size of LabVIEW-3.x Windows binaries? It is clear the file is packed, but I'm not motivated enough to identify what was used. UPX had a header at start and it's not there, so probably something else.

Anyway, without unpacked binary, I can't say more from that old version.

Though the version 6.0, although compiled in C++, seem to have this code very similar, and that I can analyze:

    if (gTotalUnitBases != 9)
        DBAssert("units.cpp", 416, 0, rcsid_234);
    cvt_size = AlignDatum(gTDTable[10], 4 * gTotalUnitBases + 18);
    gCvtBuf = DSNewPClr(cvt_size * (gTotalUnits - gTotalUnitBases + 1));
    if (gCvtBuf == NULL)
        DBAssert("units.cpp", 421, 0, rcsid_234);

The 2nd assert seems to correspond to yours, even though it's now in line 421. It's clearly a "failed to allocate memory" error.

It could also be the assert a few lines above or somewhere around there. Hard to say for sure.

gTotalUnitBases being not 9 is a possibility but probably not very likely. I suppose that is somewhere initialized based on some external resource file, so file IO errors might be a possibility to cause this value to not be 9.

The other option might be if cvt_size ends up evaluating to 0. DSNewPClr() is basically a wrapper with some extra LabVIEW specific magic around malloc/calloc.

C standard specifies for malloc/calloc: "If size is zero, the behavior of malloc is implementation-defined" meaning it can return a NULL pointer or another canonical value that is not NULL but still can not be dereferenced without causing an access error or similar. I believe most C compilers will return NULL. The code definitely assumes that even though the C standard does not guarantee that.

AlignDatum() calculates the next valid offset with adjusting for possible padding based on the platform and the typedef descriptor in its first parameter. Difficult to see how that could get 0 here, as the AlignDatum() should always return a value at least as high as the second parameter and possibly higher depending on platform specific alignment issues.
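To illustrate why such a function should never return less than its input, here is a generic round-up-to-alignment sketch in Python. It is not LabVIEW's actual `AlignDatum()`, whose internals are not shown here; `align_up` and the list of alignments are assumptions for illustration only. The input value is the `4 * gTotalUnitBases + 18` expression from the quoted snippet with `gTotalUnitBases` equal to 9.

```python
def align_up(offset: int, alignment: int) -> int:
    """Round offset up to the next multiple of alignment (which must be a power of two)."""
    if alignment <= 0 or alignment & (alignment - 1):
        raise ValueError("alignment must be a positive power of two")
    return (offset + alignment - 1) & ~(alignment - 1)


total_unit_bases = 9
requested = 4 * total_unit_bases + 18  # second argument in the quoted snippet
for alignment in (1, 2, 4, 8):
    # The aligned size is never smaller than the requested size.
    print(alignment, align_up(requested, alignment))
```

With any scheme of this kind a result of 0 is only possible for an input of 0, which is why a zero `cvt_size` would point to something more fundamental going wrong.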

This crash for sure shows that some of the API functions of Mini vMac are not quite 100% compatible with the original API functions. But where oh where could that be? This happening in InitApp() does however not spell good things for the future. This is basically one of the first functions called when LabVIEW starts up, and if things go belly up already here, there are most likely a few zillion other things that will sooner rather than later go bad too, even if you manage to fix this particular issue by patching the Mini vMac emulator.

Maybe it is some endianness error. LabVIEW 3 for Mac was running on the 68000 CPU, which is big-endian. Not sure what the 3DS is running as CPU (it seems to be a dual or quad core ARM11 MPCore), but the emulator may get some endianness emulation wrong somewhere. It's very tricky to get that 100% right, and LabVIEW internally does a lot of endianness specific things.
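As a small illustration of what goes wrong when a byte swap is missed, the following Python sketch stores a 32-bit value in big-endian order and then reads it back as little-endian. The value is arbitrary, and the assumption that the host side runs little-endian (as ARM cores typically do) is mine, not something verified for this emulator.

```python
import struct

value = 0x12345678
big = struct.pack(">I", value)     # byte order a 68000 program expects in memory
little = struct.pack("<I", value)  # byte order of a typical little-endian host

# Reading big-endian bytes without swapping yields a completely different number.
misread = struct.unpack("<I", big)[0]
print(big.hex(), little.hex(), hex(misread))
```

A single access path in the emulator that forgets the swap is enough to turn a sane size or count into garbage like this.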

-

-

2 hours ago, hooovahh said:

What did have a measurable improvement is calling the OpenG Deflate function in parallel. Is that compress call thread safe? Can the VI just be set to reentrant? If so I do highly suggest making that change to the VI. I saw you are supporting back to LabVIEW 8.6 and I'm unsure what options it had. I suspect it does not have Separate Compile code back then.

Reentrant execution may be a safe option. Have to check the function. The zlib library is generally written in a way that should be multithreading safe. Of course that does NOT apply to accessing for instance the same ZIP or UNZIP stream with two different function calls at the same time. The underlying streams (mapping to the according refnums in the VI library) are not protected with mutexes or anything. That's an extra overhead that costs time even when it would not be necessary. But for the Inflate and Deflate functions it would almost certainly be safe to do.
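The same idea can be tried outside of LabVIEW with Python's `zlib` module, which wraps the same zlib library. This is only a sketch of compressing independent buffers in parallel, where every call works on its own private stream; the buffer contents and the worker count are arbitrary assumptions.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor


def deflate_all(buffers):
    """Compress independent buffers concurrently; no stream state is shared between calls."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        # map() returns the results in the same order as the inputs.
        return list(pool.map(zlib.compress, buffers))


buffers = [bytes([i]) * 100_000 for i in range(8)]
compressed = deflate_all(buffers)
restored = [zlib.decompress(c) for c in compressed]
print(restored == buffers)
```

What this does not show is two threads feeding the same stream object, which is exactly the case that is not protected.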

I'm not a fan of making libraries all over reentrant since in older versions they were not debuggable at all and there are still limitations even now. Also reentrant execution is NOT a panacea that solves everything. It can speed up certain operations if used properly but it comes with significant overhead for memory and extra management work so in many cases it improves nothing but can have even negative effects. Because of that I never enable reentrant execution in VIs by default, only after I'm positively convinced that it improves things.

For the other ZLIB functions operating on refnums I will for sure not enable it. It should work fine if you make sure that a refnum is never accessed from two different places at the same time but that is active user restraint that they must do. Simply leaving the functions non-reentrant is the only safe option without having to write a 50 page document explaining what you should never do, and which 99% of the users never will read anyways. 😁

And yes, LabVIEW 8.6 has no Separate Compiled Code. Neither does 2009.

-

2 hours ago, hooovahh said:

Edit: Oh if I reduce the timestamp constant down to a floating double, the size goes down to 2.5MB. I may need to look into the difference in precision and what is lost with that reduction.

A Timestamp is a 128 bit fixed point number. It consists of a 64-bit signed integer representing the seconds since January 1, 1904 GMT and a 64-bit unsigned integer representing the fractional seconds.

As such it has a range of something like +-3*10^11 years relative to 1904. That's about +-300 billion years, about 20 times the lifetime of our universe and long after our universe will have either died or collapsed. And the resolution is about 1/(2*10^19) seconds, that's a fraction of an attosecond. However LabVIEW only uses the most significant 32 bits of the fractional part, so it is "only" able to have a theoretical resolution of some 1/(4*10^9) seconds or about 230 picoseconds. Practically the Windows clock has a theoretical resolution of 100 ns. That doesn't mean that you get values that increment in steps of 100 ns, however. It's how the timebase is calculated, but there can be bigger increments than 100 ns between two subsequent readings (or no increment at all).

A double floating point number has an 11-bit exponent and 52 fractional bits. This means it can represent about 2^53 seconds, or some 285 million years, before its resolution gets coarser than one second. Scale down accordingly to 285 000 years for 1 ms resolution and still 285 years for 1 µs resolution.
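These numbers can be checked with a few lines of Python. This is just back-of-the-envelope arithmetic, assuming a year of 365.25 days and a present-day value of roughly 3.85*10^9 seconds since 1904; both figures are my assumptions for the illustration.

```python
import math

SECONDS_PER_YEAR = 365.25 * 86400

# 128-bit timestamp: signed 64-bit seconds, unsigned 64-bit fraction
timestamp_range_years = 2**63 / SECONDS_PER_YEAR
full_fraction_resolution = 2.0**-64   # all 64 fractional bits
used_fraction_resolution = 2.0**-32   # only the upper 32 fractional bits

# Double: 53 significant bits (52 stored fractional bits plus the implicit one)
double_whole_second_years = 2**53 / SECONDS_PER_YEAR

# Spacing between adjacent doubles at a present-day seconds-since-1904 value
seconds_since_1904 = 3.85e9
double_step_now = math.ulp(seconds_since_1904)

print(f"timestamp range:        +-{timestamp_range_years:.3g} years")
print(f"64-bit fraction step:   {full_fraction_resolution:.3g} s")
print(f"32-bit fraction step:   {used_fraction_resolution:.3g} s")
print(f"double, 1 s resolution: {double_whole_second_years:.3g} years")
print(f"double step today:      {double_step_now:.3g} s")
```

For a present-day time the double advances in steps of 2^-21 seconds, a bit under half a microsecond. That is what gets lost when converting a timestamp to a double, and it is coarser than the 100 ns timebase but irrelevant for most logging purposes.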

-

Well, I referred to the VI names really. ZLIB Deflate calls the compress function, which then internally calls the deflate_init, deflate and deflate_end functions, and ZLIB Inflate calls the decompress function, which accordingly calls inflate_init, inflate and inflate_end. The init, add, end functions are only useful if you want to process a single stream in chunks. It's still only one stream, but instead of entering the whole compressed or uncompressed stream at once, you initialize a compression or decompression reference, then add the input stream in smaller chunks and every time get the according part of the output stream. This is useful to process large streams in smaller chunks to save memory at the cost of some processing speed. A stream is simply a bunch of bytes. There is no inherent structure in it, you would have to add that yourself by partitioning the chunks accordingly.
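The init, add, end pattern can be sketched with Python's `zlib` module, which exposes the same zlib streaming interface through `compressobj()` and `decompressobj()`. This is not the OpenG VI code, just an illustration of the principle; the function names and the chunk size are arbitrary assumptions.

```python
import zlib


def deflate_in_chunks(data: bytes, chunk_size: int = 4096) -> bytes:
    """Compress one stream piece by piece: init, add ..., end."""
    comp = zlib.compressobj()                 # init
    out = []
    for start in range(0, len(data), chunk_size):
        out.append(comp.compress(data[start:start + chunk_size]))  # add
    out.append(comp.flush())                  # end: emit whatever is still buffered
    return b"".join(out)


def inflate_in_chunks(data: bytes, chunk_size: int = 4096) -> bytes:
    """Decompress one stream piece by piece."""
    decomp = zlib.decompressobj()             # init
    out = []
    for start in range(0, len(data), chunk_size):
        out.append(decomp.decompress(data[start:start + chunk_size]))  # add
    out.append(decomp.flush())                # end
    return b"".join(out)


payload = b"LabVIEW " * 10_000
packed = deflate_in_chunks(payload)
print(len(payload), len(packed), inflate_in_chunks(packed) == payload)
```

The chunked result is still one ordinary zlib stream, so it can also be decompressed in a single call. In a real application each output piece would be written to disk or the network right away instead of being collected in a list, which is where the memory saving comes from.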

-

Actor framework: User Event Error 1

in LabVIEW General

Posted

It is not that LabVIEW MAY unregister the reference, but that it WILL unregister the reference as soon as the top level VI in whose hierarchy the reference was created goes idle. This is by design. The only way to prevent it is to either keep that hierarchy active until every other user of that refnum has finished, or to delegate the creation of the refnum to the place where it is needed, for instance through an LV2 style global that maintains the reference in a shift register and creates the refnum on its first call, when the shift register still contains an invalid refnum. True Actor Framework design kind of mandates that all refnums are created in the context where they are used, not in some other global instance that may or may not keep running for as long as some Actor is using the refnum.
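Since LabVIEW diagrams can't be pasted as text, here is a rough Python analogy of the create-on-first-use logic of such an LV2 style global. Everything in it is hypothetical: the class `EventRefGlobal`, the dictionary standing in for a refnum, and the validity check. It models only the "create if the stored reference is invalid" part, not LabVIEW's rule that a refnum dies with the hierarchy that created it.

```python
import threading


class EventRefGlobal:
    """Hypothetical stand-in for an LV2 style global holding a refnum in a shift register."""

    def __init__(self, create_ref, is_valid):
        self._create_ref = create_ref
        self._is_valid = is_valid
        self._ref = None                # the "shift register", initially invalid
        self._lock = threading.Lock()   # callers are serialized, like a non-reentrant VI

    def get(self):
        with self._lock:
            # Create the reference on the first call, or again if it became invalid.
            if self._ref is None or not self._is_valid(self._ref):
                self._ref = self._create_ref()
            return self._ref


created = []


def create_ref():
    ref = {"open": True}   # fake refnum for the illustration
    created.append(ref)
    return ref


holder = EventRefGlobal(create_ref, lambda ref: ref["open"])
first = holder.get()
second = holder.get()      # same reference, nothing new is created
first["open"] = False      # simulate the refnum having been invalidated
third = holder.get()       # the invalid reference is replaced on the next call
print(first is second, third is first, len(created))
```

The point of the pattern is that whoever needs the reference triggers its creation, so its lifetime is tied to an actual user rather than to some unrelated top level VI.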