Posts posted by BobHamburger

-

-

Panel is not resizing. Dev environment.

More interesting details: Vertical array of dbls. Working just on my laptop, not migrating anything anywhere. Open the app's GUI and highlight the text in the first element of the array, and then open a Font Style dialog with CTL-0. Make no changes, just close the box by hitting OK. The vertical size of the elements increases from 21 (the size I've previously edited it to be) to 22 pixels. Note that both the array, as well as the element which comprises it, have strict type defs, so I couldn't even make this change directly from the VI's front panel if I wanted to.

Now, open the typedefs and resize the indicator to 24 pixels, apply the changes, save the typedefs and close them. Go back to the GUI, open the Font Style dialog, make no changes, close the box by hitting OK: the vertical size of the elements decreases from 24 to 22 pixels.

It seems like anything that "disturbs" the GUI causes the numeric indicator to assume its default size for the selected font. Considering that the indicator in question is tied to a strict type def, I consider this behavior a bug: nothing except editing the type def should be able to change display properties of the object.

-

I am developing an app on my laptop, and deploying to a customer's machine. Every time I migrate from one system to another, various controls and indicators decide to change vertical size (# of pixels) and/or alignments. For example, I've got a name-value display which consists of a vertical array of strings next to an array of dbls, with the vertical scroll bar of the dbl array tied programmatically to a property node index input of the string array so that they scroll together. The nominal size of each of these indicators is 18 pixels, and the arrays are aligned at their top edges. Migrate from my laptop to the customer's machine, and the indicators in the dbl array become 19 pixels, the string remains 18 pixels, and the arrays no longer align on top.

FYI, I've set the fonts in both string and dbl to be fixed (Tahoma) rather than dependent on system settings. What am I doing wrong?

-

I know this is an older thread, but I wanted to follow-up on my earlier remarks as I believe my recent experience is relevant.

My local ASM was kind enough to find and send to me some training manuals this past fall. I spent the past two months thoroughly reviewing the materials at a leisurely pace, and I have to admit that the four classes (Core 3, Advanced Architectures, Project Management, and OO Programming) showed me quite a few interesting methods and approaches which I'm sure will prove useful at some point.

I took the exam today and scored a glorious 56.

My impression of many of the questions was that they were vague, poorly constructed, or ambiguous. Once I got past the confusing syntax, the majority of questions fell into four distinct categories:

1. Who cares?

2. Nobody uses this feature or does it this way.

3. This is not applicable to any real-life project issue.

4. (None of these choices) or (more than one of these choices) is the "best" way to do <whatever>.

To put this in perspective, I took the online example test two months ago (before reviewing any of the course materials), and scored a 52; today I got a 56. From this viewpoint, the training materials are completely irrelevant to the exam.

In my opinion, the majority of the questions on the exam were almost totally detached from any realistic issues or considerations that a LabVIEW professional actually faces. After the exam, I chatted with a manager at the local Platinum Alliance Partner, who told me that I'm in good company -- over the past 2 years, nobody there has passed the CLA-R the first time, and in fact some of their most experienced engineers required multiple attempts to pass.

I cannot fathom the process followed by the Customer Education and Certification folks who put this program together.

Anybody from NI out there listening?

-

-

I'm in the position that Jim was in six months ago: my CLA is about to expire and I need to take the CLA-R. I downloaded and took the practice exam and failed it by a couple of questions. Like Jim, I used to work for a local select integration partner (the same one, in fact) with which I have maintained a cordial business relationship, and I spoke with their training manager to discuss the best strategy to prepare for the CLA-R. She informed me she, as well as several other of their engineers, have recently failed the CLA-R. We're not talking about a bunch of slouches here; this is a group of some of the most talented and experienced LabVIEW developers that you'll find anywhere in the world. They are all certified NI instructors, also. The bottom line here is that the validity of this exam must come into question if there is this high a failure rate in a population of properly qualified test takers. People who could pass the 4-hour exam should be able to pass the 1-hour test without this kind of extraordinary attrition rate. Something is drastically wrong with the test design or the way the questions have been written.

What I'm hearing from this thread is that we got very close to the exam we wanted but not quite right. But we got closer than might be expected... it is, as you said, very hard to write a multiple choice exam that actually tests advanced architecture concepts.

Steve, from my perspective, what I'm seeing from this thread is that you didn't get quite as close as you needed to. Just my two cents' worth; YMMV.

-

Introduced in LabVIEW 7.0, the Subpanel is a container that is used to display the front panel of a subVI within the front panel of a Main VI, allowing users to interact with the subVI's front panel controls within the bounds of the main VI.

-

People are throwing the term "state machine" around in this thread (and in the general LV community at large) pretty cavalierly. When I took Digital Design as an undergraduate (granted, about a million years ago, or so it seems), we studied two kinds of state machines:

1. Moore: the outputs are uniquely determined by the state, and

2. Mealy: the outputs are determined by the transitions between states.

The other significant feature of a state machine is that, for every given state, the next state can be uniquely and deterministically quantified as a function of the current state and inputs. Each state selects its next state, but only its next state -- not the next 3 states, or 5 states, or whatever. However useful they may be -- and I'll admit to being a heavy user of the paradigm -- a QSM is not a state machine.
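To make the distinction concrete, here is a minimal Python sketch (not LabVIEW) of the two definitions above. The two-state latch, its "press"/"idle" inputs, and all of the names are hypothetical and chosen only for illustration. The point it tries to show is that the transition table maps each (state, input) pair to exactly one next state, while the Moore output is looked up by state and the Mealy output is looked up by transition.

```python
from typing import Dict, List, Tuple

# Hypothetical example: a latch toggled by a "press" input.
# Each (current state, input) pair maps to exactly one next state.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("off", "press"): "on",
    ("off", "idle"): "off",
    ("on", "press"): "off",
    ("on", "idle"): "on",
}

# Moore: the output is determined by the state alone.
MOORE_OUTPUT: Dict[str, int] = {"off": 0, "on": 1}

# Mealy: the output is attached to the transition (state, input).
MEALY_OUTPUT: Dict[Tuple[str, str], str] = {
    ("off", "press"): "turned on",
    ("off", "idle"): "no change",
    ("on", "press"): "turned off",
    ("on", "idle"): "no change",
}


def step(state: str, event: str) -> str:
    """Return the single next state for this state and input."""
    return TRANSITIONS[(state, event)]


def run_moore(start: str, events: List[str]) -> List[int]:
    """Collect the output of each state reached after a transition."""
    state, outputs = start, []
    for event in events:
        state = step(state, event)
        outputs.append(MOORE_OUTPUT[state])
    return outputs


def run_mealy(start: str, events: List[str]) -> List[str]:
    """Collect the output attached to each transition taken."""
    state, outputs = start, []
    for event in events:
        outputs.append(MEALY_OUTPUT[(state, event)])
        state = step(state, event)
    return outputs
```

Note that `step` returns one state, never a list of pending states; a queue of future states would need a different data structure altogether.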

1. Entry Actions -- These are actions that are performed exactly once every time this state is entered from another state.

2. Execution Actions -- These are actions that are performed continuously while the SM remains in the current state.

3. Exit Actions -- These are actions that are performed exactly once just prior to this state exiting.

4. Transition Actions -- These are actions that are performed exactly once when this state exits and are unique for every 'current state-next state' combination. When looking at a state diagram, these actions are associated with the arrows.

Very interesting and very general purpose approach, a hybrid between Moore and Mealy, but personally I think I'd find it unnecessarily complex and confusing to maintain.
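For anyone trying to picture the bookkeeping involved, the four action types can be sketched roughly as follows in Python. This is only one possible reading of the scheme, not the quoted poster's implementation: the class name, the "idle"/"running" states, and the choice to record each action as a log string rather than run real code are all assumptions made for illustration.

```python
from typing import Callable, List


class ActionStateMachine:
    """Hypothetical sketch: logs entry, execution, exit, and transition actions."""

    def __init__(self, start: str, next_state: Callable[[str, str], str]) -> None:
        self.state = start
        self.next_state = next_state  # function (state, event) -> next state
        self.log: List[str] = []
        self._entered = False  # has the entry action run for the current state?

    def step(self, event: str) -> None:
        # Entry action: exactly once each time a state is entered.
        if not self._entered:
            self.log.append(f"entry:{self.state}")
            self._entered = True
        # Execution action: every pass while we remain in this state.
        self.log.append(f"execute:{self.state}")
        target = self.next_state(self.state, event)
        if target != self.state:
            # Exit action: exactly once, just before leaving.
            self.log.append(f"exit:{self.state}")
            # Transition action: unique to this (current, next) pair.
            self.log.append(f"transition:{self.state}->{target}")
            self.state = target
            self._entered = False


def demo_next_state(state: str, event: str) -> str:
    """Hypothetical transition rule for a two-state example."""
    if state == "idle" and event == "start":
        return "running"
    if state == "running" and event == "stop":
        return "idle"
    return state


machine = ActionStateMachine("idle", demo_next_state)
for ev in ["tick", "start", "tick", "stop"]:
    machine.step(ev)
```

Even in this toy version there are four places per state where behavior can hide, which is roughly the maintenance concern raised above.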

Oh, and one more thing... FSM has another important meaning to me beyond computer science.

-

Well, it's a month later, and I'm a dedicated convert to virtual machines. Thanks, Omar, for the suggestion. However, I think I'm about to run into another bomb. My various VM's, each with their own clone of Windows 7, are requesting activation and threatening to not work in two days if I don't. From everything I've Googled online, there seems to be no way around this other than purchasing additional Windows keys, which is ridiculous. Anybody out there know of a way to get around the MS Licensing Nazis?

-

....

Suggestions?

Well, I found a place to download a Win7 .iso file. Seems to work... I've created VM's for LV8, 2009, and 2010; I'm installing 2010 and its myriad toolkits right now. I'll update this thread as soon as I know how well it all works.

-

I'm taking Omar's suggestion and installing VMWare Workstation; I've downloaded it and am trying it out for 30 days before purchase.

I've installed it and created a virtual machine without any difficulty. Now it wants me to supply it with the Win7 installation discs so that it can install the OS in the VM. Of course, my laptop didn't come with media (how many do these days?) It came from Dell with Win7 Home Premium installed, which I upgraded to Professional. Is there any direct way for me to generate an installation image from what I've got? I've poked around with VMWare and can't find any other way forward.

Suggestions?

-

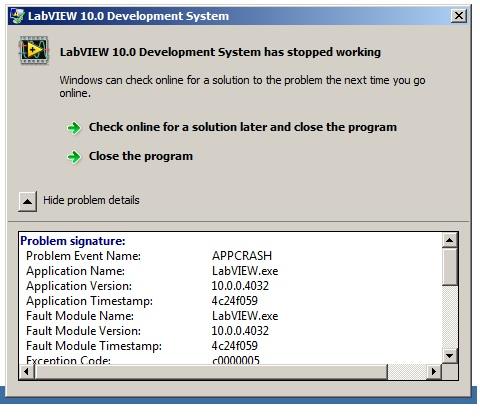

I've been using LV2010 for the past two months on my relatively new laptop (Dell Inspiron w/ Core i5, Windows 7) without any problems. Then, this evening, out of the blue, LV won't start; I get a dialog box saying "LabVIEW Development System Has Stopped Working". See attached screenshot.

I had the exact same thing happen last week with my installed copy of LV2009. I have customers using various versions of LV, so I have multiple versions installed. The only way that I was able to get 2009 up and running again was to uninstall and reinstall it; trying to do a repair did not help. This is very time consuming, considering that I have Vision, RT, and FPGA installed.

Anybody else seen this? Is there a quick fix, or do I need to take the long way home?

Edit: BTW I just tried it again (for like the 4th or 5th time) and this time it started. I'm in a hotel room, 4 hours from home, for onsite service at a customer location. I'm probably just going to keep my laptop on overnight with LV running until I'm back in the plant tomorrow morning.

-

What ever happened to the revision/update to the book that you and Mike were working on?

-

Note that this is a cross-post from the NI Discussion Forums...

I have a cRIO system which consists of a 9024 RT controller in a 4-slot chassis, and a separate, remote 9144 EtherCAT 8-slot chassis. The 9144 has the usual assortment of AI, AO, etc. modules, and it also includes a 9853 2-port CAN module. When I try to use the "Add Targets and Devices on FPGA Target", the 9853 shows up as a NI 37670, with the comment "This C Series module is not supported by the current versions of LabVIEW and NI-RIO." For reference, I'm using LV2009 SP1, and NI-RIO 3.4. Even more confusing: if I move the 9853 from the 9144 to the 4-slot (non EtherCAT) chassis, and try to add it to that chassis' FPGA target, it works without any problem. Is there some issue with CAN through EtherCAT? This thread seems to imply that it can be done, although that poster had different issues.

Any suggestions as to what I or NI might be doing wrong?

-

One of the many cool things which I love about LabVIEW is the ability of most of its primitives to be polymorphic. Similar to the general meaning in Computer Science, polymorphism is a programming language feature that allows values of different data types to be handled using a uniform interface. For example, the comparison palette is almost completely generic; you can use the same equality or inequality primitives for integers, floats, strings, enums, or arbitrarily complex compound structures (e.g. clusters) comprising all of the aforementioned. Particularly handy is LabVIEW's capacity to be polymorphic with arrays, which in many cases eliminates the need for looping. However, with this convenience comes behavior which may or may not suit your needs.
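As a rough text-language analogy (Python, not LabVIEW), the kind of behavior that can surprise you looks something like this. It assumes two things that the paragraph above does not spell out: that array arithmetic on unequal-length arrays yields a result as long as the shorter input, and that comparisons can work either element by element or on the arrays as a whole. The function names are made up for the sketch.

```python
from typing import List, Sequence


def compare_elements(a: Sequence[float], b: Sequence[float]) -> List[bool]:
    """Element-by-element equality; zip stops at the shorter input."""
    return [x == y for x, y in zip(a, b)]


def compare_aggregates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Whole-array equality: a single Boolean for the pair of arrays."""
    return list(a) == list(b)


def add_arrays(a: Sequence[float], b: Sequence[float]) -> List[float]:
    """Loop-free-looking addition; extra elements of the longer array are dropped."""
    return [x + y for x, y in zip(a, b)]


print(compare_elements([1, 2, 3], [1, 5, 3]))    # [True, False, True]
print(compare_aggregates([1, 2, 3], [1, 5, 3]))  # False
print(add_arrays([1, 2, 3], [10, 20]))           # [11, 22]
```

The last line is the sort of convenience that may or may not suit you: no error is raised, and the third element disappears without comment.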

-

Try here

Thanks.

-

QUOTE (EHM @ Apr 3 2009, 02:57 AM)

-

Check out Ohio Semitronics. They make a huge range of power metering sensors, transmitters, and related instrumentation.

-

-

-

I've used VA's for the same thing -- a name/value pair lookup table -- and have similarly noticed a huge efficiency gain vs. using the Search 1D Array. Our clever friends in Austin must've implemented something cool inside the variant attribute code. Kinda makes you wonder why they didn't do the same trick with the Search 1D Array primitive.
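I don't know what the variant attribute code does internally, so take this only as an illustration of why a keyed container tends to beat a linear search for name/value lookups. It is a Python sketch with hypothetical names: `linear_lookup` stands in for the Search 1D Array approach, and a plain `dict` stands in for the keyed table.

```python
from typing import Dict, List, Optional, Tuple

Pair = Tuple[str, float]


def linear_lookup(pairs: List[Pair], name: str) -> Optional[float]:
    """Scan every entry until the name matches: cost grows with table size."""
    for key, value in pairs:
        if key == name:
            return value
    return None


def build_table(pairs: List[Pair]) -> Dict[str, float]:
    """Build a keyed table once; each later lookup avoids scanning the list."""
    return dict(pairs)


# Hypothetical name/value data for the example.
pairs = [(f"tag{i}", float(i)) for i in range(10_000)]
table = build_table(pairs)

print(linear_lookup(pairs, "tag9999"))  # 9999.0, after walking the whole list
print(table.get("tag9999"))             # 9999.0, found by key
print(table.get("missing"))             # None
```

The one-time cost of building the table pays off as soon as the same table is searched repeatedly, which is the usual situation for a tag lookup.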

-

Within the context of a large test application, I recently wrote a set of utilities for defining CAN tags using the Channel API. In a nutshell, it allows for CAN channels and the associated messages to be defined from a configuration spreadsheet without the use of MAX. It handles scaling, limits, encoding, all the bits and bytes stuff, and is very easy and intuitive to use. It's all very cool, and most of it functioned perfectly right from the start without any hassles at all. I had successfully defined several little-endian, unsigned channels, of various widths, and everything worked great.

Then I tried to define a channel to read a single-precision floating point value using big-endian (Motorola) format. LabVIEW kept throwing cryptic "an input parameter is invalid (but I won't tell you which one)" errors that didn't seem to make any sense at all. I chatted about the situation with friends and colleagues at Bloomy Controls and VI Engineering, and no one seemed to have a clue why I was encountering difficulty. We were collectively beginning to think that the CAN Channel API somehow couldn't deal with a Float32. We needed to read a channel that started at bit 8 and was 32 bits in length, in big endian format, and format that into a float, and LabVIEW simply wouldn't accommodate us.

Since I knew that I could successfully read U16's, I formulated a fallback position that I would read the requisite 32 bits as two channels, and reconstruct the Float32 programmatically. Ugly, but workable. While I coded that, one of my co-workers at UTC Power hooked up a CANalyzer to watch the bus traffic. The CANalyzer showed the bytes coming through the way they were expected to, but my two U16's showed bytes shifted in strange ways, crossing word boundaries, and things didn't make sense. And then there was a flash of insight provided by the CANalyzer: when you changed the channel configuration from Intel to Motorola and back again, it automatically changed the location of the start bit. A 32-bit channel that starts at bit 8 for Intel (little endian) format becomes a 32-bit channel that starts at bit 32 for Motorola. I edited my channel configuration spreadsheet accordingly, and suddenly, magically, LabVIEW knew what to do. No channel configuration errors, and the data came through correctly.

WTF?

We scratched our heads for a minute, and the lights came on in our collective brains. The start of a CAN channel appeared to be specified by the least-significant bit of the least-significant byte. For a little-endian message beginning at bit 8 and spanning 32 bits, it's WYSIWYG. But a big-endian Float32 has its words, and the bytes within those words, swapped. So for a 32-bit message that resides from bit 8 to bit 40, the message "starts" at bit 32. It all makes perfect sense, in a totally frustrating and absolutely retarded way.
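The rule we inferred can be written down in a few lines. This is a Python sketch of the arithmetic only, not of the NI-CAN Channel API or of CANalyzer; the function names are hypothetical, bits are assumed to be numbered as byte index times 8 plus bit position (with bit 0 the least-significant bit of byte 0), and only byte-aligned signals are handled.

```python
import struct


def lsb_start_bit(first_byte: int, num_bytes: int, big_endian: bool) -> int:
    """Start bit = least-significant bit of the least-significant byte.

    Assumes bit number = byte index * 8 + bit position, byte-aligned signals only.
    """
    # Little-endian (Intel): the least-significant byte is the first byte.
    # Big-endian (Motorola): the least-significant byte is the last byte.
    ls_byte = first_byte + num_bytes - 1 if big_endian else first_byte
    return ls_byte * 8


def decode_float32(payload: bytes, first_byte: int, big_endian: bool) -> float:
    """Read a 4-byte IEEE 754 single from the payload at the given byte offset."""
    fmt = ">f" if big_endian else "<f"
    return struct.unpack_from(fmt, payload, first_byte)[0]


# An 8-byte frame with a big-endian Float32 occupying bytes 1 through 4.
payload = bytes([0x00]) + struct.pack(">f", 1.5) + bytes(3)

print(lsb_start_bit(1, 4, big_endian=False))          # 8
print(lsb_start_bit(1, 4, big_endian=True))           # 32
print(decode_float32(payload, 1, big_endian=True))    # 1.5
```

The same four bytes are being read in both cases; only the number you have to type into the start-bit field changes with the byte order.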

There are two really amazing things about this story. First, this bizarre idiosyncrasy was consistent across two completely separate and apparently unrelated software platforms: the LabVIEW CAN API, and a commercial CAN diagnostic tool. In other words, this isn't a bug, it's a feature, based upon a standard. Second, neither environment contained any hint of this requirement in its documentation.

The moral of the story: if you're using CAN and dealing with Floats and big-endians, watch out. Reality is not what it may seem to be.

You're welcome

-

-

-

Good stuff mate - glad to hear it. Now if only I could get on a program like that - I could do with the exercise motivation

Hopefully, you can find a more cheerful motivation to start doing it. In my case, the very real threat of suffering a lingering cardiovascular disability, or, on the other hand, sudden cardiac arrest, act as strong incentives for me to get my arse off the couch.

-

Hey Bob - how are you doing mate? I hope you're still doing okay...

Thanks for the kind thoughts, Chris.

Still here and kicking. Feeling fine most days, at least as fine as my advancing years allow me to

My cardiologist prescribed a 12-week cardiac rehab exercise program for me, which is 100% covered by my health insurance (the topic of many other lively threads...) I go three times a week for supervised aerobics while wearing a wireless EKG monitor. At this point, each 45 minute session includes walking 1.5 miles on the treadmill at a 4% grade, followed by 2.5 miles on a stationary bike. Not bad for a middle-aged geezer with a bad ticker.

-

I am glad you did not. your willingness to act a sounding borad is greatly appreciated.

Ben

What was that? A sounding Borat?

-

-

-

NI's exams are based on their training materials. If you've taken the classes (and really understand the concepts involved), then your best bet is to go back and thoroughly review the manuals. Your ability to accurately and thoroughly regurgitate the training concepts will facilitate your passing the exams.

The majority of the most useful and practical stuff that I've learned over the years has come from the examples that ship with LabVIEW. Mind you, most of this won't help you with the CLD exam, but it can greatly expand your knowledge of various techniques and approaches.

-

Great reply, Dak. Well thought-out, articulate, detailed, and respectful; just the kind of debate that I value on LAVA. Nonetheless, I still think we need to wean ourselves off of the oil tittie and move on to something more sustainable. Just my $0.02 worth.

Woo hoo! I just hit my 100th post! At this rate, it'll only take me about 120 years to catch up to crelf

-

-

-

Ice core data, cyclical temperature changes, who said what and when, graphs, politics... none of this is really relevant.

To me, the central issue that needs to be addressed is from where we get our energy. Right now, the bulk of the world's petroleum reserves -- the energy source we find easiest to obtain and use -- are under countries with unstable political regimes and (to Westerners) undesirable cultures. The US is fighting two wars because we have to keep ourselves involved in the politics of the Middle East. Plentiful non-petrochemical energy would mean that these countries would become irrelevant.

What truly irks me about our country's energy strategy (or lack thereof) is the complete absence of political will and forward thinking. We've spent close to a trillion dollars over the last eight years in Iraq and Afghanistan; imagine how many solar panels, wind farms, nuclear reactors, or whatever other kinds of non-petrochemical energy sources could have been brought online with that kind of money. We've been collectively dicking around with fusion research for the last four decades, and the latest projections that I've seen still put practical fusion power out another 20 years. We're spending only about $200 million per year (that's 0.25% of what we spend in Iraq) on what should be a Manhattan Project-style national priority.

This is the real issue. Without stable, abundant, and inexpensive energy, our entire economy stumbles and falters. We have become the pawns of political regimes whose power and influence otherwise would be those of nomadic desert tribesmen.

-

-

Prevent control resize when changing monitors?

in User Interface

Posted

Win7 on my laptop, XP on the customer's desktop. Re-read my second entry on how things spontaneously resize without changing from one machine to another.