Posts posted by John Lokanis

-

Cool. I'll give it a try!

-

I don't have a size control. Just color. I think the size difference you see is because I messed around with the output in yEd a bit.

-

thanks. I'll see if I can make this work.

-

I need some help from a scripting ninja. I need to find all instances of a particular sub-vi in every VI in a project and then find out the value of the constants that are wired to this VI as inputs.

Specifically, I am looking for every place the "Error Cluster From Error Code.vi" is used in a project. I then want to get the value of the constant that is wired to the 'error code' input. Next I want to get the value of the constant wired to the 'error message' input. If the 'error message' input is connected to a 'format into string' function, then I want to traverse to that node and grab the constant wired to the 'format string' input.

I want to output an array with each row having the name of the vi that contains a "Error Cluster From Error Code.vi" instance, the value of the 'error code' constant and the value of the 'error message' or 'format string' constant.
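A minimal sketch of the traversal described above, written in Python because a scripting diagram cannot be shown as text. The block diagram is modeled as plain dictionaries; that model, the `kind` labels and the function names are all hypothetical and are not LabVIEW's VI Scripting object model. In real scripting code, each lookup would be replaced by following the input terminal's wire back to its source object.

```python
from typing import Optional

TARGET = "Error Cluster From Error Code.vi"

def constant_value(source: Optional[dict]) -> Optional[object]:
    """Return the value if the wire source is a constant, else None."""
    if source is not None and source.get("kind") == "constant":
        return source["value"]
    return None

def message_text(source: Optional[dict]) -> Optional[object]:
    """Resolve the 'error message' input: either a constant, or the
    'format string' constant of an upstream Format Into String node."""
    if source is not None and source.get("kind") == "format_into_string":
        return constant_value(source["inputs"].get("format string"))
    return constant_value(source)

def collect_error_constants(vis: dict) -> list:
    """vis maps a VI name to the list of nodes on its block diagram.
    Returns one (vi name, error code, message or format string) row
    per instance of the target sub-vi."""
    rows = []
    for vi_name, nodes in vis.items():
        for node in nodes:
            if node.get("kind") == "subvi" and node.get("name") == TARGET:
                inputs = node["inputs"]
                rows.append((
                    vi_name,
                    constant_value(inputs.get("error code")),
                    message_text(inputs.get("error message")),
                ))
    return rows

# Hypothetical project with two callers of the target sub-vi.
example = {
    "Open Port.vi": [
        {"kind": "subvi", "name": TARGET, "inputs": {
            "error code": {"kind": "constant", "value": 5001},
            "error message": {"kind": "constant", "value": "Port not found"},
        }},
    ],
    "Read Data.vi": [
        {"kind": "subvi", "name": TARGET, "inputs": {
            "error code": {"kind": "constant", "value": 5002},
            "error message": {"kind": "format_into_string", "inputs": {
                "format string": {"kind": "constant", "value": "Read failed on %s"},
            }},
        }},
    ],
}
rows = collect_error_constants(example)
```

Inputs that are not wired to a constant come back as `None`, so those rows can be flagged for manual review.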

So far I have an array of the VIs that use the "Error Cluster From Error Code.vi", and I have been able to pull the reference to the "Error Cluster From Error Code.vi" sub-vi on each instance's BD, but I am unsure how to find the attached constants and pull their values.

I would appreciate any help, even in the form of a list of steps to follow so I can code up a solution.

thanks!

-John

-

I checked out those MS links. I am not sure how to use that information since what I am looking for is a way to connect to each session individually using network protocols (IP and Port) if I use VI Server or TCP. But with Network Streams I can create a unique name. I suppose I could use the sessionID as that unique name, but that would simply replace the machinename/username unique identifier.

As for the bi-directionality, the proposal I put forth would establish two streams between the server and client, one for command/data in each direction.

Regarding the dealer concept, I already handle that in the server code via a subscription mechanism. This is abstracted from the transport mechanism.

I think the problem that Network Streams has is there is no way around the 1:1 connection issue so I would need some alternate system to establish the connections. Perhaps a mix of VI Server and Network Streams might work but would be rather complex.

-

Not sure how to meet all the requirements with the TCP primitives.

1. No Polling. Seems like there will always be a need to poll the connection. If nothing else then to be able to respond to internal shutdown messages.

2. Robust connections. How do you re-establish the connection from the server side due to your flaky network dropping it if the client does not have a unique machine name and port to connect to?

It seems like I would run into the same issues and would have to design a very complex solution from the ground up.
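On the first point, one common pattern (shown here in Python, not with LabVIEW's TCP primitives) is to block on the data connection and a separate wake-up channel at the same time, so the loop sleeps until either a message or an internal shutdown request arrives. This is only a sketch; `receive_until_shutdown`, `wake_sock` and `handle` are hypothetical names, and it does not address the reconnection question in the second point.

```python
import selectors
import socket

def receive_until_shutdown(data_sock, wake_sock, handle):
    """Wait on two sockets at once with no timeout, so nothing is polled.

    data_sock carries messages from the peer; wake_sock becomes readable
    when the local application wants this loop to stop.
    """
    sel = selectors.DefaultSelector()
    sel.register(data_sock, selectors.EVENT_READ, "data")
    sel.register(wake_sock, selectors.EVENT_READ, "shutdown")
    try:
        while True:
            # select() with no timeout sleeps until one socket is readable.
            for key, _ in sel.select():
                if key.data == "shutdown":
                    return "shutdown"
                chunk = data_sock.recv(4096)
                if not chunk:
                    # An empty read means the peer closed the connection.
                    return "disconnected"
                handle(chunk)
    finally:
        sel.close()
```

The application triggers a shutdown by writing a single byte to the other end of the wake-up socket pair.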

-

I have run into a bit of a problem with how I am trying to do networked messaging between a client and a server application. I am considering trying Network Streams as a solution but wanted to see if anyone has had success (or failure) with this solution or has a better idea.

First, a little background on the application:

In my system, there are N servers running on the network. Each of these is running on its own VM with a static IP and unique machine name.

There are also N clients. These are running on physical machines, VMs and a Windows RDS (Remote Desktop Server).

The messages are all abstract class objects. Both client and server have a copy of the abstract classes so they can send/receive any of the messages.

My current solution is to use VI Server to push messages from one application to the other. There is no polling.

Clients and servers can go offline at anytime without warning and the application at the other end of the connection must deal with the disconnect gracefully.

In normal operation, the client will establish connection to a group of servers (controlled by the user). The client provides its machine name and VI Server port. Each server then connects back to the client. All messages then pass via these connections.

Therefore, a client is uniquely identified to the server by its network machine name.

Now the problem. A Windows RDS session does not have a unique machine name. Instead it uses the machine name of the RDS. So, if two users run the client application in two separate RDS sessions at the same time, when they connect to the servers, they will look like the same machine. There is no way to uniquely identify them and route messages to the correct one.

So, I have started looking at network streams as a possible solution. They looked interesting because you could add a unique identifier to the URL so you could have more than one endpoint on a single machine. This looked like it would solve my problem because I could combine the machine name and user name to make my client endpoint unique. But here is the process I would need to follow:

- Server creates an endpoint to listen for client connections.

- Client gets the list of server names (from a central DB) and then tries to connect to each server's endpoint.

- If successful, the client creates a unique reader endpoint for that server and then passes the server the information about how to connect to this endpoint.

- When the server connects to this endpoint, it also creates a unique reader for the client and sends that info.

- The client connects to the server using this unique endpoint and we now have two unique network streams setup between client and server, one in each direction.

- The client then disconnects from the first server endpoint since it now has its own unique connection.

- Both ends will need to have some sort of loop that waits for messages (flattened class objects) and then puts them into the proper local queue for execution within their application.

- Both will also need to be able to send a shutdown message to each of these loops when exiting to close the connection.
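The bookkeeping behind the steps above can be sketched as follows. This models only the naming and routing, not Network Streams themselves; the endpoint naming scheme and the `ClientId` and `Server` classes are hypothetical. The point it illustrates is that keying clients by machine name plus user name keeps two RDS sessions on the same host apart.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class ClientId:
    """Identity of one client session: machine name alone is not unique on RDS."""
    machine: str
    user: str

    def reader_endpoint(self, server: str) -> str:
        """Hypothetical name of the client's reader endpoint for one server."""
        return f"{self.machine}-{self.user}-from-{server}"

@dataclass
class Server:
    name: str
    # Maps each connected client to the endpoint the server writes to.
    clients: dict = field(default_factory=dict)

    def handle_connect(self, client: ClientId) -> str:
        """Record how to reach the client and return the name of the
        server-side reader endpoint reserved for that client."""
        self.clients[client] = client.reader_endpoint(self.name)
        return f"{self.name}-from-{client.machine}-{client.user}"

    def route(self, client: ClientId) -> str:
        """Look up where messages for this client should be written."""
        return self.clients[client]

server = Server("TestServer01")
alice = ClientId("RDS01", "alice")
bob = ClientId("RDS01", "bob")
alice_reader = server.handle_connect(alice)
bob_reader = server.handle_connect(bob)
```

Both sessions share the machine name `RDS01`, yet each gets its own pair of endpoint names.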

My concerns are how to have the server listen for connections with its generic endpoint to multiple clients at once. There is no guarantee that only one client will try to connect at a time. And once the client is fully connected and releases the generic connection, will the server be able to listen for more connections or will that endpoint be broken? How do I reinitialize it?

This is all so much easier with VI Server that I might have to just give up on the RDS solution altogether. But I want to give this my best shot before I do that.

Thanks for any tips or ideas or pointing out any pitfalls I missed.

-John

-

That works! What a weird behavior. I think the reason I only see this rarely is because almost all of my code is in a class namespace. In the most recent case, I made a new VI outside of a class to do the same function as the original method because I wanted to use the function in multiple classes.

FWIW, the VI does not have to be 'in memory' necessarily. Just has to be in the project.

-

Came across this thread today when looking for a solution to this issue. This has been happening to me occasionally. I have not found a solution yet.

I am not looking for a 'Remove from Project' menu item but rather a 'Remove from Library' option when trying to get rid of a method I no longer need.

The offending method cannot be dragged out of the class.

If I delete it, the class complains and I cannot remove the reference to it still.

The only solution I have found is to hack the .lvclass XML and manually remove any reference to the method.

This definitely needs a CAR. I am seeing this in LV2015 so it is still broken.

-

Thanks for the info. I suspected something like this. I finally got the IT guys to dig into this and they found an issue with the NIC configuration and drivers on the VM. (This VM is dual homed across our corporate network and a private testing network in my lab).

Seems that LabVIEW is much more susceptible to issues like this than other languages.

In the end, I changed my code to use the .NET call to the Environment.MachineName property. And I took the further step to wrap that in a FGV that sets the value on first call and then returns it on all subsequent calls. This will protect me from any future issues that introduce a delay when getting this data.
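The "set on first call, return on every later call" behavior of that FGV looks like this in a text language. This is a Python sketch of the caching idea only; it uses `socket.gethostname` as a stand-in for the .NET property, and `machine_name` is a hypothetical name.

```python
import functools
import socket

@functools.lru_cache(maxsize=None)
def machine_name() -> str:
    """Look the host name up once; later calls return the cached value."""
    return socket.gethostname()
```

Because the lookup runs only once, a slow name resolution costs one delay at startup instead of one per message.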

-

Here is a fun fact I just discovered:

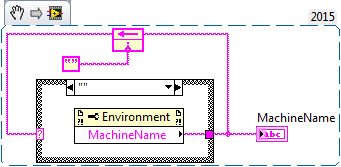

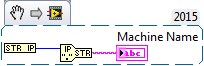

If you want to get the host name of your machine in LabVIEW, there is a function for that:

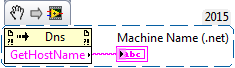

If you want to get the host name using .NET, then you can call the method System.Net.Dns.GetHostName using an invoke node:

https://msdn.microsoft.com/en-us/library/system.net.dns.gethostname(v=vs.100).aspx

Now the fun part:

If you call each of these functions on a Windows 7 OS they execute fairly quickly:

LabVIEW Method: 0.000289767 seconds consistently.

.NET Method: 0.182981 first call, 0.000417647 seconds subsequent calls (must be a cache)

Next, try the same thing on a Windows Server 2012R2 OS:

LabVIEW Method: 4.53384 seconds (WTF LabVIEW???)

.NET Method: 0.187395 first call, 0.000350523 seconds subsequent calls (again, must be a cache)

So, let's say you are using the machine name as a unique identifier to manage a networked messaging architecture. And as a result, you are often getting the machine name to tag a message you are sending with the sender's 'name'. And you decide to run your system on Windows Server 2012R2 OS. It will *work* but your performance will be down the tubes...

Any ideas on what could possibly be causing this? I know I could come up with my own cache of the host name in my code and then access it via FGV or something similar, but that is no excuse for this dismal performance.

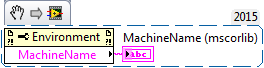

FWIW, there is yet another way to do this:

https://msdn.microsoft.com/en-us/library/system.environment.machinename.aspx

execution time: 7.91631E-5 seconds on Both OSes.
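For anyone who wants to repeat the first-call versus subsequent-call comparison outside LabVIEW, here is a rough Python timing harness. It times Python's `socket.gethostname`, not the LabVIEW or .NET calls measured above, so the numbers are not comparable; `time_calls` is a hypothetical helper.

```python
import socket
import time

def time_calls(fn, repeats=5):
    """Call fn repeatedly and return the duration of each call in seconds."""
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        durations.append(time.perf_counter() - start)
    return durations

timings = time_calls(socket.gethostname)
print(f"first call: {timings[0]:.6f} s, best later call: {min(timings[1:]):.6f} s")
```

Keeping the first call separate from the rest is what exposes a cache, as seen with the .NET method.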

This leads me to the fact that a lot of the advice out there on this topic may need updating.

http://digital.ni.com/public.nsf/allkb/4B3877DB13370AA586257542003945A9

https://decibel.ni.com/content/docs/DOC-3917

http://forums.ni.com/t5/LabVIEW/Get-Computer-Name/td-p/101433

-John

-

Here is a fun thing I just had to share:

Started a build (that takes about 20 mins to run) and walked away from my machine to work on something else. Came back 25 mins later and the NI Updater had popped up on my screen while I was away. When I checked the build I saw that it had failed. When I looked at the explanation, it said another NI process was running (the updater) and that blocked the build from finishing.

Nice one NI, your nag-ware just wasted 30 mins of my time. If anyone is running a CI server, be sure to disable the NI Update tool.

-

Just bringing this thread back from the past. I ran into this exact same issue in LV2015. Here are some details:

Sending a message from app1 to app2.

Message payload is a class with some data members also being objects (composition).

Everything was working fine in IDE and EXE.

Changed one of the data members from a 2D array to an array of objects with the following structure:

Parent class with abstract methods. Two child classes with concrete methods. Two grandchildren classes with different private data than one of the child classes. (the grandchildren only inherit from one of the children to specify some finer details for that branch in the tree).

sending message from app1 to app2 in the IDE continues to work fine.

Build both EXEs and suddenly error 1403 rears its ugly head.

After much work trying to get to the bottom of this, I find this post.

I place a static copy of the child and grandchild classes on the BD of the top level VI in the app2 EXE.

Problem solved!

Oh, and I never called any flatten functions when sending my message. Instead I am calling a VI in app2 from app1 using call by reference node. (This VI puts the message object in the receiver's queue.)

So, apparently, the call by reference node uses the flatten/unflatten object function under the hood. And also, that function has no problem resolving the class type in the IDE but fails in an EXE.

I have ensured that app2 has all the classes in its project and they are even marked 'always include' in the app builder. So, they are in the EXE but maybe not in memory already. Still, it should be able to find them and unflatten the data without the static links I had to add. And in any case, this error message is extremely misleading because there is no corruption happening. The RTE just cannot find the class definition.

I hope that a CAR can be created to finally address this situation. And I hope future versions of LabVIEW can do a better job of reporting the correct error. Even better would be a version of LabVIEW where code ran the same way in an EXE as it does in the IDE.

-

I need to build an installer for my EXE so that it gets installed for all users of the target machine. This includes users who have yet to setup an account on the machine.

I see how I can create a shortcut on the 'All Users Desktop' but I cannot see how to also have a shortcut appear on the start menu for all users.

I am trying to install my app on a Windows Terminal Server (aka Windows Remote Desktop Server) so any user can log in and have access to my application.

Is this even possible with the installers LabVIEW is capable of building?

thanks,

-John

-

Doh! I knew it had to be something simple. A Lokanis always pays his debts.

See me at the BBQ to collect your beer.

-

Here is a simple example of what I am trying to do.

I tried changing from string to U8 array in the example but it made no difference.

I'll buy a beer at the LAVA BBQ next week for the first person to solve this!

-

Not sure what category to put this one into so I am trying here in application design.

I need to read a VI off disk on one machine, package the data up and send it across the network using my messaging system and then reconstitute it on the destination machine. Then I want to load and run it.

My VI is pretty simple and contains no non-vi.lib subvis

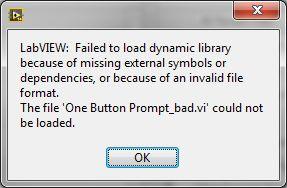

I have tried using the 'Read from Binary File' to get a string of the data and then 'Write to Binary File' to save it at the destination. The file size looks ok but the VI will not open. I get this error message:

Also, I have checked the MD5 of both files and they do not match.

So, what is the trick to getting this to work? Is there a data type I should be using other than string to send the data? Is there a special way to save the data at the destination so it creates a valid VI file?
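A byte-exact round trip should leave the MD5 unchanged, so a mismatch means something was added to or altered in the byte stream. The Python sketch below shows a clean copy next to a copy with a 4-byte length header written in front of the data. Whether a header like that is the cause here is an assumption, not something the thread confirms, though the 'prepend array or string size?' input of Write to Binary File is one place to look. The file names and helper functions are hypothetical.

```python
import hashlib
import os
import struct
import tempfile

def md5_of(path):
    """Return the MD5 hex digest of a file read in binary mode."""
    with open(path, "rb") as f:
        return hashlib.md5(f.read()).hexdigest()

def read_payload(path):
    """Read the raw bytes with no text conversion."""
    with open(path, "rb") as f:
        return f.read()

def write_payload(path, payload):
    """Write the raw bytes exactly as given, with no header."""
    with open(path, "wb") as f:
        f.write(payload)

with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "source.vi")
    good = os.path.join(tmp, "copy.vi")
    bad = os.path.join(tmp, "prefixed.vi")

    write_payload(src, bytes(range(256)) * 4)  # stand-in for a VI's bytes
    payload = read_payload(src)

    write_payload(good, payload)
    # Same data, but with a big-endian 32-bit length written first.
    write_payload(bad, struct.pack(">i", len(payload)) + payload)

    copy_matches = md5_of(src) == md5_of(good)
    prefixed_matches = md5_of(src) == md5_of(bad)
    size_difference = os.path.getsize(bad) - os.path.getsize(src)
```

A four-byte difference is easy to miss when only eyeballing file sizes, which is why comparing exact byte counts alongside the hash is worthwhile.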

thanks for any help,

-John

-

Yes, I could undefer the panel when the resize completes, but where can I turn defer on? I need a 'panel resize?' filter event or something equivalent.

-

This seems like it would be a simple thing to fix but I can't seem to find a way and could not find any posts on NI or LAVA about it so here goes:

I have a string control with a vertical scroll bar visible. This string control fills the entire pane. I have it set to scale objects with pane. I also have the pane set to scale objects as the pane resizes.

The problem I have is when the user resizes the window, the string control will scroll to the top. So, if the user is looking at some data at some point below the top, as soon as they try to make the window bigger to see more data, they lose their place as the scroll position is changed to the top again. This is very frustrating.

I have tried to find a programmatic solution. There is a panel resize event that I can use to reset the scroll position back to the desired spot. Unfortunately there is no 'panel resize?' event to capture the current scroll position. I have been able to store the position in a SR when the mouse moves over the control using another event. But, this still results in very jerky behavior when resizing as LabVIEW keeps scrolling the string to the top while my code fights to keep it at the right spot.

Does anyone know a better solution to this? And can someone at NI explain why this behavior exists and if it will be corrected in a future release? I cannot think of a benefit to having the control behave the way it does.

I have attached a simple example demonstrating this effect and my current attempt to address it.

-John

-

Not sure I understand. You wouldn’t be changing any functionality; your DD methods for grandparent and parent would behave as before, but you would have the ability to call the static method directly from the child.

The problem would be the static method would need to be part of the grandparent, which in this case is basically my version of 'Actor Core' for my architecture. So, it is not the right place to make customizations for a particular use case.

I think the cleanest solution is to strip the functionality that is common to the grandchild and the class I wanted to have inherit from it into a separate standalone reuse VI. Then I can call that from the override VI in the grandchild and from the new class that will be a sibling of the parent.
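The shape of that refactoring, sketched in Python with hypothetical class names (`Actor` as the grandparent, `FirstActor` as the parent, `SecondActor` as the grandchild, `ThirdActor` as the new sibling of the parent). The shared behavior lives in a standalone function that neither class owns.

```python
def common_behavior(log):
    """Standalone reuse routine, owned by neither class."""
    log.append("common behavior")

class Actor:
    """Grandparent: provides the message handling."""
    def run(self, log):
        log.append("message handling")

class FirstActor(Actor):
    """Parent: adds its own behavior, then calls the parent method."""
    def run(self, log):
        log.append("first-actor behavior")
        super().run(log)

class SecondActor(FirstActor):
    """Grandchild: its override calls the shared routine."""
    def run(self, log):
        common_behavior(log)
        super().run(log)

class ThirdActor(Actor):
    """New sibling of the parent: reuses the routine without inheriting
    anything from FirstActor."""
    def run(self, log):
        common_behavior(log)
        super().run(log)

second_log, third_log = [], []
SecondActor().run(second_log)
ThirdActor().run(third_log)
```

The new class picks up the shared behavior and the grandparent's message handling, and skips the parent's customizations entirely.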

Thanks for all the suggestions. It really helped me sort this out.

-

Yes. Sorry for the verbose description. I did end up making a test project to prove/disprove this and discovered that even though I cast my object from grandchild to grandparent and then called the method in the grandparent directly, it still tried to run the grandchild's implementation, which results in recursion, and the VI was halted at that point. So, there does not seem to be a way to do this.

And the point about the static method is correct, but I don't have the option of modifying the grandparent class as it is a key part of the system architecture.

My setup is similar to Actor Framework. There is a top level actor class that implements the message handler. All children of this class add on additional functionality but in parallel call the parent method to get the message handling functionality. This works well in most situations.

But, in my case, I implemented an Actor that has certain behaviors for one use case. I then made a child of this actor that uses the same 'run' method but overrides some of the other methods inside the 'run' to customize the actor to a slightly different use case.

I now have a third use case that differs from the first actor enough that just overriding some of the methods that are part of the 'run' method will not meet my needs. *BUT* it does need some of the same methods used in the second actor that I created to override some of the functionality. I really wanted to inherit those methods but it looks like I will have to instead make a new actor that inherits from the top level and I will have to duplicate those parts that are in the second actor's class. This is a case where multiple inheritance or maybe traits would be useful. I guess I am SOL.

thanks for the help.

-John

-

I have a class hierarchy with a grandparent that implements a message handler. I have a child class that overrides the message handler to add additional parallel functionality but also calls the parent method to get the message processing functionality.

I now want to make a grandchild that also overrides the message handler method to implement different parallel functionality but still needs to call the grandparent implementation to get the message processing functionality. (I am doing this because I want to inherit some methods from the child in the middle.)

The problem is, the grandchild wants to call the child implementation when I use 'call parent'. But I don't want this middle implementation, I want the grandparent's version. I cannot simply place the grandparent's method because that dispatches back to the grandchild (making the method recursive).

I think I solved this by using 'to more generic' before the call to the grandparent's method and 'to more specific' after the call. But in the grandparent's method, it calls message 'Do' methods that need the data on the wire to be from the grandchild. Will the 'to more generic' call strip that data from the wire or will the call to the 'Do' inside the grandparent pass the object as the grandchild so when I cast it to the grandchild, it will work?

Hope that was not too convoluted. Any help is appreciated.

-John

-

Tried that. Cannot cast the webclient() to the webrequest() or the httpwebrequest(). I think it is because the webclient() is not a child but rather uses webrequest(). So, I need to create a webrequest() object, set the timeout and then tell webclient() to use my version.

But I have no idea how to create a .net object that inherits from the base class and then instantiate the webclient using my new object.

-

I am calling a web service using system.net.webclient(). So far it is working fine but now I want to set the timeout to something other than the default. Unfortunately, the timeout is not an exposed property for webclient(). From what I have read, webclient() is a child of the httpwebrequest() class, and that class has a timeout that can be set. But httpwebrequest in system (2.0.0.0) has no public constructors. All the examples on the web show how to override the webrequest getter to set a different timeout. Unfortunately this exceeds my .net skills. Does anyone know how to solve this in LabVIEW?

Here is the C# example:

NOTE: I am trying to solve this problem in LabVIEW 2011 so am stuck with .NET 2.0 for the system assembly.

thanks,

-John

Insert class in hierarchy

in Object-Oriented Programming

I have a project with a large number of classes that inherit from a common parent. The parent has a method that I want to override in all the child classes. The override method will be the same for all children. Instead of creating individual overrides in each child, I want to create a new class that inherits from the parent and only includes the common override method. I then want to change all the children that currently inherit from the parent to now inherit from this new class. That allows them all to get the new override method.

What I don't want to do is manually change the inheritance on each of these child classes. Instead I want to 'insert' the new class between the parent and all the children.
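The bookkeeping of such an 'insert' can be sketched as below, with the hierarchy modeled as a plain child-to-parent map. This is not LabVIEW scripting and does not touch any .lvclass files; it only shows which inheritance links a tool would need to change. All class names are hypothetical.

```python
def insert_class(parents, old_parent, new_class):
    """Return a new child -> parent map with new_class inserted between
    old_parent and each of its direct children."""
    updated = {
        child: (new_class if parent == old_parent else parent)
        for child, parent in parents.items()
    }
    # The new class itself inherits from the original parent.
    updated[new_class] = old_parent
    return updated

hierarchy = {
    "Parent": None,
    "ChildA": "Parent",
    "ChildB": "Parent",
    "GrandchildA1": "ChildA",
}
result = insert_class(hierarchy, "Parent", "Common Override")
```

Only the direct children of the parent are re-pointed; deeper descendants keep their existing links and get the override through the chain.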

Does anyone know of an easy way to do this or maybe have a tool they scripted to solve this?

thanks,

-John