Posts posted by hooovahh

-

I am unaware of a time limit on editing your own posts. You are welcome to use the Report to Moderator feature to request fixes. I realize small things are easier to ignore than to report.

-

6 minutes ago, ShaunR said:

Do you see the unresponsiveness in dadreamer's example?

The newest version of LabVIEW I have installed is 2022 Q3. I had 2024, but development on my main project slowed down so much that I rolled back. I think I have some circular library dependencies that need to be resolved. Still, it is the same code and it is way slower. In 2022 Q3 I opened the example here and it locked up LabVIEW for about 60 seconds. Once it was open, creating a constant took on the order of 1 or 2 seconds. Using QuickDrop to create controls on a node (CTRL+D) takes about 8 seconds, and undoing that operation takes about 6. Basically any drop, wire, or delete operation takes 1 to 2 seconds. Very painful. If you gave this to NI they'd likely say you should refactor the VI into smaller chunks instead of one big VI. But the point is I've seen this type of behavior, to a lesser extent, on lots of code.

-

22 minutes ago, ShaunR said:

There was a time when on some machines the editing operations would result in long busy cursors of the order of 10-20 secs - especially after LabVIEW 2011. Not necessarily XNodes either (although XNodes were the suspect). I don't think anyone ever got to the bottom of it and I don't think NI could replicate it.

It has gotten worse in later versions of LabVIEW. I certainly think the code influences this laggy unresponsiveness, but the same code seems to get worse the later the version I use.

-

In the past I have used the IMAQ drivers for getting the image, which on its own does not require any additional runtime license. It is one of those lesser-known secrets that acquiring and saving the image is free, but any of the useful tools have development and deployment licenses associated with them. I've also had mild success with leveraging VLC. Here is the library I used in the past, and here is another one I haven't used but that looks promising. With these you can have a live stream of a camera as long as VLC can talk to it, and then pretty easily save snapshots.

EDIT: The NI software for getting images through IMAQ for free is called "NI Vision Common Resources". This LAVA thread is where I first learned about it.

-


38 minutes ago, dadreamer said:

In addition to the LV native method, there are options with .NET and command prompt: Get Recently Modified Files.

If you are in a Windows environment, and have many files to process, this is probably going to be faster. There probably are several factors in determining when doing this in .NET is the better solution.

-

3 hours ago, Neil Pate said:

Done some simple testing.

On a directory containing 838 files it took 60 ms.

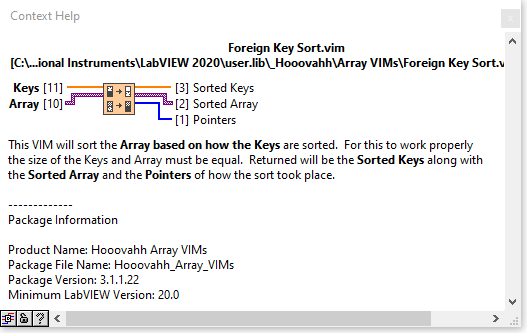

That's how I'd do it. Then combine that with the Foreign Key Sort from my Array package, putting the Time Stamps into the Keys, then paths into the Arrays, and it will sort the paths from oldest to newest. Reverse the array and index at 0, or use Delete From Array to get the last element, which would be the newest file.
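For readers who think in text languages, the same idea can be sketched in Python: collect each file's modification time, sort the paths using the time stamps as keys, and take the last element as the newest file. This is only an analogue of the approach, not the Foreign Key Sort VI from the Array package, and the function name `newest_file` is hypothetical.

```python
import os
import tempfile
import time


def newest_file(directory):
    """Return the most recently modified file in directory, or None if it has no files."""
    # Pair each file path with its modification time stamp (the sort key).
    entries = [
        (entry.stat().st_mtime, entry.path)
        for entry in os.scandir(directory)
        if entry.is_file()
    ]
    if not entries:
        return None
    # Sort oldest to newest by time stamp, then take the last element.
    entries.sort(key=lambda pair: pair[0])
    return entries[-1][1]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        for offset, name in enumerate(["a.txt", "b.txt", "c.txt"]):
            path = os.path.join(folder, name)
            with open(path, "w") as handle:
                handle.write(name)
            # Set explicit time stamps so the ordering is unambiguous.
            stamp = time.time() - 100 + offset
            os.utime(path, (stamp, stamp))
        print(os.path.basename(newest_file(folder)))
```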

-


3 hours ago, ShaunR said:

Have you played with scripting event prototypes and handlers from JSON strings?

No but that is a great suggestion to think about for future improvements. At the moment I could do the reverse though. Given the Request/Reply type defs, generate the JSON strings describing the prototypes. Then replacing the Network Streams with HTTP, or TCP could mean other applications could more easily control these remote systems.
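As a rough illustration of that reverse direction, here is a Python sketch that takes a request type and a reply type and emits a JSON string describing the prototype. Everything in it is an assumption made for illustration: the dataclasses stand in for the LabVIEW type defs, and the field names and JSON layout are invented, not the format the real system uses.

```python
import dataclasses
import json
from dataclasses import dataclass


@dataclass
class SetPsuOutputRequest:  # hypothetical request type def
    channel: int
    voltage: float
    enabled: bool


@dataclass
class SetPsuOutputReply:  # hypothetical reply type def
    success: bool
    message: str


def describe(type_def):
    """Map each field name of a dataclass to the name of its type."""
    return {
        f.name: f.type if isinstance(f.type, str) else f.type.__name__
        for f in dataclasses.fields(type_def)
    }


def prototype_json(event_name, request_type, reply_type):
    """Build a JSON description of one event prototype (layout is illustrative)."""
    prototype = {
        "event": event_name,
        "request": describe(request_type),
        "reply": describe(reply_type),
    }
    return json.dumps(prototype, indent=2)


if __name__ == "__main__":
    print(prototype_json("Set PSU Output", SetPsuOutputRequest, SetPsuOutputReply))
```

A non-LabVIEW client could read a description like this to learn which fields a request expects before sending it over HTTP or TCP.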

-

Yeah I tried making it as elegant as possible, but as you said there are limitations, especially with data type propagation. I was hoping to use XNodes, or VIMs to help with this, but in practice it just made things overly difficult. I do occasionally get variant to data conversion issues, if say the prototype of a request changes, but it didn't get updated on the remote system. But since I only work in LabVIEW, and since I control the release of all the builds, it is fairly manageable. Sometimes to avoid this I will make a new event entirely, to not break backwards compatibility with older systems, or I may write version mutation code, but this has performance hits that I'd rather not have. Like you said, not always elegant.

-

12 minutes ago, ShaunR said:

IC. so you have created a cloning mechanism of User Events - reconstruct pre-defined User Event primitives locally with the data sent over the stream?

Yes. The transport mechanism could have been anything, and as I mentioned I probably should have gone with pure TCP but it was the quickest way to get it working.

-

3 hours ago, ShaunR said:

Yes. It is the "send user event" that I'm having difficulty with. User events are always local and require a prototype so how do you serialise a user event to send it over a stream?

Variants and type defs. There is a type def for the request, and a type def for the reply, along with the 3 VIs for performing the request, converting the request, and sending the response. All generated with scripting along with the case to handle it. Because all User Events are the same data type, they can be registered in an array at once, like a publisher/subscriber model. Very useful for debugging since a single location can register for all events and you can see what the traffic is. There is a state in a state machine for receiving each request for work to be done, and in there is the scripted VI for handling the conversion from variant back to the type def, and then type def back to variant for the reply.

When you perform a remote request, instead of sending the user event to the Power Supply actor, it gets sent to the Network Streams actor. This actor receives the User Event, then sends the data as a network stream, along with some other housekeeping data, to the remote system. The remote system has its own Network Stream actor running, which receives it, pulls out the data, and sends the User Event to its own Power Supply actor. That actor does the work, then sends a user event back as the reply. The remote Network Stream actor gets this, then sends the data back to the host using a Network Stream. Now my local Network Stream actor gets it and generates the user event as the reply. The reason for all this complexity is that it makes the system very simple to use.
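The variant and type def idea described above can be sketched in Python. This is a loose analogue under stated assumptions, not the scripted LabVIEW code: a dictionary envelope plays the role of the variant, dataclasses play the role of the type defs, JSON bytes stand in for the Network Stream, and a single bus object is shared by both sides for brevity. All names, and the 0 to 60 V limit, are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class SetOutputRequest:  # hypothetical request type def
    voltage: float


@dataclass
class SetOutputReply:  # hypothetical reply type def
    ok: bool


class EventBus:
    """All events share one envelope type, so one subscriber can see all traffic."""

    def __init__(self):
        self.subscribers = []

    def subscribe(self, callback):
        self.subscribers.append(callback)

    def publish(self, name, payload):
        # The envelope plays the role of the variant: a name plus untyped data.
        envelope = {"name": name, "data": asdict(payload)}
        for callback in self.subscribers:
            callback(envelope)
        return envelope


def to_wire(envelope):
    """Serialize the envelope so it could be sent over any transport."""
    return json.dumps(envelope).encode("utf-8")


def from_wire(raw, type_def):
    """Rebuild the typed value on the receiving side (variant back to type def)."""
    envelope = json.loads(raw.decode("utf-8"))
    return envelope["name"], type_def(**envelope["data"])


def handle_set_output(request):
    """Stand-in for the remote actor doing the work (the limit is arbitrary)."""
    return SetOutputReply(ok=0.0 <= request.voltage <= 60.0)


if __name__ == "__main__":
    traffic = []
    bus = EventBus()
    bus.subscribe(traffic.append)  # debug listener registered for every event

    raw = to_wire(bus.publish("Set PSU Output", SetOutputRequest(voltage=12.0)))
    name, request = from_wire(raw, SetOutputRequest)  # remote side
    reply_raw = to_wire(bus.publish(name + " Reply", handle_set_output(request)))
    _, reply = from_wire(reply_raw, SetOutputReply)  # back on the host
    print(reply, len(traffic))
```

Because every event travels in the same envelope type, the debug listener needs no knowledge of individual requests to log all of them.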

-

3 hours ago, ShaunR said:

How does this work?

I have a network stream actor (not NI's actor but whatever) that sits and handles the back and forth. When you want work to happen, like "Set PSU Output", you can state which instance you are asking (because the actor is reentrant) and which network location you want. The same VI is called either way: it can send the user event to the local instance, or it will send a user event to the Network Stream loop, which sends the request for a user event to be run on the remote system, and then reverses the path to send the reply back if there is one. I like the flexibility of having "Set PSU Output" be the same VI whether I am running locally or sending the request to be done remotely. So when I talked about running a sequence, it is the same VIs being called, just with their destination settings set appropriately.
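A minimal Python sketch of that "same call, different destination" idea follows. It is an assumption-laden illustration rather than the actual architecture: the class names are hypothetical, and a direct method call on a peer object stands in for the Network Stream transport.

```python
from dataclasses import dataclass, field


@dataclass
class PowerSupplyActor:
    """Stand-in for one reentrant actor instance."""
    name: str
    output_volts: float = 0.0

    def handle(self, command, value):
        if command == "Set PSU Output":
            self.output_volts = value
            return f"{self.name}: output set to {value} V"
        return f"{self.name}: unknown command {command}"


@dataclass
class System:
    """One machine: its actors plus the links to other machines."""
    location: str
    actors: dict = field(default_factory=dict)
    peers: dict = field(default_factory=dict)  # stands in for the network transport

    def request(self, command, value, instance, location=None):
        target = location or self.location
        if target == self.location:
            # Local destination: deliver straight to the actor instance.
            return self.actors[instance].handle(command, value)
        # Remote destination: hand off to the peer, which runs the same call
        # locally and returns the reply.
        return self.peers[target].request(command, value, instance)


if __name__ == "__main__":
    host = System("host", actors={"PSU 1": PowerSupplyActor("PSU 1")})
    rt = System("rt", actors={"PSU 1": PowerSupplyActor("PSU 1")})
    host.peers["rt"] = rt

    print(host.request("Set PSU Output", 5.0, "PSU 1"))
    print(host.request("Set PSU Output", 12.0, "PSU 1", location="rt"))
```

The caller uses one entry point in both cases; only the `location` argument changes.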

-

I work in a battery test lab and was the architect for the testing platform we use. Our main hardware is an embedded cDAQ or cRIO running Linux RT. This is the hardware that runs the actual sequence and talks to the various instruments. We use the RT platform more for reliability and less for its determinism. Timing is of course important, but we don't have any timed loops. Millisecond precision is really all we care about. The architecture is built around User Events with asynchronous loops dedicated to specific tasks. These events can be triggered from the RT application, or from a device on the network using Network Streams. A low-level TCP implementation would probably be better, but the main sequence itself isn't sent step by step from Windows. Instead the sequence file is downloaded via WebDAV, and the RT target is then told to run it. The RT target reads the file and performs the steps one at a time. From this point Windows is just for monitoring status and processing logs. This is important to us since Windows PCs might restart, or update, or blue screen, or hit any number of other weird situations, and we wanted our test to just keep going. This design does mean that any external devices that use Windows DLLs for communication can't be used. But network-controlled power supplies, loads, cyclers, chambers, and chillers are all fine. Same with RS-232/485. Linux RT supports VISA, and we plugged in a single USB cable to a device that gives us 8 serial ports. If money were no object I suppose we would have gone with PXI, but it is pretty much overkill for us.

On the Windows side we do also have a sequence editor. The main reason we didn't go with TestStand is because we want that sequence to run entirely on Linux RT. Because the software communicates over User Events, and Network Streams sending User Events, we can configure the software to run entirely on Windows if we want. This of course takes out many of the safety things I talked about, but to run a laptop to gather some data, control a chamber, battery, cycler, or chiller for a short test is very valuable for us.

As for the design, we don't use classes everywhere. Mostly just for hardware abstraction. The main application is broken up into Libraries, but not Classes. If I were to start over maybe I would use classes just to have that private data for each parallel loop, but honestly it isn't important, and a type def'd cluster works fine for the situation we use it in.

-

Thanks for still monitoring these forums to help give answers. It sounds like the intent is to allow free use in any context, but being the literal person I am, I interpret accessing the block diagram as "discovering the source code", which would violate it. This is also a good exercise in reading the license agreements for things you download.

-

On 4/25/2025 at 12:41 AM, Michael Aivaliotis said:

I put a temporary ban on inserting external links in posts (except from a safe list). We'll see what affect it has.

I like this solution. It doesn't ban external links entirely; instead it asks a mod or admin to approve the post with external links. I got an email to approve a post, just like I would get an email for reported spam.

-

Your reporting of spam is helpful. And just as you are doing, one report per user is enough, since I ban the user and all their posts are deleted. If spam gets too frequent I notify Michael and he tweaks dials behind the scenes to try to help. This might mean temporarily banning new accounts from certain IP blocks or countries, or banning key words in posts. He also upgrades the forum's platform tools occasionally, and it gets better at detecting and rejecting spam.

-


According to this page there isn't a free trial of the base software.

https://www.ni.com/en/shop/labview/select-edition.html

But it does show the differences between the editions. The main things you won't have are the ability to make standalone applications, the Report Generation and Database Connectivity toolkits, and Web Services. There are other differences listed on that page, but in practice those feel like the most significant ones.

-

Well, regardless of the reason, what I was trying to say is that references opened in a VI get closed when the VI that opened them goes idle.

-


This sounds like the expected behavior of LabVIEW. Many references are cleaned up by the automatic garbage collector when the VI that created them goes idle. I'd suggest redesigning your software to handle this in a different way, such as initializing the interface in a VI that doesn't go idle.

-

I think everything in here is the expected behavior. As Crossrulz said, an array can be empty if one of its dimensions is zero while the other dimensions aren't. Yes, this can cause things like a FOR loop to execute with an empty array.

Let's say I have some loop talking to N serial devices. Each device will generate an array of values. So if I index those values coming out of the loop, it will create a 2D array. Now let's say I want to close my N serial devices. A programmer may ask how many devices there are. Well, you can look at the number of rows in that 2D array and it will be the number of devices that were used earlier. We might run that 2D array into a FOR loop and close each of them. But what if each of the N serial devices returned an empty array? If arrays worked like you expected, the 2D array would be empty and the loop would run zero times. But LabVIEW knows the 2D array has N rows and 0 columns, so it can run the loop N times. This isn't the exact scenario, but something like this is a reason why you might want your 2D array to be empty but have a nonzero number of rows. You want to run some other loop on the rows, even if the columns are empty.
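The same situation can be imitated in Python as a rough analogue. Python's nested lists are not true 2D arrays like LabVIEW's, so this is only a sketch of the idea, and the function names are hypothetical: three devices each return zero values, so the structure holds no elements at all, yet it still has three rows to loop over.

```python
def read_devices(device_count, values_per_device):
    """Build one row per device; each row holds that device's readings."""
    return [[0.0] * values_per_device for _ in range(device_count)]


def close_devices(readings):
    """Run one 'close' per row, regardless of how many values each row holds."""
    closed = 0
    for _row in readings:
        closed += 1
    return closed


if __name__ == "__main__":
    readings = read_devices(device_count=3, values_per_device=0)
    total_elements = sum(len(row) for row in readings)
    # Rows, total elements, and loop iterations: prints "3 0 3".
    print(len(readings), total_elements, close_devices(readings))
```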

-

Glad it eventually worked for you. After several spammers took over LAVA extra restrictions were put on account creation. I suspect this is part of the issue you had.

-

32 minutes ago, crossrulz said:

To be honest, I always thought those should be in the Visible Items menu.

I never thought about it because of muscle memory. But logically it should be there.

-

I did something similar years ago and posted the code here, with a YouTube link demoing the graph functions. I never actually used it on a real project but put some decent time into the UX. It also allows for dragging the graph out into a new semi-transparent window. It is not a generic framework; it is mostly a proof of concept that could be used in an application, if you don't mind the various limitations and restrictions.

-

I don't have anything to contribute to the development here. Only to say that I really like this type of function, and looking at your source it sure looks efficient. Thanks for sharing.

-


On 1/25/2025 at 7:57 AM, Rolf Kalbermatter said:

Not likely. I think the Administrators always resisted such requests unless there was a real legal matter involved. But LavaG had several nervous breakdowns over the years, either because a harddisk crashed or forum software somehow got in a fit. It was always restored as well as possible, but at at least one of those incidents a lot got lost. Some of that was consequently restored from archives other people had maintained from their push notifications from this website, but quite a bit got lost then.

This is pretty accurate. I know one ex-coworker in particular had an RSS feed push every post on LAVA to his Outlook. When LAVA had a major crash, his Outlook was used to restore as much content as possible. As for content moderation, we try to police ourselves enough to not get on NI's bad side. I have very rarely needed to intervene. One time I had to ask a user to tread carefully on the topic they were sharing, but I did not delete any content or post.

Thanks for the additional history. Jim has mentioned this story to me in the past but I didn't remember the details. I believe there was a meeting with NI where they were insisting that the scripting code wouldn't be made public, and someone called their bluff, basically stating that the tools for scripting were already being made by the community, and that if these were good enough for NI to use, we should also have access to them.

Need to know about DVR

in LabVIEW General

Posted

It was just as bad as Gemini in my work with Task Scheduler. It is far too much to paste in here, but I created a Task with the command line, provided that command, and then said: "This all works but I'd like to turn off the feature Stop the task if it runs longer than 3 days, and turn off the Start the task only if computer is on AC Power. What command line switches do I need for this?"

Gemini made up switches, and I had to keep pasting back the error I got, over and over, with Google eventually telling me it isn't possible.

I just hit the limit on free Grok messages and it had similar behavior. I'd run the command it gave, which came with a paragraph explaining how it should work. I'd reply with the error. It would tell me why the error occurred and what command to use instead. That would generate a new error, which I would report, and it would do the same again. Over and over until I couldn't chat with it anymore.

I use AI primarily for writing assistance. Coding or technical assistance looks great on the surface, but in practice it is lacking.