Search the Community

Showing results for tags 'flatten to string'.

-

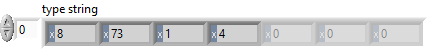

I discovered a potential memory corruption when using the Variant To Flattened String and Flattened String To Variant functions on Sets.

Here is the test code:

In this example, the set is serialized and de-serialized without changing any data. The code runs in a loop to increase the chance of crashing LabVIEW.

Here is the type descriptor. If you are familiar with type descriptors, you'll notice that something is off:

Here is the translation:

- 0x0008 - Length of the type descriptor in bytes, including the length word (8 bytes) => OK
- 0x0073 - Data type (Set) => OK
- 0x0001 - Number of dimensions (a set is essentially an array with dimension size 1) => OK
- 0x0004 - Length of the type descriptor for the internal type in bytes, including the length word (4 bytes) => OK
- ???? - Type descriptor for the internal data type (should be 0x0008 for U64) => What is going on?

It turns out that the last two bytes are truncated. The Flattened String To Variant function actually reports error 116, which makes sense because the type descriptor is incomplete, BUT it does not always return an error! In fact, half of the time, no error is reported and LabVIEW eventually crashes (most often after adding a label to the numeric type in the set constant). I believe that this corrupts memory, which eventually crashes LabVIEW.

Here is a video that illustrates the behavior: 2021-02-06_13-43-58.mp4

Can somebody please confirm this issue?

LV2019SP1f3 (32-bit)

Potential Memory Corruption when (de-)serializing Sets.vi
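For readers who want to check the byte arithmetic outside LabVIEW, here is a rough Python sketch of the translation above. It assumes the layout exactly as described in the post (big-endian 16-bit words: total length, type code, dimension count, inner descriptor length, then the inner descriptor). The function names and the "complete" example are hypothetical and only illustrate the check; this is not how LabVIEW itself validates descriptors.

```python
import struct

# Length word + type code + dimension count, 2 bytes each (layout taken from the post).
HEADER_BYTES = 6

def parse_words(buf: bytes) -> list:
    """Split a byte string into big-endian unsigned 16-bit words."""
    if len(buf) % 2:
        raise ValueError("expected a whole number of 16-bit words")
    return list(struct.unpack(f">{len(buf) // 2}H", buf))

def inspect_set_descriptor(buf: bytes) -> dict:
    """Compare the bytes actually present with what the inner length word calls for."""
    words = parse_words(buf)
    if len(words) < 4:
        raise ValueError("too short to hold the four leading words")
    declared_len, type_code, ndims, inner_len = words[:4]
    # inner_len counts its own length word, so the inner descriptor starts at byte 6.
    needed = HEADER_BYTES + inner_len
    return {
        "declared_bytes": declared_len,
        "type_code": type_code,
        "dimensions": ndims,
        "needed_bytes": needed,
        "available_bytes": len(buf),
        "inner_words_after_length": words[4:],
        "truncated": len(buf) < needed,
    }

# The eight bytes reported in the post.
reported = bytes.fromhex("0008007300010004")
print(inspect_set_descriptor(reported))

# Hypothetical: the same bytes with the expected U64 type word appended.
print(inspect_set_descriptor(reported + bytes.fromhex("0008")))
```

With the reported bytes, the inner length word asks for 4 bytes but only 2 are present, which matches the observation that the last two bytes are missing.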


Over in the community forum, I have finally gotten around to posting the prototype for my serialization library. The prototype supports serialization to and from JSON for 9 data types and to XML (my own schema) for the same 9 (listed below).

https://decibel.ni.com/content/docs/DOC-24015

Please take a look. I've made it a priority to finish out the data type support for the framework generally and for the JSON format specifically. I will need community help to tackle any other formats: the framework is designed to make a single pair of functions on a class handle any file format, but the particular formats on the back end are not my specialty.

Please post all feedback on the ni.com site so that it stays in one place and I can follow the threads more easily.
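To make the "single pair of functions on a class handle any file format" idea concrete, here is a minimal Python sketch of one way such a design could look. It is not the linked LabVIEW library or its API; `Formatter`, `JsonFormatter`, and `Waveform` are hypothetical names chosen only for illustration.

```python
import json
from abc import ABC, abstractmethod

class Formatter(ABC):
    """Hypothetical back end: turns a dict of fields into text and back."""

    @abstractmethod
    def dumps(self, fields: dict) -> str: ...

    @abstractmethod
    def loads(self, text: str) -> dict: ...

class JsonFormatter(Formatter):
    """One concrete format; an XML back end would implement the same two methods."""

    def dumps(self, fields: dict) -> str:
        return json.dumps(fields, sort_keys=True)

    def loads(self, text: str) -> dict:
        return json.loads(text)

class Waveform:
    """Hypothetical class with a single serialize/deserialize pair."""

    def __init__(self, name: str, samples: list):
        self.name = name
        self.samples = samples

    def serialize(self, formatter: Formatter) -> str:
        # The class only lists its fields; the formatter decides the file format.
        return formatter.dumps({"name": self.name, "samples": self.samples})

    @classmethod
    def deserialize(cls, text: str, formatter: Formatter) -> "Waveform":
        fields = formatter.loads(text)
        return cls(fields["name"], fields["samples"])

fmt = JsonFormatter()
text = Waveform("ch0", [1, 2, 3]).serialize(fmt)
restored = Waveform.deserialize(text, fmt)
print(text, restored.name, restored.samples)
```

In this sketch, supporting another format means adding a new `Formatter` subclass without touching `Waveform`.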

- 7 replies


Tagged with:

- serialization
- object persistence

(and 2 more)

-

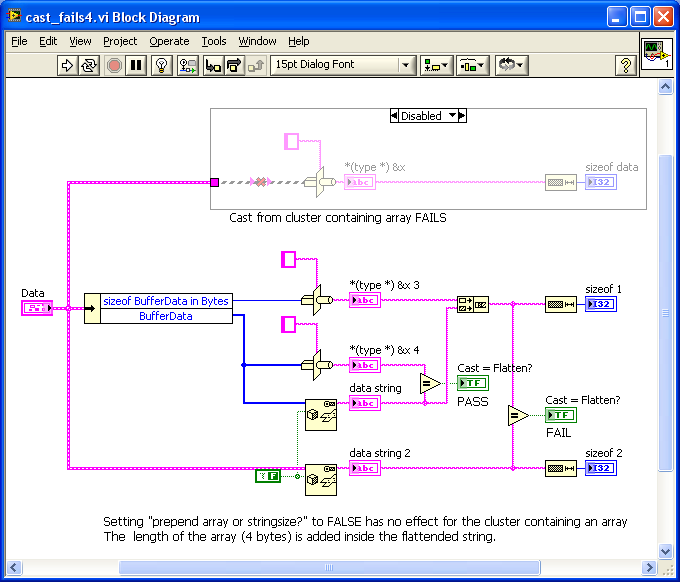

Hi,

I need to pass some data to a DLL, along with the number of bytes I'm passing. I tried using 'cast' (which should accept anything) to get the memmap, but this fails (polymorphic input cannot accept this datatype) because of an array inside the cluster.

I then switched to 'flatten to string' to get the same result, but now the array size is prepended to the array data.

I stripped the code down to the bare parts, but I don't want to unbundle all elements from my original cluster just to get the size. Attached you can find a LV8.5 version; the behaviour in LV 2010 SP1 is exactly the same.

cast_fails4.vi

thx

tnt
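The size prefix described above can be illustrated outside LabVIEW with a small Python sketch. It assumes a hypothetical cluster of one U16 followed by a 1D array of U8 (the real cluster in cast_fails4.vi is not shown here), and it assumes the usual flattened layout of big-endian data with a 4-byte element count in front of the array data. The function names are made up for this example.

```python
import struct

def flatten_cluster(header: int, samples: list) -> bytes:
    """Mimic the flattened form: U16 header, I32 element count, then the U8 data."""
    return struct.pack(">H", header) + struct.pack(">i", len(samples)) + bytes(samples)

def raw_payload(flat: bytes) -> bytes:
    """Drop the 4-byte count so only the header and array data remain."""
    header = flat[:2]
    (count,) = struct.unpack(">i", flat[2:6])
    data = flat[6:6 + count]
    return header + data

flat = flatten_cluster(0x1234, [1, 2, 3])
payload = raw_payload(flat)
print(len(flat), flat.hex())        # flattened string, including the count
print(len(payload), payload.hex())  # bytes that would go to the DLL
```

For a cluster with a single 1D array, the payload size is the flattened length minus 4 bytes. The byte order the DLL expects still has to be checked separately.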

- 9 replies


Tagged with:

- cast
- flatten to string

(and 2 more)
