-

Posts

264 -

Joined

-

Last visited

-

Days Won

25

Posts posted by GregSands

-

-

Have you looked at using a 3D Picture control? It shouldn't be too hard to set up a 2D graph using it, and it has a "Render To Image" method which seems very fast.

Overall I agree, graphical output in LabVIEW is fragmented and inconsistent (and ultimately frustrating).

-

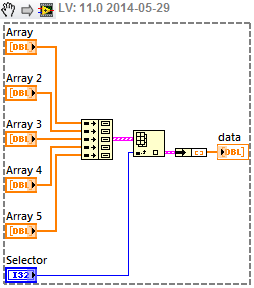

In fact, you can even use an XNode version of the OpenG code written by Gavin Burnell, and he has also written a few more Array XNodes including one which replicates your code.

-


-

-

My computer has a 6-core processor, so I was wondering if maybe I could use one of those processor cores for the same purpose one would use a real-time OS. Is there any way I could install something that would run at kernel level and make Windows temporarily behave as if there were only five cores, giving my VI exclusive control of the remaining core to use for timing? (or would other USB devices, or the USB controller itself, add too much latency?)

Just to answer this original question, yes it must be possible. I have an Aerotech stage controller which installs a Real-Time extension that reserves one core, and Windows thinks it has one less. I don't know how this is done, nor if it's a sensible solution to your problem - probably not would be my guess.

Just thinking further, I wonder if LabVIEW RT could be set to run on one core (or several) of a Windows machine? I wouldn't be surprised if NI had tried this at some stage, and now it's easy to get a dozen cores or more.

-

Hope you all realize AQ is just using this thread to find out which doors might need to be closed....

Actually, I think these types of threads (and there have been a few lately, thanks flarn2006) are good to have on LAVA, even if I'm unlikely to want to use the details provided. They give more of a picture of the LabVIEW that is public by seeing bits of the scaffolding and ropes and pulleys holding it all together.

The responses also confirm a sense I have that NI is becoming more open about the design and implementation decisions and tradeoffs that define LabVIEW, and that in itself provides a more stable and reliable product. And hopefully on LAVA it's parked enough away from the main flow to be seen only by those experienced enough to know how to handle it (i.e. very carefully!).

-


-

-

Keep It Simple. If it has a block diagram (or at least compiled code), it's a VI.

If a VI is run at the top level, it's a top-level-VI.

If a VI is called by another VI, it's a sub-VI.

If a VI has a front panel that is presented to the user, it's a UI-VI.

Or an interactive-VI. Or a user-VI.

But they're all VIs; the other names just give hints as to how the VI is used.

-


-

-

- Popular Post


Silly David, just put a mirror beside your banana and then you're good to go...

-


-

-

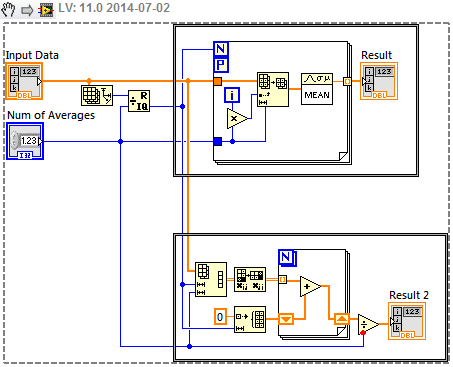

Couple of obvious things to start with.

- The equation looks rather like a convolution, which is equivalent to a multiplication in the Fourier domain. So at the very least, you need to take the FFT of your image, multiply it by your Exponential array, and then compute the inverse FFT.

- In your upper For loop (which I presume is the subVI with an arrow on it) it looks as though you're trying to compute the matrix corresponding to the first exponential. Your equation defines an N x N array (not k x l array) in which k and l vary from 0 to N-1 (effectively they are a frequency domain analog of your image pixel positions, and the image should also be of size N x N). Your code does something quite different. You can also move many of the constants (anything not changing during the loop iteration) outside the loop, including the final Exponential, which will work just as happily, and usually faster, on a 2D array.
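As a rough text-language illustration of the two points above (the thread itself is about LabVIEW diagrams, and the equation in the image is not reproduced here), the Python sketch below checks that a circular convolution equals the inverse transform of the product of the transforms, and builds an N x N array with `k` and `l` running from 0 to N-1 with the loop-invariant constant computed once. The function names, the naive 1D DFT, and the exponential form with its `alpha` parameter are all assumptions made for illustration; a real implementation would use a proper 2D FFT.

```python
import cmath
import math

def dft(x, inverse=False):
    """Naive O(N^2) discrete Fourier transform, or its inverse."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        s = sum(x[m] * cmath.exp(sign * 2j * cmath.pi * k * m / n)
                for m in range(n))
        out.append(s / n if inverse else s)
    return out

def circular_convolve_direct(a, b):
    """Circular convolution computed straight from the definition."""
    n = len(a)
    return [sum(a[m] * b[(i - m) % n] for m in range(n)) for i in range(n)]

def circular_convolve_dft(a, b):
    """Same result via the Fourier domain: transform, multiply, invert."""
    product = [p * q for p, q in zip(dft(a), dft(b))]
    return [v.real for v in dft(product, inverse=True)]

def build_kernel(n, alpha):
    """Hypothetical N x N exponential array; k and l both run 0..N-1.

    The exponent used here is a placeholder, not the equation from the
    original post. The constant factor is computed once, outside the loops.
    """
    scale = -alpha / (n * n)
    return [[math.exp(scale * (k * k + l * l)) for l in range(n)]
            for k in range(n)]
```

For example, `circular_convolve_direct(a, b)` and `circular_convolve_dft(a, b)` should agree to within rounding error for any two equal-length lists.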

-


-

"Magnitude" only makes sense in terms of a Complex image, and there is a VI for extracting that. The output image is a grayscale U8 image, and so doesn't have a magnitude. Can you explain more of what you're trying to do?

-

Even though the Help docs are not very clear, I'm pretty sure that the Inverse FFT takes a complex Src image and returns a grayscale Dst image. So does it work if you change im1 to be a Complex image (and use the ArrayToComplexImage VI), and change im2 to be a U8 image (though I think this will get automatically set)?

-


-

-

Finally, I remember seeing Stephen post somewhere that property nodes, due to their inherent case structure, prevent LabVIEW from optimizing the code -- even if the VI is set to inlined. For this reason alone I avoid them in any code I expect to be running at high-priority. For UI, however, I use them extensively.

Thanks - I do almost exclusively use them in UI code, but will be sure to be careful otherwise.

-

Can I just add that when using classes across multiple targets (Windows, RT, FPGA), the issues are squared, not doubled! Thank you for the checklist (let's call it LVOOP-OCD) which I'll run my current project through soon. Particularly removing the class mutation histories, which are a waste to have if I have no code that could ever use a previous version. Why isn't there a class setting to never retain mutations? For some situations (e.g. pre-release) you're only ever interested in the current version. Or perhaps to retain only mutations related to major version number changes.

Jack, could you elaborate on your avoidance of typedefs? I'm in the "but ..." camp, and though I don't tend to typedef primitives, I do use typedef clusters (not strict) throughout my classes. What issues arise? I haven't had any major problems to date (except with the RT object cache getting out of sync with the Windows object cache), and like having the "certainty" of the same data structure everywhere. Is there a method for reliably using them? Or am I best to convert each typedef cluster into its own class - and is that any better anyway?

The other smaller question is LVOOP property nodes - I haven't noticed any problems at all with using them, and I'm still on LV2012 (may jump to LV2014 in a month). Is something unsavory lurking beneath the surface?

-

I've just written about an interesting RT issue I've experienced. Perhaps keep the comments over there.

-

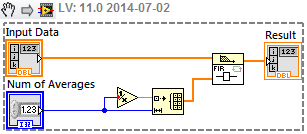

That's not quite a moving average, rather a down-sampling - i.e. Filtered Array is shorter than Input Data. Your first solution is pretty good - just speed it up with a Parallel For Loop. An alternative is to reshape into a 2D array. Both these come out roughly the same speed, about 5x faster on my machine than your solutions above.

If you want a true Moving Average (where the result is the same length as the original) I think this suggestion from the NI forums using an FIR filter is nice and simple, although you might look carefully at the first Num values if that's important.
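To make the distinction concrete outside LabVIEW, here is a minimal Python sketch of both operations. The function names and the handling of a trailing partial block are assumptions for illustration, not taken from the VIs in the thread. The second function mimics an FIR filter with `num` equal coefficients of `1/num` and a zero initial state, which is why its first `num - 1` outputs are computed from fewer real samples.

```python
def block_average(data, num):
    """Down-sample: average each consecutive block of `num` samples.

    The output is shorter than the input; a trailing partial block
    is dropped (an assumption made for this sketch).
    """
    usable = len(data) - len(data) % num
    return [sum(data[i:i + num]) / num for i in range(0, usable, num)]

def moving_average_fir(data, num):
    """Moving average with the same length as the input.

    Behaves like an FIR filter with zero initial state, so the first
    num - 1 outputs are biased toward zero (start-up transient).
    """
    out = []
    running = 0.0
    for i, x in enumerate(data):
        running += x
        if i >= num:
            running -= data[i - num]  # drop the sample leaving the window
        out.append(running / num)
    return out
```

With six input samples and `num = 2`, `block_average` returns three values while `moving_average_fir` returns six, the first of which is affected by the start-up transient.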

-


-

-

-

Actually, on my machine it's the same speed as the XNode (plus/minus OS jitter), even for >10M iterations. I had run it only once, which showed Build Cluster Array marginally faster, but over several runs they end up more or less the same. Maybe check the debugging/compile options.

-

You could use Build Cluster Array:

That runs faster than the XNode.

I'm torn because I like this XNode, but won't add it to my reuse tools due to the fact that it is an XNode. Which is why I tried implementing a solution just as easy to use but with OpenG.

My experience is that for a well-written, and fairly simple, XNode (which this seems to be), there is almost never a practical issue with using them. I've even used them on RT. Yes there may be dragons, but they can usually be tamed.

-


-

-

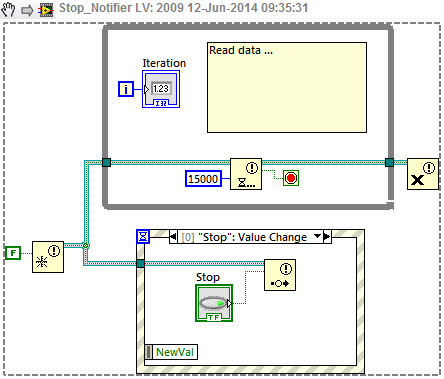

Move Register Events outside the loop - it should only be done once.

I'd just be guessing to explain why this works, but perhaps the re-registration will not contain a previously generated Event (in the case of no Delay).

-

I have used multiple event loops on one occasion, certainly not regularly. The main reason for doing so was that the VI had several separate groups of controls ("components") which were largely independent, and at the time it made sense to have separate event loops to handle each component, simply for ease of programming and keeping the handling of related controls together.

But I wouldn't be too concerned not to have that option, especially if there were a bunch of other improvements, such as being able to group events in some way (otherwise I can have an Event menu which is several screens tall), or to import/export/merge event handling functions. Or if adding a control (or group of controls) to the FP could also add a set of "default" events to manage those controls, which would be kind of like using an XControl, but without needing to create it (and handle all the workarounds needed to use it).

-

... If the connector pane and front panel could be decoupled, then most VIs wouldn't even need a front panel ... Maybe post on the LabVIEW Idea Exchange ... I think VIs should have n front panels (where n could be 0) ...

It's already on the Idea Exchange - in fact, these two points are exactly what my idea suggested a couple of years ago.

-

-

I have two zip files from that era which look promising (i.e. they have FFTW in their titles!).

FFTW_1D_2D__forward_and_inverse.zip

The Multicore Toolkit is another option for fast, parallelized FFTs. http://sine.ni.com/nips/cds/view/p/lang/en/nid/210525

-

I am a compulsive CTRL+S and CTRL+E, CTRL+W.

Which is all well and good - as long as I wasn't actually on the FP, or worse still, in the Project window (which can take a long time to show the Files view (Ctrl-E)).

-

@Jordan: In my mind it's not so much "removing" the FP, rather, only creating it if and when needed. That could still be completely automated (even customized with the sort of hooks LV currently provides) - certainly not needing to manually build a UI or match tags. I simply feel that having the BD as the default starting point for a VI provides a better programming perspective than filtering what I want to do through the FP. I'm not anti-FP, just saying the FP serves the BD, and not the other way around.

"Separate Compiled Code From Source"? (LV 2014)

in Development Environment (IDE)


I've been using "separate compiled code" in 2012 for some time, and for the most part it works without a problem. The only thing I recall is getting out of sync with an RT deployment, but clearing the cache and starting over got things working. One point not yet mentioned is that it's also very useful for code that is used in both 32 and 64-bit LabVIEW.