Posts: 43

Everything posted by Ryan Vallieu

-

Ubuntu daqmx / miss: ni-daqmx-labview-2021-support

Ryan Vallieu replied to JimPanse's topic in Linux

We just found that the NI RHEL 8 DAQmx repository is also missing this support package, and it showed the same issue at first: the DAQmx VIs did not appear on the palette, even though the DAQmx package reported that it was installed correctly. Once I found this post and told my sysadmin about it, he was able to download the package and get DAQmx working with LabVIEW 2022.

pxeboot RHEL 7.9 with LabVIEW 2019, using NFS - no DAQmx driver support

Ryan Vallieu replied to Ryan Vallieu's topic in Linux

OK, this seems to have worked, but the final solution has me baffled.

We built a new initramfs and added /etc/dracut.conf.d/newsystem-dracut.conf containing a space-delimited list of the NI modules to include. We built this list from the lsmod output of the working disk-booted RHEL 7.9 system, which has no problem with the hardware drivers and runs the code perfectly well. We verified with lsinitrd /boot/initramfs-newsystem.img that those drivers were in the image, then PXE booted the system with the new initramfs. It again failed to load the DAQmx driver.

Then we looked at the list of loaded drivers on my OTHER disk-booted system and noticed that the PXE boot initramfs image was missing the ni-serial module. We went to the RHEL 7.9 system, did a yum install of niserial, added it to the dracut.conf.d file, rebuilt the initramfs, and took a new rsync copy of the file system for the NFS export. PXE boot again... and this time the system WORKED! I will PXE boot again to verify.

I am very confused about why this works, since the original disk-booted RHEL 7.9 image DOES NOT have the niserial module installed, yet the EXE runs fine there and can call DAQmx. But I'll take the win.
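The comparison that turned up the missing ni-serial module can be automated. A minimal C sketch, assuming both module lists were captured as text in /proc/modules or lsmod format (the module name is the first space-separated field of each line); `print_missing_modules` and its helpers are hypothetical names. It reports every module present on the disk-booted reference system that is absent from the PXE-booted one.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Copy the first space/tab-delimited field of a line into name. */
static void first_field(const char *line, size_t len, char *name, size_t namesz)
{
    size_t n = 0;
    while (n < len && line[n] != ' ' && line[n] != '\t' && n + 1 < namesz) {
        name[n] = line[n];
        n++;
    }
    name[n] = '\0';
}

/* Return 1 if a module called name is the first field of any line in list. */
static int has_module(const char *list, const char *name)
{
    const char *line = list;
    while (*line) {
        const char *end = strchr(line, '\n');
        size_t len = end ? (size_t)(end - line) : strlen(line);
        char field[64];
        first_field(line, len, field, sizeof field);
        if (strcmp(field, name) == 0)
            return 1;
        if (!end)
            break;
        line = end + 1;
    }
    return 0;
}

/* Print (to out, if not NULL) each module in reference missing from target.
 * The lsmod header line ("Module Size Used by") is skipped.
 * Returns the number of missing modules. */
static int print_missing_modules(const char *reference, const char *target, FILE *out)
{
    const char *line = reference;
    int missing = 0;
    assert(reference != NULL && target != NULL);
    while (*line) {
        const char *end = strchr(line, '\n');
        size_t len = end ? (size_t)(end - line) : strlen(line);
        char field[64];
        first_field(line, len, field, sizeof field);
        if (field[0] != '\0' && strcmp(field, "Module") != 0 &&
            !has_module(target, field)) {
            if (out)
                fprintf(out, "%s\n", field);
            missing++;
        }
        if (!end)
            break;
        line = end + 1;
    }
    return missing;
}
```

Running it with the disk-booted list as `reference` and the PXE-booted list as `target` prints the candidates to add to the dracut configuration.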

pxeboot RHEL 7.9 with LabVIEW 2019, using NFS - no DAQmx driver support

Ryan Vallieu replied to Ryan Vallieu's topic in Linux

Ah, we might have missed a step, and this may be the solution: https://access.redhat.com/solutions/47028 (How can I ensure certain kernel modules are included in the initrd or initramfs in RHEL?)
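One way to act on this is to generate the dracut configuration from the modules loaded on the working system. A minimal C sketch, assuming dracut's `add_drivers+=" ... "` option (a space-separated list of module names without the .ko suffix, as described in dracut.conf(5)) and input in /proc/modules or lsmod format; `build_add_drivers` is a hypothetical name.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Build a dracut option line such as:  add_drivers+=" nikal ni488k "
 * from text in /proc/modules or lsmod format, where the module name is
 * the first space-separated field of each line. Only names starting with
 * "ni" or "Ni" are kept; this also matches unrelated modules such as
 * nilfs2, so review the result. Returns the number of modules written,
 * or -1 if out is too small. */
static int build_add_drivers(const char *modules_text, char *out, size_t outsz)
{
    const char *line = modules_text;
    size_t used;
    int count = 0;

    assert(modules_text != NULL && out != NULL);
    used = (size_t)snprintf(out, outsz, "add_drivers+=\" ");
    if (used >= outsz)
        return -1;

    while (*line) {
        const char *end = strchr(line, '\n');
        size_t len = end ? (size_t)(end - line) : strlen(line);
        size_t name_len = 0;

        /* The module name ends at the first space (or end of line). */
        while (name_len < len && line[name_len] != ' ')
            name_len++;

        if (name_len >= 2 &&
            (strncmp(line, "ni", 2) == 0 || strncmp(line, "Ni", 2) == 0)) {
            int n = snprintf(out + used, outsz - used, "%.*s ", (int)name_len, line);
            if (n < 0 || (size_t)n >= outsz - used)
                return -1;
            used += (size_t)n;
            count++;
        }
        if (!end)
            break;
        line = end + 1;
    }

    if (outsz - used < 2) /* room for the closing quote and the NUL */
        return -1;
    out[used++] = '"';
    out[used] = '\0';
    return count;
}
```

The resulting line would go into a file under /etc/dracut.conf.d/, after which the initramfs is rebuilt and its contents checked with lsinitrd.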

pxeboot RHEL 7.9 with LabVIEW 2019, using NFS - no DAQmx driver support

Ryan Vallieu replied to Ryan Vallieu's topic in Linux

While checking the PXE-booted system for failures during startup, dmesg showed:

nikal module verification failed: signature and/or required key missing

According to NI, NI-KAL is driver software that supports the Linux driver architecture.

Edit: According to lsmod it actually did load, so this must just be a warning.
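That dmesg line is what the kernel logs when an unsigned module is loaded on a kernel that checks module signatures but does not enforce them: the module still loads and the kernel is marked as tainted. A minimal C sketch for confirming this from /proc/sys/kernel/tainted, assuming the bit assignments in the kernel's "Tainted kernels" documentation (bit 12 for an externally built module, bit 13 for an unsigned module); `describe_taint` and `read_taint` are hypothetical helpers.

```c
#include <assert.h>
#include <stdio.h>

/* Taint bits from the kernel's tainted-kernels documentation. */
#define TAINT_OOT_MODULE      (1UL << 12) /* 'O': externally built module loaded */
#define TAINT_UNSIGNED_MODULE (1UL << 13) /* 'E': unsigned module loaded */

/* Returns 1 if the mask says an unsigned module was loaded, else 0,
 * and prints a short summary to out (if out is not NULL). */
static int describe_taint(unsigned long mask, FILE *out)
{
    int unsigned_loaded = (mask & TAINT_UNSIGNED_MODULE) != 0;
    if (out) {
        fprintf(out, "out-of-tree module: %s\n", (mask & TAINT_OOT_MODULE) ? "yes" : "no");
        fprintf(out, "unsigned module:    %s\n", unsigned_loaded ? "yes" : "no");
    }
    return unsigned_loaded;
}

/* Read the current taint mask; returns 0 on success, -1 on failure. */
static int read_taint(unsigned long *mask)
{
    FILE *f;
    int ok;
    assert(mask != NULL);
    f = fopen("/proc/sys/kernel/tainted", "r");
    if (!f)
        return -1;
    ok = fscanf(f, "%lu", mask) == 1;
    fclose(f);
    return ok ? 0 : -1;
}
```

A set "unsigned module" bit together with nikal showing up in lsmod is consistent with the warning being harmless.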

We are attempting to PXE boot a system based on our working RHEL 7.9 LabVIEW 2019 SP1 installation and load the file system over NFS, because our systems can't have hard drives for security reasons. The problem I am having now is that the NFS image doesn't seem to be loading the NI DAQmx driver.

Setup: PXIe-8135 controller, LabVIEW 2019 SP1. The DAQmx driver and code work great when the system boots normally from its hard drive.

We created the NFS image that the system uses with rsync, copying everything except /proc, /sys, and /home/installers (where we keep package and ISO images on our disconnected systems in case we need to install a package we forgot). The system boots into RHEL 7.9 GNOME, and autologin and autostart work to launch our LabVIEW EXE.

The issue is that when my DAQmx-based code runs, it reports that the DAQmx driver has basically not been installed: I get error -52006 when DAQmx Create Task.vi is called. When I run nidaqmxconfig --export /tmp/ni-system on the system booted from the installed hard drive, I get the DAQmx cards listed in slots 6, 7, 8, and 9. When I PXE boot with the NFS image as the file system, nidaqmxconfig --export /tmp/ni-system shows:

Failed Error -52006 The requested resource was not located.

Any insight? I also posted over on NI.com, but so far no hits.

Edit to add: We just tried another approach. A coworker used the disk-booted FSP-4 system to collect every module reported by lsmod whose name started with "ni" or "Ni", and created a file for each one in /etc/modules-load.d/ (*.conf) in the rootfs on the TFTP boot server, since we saw suggestions that this can be used to load drivers on systemd systems. So we had files like ni488k.conf and nikal.conf in /etc/modules-load.d/. This did not solve the problem.

-

Since I had time while waiting for NI to figure out why the same code behaves differently when called through the embedded run-time engine on Linux than as a normal LabVIEW run-time EXE, I tackled replacing the C code in the .SO that the LabVIEW CLFN calls for this driver. That removes the need to compile the code on the target and removes the extra issues discussed above. Thanks for the impetus to finish that. The TCP reads were much easier to implement and maintain, and they won't lock up my code if there is an error on the server side.

-

I think they meant that if you read the string out into your program, you are responsible for allocating the memory. The readString function called in the union code does allocate memory for the pointer, but I agree that I don't see the memory being deallocated when done. What I meant by StrLen being used to allocate was using its result with Build Array and feeding that into MoveBlock. I will definitely bring this up with the developer who gave me the library. Moving the communications to a LabVIEW-only solution shouldn't be too difficult; it just costs time at this point.
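If the library allocates the string inside readString and nothing frees it, every string read leaks. One common way to keep the CLFN side simple is a small C wrapper that copies the string into a buffer LabVIEW allocated and then releases the library's copy. A minimal sketch, assuming readString allocates with malloc (so free is the matching call; check the library source); `value_take_string` is a hypothetical name, and the Value layout is taken from the header quoted in this thread, with the two enums trimmed to what the sketch needs.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

typedef enum { noValueType = 0, integer = 1, floating = 2, charString = 3, uLong = 4 } valueType;
typedef int valueLabel; /* stand-in for the full valueLabel enum (also int-sized) */

typedef struct Value {
    union { long l; unsigned long ul; double d; char *s; } v;
    long order;
    valueType type;
    valueLabel label;
} Value;

/* Copy v->v.s into dst (always NUL-terminated, truncated if needed),
 * free the library-allocated string, and clear the pointer so it cannot
 * be freed twice. Returns the full length of the original string, or -1
 * if v does not hold a string. Assumes the string came from malloc. */
static long value_take_string(Value *v, char *dst, size_t dstlen)
{
    size_t len;
    assert(v != NULL && dst != NULL && dstlen > 0);
    if (v->type != charString || v->v.s == NULL)
        return -1;
    len = strlen(v->v.s);
    if (len < dstlen) {
        memcpy(dst, v->v.s, len + 1);
    } else {
        memcpy(dst, v->v.s, dstlen - 1);
        dst[dstlen - 1] = '\0';
    }
    free(v->v.s);
    v->v.s = NULL;
    return (long)len;
}
```

On the LabVIEW side the CLFN would then pass a string configured as a C String Pointer with a minimum size, plus that size.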

-

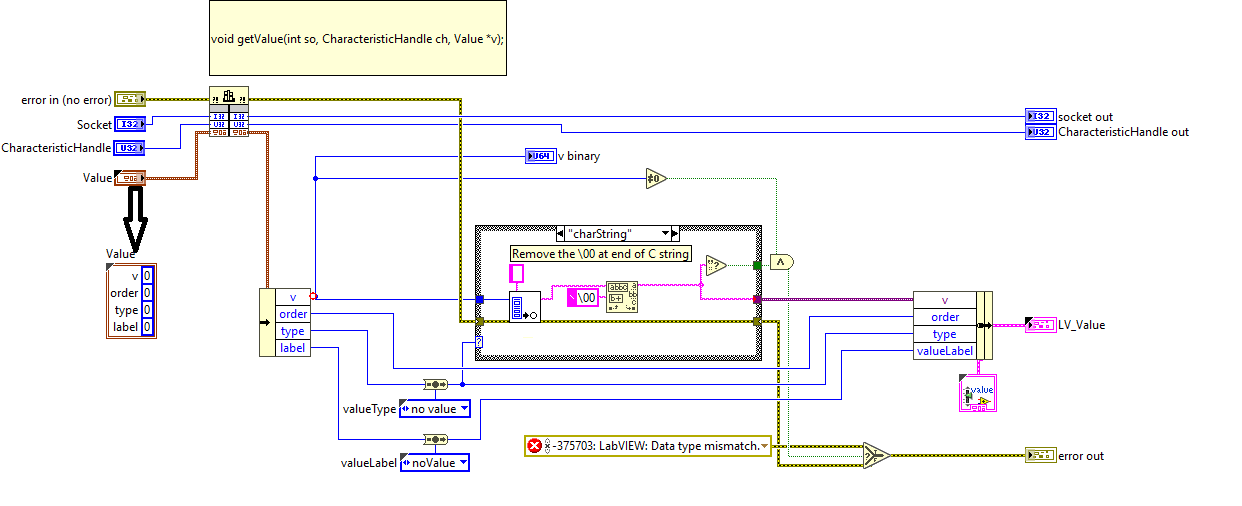

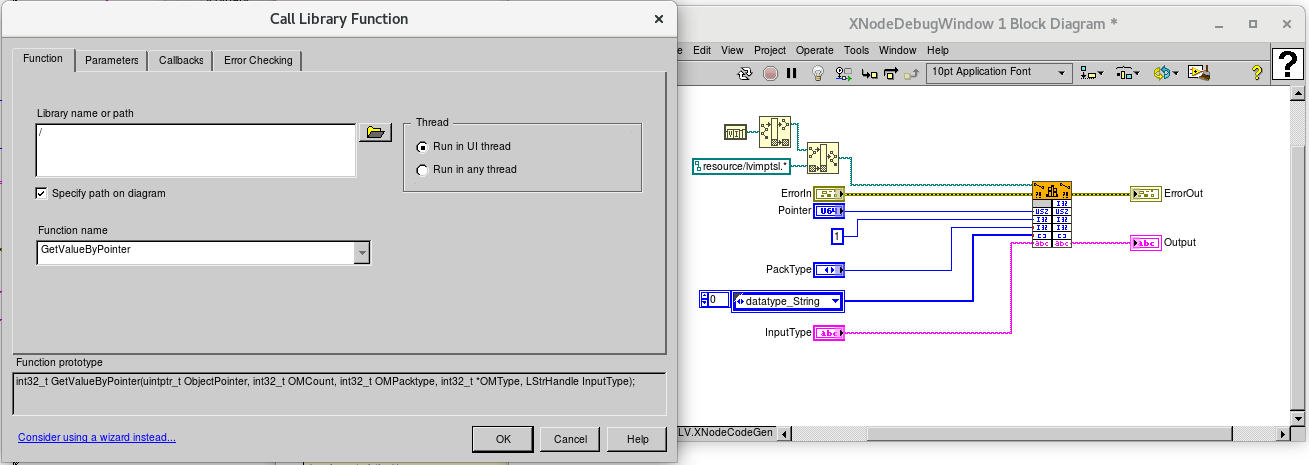

Maybe I am completely misunderstanding here... The 'v' in the string case of the switch is just a pointer to the first character of the string in memory. The nice thing about GetValueByPointer.vi is that you don't need to wire anything in or know the size of the string; you just wire in an empty string to set the type.

When I call getValue, which happens upstream of dereferencing the pointer it returns when the switch detects the string type, I pass getValue a cluster of elements in which 'v' is a U64, since that is the widest the data can be given the types switched into v. When valueType drives the decoding and says the data in the U64 v is a pointer, I use that pointer to read the characters. GetValueByPointer handles the memory allocation for dereferencing the string; in the StrLen and MoveBlock case, the StrLen result is used to allocate the memory for the string.

After that I am not using the original struct in the LabVIEW code: I have converted the data into a variant so I can convert it back in the calling LabVIEW code based on valueType. I am not trying to reuse the Value structure from the C call. Am I missing something?

The function within getValue that handles the data type does perform the malloc: when valueType is charString, it calls a readString() function that allocates memory to hold the string.

-

I'm dumb 😒 and was looking at the output in the wrong spot in my code when I first tried to validate the StrLen and MoveBlock code you sent. ShaunR, your version works as well as GetValueByPointer does. I am just feeding an empty string into GetValueByPointer to define the string type for the return, and it returns the whole string, including the \00 at the end.

-

This is the XNode code that is generated for a string. I wondered myself why the other system's owner wanted to do things that way; I guess it's because this is the API they provide to all the other systems' developers. I certainly am talking to the remote system in another process just by using the TCP refnum, building the packets, and interpreting the incoming message packets. Maybe I will rework this piece in the future, but it is working now that I include lvimptsl.so in my support folders.

-

I got the same kind of strange behavior. With the pointer coming from 'v' in the union as a U64, GetValueByPointer.xnode works, but your example points to the wrong memory block and does not give the same answer. I do recognize WHERE the text came from, but not what alignment correction would be needed to get StrLen and MoveBlock to point to the correct memory location.
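To see where each field of Value actually sits in memory, and therefore what a cluster passed through the CLFN has to mirror, the compiler can report the offsets directly. A minimal sketch using offsetof, with the Value definition from this thread and the enums trimmed; it assumes the enums are int-sized, which is GCC's default on x86-64 Linux (no -fshort-enums). On x86-64 Linux with GCC it reports offsets 0, 8, 16, and 20 and a total size of 24: an 8-byte union, an 8-byte long, then two 4-byte enums. If the cluster on the LabVIEW side doesn't follow the same layout, the U64 read for 'v' will not be the pointer the library stored.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

typedef enum { noValueType = 0, integer = 1, floating = 2, charString = 3, uLong = 4,
               maxValueType, EndOfValTypeList = 9999 } valueType;
typedef enum { noValue = 0, EndOfValList = 9999 } valueLabel; /* trimmed: size depends only on the range */

typedef struct Value {
    union { long l; unsigned long ul; double d; char *s; } v;
    long order;
    valueType type;
    valueLabel label;
} Value;

/* Print the byte offset and size of each member, then the struct size. */
static void print_value_layout(FILE *out)
{
    assert(out != NULL);
    fprintf(out, "v     @ %zu (size %zu)\n", offsetof(Value, v), sizeof(((Value *)0)->v));
    fprintf(out, "order @ %zu (size %zu)\n", offsetof(Value, order), sizeof(long));
    fprintf(out, "type  @ %zu (size %zu)\n", offsetof(Value, type), sizeof(valueType));
    fprintf(out, "label @ %zu (size %zu)\n", offsetof(Value, label), sizeof(valueLabel));
    fprintf(out, "total   %zu\n", sizeof(Value));
}
```

On such a system a mirroring cluster would be a U64, a 64-bit integer, then two 32-bit integers; a 32-bit build changes the offsets, so the cluster would have to change with it.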

-

Ahhhhh... https://knowledge.ni.com/KnowledgeArticleDetails?id=kA00Z0000019ZANSA2&l=en-GB Adding ..\resource\lvimptsl.so to the project, and then adding it to Always Included as described in the link so that it lands in a \resource\ support folder in the build directory, allowed the EXE to run properly. Of course, now it is going to bug me why StrLen wasn't working correctly...

-

I find that GetValueByPointer.vi works (in the LabVIEW development environment) when I treat the U64 returned in the 'v' union value as a pointer. But when I try the same thing with StrLen, passing the U64 as an unsigned pointer-sized integer, StrLen appears not to work: it returns a size of only 2 bytes when I expect 7. Then, when I feed the U64 pointer and a size of 2 into MoveBlock, it doesn't return any characters.
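For reference, this is what the StrLen and MoveBlock pair is expected to do with a pointer that arrives as a U64: convert the integer back to a char pointer, measure the string with strlen (which does not count the terminating NUL), and copy that many bytes, plus one if you want the NUL. A minimal C sketch; `copy_from_u64_pointer` is a hypothetical name. A length of 2 for a 7-character string means the bytes at that address are not the expected string, so the pointer value or the call configuration is off rather than strlen miscounting.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Treat a 64-bit integer (e.g. the 'v' union read as U64) as a char *,
 * then do what StrLen + MoveBlock do: measure the C string and copy it,
 * including its terminating NUL, into dst. Returns the length, or -1 if
 * dst is too small. Only valid if addr really holds a string address. */
static long copy_from_u64_pointer(uint64_t addr, char *dst, size_t dstlen)
{
    const char *src = (const char *)(uintptr_t)addr;
    size_t len;
    assert(src != NULL && dst != NULL);
    len = strlen(src);              /* StrLen: bytes before the NUL */
    if (len + 1 > dstlen)
        return -1;
    memcpy(dst, src, len + 1);      /* MoveBlock: raw byte copy */
    return (long)len;
}
```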

-

That info about long being 64 bits on 64-bit Linux cleared up a bunch of issues with the other function calls provided to me. The legacy system being interfaced is a Solaris system that returns 32-bit long values, while the sendLong function called on the PXIe CentOS system was sending 64-bit longs to the Solaris system, so every message carried 32 extra bits. I've made the API owner aware that long is not guaranteed to be 32 bits on every system, so the API must be reworked.
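A portable fix is to stop sending `long` and put an explicitly 32-bit value on the wire. A minimal sketch using int32_t with htonl/ntohl, assuming the Solaris side expects 4-byte integers in network (big-endian) byte order; the original API's byte order isn't stated here, so confirm it before relying on this. `encode_i32` and `decode_i32` are hypothetical names, not part of the other developer's library.

```c
#define _POSIX_C_SOURCE 200809L
#include <arpa/inet.h> /* htonl, ntohl */
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Write a 32-bit signed value into 4 bytes in network (big-endian) order,
 * regardless of whether long is 32 or 64 bits on this platform. */
static void encode_i32(int32_t value, unsigned char out[4])
{
    uint32_t be = htonl((uint32_t)value);
    assert(out != NULL);
    memcpy(out, &be, sizeof be);
}

/* Inverse of encode_i32. */
static int32_t decode_i32(const unsigned char in[4])
{
    uint32_t be;
    assert(in != NULL);
    memcpy(&be, in, sizeof be);
    return (int32_t)ntohl(be);
}
```

A sender would then write the 4-byte buffer to the socket instead of sizeof(long) bytes, so the message size no longer depends on the platform.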

-

valueLabel is a typedef enum:

```c
typedef enum {
    noValue = 0, InstrumentServiceName, NetworkPortNumber, ComponentID, ScanRate,
    Slot, NumChannels, ChannelNumber, CardNumber, FirstChannelNum,
    IEEE488_Address, VXI_Address, SerialNumber, SensorID, ScanListItem,
    Gain, Filter, Excitation, Cluster, Rack,
    PCUtype, EUtype, RefType, PCUpressure, MaxPressure,
    AcqMode, CalTolerance, CalPressure1, CalPressure2, CalPressure3,
    CalPressure4, CalPressure5, DataFormat, ThermoSensorType, ThermoSensorSubType,
    SensorType, MaxValue, SwitchState, OrderForAction, RcalFlag,
    CalDelayTime, CalShuttleDelayTime, nfr, frd, msd,
    MeasSetsPerSec, ServerName, RefPCUCRS, CalMode, ZeroEnable,
    NullEnable, ZeroMode, RezeroDelay, SensorSubType, ModuleType,
    ModuleMode, MeasSetsPerTempSet, CardType, Threshold, ControlInitState,
    ControlPolarity, StateEntryLogicType, StateEntryDelay, StateExitLogicType, StateExitDelay,
    TriggerType, TriggerEdge, ScansPerRDB, InterruptLevel, CSR_Address,
    Program_Address, ScannerRange, Hostname, ConnectVersion, From,
    To, ShuntValue, ValveConfig, NextToLastLabel, lastLabel,
    EndOfValList = 9999
} valueLabel;
```

and valueType is also defined as an enum:

```c
typedef enum {
    noValueType = 0,
    integer = 1,
    floating = 2,
    charString = 3,
    uLong = 4,
    maxValueType,
    EndOfValTypeList = 9999
} valueType;
```
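Because these are plain C enums, each valueType or valueLabel field occupies an int (4 bytes with GCC's defaults on x86-64 Linux), so the matching element on the LabVIEW side needs a 32-bit representation. A minimal sketch that checks the size at compile time and maps each valueType code to the union member holding the data, which is roughly the decision the LabVIEW decoding has to make; `value_type_name` is a hypothetical helper.

```c
#include <assert.h>

typedef enum {
    noValueType = 0, integer = 1, floating = 2, charString = 3, uLong = 4,
    maxValueType, EndOfValTypeList = 9999
} valueType;

/* Fails to compile if the enum is not int-sized (e.g. built with -fshort-enums). */
_Static_assert(sizeof(valueType) == sizeof(int), "valueType is not int-sized");

/* Map a valueType code (read into a 32-bit integer on the LabVIEW side)
 * to the member of Value's union that holds the data for that type. */
static const char *value_type_name(int code)
{
    assert(code >= 0); /* every defined valueType code is non-negative */
    switch (code) {
    case integer:    return "v.l (long)";
    case uLong:      return "v.ul (unsigned long)";
    case floating:   return "v.d (double)";
    case charString: return "v.s (char *)";
    default:         return "no value";
    }
}
```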

-

I have code from another developer that lets me interface with a server to get configuration data. I have compiled the C code into a .SO on my Linux system without errors, but in writing my LabVIEW CLFN I am unsure what to do with the pointer to a struct containing a union that is used to pass the data back.

The function in the C file:

```c
void getValue(int so, CharacteristicHandle ch, Value *v)
{
    long longValue;
    unsigned long ulongValue;
    double doubleValue;
    char * c_ptr = 0;
    long msgType = g_val;

    sendLong( so, msgType );
    sendCHARhandle( so, ch );

    readLong( so, &longValue );
    v->order = longValue;
    readLong( so, &longValue );
    v->type = longValue;
    readLong( so, &longValue );
    v->label = longValue;

    switch( v->type )
    {
        case integer:
        {
            readLong( so, &longValue );
            v->v.l = longValue;
            break;
        }
        case uLong:
        {
            readLong( so, &ulongValue );
            v->v.ul = ulongValue;
            break;
        }
        case floating:
        {
            readDouble( so, &doubleValue );
            v->v.d = doubleValue;
            break;
        }
        case charString:
        {
            readString( so, &c_ptr );
            v->v.s = c_ptr;
            break;
        }
    }
    return;
}
```

The definition of "Value" from another header file:

```c
/* Define a type to hold the value and type information for
 * a characteristic. */
typedef struct Value
{
    union /* This holds the value. */
    {
        long l;
        unsigned long ul;
        double d;
        char *s; /* If a string user is responsible for
                  * allocating memory. */
    } v;
    long order;
    valueType type;   /* Int,float, or string */
    valueLabel label; /* Description of characteristic -- what is it? */
} Value;
```

I'm a newb at CLFNs. I've got the primitive data types working for my other calls, but I am not sure how to configure the CLFN for this one. Is this possible to do with the CLFN?
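One way to make this call CLFN-friendly is a thin C wrapper that exposes only scalars and a string buffer, so LabVIEW never has to describe the union. A minimal sketch under that assumption; `flatten_value` is a hypothetical name, the enums are trimmed, and a real exported wrapper would first call `getValue(so, ch, &tmp)` (not shown, since CharacteristicHandle's definition isn't in the thread) and then pass `&tmp` here.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

typedef enum { noValueType = 0, integer = 1, floating = 2, charString = 3, uLong = 4 } valueType;
typedef int valueLabel; /* stand-in for the full valueLabel enum (also int-sized) */

typedef struct Value {
    union { long l; unsigned long ul; double d; char *s; } v;
    long order;
    valueType type;
    valueLabel label;
} Value;

/* Flatten a filled-in Value into plain outputs a CLFN can describe
 * (pointers to 64-bit and 32-bit integers, a double, and a C string
 * buffer). Only the output matching v->type is filled; the others are
 * zeroed. Ownership of v->v.s is unchanged: this copies, it does not free. */
static void flatten_value(const Value *v, int64_t *order, int32_t *type,
                          int32_t *label, int64_t *ival, double *dval,
                          char *sbuf, size_t sbuflen)
{
    assert(v && order && type && label && ival && dval && sbuf && sbuflen > 0);
    *order = v->order;
    *type = (int32_t)v->type;
    *label = (int32_t)v->label;
    *ival = 0;
    *dval = 0.0;
    sbuf[0] = '\0';
    switch (v->type) {
    case integer:  *ival = v->v.l; break;
    case uLong:    *ival = (int64_t)v->v.ul; break; /* values above INT64_MAX wrap */
    case floating: *dval = v->v.d; break;
    case charString:
        if (v->v.s) {
            strncpy(sbuf, v->v.s, sbuflen - 1);
            sbuf[sbuflen - 1] = '\0';
        }
        break;
    default:
        break;
    }
}
```

In the CLFN, each numeric output would be passed as "Pointer to Value" of the matching type and the buffer as a C String Pointer with a minimum size.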

-

LabVIEW RT - Linux RT PXI - xinetd -System.in and System.out

Ryan Vallieu replied to Ryan Vallieu's topic in Linux

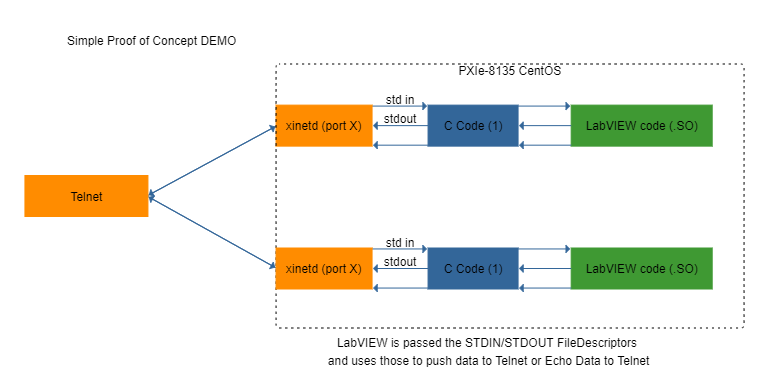

Solution: Since we need xinetd to be able to open N LabVIEW VIs (that is how the legacy system is configured, and the "institution" doesn't want to change that at this point), I had to figure out how to make this work.

I compiled my test VI into a .SO shared object library on my PXIe-8135 running CentOS 7.6, selecting the Advanced build option to use the EMBEDDED version of the run-time engine, which doesn't require a GUI for the code to run. I wrote simple C code to launch the LabVIEW VI, then installed and configured xinetd to launch the C code that launches the LabVIEW code, using the package xinetd-2.3.15-14.el7.x86_64.rpm.

The LabVIEW test VI uses the LabVIEW Linux Pipe VIs with hard-coded 0 (STDIN) and 1 (STDOUT) to communicate over the socket, basically as explained here: http://www.troubleshooters.com/codecorn/sockets/

The result is that the LabVIEW code runs and can communicate over the socket connection through the Pipe VIs. It turned out to be easier on the LabVIEW side than I expected: hard-coding 0 and 1 into Read from Pipe.vi and Write to Pipe.vi worked.
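Hard-coding 0 and 1 works because xinetd starts a TCP service with the accepted connection as its standard input and output, and those descriptors belong to the whole process, including code loaded from a .SO. A minimal C sketch of one echo step over the same two descriptors, standing in for what the Read from Pipe and Write to Pipe VIs do; `echo_once` is a hypothetical name.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <errno.h>
#include <unistd.h>

/* Read up to bufsz bytes from in_fd and write all of them to out_fd.
 * Under xinetd, in_fd = 0 and out_fd = 1 are both the client socket.
 * Returns bytes echoed, 0 at end of input, -1 on error. */
static ssize_t echo_once(int in_fd, int out_fd, char *buf, size_t bufsz)
{
    ssize_t n, off = 0;
    assert(buf != NULL && bufsz > 0);
    do {
        n = read(in_fd, buf, bufsz);
    } while (n < 0 && errno == EINTR);
    if (n <= 0)
        return n;
    while (off < n) {
        ssize_t w = write(out_fd, buf + off, (size_t)(n - off));
        if (w < 0) {
            if (errno == EINTR)
                continue;
            return -1;
        }
        off += w;
    }
    return n;
}
```

A service body would loop with `while (echo_once(0, 1, buf, sizeof buf) > 0) {}` until the client disconnects.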

LabVIEW RT - Linux RT PXI - xinetd -System.in and System.out

Ryan Vallieu replied to Ryan Vallieu's topic in Linux

This has since changed. I am now compiling my LabVIEW code into a .SO library and calling that from C, because apparently I can only get xinetd to launch one instance of LabVIEW (the system must be set to run at startup, etc.). I can call any number of LabVIEW VIs from the .SO through a C call and have them happily chugging away in their own application spaces. What I am still missing is how to get STDIN/STDOUT through to the LabVIEW program. I suspect that if I were better at C this would be easy (easier?). I'm just trying out a simple demo at first, so the LabVIEW code doesn't need to be a full-blown architecture. I just need to get that damn "pipe" or file descriptor.
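Since a .SO built from LabVIEW runs inside the C launcher's process, it sees the same descriptors 0 and 1 the launcher received from xinetd, unless something in the run-time engine redirects them; nothing has to be handed over explicitly, which matches the reply where hard-coding 0 and 1 in the Pipe VIs worked. A minimal C sketch the launcher could use to confirm, before calling into the LabVIEW library, that its stdin really is the socket; `fd_is_socket` is a hypothetical helper.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <sys/socket.h>
#include <sys/stat.h>
#include <unistd.h>

/* Return 1 if fd refers to a socket, 0 if not, -1 if fstat fails. */
static int fd_is_socket(int fd)
{
    struct stat st;
    assert(fd >= 0);
    if (fstat(fd, &st) != 0)
        return -1;
    return S_ISSOCK(st.st_mode) ? 1 : 0;
}

/* Convenience check for the launcher: is stdin the xinetd connection? */
static int stdin_is_socket(void)
{
    return fd_is_socket(STDIN_FILENO);
}
```

If `stdin_is_socket()` returns 1 in the launcher, the LabVIEW code it calls can use descriptors 0 and 1 directly.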