Everything posted by Aristos Queue

-

The fix is easy: get the names of the cluster elements and pass them into the subarray instead of relying on the Variant To Data node to capture the names.

-

Our use case is nullable strings and nullable integers. We want to be able to differentiate between "this is the set value of the parameter (possibly including an empty string)" and "this parameter has never been set, so defer to the default". So a cluster of variants should correctly record "null" for an empty variant and a non-null value for a non-empty variant. Recording an array when passed a cluster is definitely a bug, regardless of the behavior of the variants.
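To make the null-versus-empty distinction concrete outside LabVIEW, here is a minimal Python sketch. It assumes a plain dict stands in for the cluster of variants, with Python `None` playing the role of an empty variant; `encode_params` and `resolve` are hypothetical names, not part of JSONText.

```python
import json
from typing import Dict, Optional, Union

# Hypothetical model of a cluster of variants: None plays the role of an empty
# variant ("never set, defer to the default"); any str or int, including "",
# is an explicitly set value.
Value = Union[str, int]
Param = Optional[Value]

def encode_params(params: Dict[str, Param]) -> str:
    """Serialize the record as a JSON object; unset fields become null."""
    return json.dumps(params)

def resolve(params: Dict[str, Param], defaults: Dict[str, Value]) -> Dict[str, Value]:
    """Keep explicitly set values (even ""); use the default only for None."""
    return {name: defaults[name] if value is None else value
            for name, value in params.items()}

params = {"name": "", "port": None}
print(encode_params(params))                                   # {"name": "", "port": null}
print(resolve(params, {"name": "default-name", "port": 8080}))  # {'name': '', 'port': 8080}
```

The empty string survives both steps, while only the `null` field falls back to its default.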

-

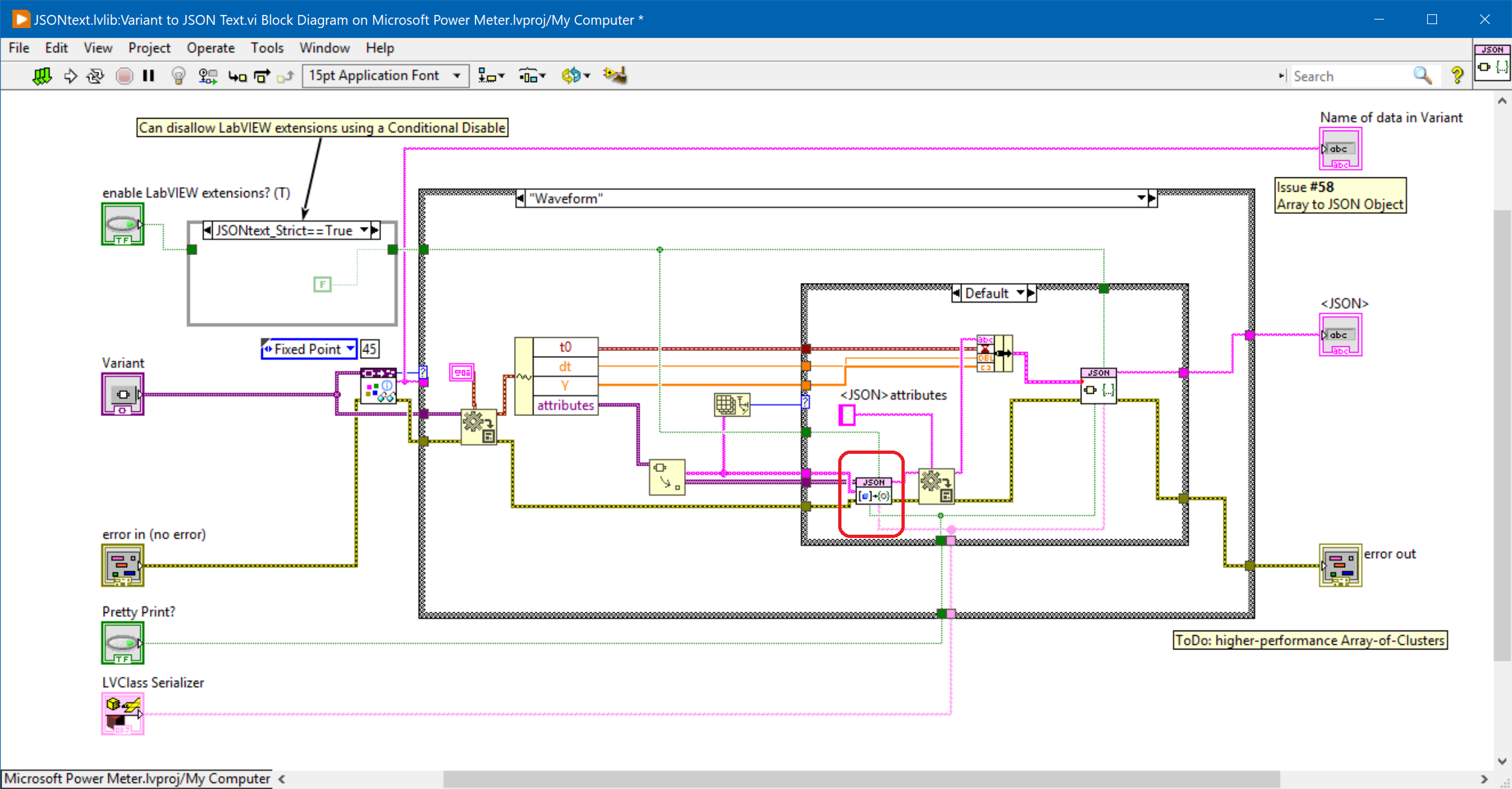

Found two bugs in "Variant to JSON Text.vi". The fixed VI is attached, saved in LV 2015. It also includes a lot of manual diagram cleanup that helped me follow some cases I was trying to understand.

I noticed that several calls to the To JSON primitive do not have the "enable LV extensions" boolean wired. I did NOT change any of those, but they may warrant a review. There are also a few places where the error cluster is dropped where I don't think it should be.

FIRST: here's the test case for the major bug:

Expected JSON: {"a":null,"b":null}
Actual JSON: [null, null]

SECOND: a simple miswire. The LV extensions boolean was wired to the pretty print terminal in the Waveform case.

Variant to JSON Text.vi
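Python's standard `json` module happens to show the same failure mode, which may help when explaining the bug: a `namedtuple` is a tuple, so serializing it directly drops the field names and yields an array. The fix mirrors the one described earlier in this thread (fetch the element names explicitly rather than relying on the conversion to carry them). This is an analogy only; `Cluster`, `to_json_buggy`, and `to_json_fixed` are illustrative names, not the JSONText code.

```python
import json
from collections import namedtuple

# Stand-in for a LabVIEW cluster with two elements named "a" and "b".
Cluster = namedtuple("Cluster", ["a", "b"])

def to_json_buggy(record) -> str:
    # json treats any tuple (namedtuple included) as a JSON array,
    # so the element names are silently lost.
    return json.dumps(record)

def to_json_fixed(record) -> str:
    # Fetch the names explicitly and pair them with the values.
    return json.dumps(dict(zip(record._fields, record)))

record = Cluster(a=None, b=None)
print(to_json_buggy(record))  # [null, null]
print(to_json_fixed(record))  # {"a": null, "b": null}
```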

-

There are many links on the Internet that tell you how to configure git to use custom diff and merge tools for VIs. Many are wrong. Yesterday, another developer outside NI and I worked through the sequence and got it working repeatably on both of our machines. Here is the process.

1. Save both of the attached files, _LVCompareWrapper.sh and _LVMergeWrapper.sh, someplace permanent on your hard drive that is outside of any particular git repo. We used C:\Users\<<username>>\AppData\Local\Programs\GIT\bin
2. Modify your global git config file, which is saved at C:\Users\<<username>>\.gitconfig, by adding the following lines (the paths below use the username smercer; substitute your own):

```
[mergetool "sourcetree"]
    cmd = 'C:/Users/smercer/AppData/Local/Programs/GIT/bin/_LVMergeWrapper.sh' \"$BASE\" \"$LOCAL\" \"$REMOTE\" \"$MERGED\"
    trustExitCode = true
[difftool "sourcetree"]
    cmd = 'C:/Users/smercer/AppData/Local/Programs/GIT/bin/_LVCompareWrapper.sh' \"$REMOTE\" \"$LOCAL\"
[merge]
    tool = sourcetree
[diff]
    tool = sourcetree
```

That's it. There are lots of ways to edit the .gitconfig from the command line or through SourceTree's UI; if you know those ways, go ahead and use them.

-

Don't misunderstand me: I think it's a great toolkit, and I think it should be a G toolkit, not a language primitive. I'm just finding its edge cases. 🙂

-

Kind of. The JSONText VIs are more efficient overall than my originals, which I slapped together in a weekend. But the current JSONText VIs do have some inefficiencies that my originals didn't have (most notably, see the earlier post about accessing all fields of an object) that crop up when translating schemas. And the single serializer hook is insufficient for some operations: support for sets/maps or custom translations of complex data structures, for example. These are things that the Character Lineator (CL) architecture accounts for, and I wish JSONText were refactored to support them. Having said that, I'm happy enough with the existing toolkit for my own projects and unwilling to donate the refactoring time. It is definitely a good toolkit. But it still has a long way to go, in my opinion.
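As a rough illustration of why per-type hooks help with sets and maps, here is a Python sketch of a translator table keyed by type, in contrast to one global hook. It is a hypothetical design under stated assumptions (sets become sorted arrays, maps become arrays of `[key, value]` pairs), not the Character Lineator's or JSONText's API; `TRANSLATORS` and `to_jsonable` are invented names.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical per-type translator table, in contrast to a single global hook.
# Each entry turns a value JSON cannot represent directly into one it can.
TRANSLATORS: Dict[type, Callable[[Any], Any]] = {
    set: sorted,                                     # set -> sorted array
    frozenset: sorted,
    dict: lambda m: [[k, v] for k, v in m.items()],  # map -> array of [key, value] pairs
}

def to_jsonable(value: Any) -> Any:
    """Apply the translator registered for this exact type, then recurse into arrays."""
    translator = TRANSLATORS.get(type(value))
    if translator is not None:
        value = translator(value)
    if isinstance(value, (list, tuple)):
        return [to_jsonable(item) for item in value]
    return value

# A map with integer keys whose values are sets.
print(json.dumps(to_jsonable({1: {"y", "x"}, 2: set()})))  # [[1, ["x", "y"]], [2, []]]
```

Encoding every dict as pairs keeps non-string keys intact, which a JSON object cannot do; a real design would need a way to choose the translation per type, which is exactly what a single hook makes awkward.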

-

@ShaunR "Didn't that do JSON?" Yes. Initially I rolled my own JSON parser, but it was rewritten to call JSONText as subVIs. The Character Lineator is a better framework (in my opinion) for importing and exporting objects into/out of JSON than trying to hook the serializer directly. Raw JSON doesn't provide any schema version management or any data-massaging capabilities. It's just fields without context. The JSONText library is important for the raw string handling, but you need more infrastructure sitting above it to actually use JSON. CL is one possible choice for that infrastructure. The serializer hook that was added to JSONText in its last revision allows users to roll their own infrastructure.
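As one illustration of the kind of infrastructure that sits above raw JSON, here is a minimal Python sketch of schema version management: wrap the payload in an envelope that carries a version number and run migration steps on load. The envelope layout, the version numbers, and the `name` to `first`/`last` migration are all hypothetical; this is not how the Character Lineator records versions.

```python
import json

CURRENT_VERSION = 2

def upgrade_v1_to_v2(data: dict) -> dict:
    # Hypothetical schema change: v1 stored "name"; v2 splits it into "first"/"last".
    first, _, last = data.pop("name").partition(" ")
    data.update(first=first, last=last)
    return data

# One migration step per old version; load() applies them in order.
MIGRATIONS = {1: upgrade_v1_to_v2}

def save(data: dict) -> str:
    return json.dumps({"version": CURRENT_VERSION, "data": data})

def load(text: str) -> dict:
    envelope = json.loads(text)
    version, data = envelope["version"], envelope["data"]
    while version < CURRENT_VERSION:
        data = MIGRATIONS[version](data)
        version += 1
    return data

old_file = '{"version": 1, "data": {"name": "Ada Lovelace"}}'
print(load(old_file))  # {'first': 'Ada', 'last': 'Lovelace'}
```

Raw JSON alone has no place for the version number or the migration logic; both live in the layer above the string handling.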

-

It has pros and cons. It is definitely a nice change of pace. 🙂

-

I generally prefer #4 straight up. If you add #5, anyone not supplying the types will be slowed down by the code checking the input variant for "Did you provide a type for this attribute? How about this attribute?" and getting a "no" answer each time. It's not slow, but it is an unnecessary hiccup. Anyone who knows the types can get them from the generated variant. The one difficulty that makes me lean toward #5 is objects. If we parse "a":5, then we know to add attribute "a" as an integer. But if we hit "a":{ ... }, then that can be a cluster of those elements, another variant attribute table, or a class. In those cases, I'd like to leave it to the Serializer to decide how to parse what is between the braces. If it can detect that the stuff between the braces is an object or a known cluster and deserialize it, great. If it cannot, it yields to its parent implementation, which pulls it in as a variant attribute table. Does that make sense?
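A minimal Python sketch of that fallback, using the standard `json` module's `object_hook`: scalars such as `5` arrive already typed, and for each `{ ... }` a caller-supplied hook gets the first chance to claim it. If the hook declines (returns `None`), the object is kept as a plain dict, standing in for the variant attribute table. The hook protocol, `decode`, and the `Point` type are illustrative assumptions, not the JSONText Serializer API; one consequence of using `None` as "decline" is that a hook cannot decode an object to `None`.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable, Optional

# A hook sees each decoded {...} first; returning None means "not mine".
Hook = Callable[[dict], Optional[Any]]

def decode(text: str, hook: Optional[Hook] = None) -> Any:
    def on_object(fields: dict) -> Any:
        if hook is not None:
            claimed = hook(fields)
            if claimed is not None:
                return claimed
        # Parent behavior: keep the object as a generic name -> value table,
        # standing in for a variant attribute table.
        return fields
    # Scalars such as 5 arrive already typed (int), so only objects need a decision.
    return json.loads(text, object_hook=on_object)

@dataclass
class Point:  # illustrative "known cluster"
    x: int
    y: int

def point_hook(fields: dict) -> Optional[Point]:
    return Point(**fields) if fields.keys() == {"x", "y"} else None

print(decode('{"a": 5, "b": {"x": 1, "y": 2}, "c": {"z": 3}}', point_hook))
# {'a': 5, 'b': Point(x=1, y=2), 'c': {'z': 3}}
```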

-

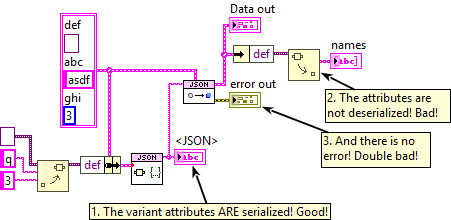

The handling of variant attributes is asymmetric. I can serialize the variant and its attributes are recorded as fields of an object. But on deserialize, the attributes are not recovered. I suspect this is because there isn't any type information to tell us what type each attribute should be, but I'm not sure. Why isn't the deserialize handled? Can it be handled?

-

This subVI violates the "never copy all or part of the input JSON string" rule. There's no such thing in LabVIEW as an array of substrings. I would suggest that the next version of the JSONText toolkit should include a VI named something like "Get all Object Item offsets.vi". It would return two arrays of clusters of two integers: start offset and length. The first would be the names and the second would be the object data. (After looking at the JSON spec again, I note that the name string can contain escaped characters, so maybe go ahead and return the names as you are doing now because otherwise people are going to forget that step and just get substrings and think they have valid names and the copies out of the main JSON will have to happen anyway.)
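Here is a Python sketch of what such an offsets VI might compute for the top level of one object. Python slicing also copies, so the point is the return shape: `(start, length)` pairs into the original string, leaving the caller to copy only the items it actually needs. `object_item_offsets` is a hypothetical name, the scanner assumes well-formed JSON and does no validation, and the name spans still contain raw escape sequences, which is exactly the pitfall noted above.

```python
from typing import List, Tuple

Span = Tuple[int, int]  # (start offset, length) into the original string
WS = " \t\n\r"

def _skip_ws(s: str, i: int) -> int:
    while i < len(s) and s[i] in WS:
        i += 1
    return i

def _end_of_string(s: str, i: int) -> int:
    """s[i] is an opening quote; return the index just past the closing quote."""
    i += 1
    while s[i] != '"':
        i += 2 if s[i] == "\\" else 1  # skip the escaped character too
    return i + 1

def _end_of_value(s: str, i: int) -> int:
    """Return the index just past the JSON value that starts at s[i]."""
    if s[i] == '"':
        return _end_of_string(s, i)
    if s[i] in "{[":
        depth = 0
        while True:
            c = s[i]
            if c == '"':  # brackets inside strings must not count
                i = _end_of_string(s, i)
                continue
            if c in "{[":
                depth += 1
            elif c in "}]":
                depth -= 1
                if depth == 0:
                    return i + 1
            i += 1
    # number, true, false, null: runs until a delimiter
    while i < len(s) and s[i] not in ",}] \t\n\r":
        i += 1
    return i

def object_item_offsets(s: str, start: int = 0) -> Tuple[List[Span], List[Span]]:
    """Name spans (quotes excluded) and value spans for each top-level item."""
    names: List[Span] = []
    values: List[Span] = []
    i = _skip_ws(s, start)
    assert s[i] == "{", "expected an object"
    i = _skip_ws(s, i + 1)
    while s[i] != "}":
        name_end = _end_of_string(s, i)
        names.append((i + 1, name_end - i - 2))
        i = _skip_ws(s, name_end)
        assert s[i] == ":", "expected ':'"
        i = _skip_ws(s, i + 1)
        value_end = _end_of_value(s, i)
        values.append((i, value_end - i))
        i = _skip_ws(s, value_end)
        if s[i] == ",":
            i = _skip_ws(s, i + 1)
    return names, values
```

A caller that only needs one field can locate it by its span and copy just that value out of the main JSON.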

-

I'm feeding it legit object JSON. As long as the field name wasn't empty, everything worked. 🙂

-

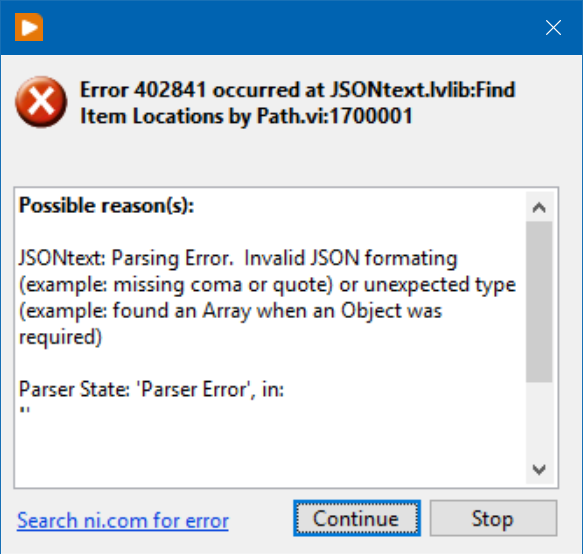

I am getting this error when going to JSON. This seems like an error that should only occur when going from JSON. The error comes out whenever the "$Path or Item Name" terminal is an empty string. Now, for one thing, I'm not convinced an empty string should be an error at all... using an empty string as a field name is legit JSON so far as I know. But even if it is illegal, I would prefer a different error here, something that says "the field name cannot be empty string." Is that reasonable? Note that I did try passing "$." instead of an empty string... that gave me the same error. Is there a way to add a field that has a name of ""?
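For what it's worth, the JSON grammar in RFC 8259 defines an object member name as any string, and a string may contain zero characters. Python's standard `json` module, used here only as an independent reference point, accepts and produces such a field without complaint:

```python
import json

doc = json.loads('{"": "empty name", "a": 1}')
print(doc[""])                 # empty name
print(json.dumps({"": None}))  # {"": null}
```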

-

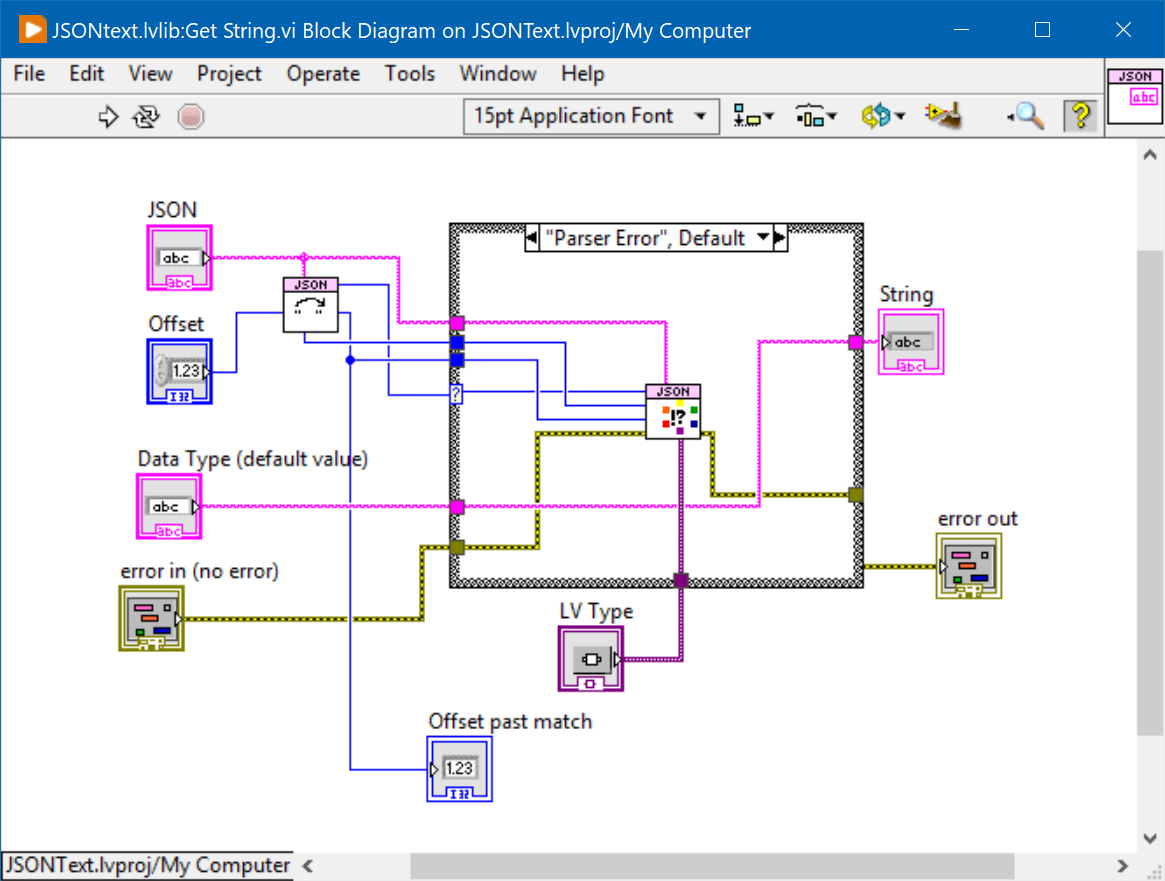

Next question: Why does "Get String" have two different inputs, one for the LV Type and one for the default value? The default value terminal does not appear to be wired anywhere in the toolkit, and all callers have to do their own selection after the call to pick the default value.
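One possible way to collapse the two inputs, sketched in Python under stated assumptions: a single `default` argument supplies both the expected type and the fallback value, so the caller no longer does its own selection after the call. `get_item` is a hypothetical name, not a JSONText VI, and the strict type check (an integer does not satisfy a float default) is a design choice of this sketch.

```python
import json
from typing import TypeVar

T = TypeVar("T")

def get_item(text: str, name: str, default: T) -> T:
    """Hypothetical getter: `default` supplies both the expected type and the fallback."""
    value = json.loads(text).get(name)
    # Missing, null, or wrong-typed items all yield the caller's default.
    # `type(...) is` rather than isinstance keeps True from passing as an int.
    if value is None or type(value) is not type(default):
        return default
    return value

print(get_item('{"a": "x"}', "a", ""))      # x
print(get_item('{"a": 5}', "a", "none"))    # none
```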

-

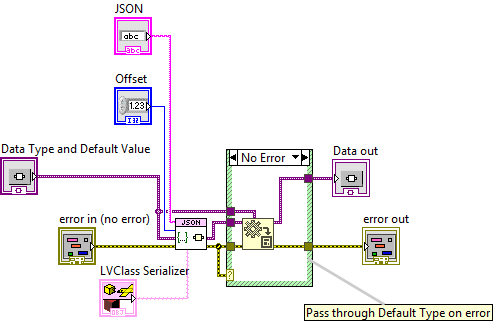

The block diagram of "From JSON Text.vim" is shown above. What is going on in the error case of the case structure? Why do you need to call the same node but bypass the error cluster? Why not just wire the default value through the case frame and leave out the call to Variant to Data entirely? The error cluster tunnel going into the case structure can be changed into the ? terminal, which avoids forking the error cluster wire and makes it slightly clearer what's going on in this diagram.

-

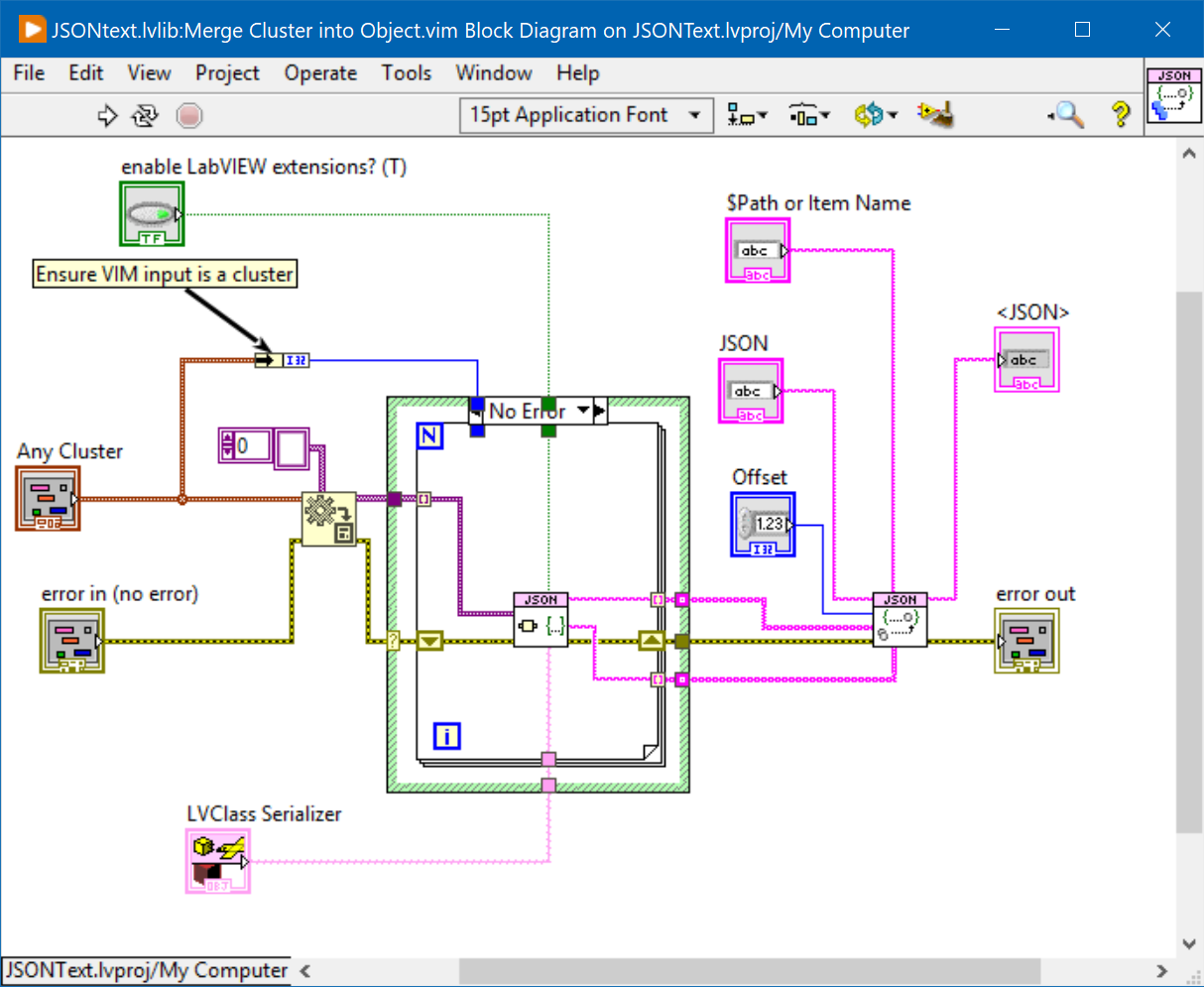

The subVIs that "Merge Cluster into Object.vim" calls support pretty printing, but the terminal is not exposed to callers. Could you add it?

-

Malleable Buffer (seeing what VIMs can do)

Aristos Queue replied to drjdpowell's topic in Code In-Development

Malleable Buffer.zip

James: I did a lot of polish on your library: cleaning diagrams, finishing out a couple of API points, documentation. I deleted the experiment stuff. You're free to take the attached files and add them to your published VIP if you want. There was only one real functionality change that I made: a performance improvement to "Add to Buffer (By Value).vi". Thank you, this code is quite helpful in my current project.

-

I retract my earlier statement. I was confused by part of the code. I think I can use this.

-

Powell: I looked at your library. Useless to me because it doesn't expose the raw buffer for traversal... it allows a *copy* of the buffer, but not an IPE access to the buffer. You abstracted everything away.
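The difference between the two kinds of access can be sketched in Python as an API shape. The `Buffer` class and its `in_place` method are hypothetical, and since Python lists are references this only loosely models a LabVIEW In Place Element structure: `copy()` hands back a duplicate, while `in_place()` lets a caller's function traverse or modify the live storage without one.

```python
from typing import Callable, List, TypeVar

R = TypeVar("R")

class Buffer:
    """Hypothetical buffer exposing both copy access and in-place access."""

    def __init__(self) -> None:
        self._items: List[float] = []

    def add(self, x: float) -> None:
        self._items.append(x)

    def copy(self) -> List[float]:
        return list(self._items)  # O(n) duplicate of the storage

    def in_place(self, fn: Callable[[List[float]], R]) -> R:
        return fn(self._items)    # no duplicate; fn sees the live storage

b = Buffer()
for x in (1.0, 2.0, 3.0):
    b.add(x)
print(b.in_place(sum))            # 6.0, traversed without copying
```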

-

@hooovahh The XNodes in this library... can these be replaced with VIMs at this point? Are the XNodes doing any type adaptation that couldn't be done with VIMs? I haven't opened up the code to investigate... figured I'd ask you first in case you know off the top of your head.

-

I can build the jump-to-diagram part easily enough; I've written many jump-to-highlight behaviors. I'm looking for someone who has already built all the label displaying, tree organizing, keyboard shortcuts, event handling, dialog resizing, etc. That's hours of work, and if it already exists out in the world, I'd rather reuse it than build it.

-

I've got a tool that searches through a bunch of VIs for stuff. That part was easy. Now that I've got an array of GObject refnums, I need to present the results to the user as a list that the user can click on to visit each of the found items. Basically, I want the functionality of the Search Results Dialog Box, but without the search part. The one built into LV has no exposed API. Is there a publicly available replica that someone has built? My Googling around didn't find anything, and I would really prefer not to reinvent that particular wheel if I can avoid it.

-

OpenG and Object compatibility

Aristos Queue replied to bjustice's topic in OpenG General Discussions

That would still be a bug because a child doesn't know whether its parent will remain without private data permanently. That's why the inheritance only supplies the ability to serialize. It says nothing about the format/structure/etc.

-

I really didn't expect serialization to be weaponized like this when I wrote it, and over the last few years, I've become aware that it can be abused like this. I am going to have to prioritize making flatten/unflatten an option to toggle on classes, one that defaults to "off" for new classes. This is the sort of "cast to void" thing that completely sinks C++ projects.

-

Using interfaces would be a BUG. You cannot add serialization to a class if all ancestors do not support it or you end up with an insane class. That's why the Lineator uses class inheritance.
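The policy described in these posts can be modeled in a few lines of Python, with the caveat that Python has no equivalent of LabVIEW class flatten/unflatten and its multiple inheritance only loosely resembles LabVIEW interfaces. The sketch assumes a hypothetical per-class `serializable` flag that each class must set in its own body (so it defaults to off for new classes and is not silently inherited) and a check, `can_serialize`, that refuses unless every ancestor opted in.

```python
def can_serialize(cls: type) -> bool:
    """True only if cls and every ancestor (except object) opted in in its own body."""
    return all(vars(c).get("serializable", False)
               for c in cls.__mro__ if c is not object)

class Base:
    serializable = True   # opted in

class Child(Base):
    serializable = True   # opted in, and so did every ancestor

class Grandchild(Child):
    pass                  # new class: off by default, not inherited

class Plain:
    pass                  # never opted in

class Bolted(Plain, Base):
    serializable = True   # tries to bolt serialization on via a second base

print(can_serialize(Child), can_serialize(Grandchild), can_serialize(Bolted))
# True False False
```

`Bolted` is rejected because `Plain`, one of its ancestors, never agreed to have its data serialized, which is the "insane class" case that bolting serialization on through a side channel would allow.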