Search the Community

Showing results for tags 'performance'.


The DevOps engineer plays a critical role in Listen's software delivery process to ensure the highest level of product quality for our flagship audio test product, SoundCheck, on both Windows and macOS. In this position, you will work closely with both the Software and Support/Validation teams. You will devise appropriate smoke and integration tests, create new builds and installers, and approve product releases based on test data. You will also maintain and improve our Continuous Integration test framework to run reliable automated validation tests using a combination of Jenkins, Python, and LabVIEW, and maintain and develop our licensing utility using Python. You may also design and build analysis tools to enhance our test and development environment. Additionally, you will be responsible for providing IT support and maintaining systems used by the company. If you are looking for an opportunity to take your passion for LabVIEW and apply it to a leading commercial test and measurement system, please review the detailed job descriptions on our website.

Tagged with:

- automated tests

- continuous integration

(and 3 more)


I am using MoveBlock via a Call Library Function node to copy a few bytes. The duration of this call, measured with two Tick Count timestamps in a flat sequence around the call, is about 40 ms. Since MoveBlock should be similar to memcpy in C, I thought it would also perform similarly. However, if it's really this slow, I cannot use it in a meaningful way. Has anyone else measured its duration, and can you confirm my findings?

- 5 replies

Tagged with:

- labview

- memory management

(and 1 more)
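One way to cross-check a timing like the one in the MoveBlock question is to repeat a small copy many times and divide by the iteration count, using a clock finer than a millisecond tick. The sketch below is only an analogy in Python: `ctypes.memmove` stands in for MoveBlock, and the function name `time_small_copy` and its parameters are made up for illustration. It says nothing about what LabVIEW itself does around the call.

```python
import ctypes
import time


def time_small_copy(n_bytes=16, repeats=100_000):
    """Return (average nanoseconds per copy, copied bytes).

    Hypothetical helper: ctypes.memmove is used here as a stand-in
    for MoveBlock; n_bytes must be at most 256 for the fill pattern.
    """
    src = (ctypes.c_ubyte * n_bytes)(*range(n_bytes))  # source buffer 0, 1, 2, ...
    dst = (ctypes.c_ubyte * n_bytes)()                 # zero-filled destination

    start = time.perf_counter_ns()                     # high-resolution clock
    for _ in range(repeats):
        ctypes.memmove(dst, src, n_bytes)
    elapsed_ns = time.perf_counter_ns() - start

    # Averaging over many calls hides the coarse granularity of a single reading.
    return elapsed_ns / repeats, bytes(dst)


per_call_ns, copied = time_small_copy(16, 10_000)
print(f"average per copy: {per_call_ns:.0f} ns")
```

The same idea carries over to a block diagram: put the call in a loop, time the whole loop, and divide, so that a single millisecond-resolution reading does not dominate the result.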


I have never gotten the performance that I want out of the 2D picture control. I always think that it should be cheaper than using controls, since it doesn't have to handle user input, click events, and so on, but it always seems to be slower. I was wondering if any of the wizards out there have any 2D picture control performance tips that could help me out. Some questions that come to mind: Is the conversion between pixmap and picture costly? Why does the picture control behave poorly when in a shift register? What do the "Erase first" settings cost performance-wise? Is there anything you consider a bad idea with picture controls? Anything that is generally a good idea?

- 4 replies

Tagged with:

- performance

- labview

(and 1 more)


Hello, I am using the variantconfig.llb for saving and loading configuration files. Unfortunately these configuration files have become huge: 10,000 lines or even more, with hundreds of sections. Loading these files takes 15-30 seconds. The workflow: (1) get all sections with "Get Section Names.vi" (this is very fast); (2) a for-loop calls "Read Section Cluster_ogtk.vi" on each section name (using the Profiler, I can see that this takes most of the time). Depending on the section name, I select the cluster type. Do you have any hints on how I can speed up the process? Thanks!

- 7 replies

Tagged with:

- variantconfig

- configuration ini

(and 2 more)
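The workflow in the configuration-file question (list the sections, then read each one into a type chosen by its name) can be sketched in Python as a single parse followed by in-memory conversion. This is not how variantconfig.llb works internally, which the post does not describe. The function `load_sections`, the `converters` mapping, and the convention that the text before the first underscore selects the type are all assumptions made up for the example.

```python
import configparser


def load_sections(ini_text, converters):
    """Parse the INI text once, then convert each section in memory.

    converters maps a section-name prefix (text before the first "_")
    to a function that turns a dict of strings into a typed record.
    Both the prefix convention and the converters are hypothetical.
    """
    parser = configparser.ConfigParser()
    parser.read_string(ini_text)          # single pass over the whole file

    result = {}
    for name in parser.sections():
        kind = name.split("_", 1)[0]      # choose the record type from the name
        convert = converters.get(kind, dict)
        result[name] = convert(dict(parser[name]))
    return result


example = "[motor_1]\nspeed = 10\n\n[sensor_a]\ngain = 2.5\n"
config = load_sections(example, {"motor": lambda d: {"speed": int(d["speed"])}})
print(config)
```

The point of the sketch is only that the file is scanned once and every later lookup is a dictionary access. Whether the 15-30 seconds in the post come from repeated scanning or from the variant-to-cluster conversion is something the Profiler would have to confirm.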


I'm using a LabVIEW shared library (DLL) to communicate between a C# program (made by another company) and a LabVIEW executable (which means different processes) on the same PC. Currently I'm using network-published shared variables to communicate between the LabVIEW DLL and the LabVIEW program (both made by me), which works well except for the performance. Each time the DLL is called it needs to connect to the shared variable, which takes between 50 and 300 ms. Once it is connected, the data transfer is instant. I have tried the PSP "Open Variable Connection In Background", which is a bit faster because it doesn't wait to verify the connection, but it still adds some overhead. I have also tried to use notifiers from this example: https://lavag.org/topic/10408-communication-between-projects/ . Opening the connection and sending the notifier takes 50-100 ms. I guess both the notifier and the shared variables are "slow" because they use network communication, even though both programs run on the same PC (localhost). Do any of you know of a faster method of communicating between a program that runs continuously (connection open constantly) and one that is only executed when new data is ready (connection "re"-opened on every call)? Thanks in advance. Best regards, Mads

- 4 replies

Tagged with:

- shared variables

- dll

(and 3 more)


Hi LAVA, I need your help! I've recently started updating a system from LV8.6 to 2011SP1, and have ended up confused. I deploy the system as executables on two different machines running Ubuntu Linux, one a laptop with a single processor and the other a panel PC with two processors. On the first, single-processor computer I see a dramatic fall in CPU usage. The other, in contrast, shows a dramatic rise in CPU usage. The computers do not have LV installed, only the RTEs. Machine 1 (1 × Intel® Celeron® CPU 900 @ 2.20GHz): 63% CPU with LV8.6, 39% with 2011SP1. Machine 2 (2 × Genuine Intel® CPU N270 @ 1.60GHz): 40% CPU with LV8.6, 102% with 2011SP1. On the second machine the maximum is 200%, since it has two CPUs. The load seems to be spread fairly evenly between the CPUs. Why is this happening, and what should I do to get the CPU usage down on machine 2 (the one being shipped to customers)? /Martin

- 12 replies

Tagged with:

- linux

- application

(and 3 more)


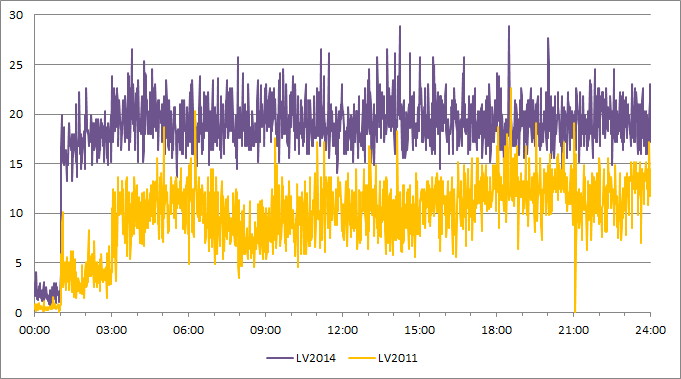

Before making the switch from LV2011 to LV2014, I ran the exact same test with both versions (2011 and 2014) of my application. I recorded the CPU usage and discovered a huge deterioration in LV2014. Is anybody aware of any change between LV2011 and LV2014 that could impact performance like this? I should mention that the unit on the Y-scale is %CPU and the X-scale is MM:SS.


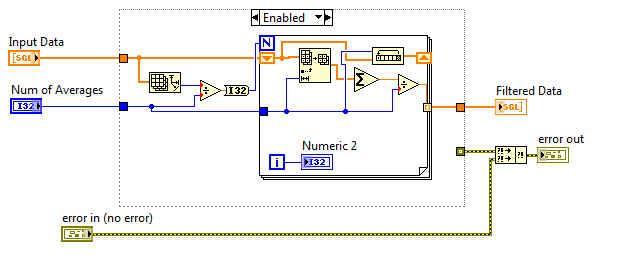

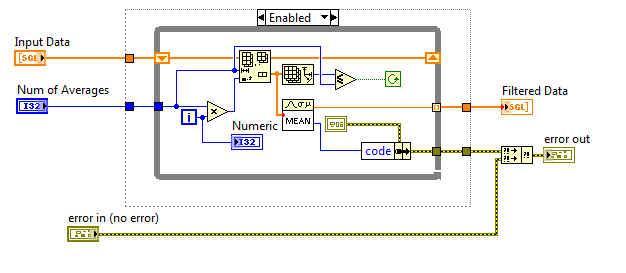

I am trying to create a code section that will take a 1D array and create a moving average array. Sorry if this is a bad description. I want to take x elements of the input array, average them, and put that average in the first element of a new array. Then take the next x elements, average them, and put the result in the second element of the new array. I want this done until the input array is empty. I have two possible ways to do it, but neither runs as fast as I would like. I want to see if anyone knows of a faster way to do this averaging. Thanks, Joe
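To pin down the operation described in the averaging question (the two LabVIEW implementations are only in the attached images), here is a minimal Python sketch of it: consecutive, non-overlapping groups of x elements, each reduced to its mean. The name `block_average` is made up, and averaging a shorter final group over the elements it actually contains is an assumption, since the post does not say what should happen to a remainder.

```python
def block_average(values, x):
    """Average consecutive, non-overlapping groups of x elements.

    Assumption: if len(values) is not a multiple of x, the last
    (shorter) group is averaged over the elements it contains.
    """
    if x <= 0:
        raise ValueError("x must be a positive group size")

    averages = []
    for start in range(0, len(values), x):
        group = values[start:start + x]        # next x elements (or the remainder)
        averages.append(sum(group) / len(group))
    return averages


print(block_average([1, 2, 3, 4, 5, 6], 2))  # [1.5, 3.5, 5.5]
```

When the input length is a multiple of x, the same result can be had by reshaping the 1D array into rows of x elements and averaging each row, which avoids repeatedly taking subsets of the array.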


Hi all - I've just posted a tool to analyze the compiled code complexity of VIs in a project at http://forums.ni.com...12/td-p/2121692. Note that compiled code complexity is a new feature in LabVIEW 2012, so this won't work with earlier versions. I'd be happy to answer any questions here or on the forums! (Is it kosher to spam LAVA with links to forum posts? If not, my apologies...) Greg Stoll, LabVIEW R&D


After two years of "leeching" content every now and then from the LAVA community, I think it's time to contribute a little bit. Right now, I'm working on a project that involves lots of data mining operations through a neurofuzzy controller to predict future values from some inputs. For this reason, the code needs to be as optimized as possible. With that in mind, I've implemented the same controller using both a Formula Node structure and standard 1D array operators inside an inlined SubVI. Well... the results surprised me. I had thought the SubVI with the Formula Node would perform a little better than the one with standard array operators. In fact, it was quite the opposite: the inlined SubVI was consistently around 26% faster. Attachments: evalSugenoFnode.vi, evalSugenoInline.vi, perfComp.vi, PerfCompProject.zip

- 36 replies


Hi, I am wondering if anyone has done or found a performance test of the IMAQ functions. What I am interested in is how the performance of IMAQ functions compares to functions written in .NET or C++ (OpenCV). What improves or degrades the performance of IMAQ-based applications? Regards, Marcin

- 5 replies

Tagged with:

- imaq

- image processing

(and 1 more)