-

Posts

717 -

Joined

-

Last visited

-

Days Won

81

Content Type

Profiles

Forums

Downloads

Gallery

Posts posted by LogMAN

-

-

- Popular Post

- Popular Post

11 hours ago, Rolf Kalbermatter said:Yes we do have customers wanting to have test software written in Python.

10 hours ago, Rolf Kalbermatter said:implement it in C as a shared library to call from Python through ctypes, since the routines ported to Python were to slow for the desired test throughput.

-

3

3

-

- Popular Post

- Popular Post

-

5

5

-

4 hours ago, MartinP said:

I recomend all other LabVIEW programmers to do the same.

To what end?

NI will never go back to perpetual licenses, no matter how many offers we reject.

Mainstream support for LabVIEW 2021 ends in August 2025, which means no driver support, no updates, and no bug fixes. By then you should have a long-term strategy and either pay for subscriptions or stop using LabVIEW.

As a side note, if you own a perpetual license you can get up to 3 years of subscription for the price of your current SSP. This should give you enough time to figure out your long-term strategy and convince management

Quote

QuoteWhat will happen if I currently own a perpetual software license when my SSP renewal is up?

We will quote you for a subscription license of your software at a one-time price that is the same as your SSP renewal quote would have been. You can pre-purchase up to a three-year subscription term at that per-year rate. This one-time price is available whenever your SSP renewal expires. For example, if you purchased software in 2021 with 3 years of SSP, the one-time price will be available to you in 2024.

-- https://www.ni.com/en-us/landing/subscription-software.html

Of course, if you let your SSP expire and are later forced to upgrade, than you have to pay the full price...

-

17 hours ago, Dpeter said:

It seems that you can not just insert a class into a library.

You cannot directly create PPLs from classes. Instead, put the class inside a project library to create a PPL.

-

- Popular Post

- Popular Post

Quoteempowering engineers and enterprises around the globe through the significant differentiation of NI’s tailored, software-connected approach.

Powered by LabVIEW NXG 😋

-

1

1

-

1

1

-

4

4

-

20 hours ago, alvise said:

When I run the "Preview Demo" example built with C# that comes with the SDK, it seems to increase memory usage, is this normal?

22 hours ago, alvise said:When running -VI, memory usage sometimes goes up and down. For example: 178.3MB to 182.3MB then be 178.3MB again.

In C#/.NET, memory usage can fluctuate because of garbage collection. Objects that aren't used anymore can stay in memory until the garbage collector releases them.

https://docs.microsoft.com/en-us/dotnet/standard/garbage-collection/

This could explain why your memory usage goes up and down.

There is a way to invoke the garbage collector explicitly by calling GC.Collect(). This will cause the garbage collector to release unused objects.

-

Don't tell him about Excel...

-

-

14 minutes ago, crossrulz said:

Yeah, but this is still a major support hole.

No subscription = no money = no support

I believe this is the case for most software products.

-

9 minutes ago, FixedWire said:

Now this is fun...anybody else tried to download a old version of LV that was used to develop projects and noticed that you can't actually download it any longer?

You need an active subscription to download previous versions. This has been the case for many years.

QuotePrevious versions are available only to customers with an active standard service program (SSP) membership.

-

Welcome to LAVA @jorgecat 🏆

As a matter of fact, there can be two files depending when you installed NIPM for the first time.

- \%programdata%\National Instruments\NI Package Manager\Packages\nipkg.ini

- \%localappdata%\National Instruments\NI Package Manager\nipkg.ini

According to this article, the location changed from %programdata% to %localappdata% in later versions of NIPM but both locations are still supported.

QuoteThe INI file for the feed has changed, but the software still references the old INI file. If you have an nipkg.ini file inside C:\Program Files\National Instruments\NI Package Manager\Settings, delete this file.

-

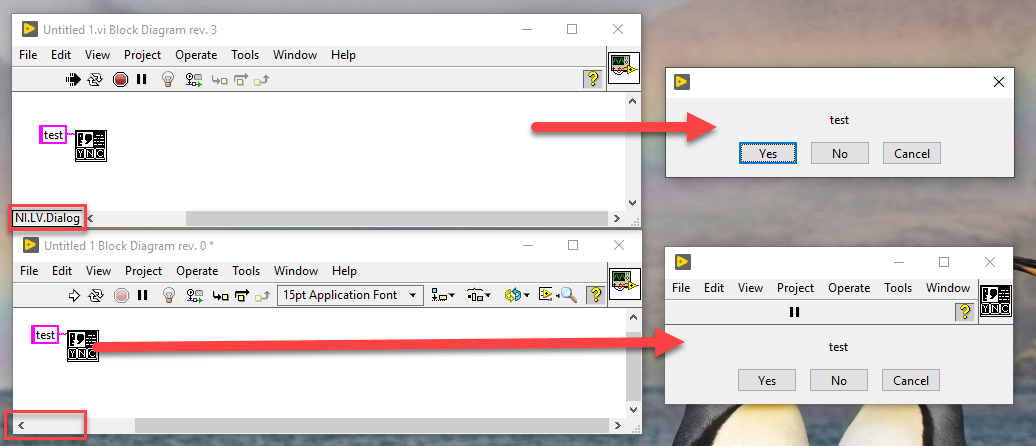

By any chance, is your main VI launched in a different application context (i.e. from the tools menu or custom context)?

Here is an example where the dialog is called from the tools menu (top) while the front panel is open in standard context (bottom).

-

19 minutes ago, ShaunR said:

A prototype is just a program before you up issue it to version 1.0.0. Either that or it becomes a test harness. Why would you throw away anything that's useful?

Good question. There are different kinds of prototypes that serve different purposes.

The one I'm referring to is a throwaway prototype. It serves as a training ground to try out a new architecture and/or refine requirements early in the process. The entire point of this kind of prototype is to build it fast, learn from it, then throw it away and build a better version with the knowledge gained.

-

43 minutes ago, Lipko said:

In my uneducated opinion the biggest obstackle of making clean code and fine architecture (thus less bugs, better improvability) is that it's impossible to fully flesh out the specifications when starting a new project (and refractoring can be a real pain).

On the other hand if you port (especially if you are also an active user of the program), then you have a solid specification and a working "prototype" (the original project) mapped out to the last detail. Of course, you have to be ready with a very detailed test plan.Even without porting to a different language, a small prototype is a good idea if you aren't sure about your architecture. That way you can test your ideas early in the project and refine it before you begin the actual project. Just don't forget to throw away the prototype before it gets too useful 😉

-

On 4/19/2022 at 6:38 PM, infinitenothing said:

Has anyone gone through the experience of rewriting your LabVIEW code into a different programming language?

I had to do that once. Moved from LV to C++

On 4/19/2022 at 6:38 PM, infinitenothing said:

On 4/19/2022 at 6:38 PM, infinitenothing said:I'm wondering if it was a total rewrite or if you went line by line translating it to a new language?

A translation doesn't make sense as many concepts don't transfer well between languages, so it was rewritten from scratch.

It's actually funny to see how simple things become complex and vice versa as you try to map your architecture to a different language.

On 4/19/2022 at 6:38 PM, infinitenothing said:After the effort was over, was the end result still buggy?

I assume by "done" you mean feature parity.

We approached it as any other software project. First build a MVP and then iterate with new features and bug fixes. We also provided an upgrade path to ensure that customers could upgrade seamlessly (with minimal changes) and made feature parity a high priority. The end result is naturally less buggy as we didn't bother to rewrite bugs 😉

On 4/19/2022 at 6:38 PM, infinitenothing said:Did it take it a while to get it back to its former reliability?

It certainly took a while to get all features implemented but reliability was never a concern. We made our test cases as thorough as possible to ensure that it performs at least as well as the previous software. There is no point in rewriting software if the end result is the same (or even worse) than its predecessor. That would just be a waste of money and developer time.

-

1

1

-

-

On 4/11/2022 at 5:00 PM, IpsoFacto said:

I have a base Hardware class that has must overrides to instantiate the communication to the instrument, configuration of the instrument by passing in a JSON strong of config arguments, and deconstruction of the instrument.

Perhaps consider using separate Hardware Configuration classes instead of JSON strings. That way your Hardware classes are independent of the configuration storage format (which may change in the future). All of your Hardware Configuration classes could inherit from a base class that is then cast by each Hardware at runtime.

On 4/11/2022 at 5:00 PM, IpsoFacto said:I then have interfaces to represent generic instrument types, DMM, Switch, Digital Input, etc, that have overrides for their API. Then I have specific hardware that inherits the appropriate interface(s). A keithley DMM inherits DMM, an NI MIO device inherits DI, DO, AI, AO...

Sounds reasonable.

On 4/11/2022 at 5:00 PM, IpsoFacto said:I have a hardware manager class that acts as a factory, instantiating the classes using a config file that I want to include a key inside of that points to the specific class on disk. The hardware manager is passed to operations that use the instruments and they interact with them by casting to the type of interface they want.

From what you describe, it sounds more like a registry than a manager. Could you imagine using a Map instead?

Your hardware manager also has a lot of responsibilities.

First of all, it should not be responsible for creating classes. This should be responsibility of a factory (Hardware Factory). If you want to load classes on-demand, then the factory should be passes to the manager. Otherwise, the factory should create all classes once on startup and pass the instances to the manager.

It also sounds as if the Hardware Factory should receive the configuration data. In this case, the configuration data could be a separate class or a simple cluster. In either case, the factory should not be responsible for loading the data (for the same reason as for the Hardware above).

On 4/11/2022 at 5:00 PM, IpsoFacto said:Heres some hurdles I'm having trouble conceptualizing. I have some applications that run in different labs but perform the same task with different hardware. For example, in one lab they use a Kiethley DMM with a built-in switch card, in others they use a Kiethley DMM and an NI Switch card. In the first case it's one instrument that inherits DMM and Switch, in the other it's two instruments. I guess I could have two config entries, one for DMM and one for Switch and have the factory compare addresses on instantiation and if it's already initiated communication on an address it just points to the first instance?

A proxy could be useful here (a class that forwards calls to another class). In this case, "Kiethley DMM with a built-in switch card" could be passed directly to one of your operations. In the the case of "Kiethley DMM and a NI switch card", however, a proxy could hold the specific hardware instances and forward all calls to the appropriate hardware.

On 4/11/2022 at 5:00 PM, IpsoFacto said:And the biggest question, if the above is workable, any tips on how to get from a case structure containing every possible specific hardware class to dynamically loading them disk without putting them all into some subVI somewhere to guarantee they're loaded in memory during compile time?

You can load a class from disk and cast it to a specific type. See Factory pattern - LabVIEW Wiki for more details.

-

9 hours ago, X___ said:

You will love this: https://forums.ni.com/t5/LabVIEW/NI-s-move-to-subscription-software/td-p/4215663

In essence: we failed you, but this is because you were not paying us enough, so we will change this, which will be good value for you and us.

What was he saying about denying reality?

I suspect they did another one of their "approaches" and hired more consultants to completely miss the point...

This is really sad and I fail to see how any of this makes LabVIEW a better product and not just more expensive to their current user base.

Responses like this are also a good reason to seek alternatives. NI has made it clear for quite some time that LabVIEW is only an afterthought to their vision. Instead they are building new products to replace the need for LabVIEW ("it's not the only tool"). Customers will eventually use those products over writing their own solutions in LabVIEW, which means more business for NI and a weak argument for LabVIEW.

In my opinion, higher prices are also a result of balancing cross-subsidization. In the past, other products likely added to the funds for LabVIEW development in order to drive business. With more and more products replacing the need for LabVIEW, these funds are no longer available. Eventually, when there are not enough customers to fund development, they will pull the plug and sunset the product.

On the bright side, they might gain a large enough user base to invest in the long-term development of LabVIEW. They might listen to the needs of their users and improve its strengths and get rid if its weaknesses. They might make it a product that many engineers are looking forward to use and who can't await the next major release to engineer ambitiously

I hope for the latter and prepare for the former.

-

1

1

-

-

Here is a video that showcases a logic designed by NI. It counts the number of iterations since the last state change and triggers when a threshold is reached.

-

My system is to put them in project libraries or classes and change the scope to private if possible. Anything private that only serves as a wrapper can then easily be replaced by its content (for example, private accessors that only bundle/unbundle the private data cluster). Classes that expose all elements of their private data cluster can also be refactored into project libraries with simple clusters, which gets rid of all the accessors.

Last but not least, VI Analyzer and the 'Find Items with No Callers' option are very useful to detect unused and dead code. Especially after refactoring.

On 1/21/2022 at 5:22 PM, Taylorh140 said:So I really like the LabVIEW classes, but always end up with alot of vi's that do very little.

What do you mean by "very little"?

The 'Add' function does very little but it is very useful. If you have lots of VIs like that, your code should be very readable no matter the number of VIs.

-

You can find a lot of information on the type descriptor help page. The LabVIEW Wiki also has a comprehensive list of all known type and refnum descriptors. Feel free to add more details as you discover them 🙂

That said, I would use Get Type Information over type string analysis whenever possible. It's much easier and less error prone. A great example of this is the JSONtext library, which utilizes the type palette a lot.

-

58 minutes ago, Zyga said:

Does anyone know for how long perpetual license will be available?

The answer is in the title.

QuoteBeginning January 2022, NI software is moving to subscription-based licenses.

-- https://www.ni.com/en-us/landing/subscription-software.html

NI will stop selling perpetual licenses by the end of this year. Any licenses renewed before that date will continue until they expire, after which NI will offer subscription-based licenses.

-

14 hours ago, X___ said:

Now I wonder what "access to historical versions" means. Can we try to run LabVIEW 1.0 on a Macintosh VM? LabvVIEW 2.5 on a Windows 3.1 VM? That could be a lot of fun.

Well, technically speaking that should be the case if you take their answer literally (and ignore the rest of the sentence)

Quote

QuoteIs it mandatory to update to the newest version when using a subscription?

No, while subscriptions provide access to the latest version, you are welcome to use any previous version of the NI software in your subscription.

Most likely, though, it will allow you to use any version listed on the downloads page, which currently goes back to LV2009. You might also be able to activate earlier versions if you still have access to the installer but I'd be surprise if that went back further than perhaps 8.0. Only NI can tell.

-

It looks like the certificate was renewed today. According to the certificate, it is valid from 13/Dec/2021 to 13/Jan/2023. Have you tried clearing your cache?

You can clear the cache in most browsers using the key combination <Ctrl> + <F5>. Hope that helps.

-

27 minutes ago, AndyS said:

Is this a bug in JSON Text or is my data-construction not supported as expected?

27 minutes ago, AndyS said:The 2nd thing I recognized is that the name "Value" of the cluster is not used during flatten. Instead the name of the connected constant / control / line is used.

Here is a similar post from the CR thread. The reason for this behavior are explained in the post after that.

JSONtext essentially transforms the data included in your variant, not the variant itself. So when you transform your array into JSON, the variant contains name and type information. But this information doesn't exist when you go the other way around (you could argue that the name exists, but there is no type information). The variant provided to the From JSON Text function contains essentially a nameless element of type Void. JSONtext has no way to transform the text into anything useful. To my knowledge there is no solution to this.

The only idea I have is to read the values as strings, convert them into their respective types and cast them to variant manually.

Download link for OpenG library compatible with LabVIEW 7.1

in OpenG General Discussions

Posted

This is not the right place to ask for legal advice. You should read the license terms and check with your legal department.

Here is a link to the full license text for version 2.1 of the license: GNU Lesser General Public License v2.1 | Choose a License