Posts: 72

Days Won: 2


Everything posted by codcoder

-

A follow-up on this: the issue seemed to be with the PXIe-7820R module. We tried another one and it worked. I don't know why. Physical damage? Firmware mismatch? I have no idea, but it is annoying since the module was brand new. But my specific problem is solved now.

-

Thanks for the input. I'll look into it. I would like to add that I have created a small test application, which I have also sent to NI as part of the support case. This test application works just as intended on my old PXI system: an indicator on the front panel updates from a counter on the FPGA that counts up when the trigger arrives. But it does not work on my new system. The indicator is always zero.

-

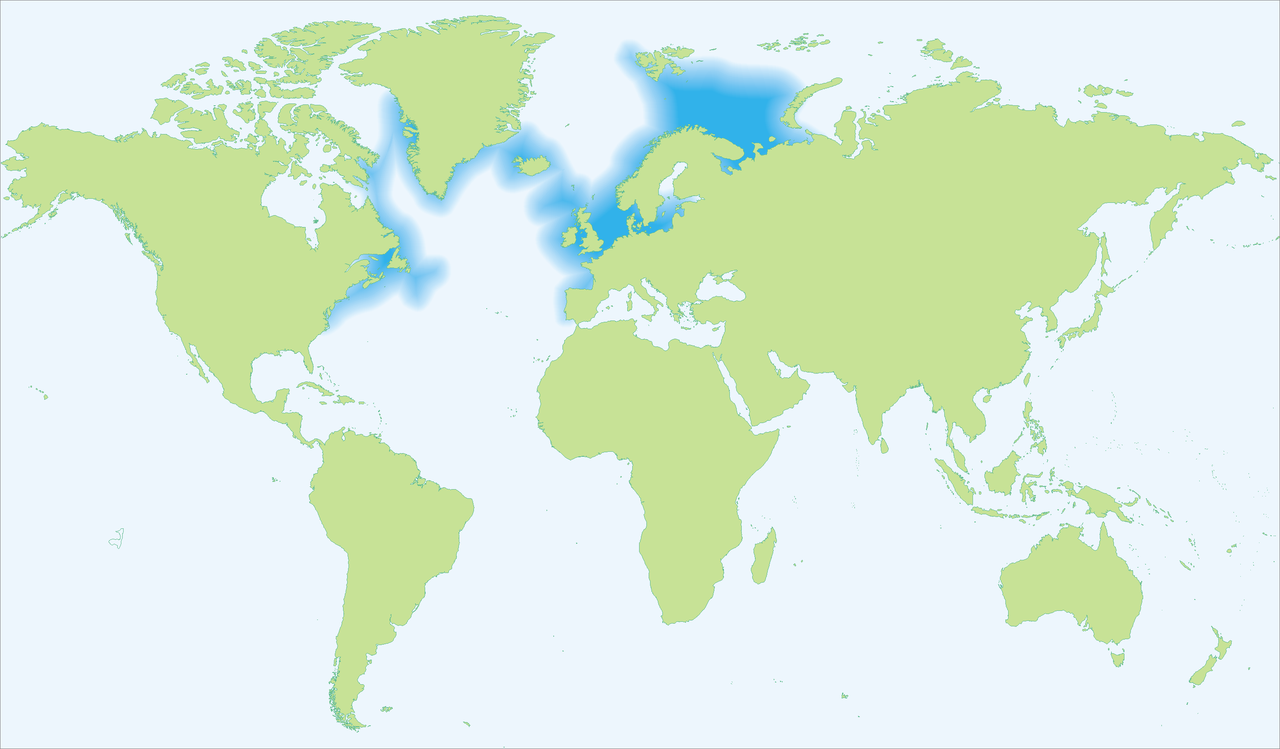

Hi,

So this is a blatant repost from the Discord channel, but I'm getting kind of desperate here. I have opened a support request with NI, but I figure I might as well try the rest of the community here too. This is a copy of the problem description I sent to NI, and it is very similar to what I wrote in the Discord channel:

I am building a new PXI-based test system that duplicates an older system which has been working reliably since around 2019. The purpose of this setup is to provide absolute time to an FPGA so that all FPGA read/write signals can be timestamped correctly.

The timing architecture is as follows:

1. A PXI-6683H generates a custom 1 Hz pulse using NI-Sync. This pulse is routed over PXI_Trig0 on the backplane.
2. In the LabVIEW RT application, on the falling edge of the pulse, I write the next whole-second value to the PXIe-7820R through a front panel control.
3. On the rising edge, an FPGA loop is expected to trigger, latch that value as valid, and update the FPGA time function.
4. Between pulses, the FPGA time is advanced using the FPGA's internal 40 MHz clock.

To verify that the FPGA loop has triggered, the FPGA sets a status indicator to 1, which I read back from the RT side. In the failing system, this status always remains 0. I also observe that other FPGA functions appear inactive. Based on this, my conclusion is that the FPGA is either not seeing the trigger or not responding to it.

Important context: the same software and FPGA firmware concept works in another chassis, a PXI-1062Q, while the new system uses a PXI-1084 chassis. I am aware that the PXI-1084 is divided into three trigger bus segments, but both the PXI-6683H and the PXIe-7820R are installed within the same segment.

I have verified that the PXI-6683H can generate a signal. In MAX test panels, I can successfully drive PXI_Trig0 from the 6683H and read it back using MAX functionality, which suggests that the 6683H is operational and that PXI_Trig0 exists at least in some sense. I have also formatted the RT controller and reinstalled the software, but the issue remains.

My main question is whether there is any chassis-specific routing limitation, configuration requirement, or compatibility issue in the PXI-1084 that could prevent a user-generated PXI_Trig0 signal from being seen by the PXIe-7820R, even when both modules are in the same trigger segment.

So... anyone? I am hoping for a super obscure yet super easy solution here.
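The timestamp scheme described above can be modelled in a few lines. This is a minimal Python sketch, not the actual FPGA code: it assumes the value latched on the rising edge is a whole number of seconds and that the FPGA counts ticks of its 40 MHz clock since that edge. The function names and the tolerance value are hypothetical. The second helper reflects the symptom in the post: with a working 1 Hz trigger the tick counter resets about every 40 million ticks, so a count far beyond that suggests the edge is never seen.

```python
TICKS_PER_SECOND = 40_000_000  # FPGA base clock, 40 MHz


def fpga_time(latched_seconds: int, ticks_since_edge: int) -> float:
    """Absolute time = whole second latched on the rising edge + elapsed 40 MHz ticks."""
    return latched_seconds + ticks_since_edge / TICKS_PER_SECOND


def edge_missed(ticks_since_edge: int, tolerance: int = 4_000) -> bool:
    """True if more than ~1 s of ticks has elapsed, i.e. the 1 Hz edge was likely not seen.

    The tolerance (4000 ticks = 100 us) is an assumed allowance for clock drift.
    """
    return ticks_since_edge > TICKS_PER_SECOND + tolerance


print(fpga_time(1_700_000_000, 20_000_000))  # 1700000000.5
```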

-

Aren't DVRs just LabVIEW's take on pointers?


-

There should be a way to work with very large files in LabVIEW without having to keep the entire file in memory. Many years ago I worked with a very large file in Matlab (well, back then it was a very large file) and I used the function memmapfile extensively: https://se.mathworks.com/help/matlab/ref/memmapfile.html It maps a file on the hard drive so you can access its contents without keeping the entire file in workspace memory. Probably a bit slower, but far less load on the RAM! There must be a similar method in LabVIEW.

EDIT: I found this old thread: https://forums.ni.com/t5/LabVIEW/Is-there-a-way-to-read-only-a-portion-of-a-TDMS-file-without/td-p/1784752 This is similar to what cordm refers to: best practice, regardless of language, must always be to handle large files in chunks.
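For comparison, both approaches mentioned above exist in Python's standard library: the `mmap` module is roughly the counterpart of Matlab's memmapfile, and plain chunked reads are the "handle it in chunks" approach. This is a sketch outside LabVIEW, not a LabVIEW method; the function names and the 1 MiB default chunk size are illustrative choices. Note that mapping an empty file fails, so real code would check the file size first.

```python
import mmap
from typing import Iterator


def read_slice(path: str, offset: int, length: int) -> bytes:
    """Return bytes [offset, offset + length) via a memory map; the OS pages in only what is touched."""
    with open(path, "rb") as f, mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        return mm[offset:offset + length]


def iter_chunks(path: str, chunk_size: int = 1 << 20) -> Iterator[bytes]:
    """Yield the file in fixed-size chunks so only one chunk is in memory at a time."""
    with open(path, "rb") as f:
        while chunk := f.read(chunk_size):
            yield chunk
```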

-

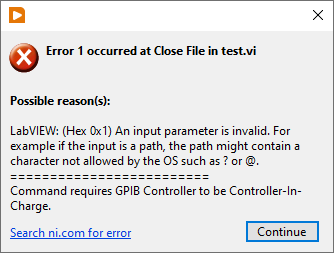

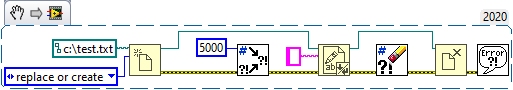

Hi, So I had a bug I couldn't figure out. The setup is as shown in the attachment. The Close File function kept throwing an error (code 1) and I couldn't understand why. But then I finally understood: if there is an error wired into Write to Text File, the file refnum it outputs becomes invalid. The reason I am starting this thread is that I always thought every subVI, even the basic built-in functions, contained a case structure, so that if there is an error the function isn't executed BUT any refnum passes through the VI unaffected. Is that not the case? Is this expected behaviour?
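The convention the post expected can be sketched outside LabVIEW. This is a Python model of the usual error-cluster pattern, not NI's implementation of Write to Text File: the node skips its work when an error comes in, but still passes the reference and the error downstream unchanged, so a later close can release the reference. `ErrorCluster` and `write_text` are hypothetical names.

```python
from dataclasses import dataclass
from typing import TextIO, Tuple


@dataclass
class ErrorCluster:
    status: bool = False
    code: int = 0
    source: str = ""


def write_text(ref: TextIO, text: str, error_in: ErrorCluster) -> Tuple[TextIO, ErrorCluster]:
    # Equivalent of the case structure: on an incoming error, skip the write
    # but still pass the reference and the original error through.
    if error_in.status:
        return ref, error_in
    try:
        ref.write(text)
        return ref, error_in
    except OSError as exc:
        return ref, ErrorCluster(True, exc.errno or 0, "write_text")
```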

-

Doesn't sound too hard?

-

Jake: Your Smarter LabVIEW Development Assistant

codcoder replied to jcarmody's topic in LAVA Lounge

Promising! But I am waiting for Jake to be able to produce VI snippets. I asked for a for loop and all I got was simple ASCII art: `+------------------------+ | +------------------+ | | | [ ] | 0->1->2 | | | | For Loop | | // 5 iterations | +------------------+ | | Iterations | +------------------------+`

-

Wow, thanks! This is exactly what I was looking for!

-

Hi, So I have this project with a lot of VIs that are saved with block diagram windows somewhat larger than the actual block diagram. I am looking for a way to programmatically go through all these VIs and resize each block diagram window to the size of the actual block diagram (plus some margin). Has anyone done this? I'm looking at the different properties, BDWin.Bounds and Diagram.Bounds, but I can't really make heads or tails of them.
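Once the two properties are read, the resize itself is rectangle arithmetic. A minimal Python sketch, assuming both bounds can be expressed as (left, top, right, bottom) rectangles in pixels; check what BDWin.Bounds and Diagram.Bounds actually return in your LabVIEW version. `Rect`, `fit_window` and the 20-pixel margin are hypothetical. It keeps the window's top-left corner and sizes the window to the diagram plus a margin on each side; scrolling the diagram origin into view is ignored here.

```python
from typing import NamedTuple


class Rect(NamedTuple):
    left: int
    top: int
    right: int
    bottom: int

    @property
    def width(self) -> int:
        return self.right - self.left

    @property
    def height(self) -> int:
        return self.bottom - self.top


def fit_window(window: Rect, diagram: Rect, margin: int = 20) -> Rect:
    """Keep the window's top-left corner; size it to the diagram plus a margin on each side."""
    return Rect(
        window.left,
        window.top,
        window.left + diagram.width + 2 * margin,
        window.top + diagram.height + 2 * margin,
    )


print(fit_window(Rect(100, 100, 1600, 1000), Rect(0, 0, 400, 300)))
# Rect(left=100, top=100, right=540, bottom=440)
```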

-

Can you put the AI node inside a single-cycle timed loop with a slower clock? On my FPGA target, the 7820R, it is possible to create derived clocks with both lower and higher frequencies than the 40 MHz base clock. Create a derived clock of 500 kHz, if possible, and connect that clock to the SCTL. If the compiler doesn't complain, it might work?
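The numbers in that suggestion work out neatly: 500 kHz is the 40 MHz base clock divided by 80, giving 2 µs per SCTL iteration. A small Python check; expressing a derived clock as a multiplier/divisor pair is an assumption about how the clock is specified, and the sketch does not check whether the hardware can actually generate the frequency (the "if possible" part). `derived_clock` is a hypothetical helper.

```python
from fractions import Fraction
from typing import Tuple

BASE_CLOCK_HZ = 40_000_000  # base clock mentioned in the post


def derived_clock(target_hz: int, base_hz: int = BASE_CLOCK_HZ) -> Tuple[int, int, int]:
    """Return (multiplier, divisor, period_ns) such that target = base * multiplier / divisor."""
    ratio = Fraction(target_hz, base_hz)  # reduced automatically
    period_ns = 1_000_000_000 // target_hz  # integer nanoseconds; exact for 500 kHz
    return ratio.numerator, ratio.denominator, period_ns


print(derived_clock(500_000))  # (1, 80, 2000)
```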

-

If you have a controller in the PXI chassis, it can run either LabVIEW RT (which used to be a PharLap derivative, but NI is now switching over to something built on Linux) or Windows. If you want the PXI system to run as an embedded system, and if you need any real-time capabilities, then LabVIEW RT is the way to go. If you don't need that, I don't recommend running Windows on the controller. We have a system where we do that, and unless you really don't have space for a rack computer or some other external PC, I don't see any advantages. What you get is basically a more expensive computer with worse performance. Just connecting to the PXI system with an MXI link is much better (which we do in all our other systems), and if I understand you correctly that's already your idea.

-

I'm not sure I understand the question. LabVIEW FPGA can handle math calculations, although decimal numbers are a bit cumbersome, and the straight-line formula is pretty straightforward to implement. Are you sure you need LabVIEW FPGA for this? Do you have a very specific application?
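Since decimal numbers are the cumbersome part on an FPGA, a common workaround for y = kx + m is fixed-point arithmetic: scale k and m by 2^F, do an integer multiply-add, and scale back only when the result leaves the FPGA. A minimal Python sketch of the arithmetic, assuming F = 16 fractional bits, integer x, and round-to-nearest when converting; LabVIEW FPGA's fixed-point data type handles this natively, so this only illustrates the idea.

```python
FRAC_BITS = 16  # assumed number of fractional bits (Q16)
SCALE = 1 << FRAC_BITS


def to_fixed(value: float) -> int:
    """Convert a real coefficient to Q16, rounding to nearest."""
    return round(value * SCALE)


def line_fixed(x: int, k_fixed: int, m_fixed: int) -> int:
    """y = k*x + m with integer x and Q16 coefficients; the result is also Q16."""
    return k_fixed * x + m_fixed


def from_fixed(value: int) -> float:
    return value / SCALE


y = line_fixed(10, to_fixed(1.5), to_fixed(-0.25))
print(from_fixed(y))  # 14.75
```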

-

Yes, exactly those signals! PXI triggers. I don't have specific experience with the PXIe-7975R, but I use the PXIe-7820R quite a lot, and on that card you can simply access the trigger lines like any other digital I/O in LabVIEW FPGA. So it would be fairly simple to use Wait on Any Edge or something like that: https://www.ni.com/docs/en-US/bundle/understand-flexrio-modular-io-fpga/page/fpga-io-method-node.html
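Wait on Any Edge does the waiting for you on the FPGA; the logic underneath is ordinary edge detection on a sampled digital line, comparing each sample with the previous one. A Python model of that idea (the function is hypothetical and does not reproduce the FPGA I/O method node):

```python
from typing import Iterable, List, Optional, Tuple


def find_edges(samples: Iterable[bool]) -> List[Tuple[int, str]]:
    """Return (index, 'rising' | 'falling') for every transition in a sampled line."""
    edges: List[Tuple[int, str]] = []
    previous: Optional[bool] = None
    for i, level in enumerate(samples):
        if previous is not None and level != previous:
            edges.append((i, "rising" if level else "falling"))
        previous = level
    return edges


print(find_edges([False, False, True, True, False, True]))
# [(2, 'rising'), (4, 'falling'), (5, 'rising')]
```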

-

Can't you route the signal over one of the PXI trigger lines? https://www.ni.com/docs/en-US/bundle/pxie-6672-feature/page/using-pxi-triggers.html

-

If you are certain you already have a functional license, check the license folder and remove any old licenses there. Those can confuse NI License Manager. The path to that folder is c:\ProgramData\National Instruments\License Manager\License, at least on my computer.
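Before deleting anything, it helps to see what is in that folder and how old each file is. A small, read-only Python sketch that lists files oldest first; the folder path is the one given in the post and may differ on other installations, and deciding which files are "old" is still up to you. `list_by_age` is a hypothetical helper.

```python
from datetime import datetime
from pathlib import Path
from typing import List, Tuple

# Path as given in the post; it may differ on other machines.
LICENSE_DIR = Path(r"c:\ProgramData\National Instruments\License Manager\License")


def list_by_age(folder: Path) -> List[Tuple[str, datetime]]:
    """List files in folder, oldest modification time first. Does not modify anything."""
    entries = [(p.name, p.stat().st_mtime) for p in folder.iterdir() if p.is_file()]
    entries.sort(key=lambda e: e[1])
    return [(name, datetime.fromtimestamp(mtime)) for name, mtime in entries]
```

Usage: `for name, modified in list_by_age(LICENSE_DIR): print(f"{modified:%Y-%m-%d}  {name}")`.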

-

Both your links were good. I've found the second one, but the first was new to me. But you are right to assume that I'm looking for something less abstract. At least, that is what I want to create here—a cooking recipe of sorts.

-

Hi, (This is a repost from NI's community forum. No answers there so I'm trying my luck here 😀 ) As many of you are likely aware, TestStand is a powerful tool with numerous features and incredible flexibility. While these aspects are undoubtedly valuable, they can sometimes result in the creation of test sequences that are challenging to read. In my workplace, particularly with many newcomers learning TestStand, there's a tendency to be awestruck by its programming language-like capabilities, leading to the use of excessive loops, parameters, and if-cases where a simpler, flat sequence would suffice. Recognizing the need for clarity in test sequences, I've taken on the task of creating a style guide. The aim is to keep sequences coherent without unnecessary complexity. My question for the community is whether anyone has already developed such a guide and is willing to share it? Thank you in advance!

-

I've always thought LabVIEW's higher level of abstraction somewhat reduced the need for that. But I'm not proficient enough in any other language to make a fair comparison.

-

Interesting take but does it fly with TestStand?

-

Well, yes, from a data structure point of view it is. It would make sense to store the private cluster as the private data of the class and use a public cluster as the exposed API. But solving the same issue with a library isn't that different. It doesn't, however, solve the issue of connecting the public and private data in some smart way. But @LogMAN provided an interesting solution for that! I'll look into it!

-

So yes, I went for "brute force". But I managed to solve it using VI scripting, i.e. automatically creating an Unbundle By Name from the public cluster wired to a Bundle By Name for the private cluster. So even if the creation process is obscure, the result is easy to read for someone who knows only a little LabVIEW, which was what I was aiming for.
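The scripted code amounts to a name-by-name copy from the public cluster to the private one. For comparison, the same mapping can be expressed data-driven instead of generated; a Python sketch using dataclasses, where `PublicConfig`, `PrivateConfig` and all field names are hypothetical. Every public field is copied to the private field of the same name, and the reserved dummy fields keep their defaults.

```python
from dataclasses import dataclass, fields


@dataclass
class PublicConfig:
    gain: int = 0
    offset: int = 0


@dataclass
class PrivateConfig:
    gain: int = 0
    reserved_1: int = 0  # dummy field mirroring a reserved area in the format spec
    offset: int = 0


def to_private(public: PublicConfig) -> PrivateConfig:
    """Copy fields by name, like Unbundle By Name wired to Bundle By Name."""
    private = PrivateConfig()
    private_names = {f.name for f in fields(PrivateConfig)}
    for f in fields(public):
        if f.name not in private_names:
            raise KeyError(f"no private field named {f.name!r}")
        setattr(private, f.name, getattr(public, f.name))
    return private


print(to_private(PublicConfig(gain=3, offset=7)))
# PrivateConfig(gain=3, reserved_1=0, offset=7)
```

The trade-off is the one described in the post: the generic loop is shorter, while explicitly generated bundle/unbundle code is easier to read for someone who knows only a little of the language.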

-

Hi, So I have this situation where I want to flatten a cluster to a byte array for data transfer. But there are empty spaces between some of the parameters, called reserved in the format specification the cluster mirrors. To make the flatten process as simple as possible, I want to be able to traverse the cluster in a loop, so the cluster must contain all the information itself. I am currently solving this by including dummy parameters between the real ones to represent these reserved areas. But of course, as you all probably agree, I do not want to expose the dummy parameters to the user (the VI will be accessed by TestStand, by the way).

So my question is: can some controls in a cluster be private somehow? And if not, is there any other smart way to solve it?

My current solution is to create a "public cluster" without these dummy controls and move data between the two. So a secondary question: is there any smart way to do that? I want to keep my code as flat as possible, so my idea is simply to use bundle/unbundle but create it using VI scripting.
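One way to keep the reserved areas out of the user-facing data is to describe them in a layout table rather than in the cluster itself. A minimal Python sketch with `struct`, assuming a big-endian wire format (LabVIEW's Flatten To String defaults to big-endian) and a hypothetical layout: named fields are taken from the public data, and entries with a pad-byte format code are written as zeros.

```python
import struct
from typing import Dict, List, Tuple

# Hypothetical layout: (name, struct format code). Pad codes ("x") mark
# reserved areas; they consume no value and are written as zero bytes.
LAYOUT: List[Tuple[str, str]] = [
    ("id", "H"),           # uint16
    ("reserved_1", "2x"),  # 2 reserved bytes
    ("value", "i"),        # int32
    ("reserved_2", "4x"),  # 4 reserved bytes
    ("flags", "B"),        # uint8
]


def flatten(public: Dict[str, int]) -> bytes:
    """Pack the public fields in layout order, big-endian, with zeroed reserved areas."""
    fmt = ">" + "".join(code for _, code in LAYOUT)  # ">" also disables alignment padding
    values = [public[name] for name, code in LAYOUT if not code.endswith("x")]
    return struct.pack(fmt, *values)


print(flatten({"id": 1, "value": -2, "flags": 0x80}).hex())
# 00010000fffffffe0000000080
```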

-

Including solicitation of interest from potential acquirers

codcoder replied to gleichman's topic in LAVA Lounge

Yes! NI is now Emerson Test & Measurement, headquartered in Austin. So will the corporate colour scheme revert from green to blue now to complete the circle?