Everything posted by Neil Pate

-

I think the standard recommendation of not doing too much processing in the event structure applies if you are also using it to handle GUI events. Are you doing this in your code?

-

I am a member of the IEEE, and have been since I was a student (so about 18 years in total). But honestly I do not really get much from it, other than a magazine each month which occasionally has some interesting articles.

-

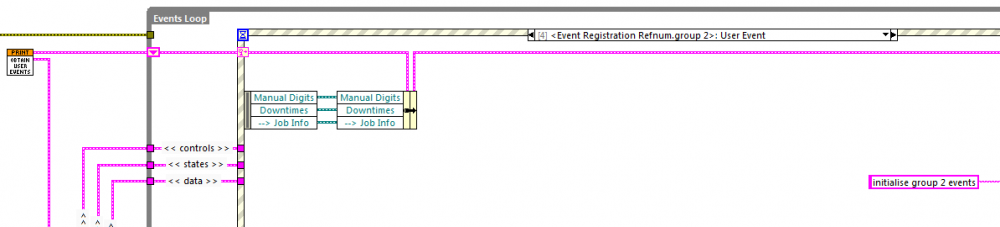

I think it can be done, but rather than doing a Reg Event, just replace the actual cluster element. Note: this is quite old code I wrote some time ago when I was just learning about User Events (LV 8.5), I am not sure I would do things like this now if I had to!

-

VISA write (read) to USB instruments in parallel crashes LabVIEW

Neil Pate replied to ThomasGutzler's topic in Hardware

Tom, I have seen lots of instability in the past when doing parallel VISA reads. Admittedly these were using USB<-->232 converters and I always laid the blame on flaky USB converters. -

NI Stuff takes up too much space on my SSD

Neil Pate replied to Neil Pate's topic in Development Environment (IDE)

Pah that's nothing..., my Oculus Rift dev kit should be arriving soon so I will soon be able to code in one more dimension than you (I wish...) -

NI Stuff takes up too much space on my SSD

Neil Pate replied to Neil Pate's topic in Development Environment (IDE)

I install everything on my SSD (240 GB). Especially LabVIEW; I switch between quite a few different projects in many different versions of LV, so I really like it to load nice and snappily. I would definitely prefer to offload the NIFPGA directory somewhere else though, as the SSD is not helping at all for that stuff. -

NI Stuff takes up too much space on my SSD

Neil Pate replied to Neil Pate's topic in Development Environment (IDE)

The strange thing about the mklink document is that it mentions Vista, and 8, but not 7 which clearly sits in between the two. Surely just a documentation error I think? -
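For anyone wanting to script the move-and-link idea, here is a minimal sketch in Python. It is an assumption-laden illustration, not something tested against the NI directories: `relocate_with_link` is a hypothetical helper name, and it uses `os.symlink` rather than `mklink` itself. On Windows, creating symlinks may need an elevated prompt or Developer Mode, and a junction (`mklink /J`) behaves slightly differently from a symlink.

```python
import os
import shutil
import tempfile


def relocate_with_link(src, dst):
    """Move directory `src` to `dst`, then leave a directory link at `src`.

    Hypothetical helper for illustration only. Stop any software using the
    directory before trying this on real data.
    """
    if os.path.islink(src):
        raise ValueError(f"{src} is already a link")
    if not os.path.isdir(src):
        raise NotADirectoryError(src)
    if os.path.exists(dst):
        raise FileExistsError(dst)

    dst = os.path.abspath(dst)
    shutil.move(src, dst)  # copies across drives if a plain rename is not possible
    # target_is_directory only matters on Windows; it is ignored elsewhere.
    os.symlink(dst, src, target_is_directory=True)
    return src


if __name__ == "__main__":
    # Demo on throwaway directories rather than real install paths.
    with tempfile.TemporaryDirectory() as base:
        old_location = os.path.join(base, "on_ssd")
        new_location = os.path.join(base, "on_hdd")
        os.mkdir(old_location)
        relocate_with_link(old_location, new_location)
        print(os.path.islink(old_location), os.path.isdir(new_location))
```

The old path keeps working for anything that opens files through it, which is the whole point of the link; whether a given installer tolerates that is something to verify on a non-critical machine first.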

NI Stuff takes up too much space on my SSD

Neil Pate replied to Neil Pate's topic in Development Environment (IDE)

Great idea, I will give it a try. I think this also supports Win 7 even though it is not mentioned. -

NI Stuff takes up too much space on my SSD

Neil Pate replied to Neil Pate's topic in Development Environment (IDE)

I think the MDF directory is used when you build an installer which needs additional components. LabVIEW first looks in the MDF directory to see if a copy of the other component installer is there. -

NI Stuff takes up too much space on my SSD

Neil Pate posted a topic in Development Environment (IDE)

So the ProgramData\National Instruments directory is getting very big on my disk, approaching 80 GB with the Update Service and installers (MDF). This, along with 40 GB in the NIFPGA directory, is getting a bit silly. My primary disk is a nice fast SSD, but it is not huge. As such I want to move as much of this stuff off C:\ as possible. I have moved the Update Service stuff onto a bigger (mechanical) HDD; this is possible by changing the preferences of the NI Update Service. I don't yet know if this has actually worked, as I manually moved all the files after changing the preferences. Does anybody know if it is possible to move the NIFPGA and ProgramData\National Instruments\MDF somewhere else? -

Yes, that's what I normally do (keeping track of whether we have wrapped around or not etc). I have never done any benchmarks, always just done it that way. Quite often my circular buffers have a method to read n samples rather than the whole buffer, so I find I need to keep track of a separate read pointer.
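As a text-language illustration of the read-pointer idea (the original is LabVIEW, so this is only a sketch, and the class and method names are made up for the example), a ring buffer can track a separate read index and a count of unread samples. Reading n samples then just walks forward from the read index with wrap-around, so nothing ever needs rotating.

```python
class CircularBuffer:
    """Fixed-size ring buffer with separate write and read pointers."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._data = [None] * capacity
        self._capacity = capacity
        self._write = 0  # index of the next slot to write
        self._read = 0   # index of the oldest unread sample
        self._count = 0  # number of unread samples currently held

    def write(self, sample):
        self._data[self._write] = sample
        self._write = (self._write + 1) % self._capacity
        if self._count == self._capacity:
            # Buffer was full, so the oldest sample was just overwritten:
            # move the read pointer along to the new oldest sample.
            self._read = (self._read + 1) % self._capacity
        else:
            self._count += 1

    def read(self, n):
        """Return (and consume) up to n of the oldest unread samples, in order."""
        n = min(n, self._count)
        out = [self._data[(self._read + i) % self._capacity] for i in range(n)]
        self._read = (self._read + n) % self._capacity
        self._count -= n
        return out


buf = CircularBuffer(4)
for sample in range(6):  # writes 0..5; samples 0 and 1 get overwritten
    buf.write(sample)
print(buf.read(3))  # [2, 3, 4]
print(buf.read(5))  # [5]
```

The count makes "have we wrapped around yet" implicit: a full buffer is simply one where the count equals the capacity.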

-

Nice work. This is such a good use of the XNode adapt-to-type behaviour. Couple of questions though... In the Read, there is a Rotate 1D Array; is this not quite an expensive operation? Could that be removed if a Read Pointer was introduced?

-

I can't be the first one to have tried this. XD

Neil Pate replied to Sparkette's topic in LabVIEW General

The LabVIEW beta normally rolls out around about January or February. -

We do what we must because we can.

-

You have a Starcraft II avatar, already that gets you points in my book :-) I have actually approached the problem slightly differently, as I did not like the Strategy object needing to do the VISA read, and because of the asynchronous way my device sends data (it periodically sends data all on its own). I have implemented the Received data (and the parsing thereof) as a type of Strategy pattern, but the actual reading of the characters on the serial port is done somewhere else.

-

As someone wiser (and more sarcastic) than myself has already pointed out... Some people, when confronted with a problem, think “I know, I'll use regular expressions.” Now they have two problems.

-

I like to try and extend my understanding of things wherever possible, so I can make informed decisions later. This often means trying out features or design techniques I have not used in the past to see if there are better ways of accomplishing things. This is how I have evolved my style over the years. Sometimes the experiment works, sometimes it does not, but I always get to keep some knowledge from the experience. One thing I am trying to get my head around is proper OO design (forget LabVIEW for now). This is something I have some understanding of, but could certainly do with more practice; hence the original question. I agree now with Shane that this looks a lot like the Strategy Pattern.

-

the kool-aid is nice this time of year

-

Hi All, I have a set of classes representing an instrument driver which allows for different firmware versions. The instrument can operate in certain modes, and depending on the mode it periodically returns a different number of characters. What I would have done up till now is have a "mode" enum in the parent, and have a single Read method which uses a simple case structure to read a different number of bytes depending on the mode, and then parses the string accordingly. No problem here, very simple to implement. What I want to do now is remove the enum and make it a class (it is my understanding that having type-defined controls inside a class can lead to some weirdness). So I figure I create a Mode class (and child classes corresponding to the different modes my instrument can be in), and then at run-time change this object. Each of these Mode child classes would implement a Read function, and they would know exactly how many bytes to read for their specific mode. This seems a bit weird, as I would be implementing the Read function in the Mode class, which does not feel like the right place to put it. Alternatively I can implement a BytesToRead function in each of the Mode classes and then also a Parse method. Does this sound sensible? Is this going to be complicated by the fact that my actual class holding the mode object is an abstract class?
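To make the second alternative concrete, here is a rough sketch in Python rather than LabVIEW. Everything in it is assumed for illustration: the mode names, the byte counts, the ASCII message layout, and the use of `io.BytesIO` as a stand-in for the serial port. The point is only the shape: each Mode child answers "how many bytes" and "how to parse", while the instrument class keeps ownership of the actual read.

```python
import io
from abc import ABC, abstractmethod


class Mode(ABC):
    """Strategy interface: knows the message size and how to parse it."""

    @abstractmethod
    def bytes_to_read(self) -> int: ...

    @abstractmethod
    def parse(self, raw: bytes) -> dict: ...


class ShortMode(Mode):  # hypothetical mode: a 4-character value
    def bytes_to_read(self) -> int:
        return 4

    def parse(self, raw: bytes) -> dict:
        return {"value": int(raw.decode("ascii"))}


class LongMode(Mode):  # hypothetical mode: 4-character value + 4-character status
    def bytes_to_read(self) -> int:
        return 8

    def parse(self, raw: bytes) -> dict:
        text = raw.decode("ascii")
        return {"value": int(text[:4]), "status": int(text[4:])}


class Instrument:
    """Owns the port and does the read; delegates size and parsing to the mode."""

    def __init__(self, port, mode: Mode):
        self._port = port
        self.mode = mode  # swap this object at run-time to change mode

    def read(self) -> dict:
        raw = self._port.read(self.mode.bytes_to_read())
        return self.mode.parse(raw)


# io.BytesIO stands in for the serial port in this sketch.
port = io.BytesIO(b"0042" + b"00170003")
instrument = Instrument(port, ShortMode())
print(instrument.read())  # {'value': 42}
instrument.mode = LongMode()
print(instrument.read())  # {'value': 17, 'status': 3}
```

Keeping the read in the instrument class means the Mode classes never touch the port, which sidesteps the "Read in the wrong place" discomfort.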

-

Load hex file into avr controller using LabVIEW

Neil Pate replied to piZviZ's topic in LabVIEW General

If you truly want to do this from LabVIEW do yourself a favour and get a serial sniffer and capture a download that has been successfully done via the AVR tools. Doing this kind of thing from first principles is, in my experience, quite a bit of frustration finally followed by extreme satisfaction when it all works nicely. Good luck if you are trying to write the bootloader, expect much suffering! -

That should work, it certainly works fine on my PC. It is the technique used by the nifty Show VI In Folder quick-drop plugin http://decibel.ni.com/content/docs/DOC-22461