
Posts: 3,962


Days Won: 279


Everything posted by Rolf Kalbermatter

-

Please can anyone help me optimising this code

Rolf Kalbermatter replied to Neil Pate's topic in LabVIEW General

Makes sense. In this case it's doubly unneeded. Since it is a 2D array, the two dimension sizes already add up to 8 bytes, so there would be no padding even for 64-bit integers. And since the array uses 32-bit integer values here, there is never any padding anyway.

Please can anyone help me optimising this code

Rolf Kalbermatter replied to Neil Pate's topic in LabVIEW General

Yes, arrays in LabVIEW are one single block of memory, where for multidimensional arrays all dimensions are concatenated together. There is no row padding; the elements are stored at their natural size, one after another, starting at the beginning of the actual data area. The data area is preceded by one I32 value per dimension, indicating the size of that dimension. And yes, arrays can have 0 * x * y * z elements, which is in fact an empty array, but it still maintains the original length of each dimension and therefore still has an allocated memory block to store those dimension sizes. Only for empty one-dimensional arrays (and strings) does LabVIEW internally allow a NULL handle to be equivalent to an array with a dimension size of 0. If you pass such handles to C code through the Call Library Node, you have to be prepared for that if the handle is passed by reference (e.g. LStrHandle *string). Here the string variable can be a valid handle with a length of 0 or greater, or it can be a NULL pointer. If your C code doesn't account for that and tries to dereference the string variable with LStrBuf(**string), for instance, bad things will happen (you should use LStrBufH(*string) instead anyhow, which is prepared not to crash on a NULL handle). For handles passed by value (e.g. LStrHandle string) this doesn't apply: while handles are relocatable in principle, there would be no way for the function to create a new handle and pass it back to LabVIEW if LabVIEW passed in a NULL handle. In this case LabVIEW will always allocate a handle and set its length to 0 if an empty array is to be passed to the function.

However, I believe your explanation about the value to subtract is likely misleading. The pointer reported by the MemInfo function is likely the actual data pointer to the first element of the array. Before it there is one int32 for each dimension, and only then do you get to the actual pointer value contained in the handle. And that value is what DSRecoverHandle() needs. The way it works is that the memory block referred to by a handle actually contains extra bytes in front of the start address of the handle pointer. This area stores information such as the actual handle that refers to this handle pointer, the total allocated storage in bytes for that handle (minus this extra management information), and some space for flags that was used when LabVIEW still had two distinct handle types (AZ and DS). AZ handles could be resized by the memory manager whenever it felt like it, unless a flag indicated that the handle was locked. To set and clear this flag there were the AZLock() and AZUnlock() functions. Trying to access an AZ handle without locking it could bomb your computer, the Macintosh equivalent of blue screens back in those days. You got a dialog with a number of bombs that indicated the type of 68k exception that had been triggered. And yes, after acknowledging that dialog, the application in question was gone. DS handles are never relocated by the memory manager itself; the application needs to do an explicit DSSetHandleSize() or DSDisposeHandle() for a particular handle to change. However, you should not rely on this information: where LabVIEW stores the handle value and handle size (and whether it does so at all) is platform, compiler and version dependent. And since it is private code deep down in the memory manager, that is fine. The entire remainder of LabVIEW does not care and is not aware of this. The only people who can do anything useful with that information are LabVIEW developers who might actually need to debug memory manager issues. For everybody else, including LabVIEW users, it is utterly useless. So how much you need to subtract from that pointer almost certainly depends on the number of dimensions of your array and not on the bitness you operate in. It's 4 bytes per dimension, BUT!

There is a little gotcha: on platforms other than Windows 32-bit (and Pharlap), the first data element in the array is naturally aligned. So if your array is an array of 64-bit integers or double-precision floats, the distance from the real start of the handle data must be a multiple of 8 bytes on those platforms, since that is the size of the array data element.
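The offset arithmetic and the NULL-handle check described above can be sketched in C. This is a model of the described layout, not NI code: lv_array_data_offset(), LStrSketch and lstr_len_by_ref() are hypothetical stand-ins (the real types and macros come from LabVIEW's extcode.h), and the packed flag models the Windows 32-bit/Pharlap case. Since the memory manager internals are private, treat it as an illustration only.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Bytes from the start of an array's data block (what the handle points to)
   to its first element: one int32 size per dimension, then padding up to the
   element's natural alignment, unless the platform packs structures
   (Windows 32-bit / Pharlap). Hypothetical helper, not an NI function. */
size_t lv_array_data_offset(unsigned ndims, size_t elem_align, int packed)
{
    assert(ndims >= 1 && elem_align >= 1);
    size_t header = 4u * ndims;               /* int32 dimSizes[ndims] */
    if (packed)
        return header;                        /* no alignment padding */
    return (header + elem_align - 1) / elem_align * elem_align;  /* round up */
}

/* Hypothetical stand-in for a LabVIEW string: int32 count, then the bytes. */
typedef struct { int32_t cnt; unsigned char str[1]; } LStrSketch;
typedef LStrSketch **LStrSketchHandle;

/* Length of a string passed by reference (like LStrHandle *string):
   LabVIEW may pass a NULL handle for an empty string, so check before
   dereferencing. */
int32_t lstr_len_by_ref(const LStrSketchHandle *string)
{
    if (string == NULL || *string == NULL)
        return 0;                             /* NULL handle == empty string */
    return (**string)->cnt;
}
```

For the 2D I32 array discussed earlier, lv_array_data_offset(2, 4, 0) gives 8, and even with 8-byte elements a 2D array needs no padding, while a 1D or 3D DBL array gets 4 bytes of padding on non-packed platforms.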

A glimpse of the future (or is it the present?)

Rolf Kalbermatter replied to X___'s topic in LabVIEW General

The first post does. 😀 I remember CICS and MVS. The mainframes at the company where I did my vocational training as a communication electronics technician ran on this, and the entire inventory, order and production automation ran on it too. The terminals were mostly green phosphor displays with 80 x 25 characters. I did some CICS work there, but no COBOL programming. I did, however, do some Tektronix VAX VMS Pascal programming on the VAX systems they also used in the engineering departments for simulation, embedded programming and CAD.

You are likely confusing EtherCAT master and EtherCAT slave devices. You can only use an NI-9144 or NI-9145 as an EtherCAT slave. A device needs very specific hardware support in order to provide EtherCAT slave functionality. Part of that is technical and part of it is legal, as you need to pay license fees to the EtherCAT consortium for every EtherCAT slave device. Your NI-9057 can be used as an EtherCAT master with the Industrial Communication for EtherCAT driver software, but is otherwise simply a normal cRIO device. The EtherCAT master functionality has to be specifically programmed by you using the Industrial Communication for EtherCAT functions. You may want to check out this Knowledge Base article. The order of steps is quite important: without first configuring one of the Ethernet ports as EtherCAT, the autodetection function won't be able to show you the EtherCAT option.

-

Performance boost for Type Cast

Rolf Kalbermatter replied to Aristos Queue's topic in LabVIEW General

The INI key only enables the UI options to actually set these things. The setting itself is generally stored as a flag or similar with the VI, so yes, it will stick. Except, of course, if you do a Save for Previous: if the earlier version you save to did not know that setting, it is most likely simply lost during the save.

FPGA SPI communication inside a SCTL (single cycle time loop)

Rolf Kalbermatter replied to patufet_99's topic in Embedded

Any chance that it is operating in bipolar mode? Then the MSB would be the sign bit!
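If the converter does run in bipolar mode, the raw code has to be interpreted as a signed value. A minimal C sketch, assuming an n-bit code of up to 31 bits; many bipolar ADCs output two's complement and others offset binary, so which function applies depends on the datasheet. Both function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Two's complement: the MSB is the sign bit, so sign-extend it. */
int32_t adc_twos_complement(uint32_t raw, unsigned nbits)
{
    assert(nbits >= 1 && nbits <= 31);
    uint32_t mask = (1u << nbits) - 1u;
    uint32_t sign = 1u << (nbits - 1);
    raw &= mask;                               /* ignore bits above the code */
    return (int32_t)(raw ^ sign) - (int32_t)sign;
}

/* Offset binary: mid-scale code (MSB set, rest clear) means zero. */
int32_t adc_offset_binary(uint32_t raw, unsigned nbits)
{
    assert(nbits >= 1 && nbits <= 31);
    uint32_t mask = (1u << nbits) - 1u;
    uint32_t sign = 1u << (nbits - 1);
    return (int32_t)(raw & mask) - (int32_t)sign;
}
```

For a 16-bit two's complement code, 0xFFFF decodes to -1 and 0x8000 to -32768; reading the same codes as unsigned would show them as large positive values.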

Performance boost for Type Cast

Rolf Kalbermatter replied to Aristos Queue's topic in LabVIEW General

No, byte swapping happens in both cases. The code with and without Byte Array To String is functionally equivalent. This is an oversight in the optimization of the Typecast node: it takes a shortcut for string inputs but doesn't apply that shortcut to byte arrays too, which in essence are the same as a LabVIEW string so far (though they really should not have been for many, many years already). In terms of runtime performance, Byte Array To String is pretty much a NOP, since the two are technically exactly the same in memory. But it enables a special shortcut in the Typecast function, which handles string inputs differently than other data types.

Performance boost for Type Cast

Rolf Kalbermatter replied to Aristos Queue's topic in LabVIEW General

The comparison is, however, not entirely fair. MoveBlock simply does a memory move, while Typecast does a byte and word swap for every value in the array, so it does considerably more work. That is also why Shaun had to add the extra block in the initialization, using another MoveBlock to generate the byte array for the MoveBlock call. If it used the same initialized buffer, the resulting values would look very weird (basically all ±Inf). But you can't simulate the Typecast by adding Swap Bytes and Swap Words to the double array. Those Swap primitives only work on integer values; for single and double precision values they are simply a NOP. I would consider it almost a bug that Typecast does swapping for single and double precision values but Swap Bytes and Swap Words do not. It doesn't seem entirely logical.

Performance boost for Type Cast

Rolf Kalbermatter replied to Aristos Queue's topic in LabVIEW General

Typecast does a few things to make sure the input buffer is properly sized for the desired output type. For instance, in your byte array to double array situation, if your input is not a multiple of 8 bytes, it can't just reuse the input buffer in place (it might never do that, but I'm not sure; I would expect it does if that array isn't used anywhere else by a function that wants to modify/stomp on it). But if it does, it has to resize the buffer and adjust the array size in any case. If it doesn't, it would be a dog-slow operation anyhow 😃. An extra complication with Typecast is that it always does Big Endian normalization. This means that on every still-shipping LabVIEW platform it will byte-swap every element in the array appropriately. This may be desired, but if it isn't, fixing it by adding Swap Bytes and Swap Words functions on the resulting array has several problems: 1) It costs extra performance to swap the bytes in Typecast and then again to swap them back. A simple memcpy() would certainly be much more performant, even if it requires a memory allocation for the target buffer. 2) If LabVIEW ever gets a Big Endian platform again (we can dream, can't we?), your code will potentially do the wrong thing, depending on who created the original byte array in the first place.
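The difference between the two conversions can be sketched in C. This is an illustration, not NI code: be_bytes_to_doubles() mimics the Big Endian interpretation that Typecast applies for a byte array to DBL array conversion, while native_bytes_to_doubles() corresponds to a plain memcpy()/MoveBlock copy. Both names are hypothetical, and dropping trailing bytes that don't form a whole element is an assumption of this sketch, not documented Typecast behavior.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Typecast-like: every 8 bytes are read as a Big Endian double.
   dst must have room for nbytes / 8 elements; returns that count. */
size_t be_bytes_to_doubles(const uint8_t *src, size_t nbytes, double *dst)
{
    size_t n = nbytes / sizeof(double);        /* whole elements only */
    for (size_t i = 0; i < n; i++) {
        uint64_t bits = 0;
        for (int b = 0; b < 8; b++)
            bits = (bits << 8) | src[i * 8 + b];   /* most significant byte first */
        memcpy(&dst[i], &bits, sizeof bits);       /* reinterpret the bit pattern */
    }
    return n;
}

/* MoveBlock/memcpy-like: bytes copied unchanged, i.e. interpreted in the
   native byte order (Little Endian on today's LabVIEW platforms). */
size_t native_bytes_to_doubles(const uint8_t *src, size_t nbytes, double *dst)
{
    size_t n = nbytes / sizeof(double);
    memcpy(dst, src, n * sizeof(double));
    return n;
}
```

On a Little Endian machine the bytes 3F F0 00 00 00 00 00 00 give 1.0 through the Big Endian path, while the native copy needs them in the reverse order, which is exactly the per-element swap Typecast performs and a plain memory copy avoids.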

Performance boost for Type Cast

Rolf Kalbermatter replied to Aristos Queue's topic in LabVIEW General

It depends on your definition of safe! 😃 If the VI enforces proper data types (through its connector pane, for instance) and accounts for the size of the target buffer or adjusts it properly (for instance by using the minimum size option in the Call Library Node to use a different parameter as size indicator, or by explicitly resizing the target buffer to the required size), this can be VERY safe. Of course, it is not safe in the sense that any noob can go into that VI and sabotage it, but hey, making things foolproof requires an immense effort, and that is the overhead of the Typecast function. 😁 But making things engineer-proof is absolutely impossible! 😀 Also, a memcpy() call is only functionally equivalent to a Typecast on Big Endian machines. For LabVIEW that applied "only" to Mac68K, MacPPC, SunSparc, HP-UX PA-RISC, Silicon Graphics IRIX, IBM AIX, DEC Alpha and VxWorks (not all of which were ever officially released). The only LabVIEW platforms that really use Little Endian are the ones based on i386/AMD64 and ARM CPUs, which are the only platforms still shipping. For me it really depends. I often use it in functions that deal with binary communication (if they use the Big Endian binary format; otherwise Flatten/Unflatten is always preferable). Here the additional overhead of the Typecast function is usually insignificant compared to the time the overall software has to wait for responses from the other side. Even with typical TCP communication over 1 Gb or faster fiber connections, your Read function generally sits there for several milliseconds waiting for the next data package. Shaving a few nanoseconds or even microseconds off the overall execution time is completely insignificant in this case. With serial communication or similar, it gets even more insignificant.
For shared library interfacing and data processing like image handling and similar, the situation is often different, and there I tend to always use memory copies whenever possible, unless I need specific endian handling. Then I use Flatten/Unflatten, as that makes it very convenient to use a specific endianness.

FPGA SPI communication inside a SCTL (single cycle time loop)

Rolf Kalbermatter replied to patufet_99's topic in Embedded

Take a look at Figure 10 in the datasheet. What you see there is that the positive edge of CNVST starts the SAR conversion. After a certain amount of time (t12) needed for the SAR, DOUT goes low. This indicates that the data on DOUT is ready. After a time (t3) the MSB is output (which could be a low too). You then need to sample DOUT, and after you have read it you can immediately assert SCLK. The positive edge of SCLK indicates to the ADC that you have read the data and that it can output the next data bit. Then you deassert the SCLK signal after (t4). The next data bit is available no later than (t4) after the falling edge. You can then read it and assert SCLK again, but not sooner than (t8) after the falling edge of SCLK. So the general sequence looks like this:

1) Assert CNVST and deassert SCLK.
2) Deassert CNVST no sooner than (t11) = 20 ns later.
3) Wait for DOUT to go low. This takes time, since the SAR conversion is running during this period: not 10 ns but up to t12 = 525 ns. You could wait that worst-case time, but it is safer to simply wait until DOUT goes low.
4) Wait at least 10 ns more after DOUT went low.
5) Read DOUT; this is your first bit.
6) Assert SCLK.
7) Wait at least 4.5 ns.
8) Deassert SCLK.
9) Read DOUT; this is your next bit.
10) Wait at least another 4.5 ns.
11) Go back to 6) until you have read all your bits.

The timing is only critical in the sense that you must not go faster than the times mentioned here. You can certainly wait longer if your FPGA loop timing makes this more convenient. The 4.5 ns high and low time for SCLK is a minimum, but if you don't go to the maximum sample rate (determined by the CNVST frequency), you can also clock out the data more slowly. You simply need to have enough time to clock out the data before you start the next CNVST. I would simply make those 4.5 ns waits whatever your single-cycle loop period is. That can be 5 ns or more; with the default FPGA clock of 40 MHz it would be 25 ns.
With such an SCTL running at 40 MHz (25 ns per iteration, so 50 ns per SCLK period), your maximum sample frequency for 16 bits would be about 525 + 10 + 16 * 50 ns = 1335 ns, or roughly 750 kHz.
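The numbered sequence can be sketched as sequential C code. Everything here is hypothetical: adc_pins is an invented callback interface standing in for the FPGA I/O nodes, and wait_cycle() stands for one SCTL iteration (25 ns at 40 MHz), which covers every minimum wait listed. In LabVIEW FPGA this would become a small state machine inside the SCTL rather than a blocking loop. In the clock loop the low-phase wait is placed before reading DOUT, which satisfies steps 9) and 10) while giving the bit time to settle. The simulated ADC at the end is only a test double to exercise the sequence off-target.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical pin interface; wait_cycle() = one SCTL iteration. */
typedef struct {
    void (*set_cnvst)(void *ctx, int level);
    void (*set_sclk)(void *ctx, int level);
    int  (*read_dout)(void *ctx);
    void (*wait_cycle)(void *ctx);
    void *ctx;
} adc_pins;

/* One conversion following steps 1) to 11), MSB first.
   Real code should bound the DOUT wait with a timeout. */
uint32_t adc_read(const adc_pins *p, int nbits)
{
    assert(nbits > 0 && nbits <= 32);
    p->set_sclk(p->ctx, 0);
    p->set_cnvst(p->ctx, 1);                 /* 1) rising CNVST starts the SAR */
    p->wait_cycle(p->ctx);                   /* hold >= t11 (20 ns) */
    p->set_cnvst(p->ctx, 0);                 /* 2) */
    while (p->read_dout(p->ctx))             /* 3) DOUT low = conversion done */
        p->wait_cycle(p->ctx);
    p->wait_cycle(p->ctx);                   /* 4) >= 10 ns more */
    uint32_t value = (uint32_t)p->read_dout(p->ctx);            /* 5) MSB */
    for (int i = 1; i < nbits; i++) {
        p->set_sclk(p->ctx, 1);              /* 6) rising edge: next bit */
        p->wait_cycle(p->ctx);               /* 7) SCLK high >= 4.5 ns */
        p->set_sclk(p->ctx, 0);              /* 8) */
        p->wait_cycle(p->ctx);               /* SCLK low >= 4.5 ns */
        value = (value << 1) | (uint32_t)p->read_dout(p->ctx);  /* 9) */
    }
    return value;
}

/* Tiny simulated ADC, only to exercise adc_read() off-target. */
typedef struct { uint32_t sample; int nbits, busy, ready, bit, sclk; } sim_adc;

static void sim_cnvst(void *c, int level)
{
    sim_adc *a = c;
    if (level) { a->busy = 3; a->ready = 1; a->bit = 0; }
}
static void sim_sclk(void *c, int level)
{
    sim_adc *a = c;
    if (level && !a->sclk) a->bit++;         /* rising edge shifts next bit */
    a->sclk = level;
}
static int sim_dout(void *c)
{
    sim_adc *a = c;
    if (a->busy > 0) return 1;               /* high while converting */
    if (a->ready > 0) return 0;              /* low: data ready */
    return (int)((a->sample >> (a->nbits - 1 - a->bit)) & 1u);
}
static void sim_wait(void *c)
{
    sim_adc *a = c;
    if (a->busy > 0) a->busy--;
    else if (a->ready > 0) a->ready--;
}

uint32_t sim_convert(uint32_t sample, int nbits)
{
    sim_adc a = { sample, nbits, 0, 0, 0, 0 };
    adc_pins p = { sim_cnvst, sim_sclk, sim_dout, sim_wait, &a };
    return adc_read(&p, nbits);
}
```

sim_convert(0xA5A5, 16) returns 0xA5A5: the first bit is read before any SCLK edge, so only nbits - 1 clock pulses are needed.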

FPGA SPI communication inside a SCTL (single cycle time loop)

Rolf Kalbermatter replied to patufet_99's topic in Embedded

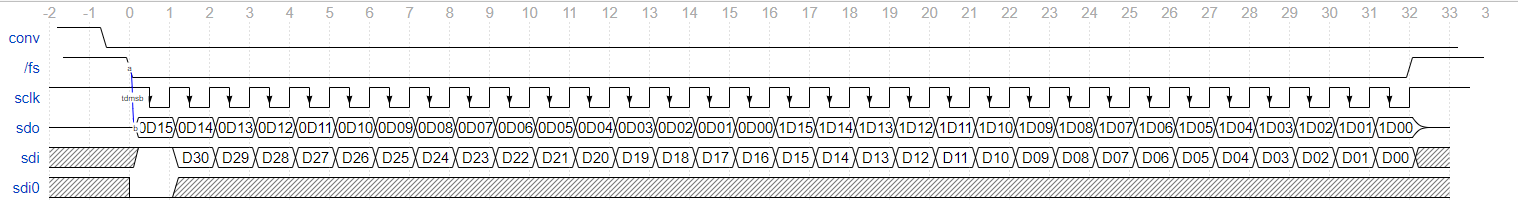

Something looks odd in that timing diagram. As you are the SPI master, you should generate the CNVST and SCLK signals, right? And you should start generating the clock signal only after the SAR conversion time, which depends on your chip and on XCLK or similar. The first rising (or falling) edge of SCLK should cause the ADC to output the first data bit, and you should then sample the SDO input in your FPGA on the opposite edge to make sure you read a steady state and not a transient. What you show is that you expect the ADC to start streaming the data on its own and then you start generating the clock, but that can't work. This is the typical timing for an ADS8528 chip. I chose to keep the CONV pin high for the entire duration of the worst-case SAR processing time, based on the XCLK that is separately provided to the ADC. But that is not important for this chip, as it starts the SAR operation on the rising edge of this signal and expects CONV to be low before /FS is activated, but doesn't care when exactly the CONV pin goes low between those two moments. The falling edge of the /FS pin (SPI chip select) starts the data cycle, and on the falling edge of SCLK, also generated by the FPGA, I sample the SDO pin. The rising edge of SCLK signals the ADC to output the next data bit. There are variations; for instance, not every chip uses the /FS signal (or it might be permanently activated). But the principle is the same: activate CONV to initiate a SAR conversion, wait for at least the worst-case time the SAR will take, and then start clocking out the data bits. As the master you must clock them out; they won't just appear magically.

TCP Listener: can it fail?

Rolf Kalbermatter replied to drjdpowell's topic in Remote Control, Monitoring and the Internet

According to https://blog.cloudflare.com/when-tcp-sockets-refuse-to-die/ it would seem that listener sockets are not supposed to linger around. Still, to be on the safe side, you should be prepared for a Create Listener right after a Close Listener to fail!

TCP Listener: can it fail?

Rolf Kalbermatter replied to drjdpowell's topic in Remote Control, Monitoring and the Internet

The Internecine Avoider only comes into play when you use the high-level TCP Listen.vi. If you directly use the Create Listener and Wait on Listener primitives, there is no Internecine Avoider unless you add it yourself. But Wait on Listener CAN return errors other than timeout errors, and that usually means something got seriously messed up with the underlying listener socket; the most prudent action is almost always to close that socket and open a new one. Except, of course, that when you close a listener socket it doesn't just go out of existence in a blink. It usually stays present in the underlying socket library for a certain timeout period to catch potential late-arriving connection requests and respond to them with an RST response, to let the remote side know that it is not valid anymore. Together with the SO_EXCLUSIVEADDRUSE flag, this makes new requests to create a socket on the same port fail with a corresponding error, since the port is technically still in use by that half-dead socket. That socket eventually gets deleted, and then a new Create Listener call on that port will succeed, unless someone else managed to grab the port first. And even if you stay entirely within the same system and no actual network card packet driver is involved, the socket library can still reset itself, for instance when a system service or the user changes the network configuration.
But if your code doesn't do something like this:

```c
do {
    err = CreateListener(&listenRefnum);
    if (!err) {
        do {
            err = WaitOnListener(listenRefnum, waitInterval, &connectionRefnum);
            if (!err) {
                CreateNewConnectionHandler(connectionRefnum);
            } else if (err != timeout) {
                // if we have any other error than timeout, leave the loop,
                // which will close the listener and go back to create a new one
                LogError(err);
                break;
            }
        } while (!quit);
        Close(listenRefnum);
    } else {
        LogError(err);
        Delay(someWaitTime);
    }
} while (!quit);
```

it will keep trying to listen on a socket that may long since have gone into an error condition.

TCP Listener: can it fail?

Rolf Kalbermatter replied to drjdpowell's topic in Remote Control, Monitoring and the Internet

Yes, it takes some time after closing the refnum until the socket has gone through the entire RST, SYN, FIN handshaking cycle with its associated timeouts. And that is true even if nobody has been connecting to the listener at that point to request a new connection. So with the SO_EXCLUSIVEADDRUSE flag you can end up with Create Listener failing multiple times to create a new socket on the specified port. The alternative of not using exclusive mode is, however, in my opinion not really a good option. And the Internecine Avoider is actually a potential culprit in the problem the OP observed. It doesn't really close the socket but rather tries to reuse it. Its internal check whether the refnum is valid does not really check that the socket is not in an error state, only that LabVIEW still has a valid refnum; the socket this refnum refers to may still be in an unrecoverable error state and keep failing. To recover from an (admittedly rarely occurring) socket library error on the listener socket, the socket needs to be closed. And that means that a socket opened with SO_EXCLUSIVEADDRUSE may actually be blocked from being reopened for up to a minute or more. But trying to reuse the failed socket is even worse, as that will never recover. If Wait on Listener fails with any error other than a timeout error, you should close the listener refnum and try to reopen it until that succeeds or the user exits the application/operation.

TCP Listener: can it fail?

Rolf Kalbermatter replied to drjdpowell's topic in Remote Control, Monitoring and the Internet

What I said about the Internecine Avoider is only true if you use the high-level TCP Listen.vi. I usually use the low-level primitives Create Listener and Wait on Listener instead (and always close the refnum if I detect any error other than timeout). SO_EXCLUSIVEADDRUSE is in principle a good thing; you usually do not want someone else to be able to capture your port number.

TCP Listener: can it fail?

Rolf Kalbermatter replied to drjdpowell's topic in Remote Control, Monitoring and the Internet

If the socket library or one of its TCP/IP provider subcomponents resets itself, for whatever reason, a listener can definitely report an error. This can happen because the library detected an unrecoverable error (TCP/IP is considered such an essential service on modern platforms that a simple crash is absolutely not acceptable whenever it can somehow be avoided) or even when you or some system component changes the TCP/IP configuration. My TCP/IP listeners are actually a loop that waits on incoming connections as long as the wait returns only a timeout error. Any other error closes the listener refnum and loops back to Create Listener before going into the Wait on Listener state again. Wait on Listener doesn't return an error cluster just to report that there is no new connection yet (timeout error 56); it can also return other errors from the socket library, even though that is rare. In case of any error other than timeout, I immediately close the refnum, do a short delay so the loop doesn't monopolize the thread if the socket library has a more persistent problem than a temporary hiccup, and then go back to the Create Listener state until that succeeds. It's a fairly simple state machine but essential for continuous TCP/IP operation. Technical details: Wait on Listener basically does a select() (or possibly poll()) on the underlying listener socket, and this is the function that can fail if the socket library hiccups.
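The listener state machine described above can be sketched with POSIX sockets. This is an illustration, not what LabVIEW does internally: create_listener(), wait_on_listener(), serve() and handle_connection() are hypothetical names mirroring the LabVIEW primitives, poll() stands in for the select()/poll() mentioned above, and the 100 ms and 1 s delays are arbitrary. The exclusive port binding discussed in the thread is the Windows socket option SO_EXCLUSIVEADDRUSE and is not shown.

```c
#define _POSIX_C_SOURCE 200809L
#include <arpa/inet.h>
#include <errno.h>
#include <fcntl.h>
#include <netinet/in.h>
#include <poll.h>
#include <signal.h>
#include <stdio.h>
#include <string.h>
#include <sys/socket.h>
#include <time.h>
#include <unistd.h>

/* Hypothetical handler; a real server would pass the socket to its own task. */
static void handle_connection(int fd) { close(fd); }

/* "Create Listener": bind and listen on port (0 = any free port). */
int create_listener(unsigned short port)
{
    int fd = socket(AF_INET, SOCK_STREAM, 0);
    if (fd < 0) return -1;
    struct sockaddr_in addr;
    memset(&addr, 0, sizeof addr);
    addr.sin_family = AF_INET;
    addr.sin_addr.s_addr = htonl(INADDR_ANY);
    addr.sin_port = htons(port);
    if (bind(fd, (struct sockaddr *)&addr, sizeof addr) < 0 || listen(fd, 16) < 0) {
        close(fd);
        return -1;
    }
    /* non-blocking, so accept() can't hang if a peer aborts after poll() */
    int flags = fcntl(fd, F_GETFL);
    if (flags < 0 || fcntl(fd, F_SETFL, flags | O_NONBLOCK) < 0) {
        close(fd);
        return -1;
    }
    return fd;
}

/* "Wait on Listener": 1 = connection in *conn, 0 = timeout, -1 = listener error. */
int wait_on_listener(int lfd, int timeout_ms, int *conn)
{
    struct pollfd pfd = { .fd = lfd, .events = POLLIN, .revents = 0 };
    int r = poll(&pfd, 1, timeout_ms);
    if (r == 0 || (r < 0 && errno == EINTR)) return 0;
    if (r < 0 || (pfd.revents & (POLLERR | POLLNVAL))) return -1;
    int c = accept(lfd, NULL, NULL);
    if (c >= 0) { *conn = c; return 1; }
    /* peer gave up between poll() and accept(): not a listener failure */
    if (errno == EAGAIN || errno == EWOULDBLOCK || errno == ECONNABORTED || errno == EINTR)
        return 0;
    return -1;
}

static void delay_ms(long ms)
{
    struct timespec ts = { ms / 1000, (ms % 1000) * 1000000L };
    nanosleep(&ts, NULL);
}

/* Only a timeout keeps waiting on the same socket; any other error closes
   it and goes back to create_listener(). */
void serve(unsigned short port, volatile sig_atomic_t *quit)
{
    while (!*quit) {
        int lfd = create_listener(port);
        if (lfd < 0) {                       /* port may still be held by the old socket */
            perror("create_listener");
            delay_ms(1000);
            continue;
        }
        int err = 0;
        while (!*quit && !err) {
            int conn;
            int r = wait_on_listener(lfd, 100, &conn);
            if (r == 1) handle_connection(conn);
            else if (r < 0) { perror("wait_on_listener"); err = 1; }
        }
        close(lfd);
        if (err) delay_ms(100);              /* don't spin on a persistent fault */
    }
}
```

A caller would run serve(port, &quit) in its own thread and set quit from elsewhere to stop it.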

Not the VI itself, but if you have enabled the option to separate compiled code from the VI, the copy is considered different from the original VI as far as the compile cache is concerned, since it is at a different path. And since there is no compile cache entry for that VI yet, LabVIEW will recompile it.

-

Basically all OpenG libraries before version 4.0 were LGPL licensed. With 4.0 the license for the VI part was changed to BSD-3. The libraries that use a shared library/DLL have different licenses for the shared library and the VIs. The shared library remained LGPL, which should not be a problem as long as you provide a link to the OpenG project. For libraries of version 4.0 and higher this is the git link mentioned by Jim; for older libraries it is the SourceForge link.

-

As mentioned, the library was a quick hack of another, earlier library to add the bitwise operators. And it was likely a bit too quick a hack, messing up a few other things in the process. As you don't use bitwise operators, I would recommend that you look at the original library, to which a link is included in that post.


-

NI Linux RT Scheduler vs PharLap or Win10

Rolf Kalbermatter replied to Petr Mazůrek's topic in Linux

No flame from me for this. Under your constraint (only ever write from one place and never anywhere else) it is a valid use case. However, beware of doing that for huge data. This will not just incur memory overhead but also cost performance, as the ENTIRE global is copied every time, even if you then use Index Array right after to read only one element of the huge array.

Nope! You have to do it as in the lower picture. And while the order "should" not matter (after all, the intent of reference counting is to prevent a client from disposing an object before all other clients have closed it too), I always try to close the sub-objects first and then their owner (just as you did). There are assemblies, and especially ActiveX automation servers, out there that don't do reference counting properly and may spuriously crash if you don't close things in the right order.
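Why the order "should" not matter can be illustrated with a minimal C sketch of reference counting. This is generic reference counting with hypothetical names (Obj, obj_new(), obj_retain(), obj_release()), not the .NET or COM API: an owner holds its own reference to its sub-object, so the client can release the two in either order, and as long as any reference to the owner remains, the sub-object stays alive too.

```c
#include <assert.h>
#include <stdlib.h>

/* Minimal reference-counted object; 'child' models a sub-object that the
   owner holds its own reference to. */
typedef struct Obj {
    int refs;
    struct Obj *child;
} Obj;

static int live_objects = 0;     /* objects not yet freed, for demonstration */

Obj *obj_new(Obj *child)
{
    Obj *o = malloc(sizeof *o);
    if (!o) return NULL;
    o->refs = 1;                 /* the caller's reference */
    o->child = child;
    if (child) child->refs++;    /* the owner keeps the child alive */
    live_objects++;
    return o;
}

Obj *obj_retain(Obj *o) { o->refs++; return o; }

/* Drop one reference; when the count hits zero, free the object and
   release the reference it held on its child, and so on down the chain. */
void obj_release(Obj *o)
{
    while (o && --o->refs == 0) {
        Obj *child = o->child;
        free(o);
        live_objects--;
        o = child;
    }
}

int obj_live_count(void) { return live_objects; }
```

Releasing the sub-object first leaves both objects alive until the owner is released, and a single forgotten owner reference keeps the whole chain in memory, which is what a broken implementation gets wrong when it crashes on the "wrong" order.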

-

I can't work on that right now, but I plan to do so in the coming months. The story behind it is that I did a little more than just make it 64-bit:

- The file I/O operations were all rewritten to be part of the library itself rather than relying on LabVIEW file I/O. While LabVIEW 8.0 and newer support reading and writing files bigger than 2 GB, LabVIEW still has the awful habit of internally using old OS file I/O functions that are limited to characters in file names that are part of your current locale, and that are also normally limited to path names of 260 characters. If your drive is formatted as FAT32, that is all the drive can do for you anyhow, but except for USB thumb drives you would be hard pressed to find any FAT-formatted drives nowadays. So having these limitations in the library feels very bad. These two issues are especially a problem on Windows. Mac is slightly less problematic, and Linux solved it all long ago, internally in the kernel and the surrounding system libraries.
- Modern ZIP files support things like symbolic links, and I wanted a way to support them. For Linux and Mac that is a piece of cake. For Windows I may not be able to support that seamlessly for now, as creating symlinks under Windows is a privileged action, so the user either has to be elevated or you have to enable an obscure Developer Mode setting in Windows that allows all users to create symlinks.

So in summary there was a lot of work to be done, most of it actually for Windows. Most of it is done, but testing it all is a very frustrating job. The non-Windows targets will then also take some more time for additional testing and for making the things that were modified for Windows compile properly again. So yes, it's still on my to-do list and I plan to work on it again, but right now another project requires my attention.
Because of the significant changes in the underlying shared library and the internal organization of the VIs, it will almost certainly be version 5.0. The official library API (those nice VIs with a green gift box in their icon) should remain compatible, but if you want to make use of the new path feature to fully support long path names with full character support, you may have to switch to the new API with the library-specific path type. If you use the high-level library functions, internal long path names will be OK; you just won't be able to access them with the normal LabVIEW file functions if they contain non-local ANSI characters or are too long! It's the best I could come up with without the ability to change the LabVIEW source code itself to add that feature to LabVIEW's internal Path Manager. 😀 The corresponding File Utilities Manager functions in the library will also be available to the user in a separate palette.

-

Let's suppose you create a .Net Image object. That image can potentially use many megabytes of memory. Any reference you obtain for that image refers to the same image, of course, so references don't multiply the memory for your image, but LabVIEW needs to create a unique refnum object to hold on to each reference, and that uses some memory, a few dozen bytes at most. However, every such refnum holds a reference to the object, and an object is only marked for garbage collection (for .Net) or self-destructs (for ActiveX) once every single reference to it has been closed. So leaving a LabVIEW refnum to such an object open will keep that object in memory until LabVIEW itself terminates the VI hierarchy in which that refnum was created/obtained/opened. LabVIEW registers every single refnum with the top-level VI in whose hierarchy the refnum was created, and when that top-level VI goes idle (terminates execution), the refnum is closed and the underlying reference is disposed. To make matters even worse, if such an object obtained references to one or more other objects, those objects will remain in memory too until the object holding those references is closed, and that can go over many hierarchy levels, so a single lower-level object can potentially keep your entire object hierarchy in memory. Whether and how an object does that is specific to that object and seldom properly disclosed in the documentation, so diligently closing every single refnum as soon as possible is the best way to keep this manageable.

"Yes, aside from real UI programming I consider use of locals and globals a real sin!"

"Oh, really? You never use global or local variables? You only use FGVs?"

In fact the only globals I allow in my programs nowadays are booleans to control the shutdown of an entire system, or "constants" that are initialized once at startup, from a configuration file for instance, and NEVER after.
The rest is handled with tasks (similar to actors), and data is generally transferred between them through messages (which can go over queues, notifiers, or even network connections). Locals are often needed when programming UI components, as you may have to update or read a control or indicator outside of its specific event case, but replacing dataflow with access to locals in pure functional VIs is a sure way to get a harsh remark in any review I do. And while I was a strong supporter of FGVs in the past, I do not recommend them anymore. They are better than globals if properly implemented (which means not just a get and set method, which is just as bad as a global, but NEVER EVER any read-modify-write cycle outside of the FGV). But they get awkward once you do not have just one single set of data to manage but want to handle an array of such sets, which is quite often the case. Once you get there, you want a more database-like method to store them rather than trying to cram them into an FGV.
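The difference between a get/set FGV and a properly implemented one can be sketched as a C analogy. The names are hypothetical, and the mutex stands in for the serialization a non-reentrant VI provides in LabVIEW: the data lives only inside the function, and each read-modify-write is a single action executed under the lock, so no caller can interleave a read with a later write.

```c
#include <assert.h>
#include <pthread.h>

typedef enum { FGV_READ, FGV_ADD, FGV_RESET } fgv_action;

/* Action-engine style counter: every modification happens inside,
   never as get() followed by set() in the caller. Returns the new value. */
long fgv_counter(fgv_action action, long arg)
{
    static pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER;
    static long value = 0;               /* state only this function can touch */
    long result;
    pthread_mutex_lock(&lock);
    switch (action) {
    case FGV_ADD:   value += arg; break; /* read-modify-write in one step */
    case FGV_RESET: value = arg;  break;
    case FGV_READ:  break;
    }
    result = value;
    pthread_mutex_unlock(&lock);
    return result;
}
```

With a plain get/set pair, two callers doing set(get() + 1) concurrently can lose an update; an FGV_ADD call cannot, because the whole cycle runs inside one serialized call.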

-

The problem in this specific case is not about memory, although a refnum uses more than just a pointer, but not much more (the underlying object may, however, use tons of memory!). The problem is rather that it is very easy to lose track of which local (or global) is what, where it is initialized, and where it needs to be deallocated. Yes, aside from real UI programming I consider use of locals and globals a real sin!