Everything posted by bbean

-

Futures - An alternative to synchronous messaging

bbean replied to Daklu's topic in Object-Oriented Programming

Nice examples in FPGA. WRT OOP LabVIEW vs Old School (OS) LabVIEW, I think both approaches are lacking in one way or another. I think Actor/Messenger frameworks solve some of the problems of OS LabVIEW, but I agree somewhat with ShaunR that they muddy the debugging waters significantly and strip away some of the original benefits of WYSIWYG OS LabVIEW in the name of "pure" computer science principles. Still waiting for the IDE to catch up with VR glasses so I can see dynamically launched VIs and message paths in a 3rd dimension during debugging.

If you look to our friends in the web and DB world, similar struggles are happening. For instance, in theory you should normalize relational databases to the 6th normal form, but not many people do. In fact, people got sick and tired of refactoring relational databases in general and abandoned them completely in many situations.

Likely in LV 2017 it won't be a red herring anymore with "type enabled structures". So in the future you may be able to have DBLs in FPGA without OOP and without breaking the code. Pure speculation on my part though.

Just curious why you used a SEQ for a "semaphore" vs an actual semaphore. BTW, I really like your version of the Modbus library.

-

What if you have more than one comm port / instrument? Are you going to make an action engine for each com port/instrument?

-

I do the same thing as ensegre. I gave up on using VISA lock / unlock because I keep forgetting the nuanced use cases like the one porter has shown (I'm getting old and my memory is going), so it's easier for me to just use a semaphore and be done with it.
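For readers who want to see the semaphore idea in text form, here is a minimal Python sketch (LabVIEW itself is graphical, so this is only an analogue). `SharedPort` is a hypothetical stand-in for a serial/VISA session, not a real driver API, and `query` is an illustrative name. The point is that a write followed by its read is wrapped in one semaphore acquire, so transactions from different callers cannot interleave.

```python
import threading


class SharedPort:
    """Hypothetical stand-in for a serial/VISA session (not a real driver API)."""

    def __init__(self):
        self.log = []  # records the order of operations on the port

    def write(self, data):
        self.log.append(("write", data))

    def read(self):
        self.log.append(("read", None))
        return "OK"


port = SharedPort()
port_semaphore = threading.Semaphore(1)  # binary semaphore: one caller at a time


def query(command):
    """Send a command and read the reply as one uninterrupted transaction."""
    with port_semaphore:
        port.write(command)
        return port.read()


def worker(name, count):
    for i in range(count):
        query(f"{name}:{i}")


# Three callers share the same port, as three loops might share one instrument.
threads = [threading.Thread(target=worker, args=(n, 50)) for n in ("A", "B", "C")]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

With more than one port or instrument, the same pattern would presumably use one semaphore per port (for example, a dictionary keyed by port name) rather than one global lock.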

-

Python client to unflatten received string from TCP Labview server

bbean replied to mhy's topic in LabVIEW General

Or better yet, a Type Enabled Structure.

Is the Variant Tree indicator available in the Dev environment? Not sure I remember correctly, but I thought after looking into using it in a program I found the primitive only ran if it was a probe.

-

CRIO-style architecture for Raspberry Pi

bbean replied to dmurray's topic in LabVIEW Community Edition

I know the title of your post is "CRIO-style architecture for Raspberry Pi", and from briefly looking over the architecture I think it looks fine. But have you or anyone else tried this architecture on the RPi: Front Panel Publisher? What are the limitations of the RPi LabVIEW runtime? When I get some time I'd like to investigate more.

Sorry, I'm a little tied up right now with work stuff. Maybe we could chat offline regarding what you want in detail, so I don't accidentally go off on a tangent when/if I do actually work on it. I think what was stopping me in my tracks before was figuring out how to determine the "name" to put on the output control based on an upstream connection (input wire).

-

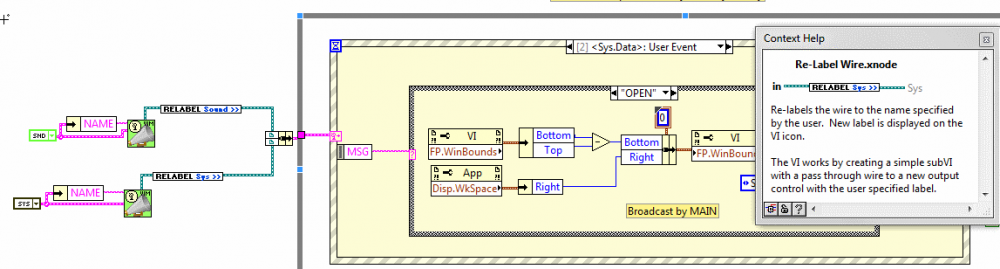

lordexod, I tried your new Relabelizer popup plugin and it's a nice solution. It seems to have a small bug: the terminals may not get wired up properly depending on where you right-click to execute the script. See: Relabilizer Plugin Bug. I still like my solution better because it looks better on the diagram. Yours is more elegant because of its simplicity, though.

-

Bean, thanks for the response. I clicked your link and then went down the black hole of MS again trying to find a reseller for the license. A Google search turned up this possible link to purchase: Microsoft MSDN Platforms License & Software Assurance 1. Is that a similar license to what you are using? I may be hijacking the thread at this point.

-

To be a little more helpful, I googled "speed up gifs" and found this: Ezgif speed up site. I sped up the above gif by 200% and inserted it into the attached VI, then inserted the original gif saved from the site at 100% (for some reason the original gif wouldn't drag/drop into the VI, but ones run through the website would). Anyway, it seems the speed-up works on my machine/VI. staythirsty.vi

-

-

I don't know what the definition of cool is, but here are some posts that I've found interesting / very instructive: VI Macros (includes comments from someone named jeffK); HAL Demo / Named Events (an offshoot of this discussion by ShaunR); Turn your front panel into an interactive HTML5 site; LVTN Messenger Library. I am probably leaving out a bunch of others....

I think another potential cause of the downturn in use of lavag is the move of advanced users to their own company/personal blogs to present / discuss new ideas. The motivation, I presume, is to drive internet traffic / SEO / twitter / instagram / facebook / myspace (was once cool) links, but it results in the scattering of the community.

I don't know about everyone else, but usually when I disappear from lavag for a while it means I'm too busy with "real" (billable) work. Maybe it's a good sign that lavag use is going down.

-

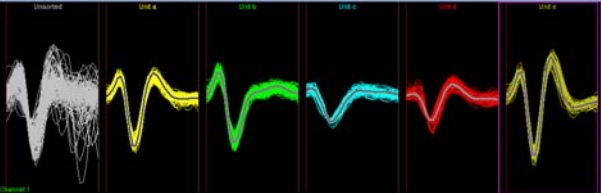

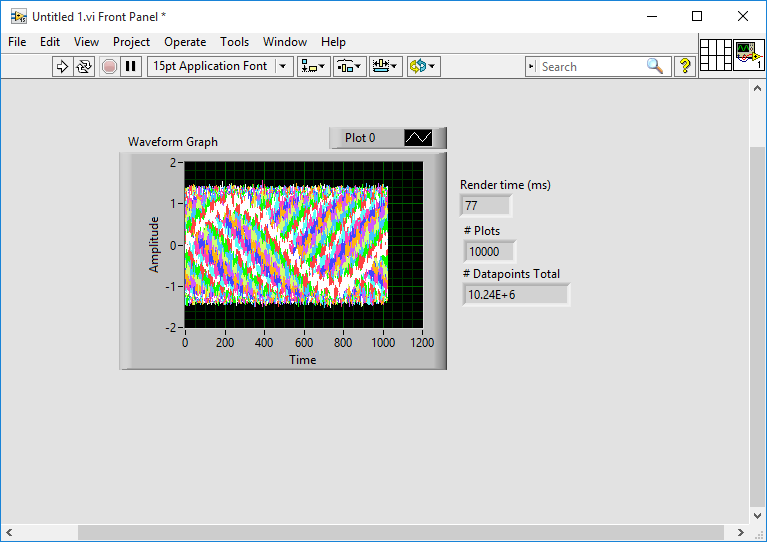

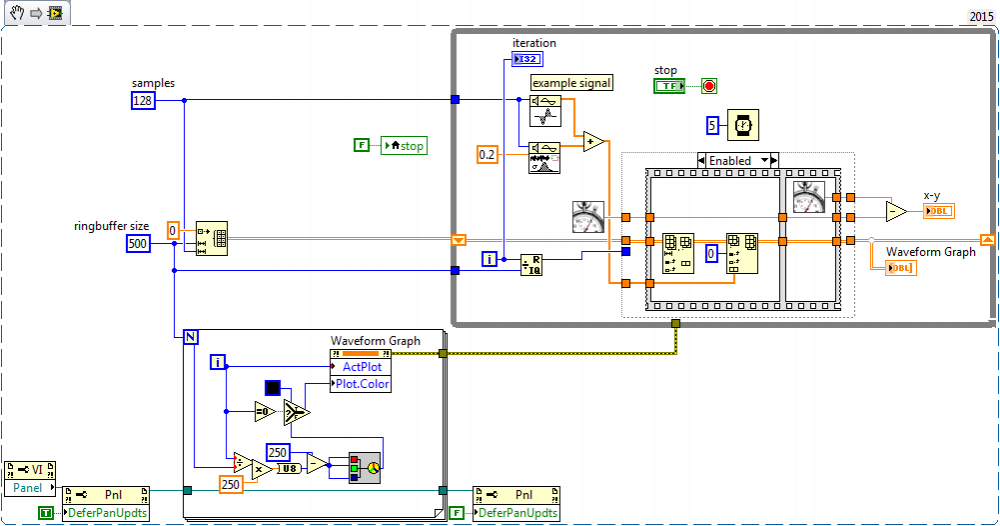

How many points are in each plot of the graphs you showed in your last post? 8192 or 30-50? Is the template trace pre-calculated before starting, or is it an average of some set of the white traces (exceeding some threshold)? I guess I'm asking if you have multiple different thresholds for each color shown in the graphs.

-

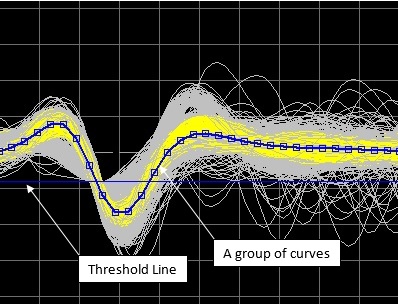

I'm a little confused. In your first post you show code and an example where you do a peak detection and then pop off traces from the data around those points (samples before and after). Is that all you need to do? Or do you need to do additional post-processing on the samples before and after the peaks (analyze each set of data around the peaks)? For example, in the post with these screenshots you show another threshold line. Is there a second post-processing step (on the traces that were popped off of the original signal) that you want to do "online" with another threshold? Also, do you want to be able to change the samples before and after in real time as you are collecting data, or can it be fixed for each session?

-

Changing the colors of individual plots occurs in the UI thread and is "expensive". The secondary XY graph avoids this by making all plots the same color...in your case yellow?

Some other things to think about:

If you change your threshold value, do you want to remove the old yellow traces from the display if they no longer meet the threshold criteria? It gets more complicated that way.

How long does the DAQ read take? You should explore pipelining to help speed things up as well. In the link, A would be your DAQ and B would be your update of the buffer and display.

You make an exact copy of the graph and then use the Tools palette paintbrush, switch the color to transparent (T), start erasing things on the graph that aren't needed (everything except the signal plots for the most part), and then move that graph over the top of the other graph. Some ppl will call this a "kludge", and you have to make sure that if the x or y scale changes on the visible graph, it also changes on the transparent graph. So programming challenges arise, for instance, if you allow the user to autoscale.
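To illustrate the pipelining suggestion above (A = DAQ read, B = buffer and display update), here is a rough Python analogue that passes data between two threads through a queue instead of using LabVIEW dataflow. The function names and the fake data are assumptions for illustration only; no real DAQ API is being called.

```python
import queue
import threading


def acquire(n_blocks, out_q):
    """Stage A: stand-in for the DAQ read (hypothetical data, no real hardware)."""
    for i in range(n_blocks):
        block = [i] * 4          # pretend this is one block of samples
        out_q.put(block)         # hand the block to stage B and keep acquiring
    out_q.put(None)              # sentinel: acquisition finished


def display(in_q, shown):
    """Stage B: stand-in for the buffer + graph update."""
    while True:
        block = in_q.get()
        if block is None:
            break
        shown.append(sum(block))  # pretend this is the expensive display work


blocks = queue.Queue(maxsize=2)   # small bound so stage A cannot run far ahead
shown = []

stage_a = threading.Thread(target=acquire, args=(5, blocks))
stage_b = threading.Thread(target=display, args=(blocks, shown))
stage_a.start()
stage_b.start()
stage_a.join()
stage_b.join()
```

While stage B is working on block N, stage A is already acquiring block N+1, so the loop period tends toward the slower of the two stages rather than their sum.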

-

The last snippet from ensegre is not working for me either. Point taken re 8192 points. On my laptop it takes about 20 ms per plot update. How long does the DAQ take? Maybe pipelining would help here.

Option 2: decimate the data for display. 8192 points or more exceeds the pixel width of the graph indicator area (unless he needs a super big XY graph and has a super monitor). For display purposes he could decimate the data with min/max and only display a number of points equal to the pixel width of the graph indicator, then store the actual data in a separate buffer.
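As a minimal sketch of the min/max decimation idea (in Python, since LabVIEW code cannot be shown as text), the function below splits the trace into one bucket per pixel column and keeps only the minimum and maximum of each bucket. `minmax_decimate` and its parameters are illustrative names, and keeping up to two points per column (rather than exactly one) is an assumption made so that narrow spikes stay visible.

```python
def minmax_decimate(samples, pixel_width):
    """Reduce a trace to at most 2 * pixel_width points for display.

    Each pixel column gets one bucket of samples; only the bucket's min and
    max are kept, in their original order, so peaks are not lost.
    """
    if pixel_width <= 0:
        raise ValueError("pixel_width must be positive")
    n = len(samples)
    if n <= 2 * pixel_width:
        return list(samples)  # already small enough to draw as-is

    out = []
    for p in range(pixel_width):
        start = p * n // pixel_width
        stop = (p + 1) * n // pixel_width
        bucket = samples[start:stop]
        lo = min(range(len(bucket)), key=bucket.__getitem__)
        hi = max(range(len(bucket)), key=bucket.__getitem__)
        for i in sorted({lo, hi}):  # one point if the bucket is flat
            out.append(bucket[i])
    return out


# Usage: an 8192-point trace with a single narrow spike, drawn on a 500 px graph.
trace = [0.0] * 8192
trace[5000] = 10.0
display_trace = minmax_decimate(trace, 500)
```

The full-resolution `trace` would still be kept in a separate buffer for analysis; only `display_trace` goes to the graph.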

-

Ha! I knew someone would be able to make it more efficient. Is the visible property faster than defer panel updates? Is there any way to do the array operation in place? Or is there a "rotate 2D array" primitive hidden somewhere? Also, I'm guessing a good portion of the execution time is redrawing all the plots when they shift 1 plot location on each iteration...but maybe I'm wrong.

-

Nice. Building off of ensegre's solution, here is another option for a ring buffer (below). I think it's an order of magnitude slower, but it allows older plots to fade away. I think you will have to create two ring buffers and two graphs, one for displaying real-time data and another for displaying your "selected plots", then make one graph transparent and overlay it on the other.
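The original ring buffer was a LabVIEW snippet that does not survive as text, so the following Python sketch only illustrates the general idea of a fixed-size buffer of plots where older plots fade. `PlotRing`, `with_fade`, and the linear fade rule are all assumptions for illustration, not a transcription of the posted VI.

```python
from collections import deque


class PlotRing:
    """Fixed-size ring buffer of traces; the oldest trace is dropped when full."""

    def __init__(self, capacity):
        self.plots = deque(maxlen=capacity)

    def push(self, trace):
        self.plots.append(trace)  # deque discards the oldest entry when full

    def with_fade(self):
        """Return (trace, alpha) pairs, oldest first.

        Alpha rises linearly with age rank, so the newest trace is fully
        opaque (1.0) and older traces are progressively more transparent.
        """
        n = len(self.plots)
        return [(trace, (i + 1) / n) for i, trace in enumerate(self.plots)]


# Usage: keep the last 3 traces; a 4th push evicts the first one.
ring = PlotRing(3)
for k in range(4):
    ring.push([k])
faded = ring.with_fade()
```

Two such rings, one for real-time data and one for selected plots, would correspond to the two overlaid graphs described above.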

-

help for 3D Picture and markers

bbean replied to Alberto Bottillo's topic in Application Design & Architecture

Sorry, I don't have too much time to help here, but try the example code here for some guidance maybe: C:\Program Files (x86)\National Instruments\LabVIEW 2014\examples\Graphics and Sound\3D Picture Control\Using Meshes.vi

-

Some self promotion here, but have you tried or seen this:

-

Make sure all your power options are set so that the computer doesn't turn off when not being used (no hibernate, no sleep, no USB suspend, etc.).

-

Maybe this can help a little until 2016...Re-label Event Wire Xnode

-

I put together a simple xnode to relabel event registration reference wires that feed into dynamic events of event structures. With the increasing use of event-based messaging systems, I found myself manually changing the name of event registration references to clarify the event name when multiple event registration references were used in an object. I did this by manually creating a cluster constant on the build cluster before the dynamic registration terminal on the event structure and renaming each registration reference. Being a lazy programmer, I found this tedious after a few times, so I decided to attempt to create an Xnode to accomplish the same thing faster.

This video shows the "problem" and the potential solution using the xnode: Re-Label Xnode - Event Registration

Here's another example showing another use case with ShaunR's VIM HAL Demo code: Re-Label Use Case ShaunR HAL Demo

This may have been done before, or there may be an easier way to do this, but I wanted to throw it out here to see if there's any interest and to see if people will try it out and give feedback.

I've found it works best using quick drop, both for initial use (highlight wire, CTRL-Space, type re-label, CTRL-I, type new name in dialog) and for replacing or renaming an existing instance on the diagram (highlight existing xnode, CTRL-Space, type re-label, CTRL-P, type revised name in dialog). You can also use it directly from the palette, but I found quick drop much faster and have also seen a couple of crashes when replacing through the palette.

The Double Click ability is also a work in progress. Its purpose is to allow you to quickly rename the relabel with the same dialog box, but when it executes it breaks the wire on the output connection. You can still re-wire it to the event structure, but you will have to open the Event Structure Edit Events menu to get the event to "Re-link", which is something I'm trying to avoid.

The Xnode generated code is simply a pass-through wire with the output terminal renamed to the label of your choice. This seems to update attached event structures.

- B

sobosoft_llc_lib_diagram_tools-1.0.4.1.vip

-

Thanks for the heads up. I guess I'll have to sign up for the beta testing to get a peek.