Everything posted by bbean

-

Serial Communication Question, Please

bbean replied to jmltinc's topic in Remote Control, Monitoring and the Internet

Or there's an issue at the hardware level, e.g. the TX and RX pins are swapped.

-

You are correct... I worded my thought incorrectly. My thought was this: the Keysight code was using synchronous VISA calls, and both the Keysight and Pendulum code were most likely running in the UI thread, because their callers were in the UI thread and the execution system was initially set to "same as caller". So the VISA Write / Read calls to the powered-off instrument were probably blocking the other instrument, which had a valid connection (by my understanding there is typically only one UI thread).

-

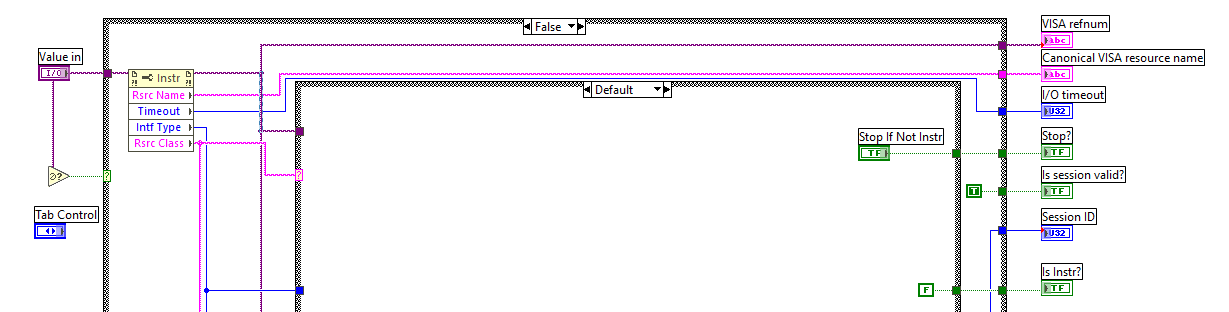

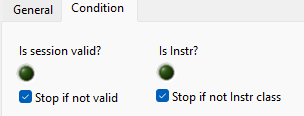

Thanks for the detailed explanation. I think the "Is Not a Number/Path/Refnum" function is what I'm looking for, based on what you mentioned and what the probe has under the hood. I've never really needed to use it with VISA until now, and I'm still learning things even after many years of coding LabVIEW.

What brought up the question was a situation where two separate "instrument drivers", one for a Keysight 973 multiplexer and the other for a Pendulum CNT-91 frequency counter, were being called in separate loops. The loop for the Keysight 973 was set up to sample its channel list periodically (every 10 s); the loop for the Pendulum was set up in a similar fashion to sample every 500 ms. At some point the end user disconnected the Keysight 973 but wanted to continue measuring with the Pendulum CNT-91. With the Keysight disconnected, the VISA calls in its loop were locking up or reserving the USB bus and interfering with the measurements in the Pendulum loop.

Diving into the weeds of the code, I noticed:

1. The Keysight 973 driver (actually an older version using Agilent 34970 code) had synchronous VISA Write and Read calls. So I thought those must be running in the UI thread and blocking the execution of the Pendulum loop when the instrument was disconnected. After switching them to asynchronous VISA calls, the problem persisted.
2. Both VIs that sampled the data had their Execution VI property set to "same as caller", and since they were both called from top-level VIs that had property nodes, event structures, etc., they were probably both running in the UI thread. I switched the Keysight to the "data acquisition" thread and the Pendulum to the "instrument driver" thread. The problem still persisted.
3. In the Keysight loop I had made use of the VISA User Data property to store and track the number of channels being queried. This VI was taking ~2 s to execute when the Keysight was not connected. This was a surprise to me, because I thought it was just a passive property node that would return its value (quickly and without error) even if the instrument was disconnected. Apparently it's doing much more under the hood, possibly even trying to reconnect to the Keysight instrument (a VISA Open under the hood?). After temporarily disabling this code and all instrument queries in the 10 s sampling loop, the interference with (or blocking of) the Pendulum went away.

Lessons learned:

1. If an instrument is physically disconnected, make sure you disable any queries to it if the VISA session isn't valid or didn't connect properly in your initialize state. I'm still not sure why a VISA query timeout or User Data call would block a separate VISA loop with a different address from executing if both are properly set up to use separate threads (maybe I'm still not doing that correctly). I guess I could use NI I/O Trace to get more details about what's going on under the hood when the User Data property is read. What's also weird to me is that the VISA timeout for that session was 10 seconds (not 2 seconds), yet every call to the User Data property took almost exactly 2 s.
2. Avoid using the VISA User Data property for passive storage, because it's not actually passive.
3. The "Is Not a Number/Path/Refnum" function works with VISA sessions. Even though I now disable sampling if the Keysight initialize state fails, I'm also using "Is Not a Number/Path/Refnum" as a paranoid check before executing the sampling code.

Looking at the VISA probe VI that Darren linked to, it appears the code also checks the VISA property "Rsrc Class" to see whether the VISA session "Is Instr" if "Is Not a Number/Path/Refnum" returns False. Does anyone know if this is a passive call under the hood? Thanks for everyone's help.
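For readers who think in text code, the first lesson can be sketched in Python. This is only an illustration of the guard pattern, not of VISA itself: the `Instrument` class, its `session_valid` flag, and `run_demo` are hypothetical stand-ins, and the sketch does not reproduce the bus-level blocking described above.

```python
import threading
import time


class Instrument:
    """Hypothetical stand-in for an instrument session (not a real VISA API)."""

    def __init__(self, name, connected):
        self.name = name
        self.connected = connected
        self.session_valid = False

    def initialize(self):
        # Record once whether the session opened; the sampling loop only reads this flag.
        self.session_valid = self.connected
        return self.session_valid

    def query(self):
        if not self.connected:
            raise TimeoutError(f"{self.name}: no response")
        return 1.0


def sample_loop(instr, period_s, stop, readings):
    """Poll instr every period_s seconds, skipping all I/O if the session is invalid."""
    while not stop.is_set():
        if instr.session_valid:
            readings.append(instr.query())
        stop.wait(period_s)


def run_demo(duration_s=0.3):
    """Run one loop for a disconnected instrument and one for a connected one."""
    mux = Instrument("mux", connected=False)
    counter = Instrument("counter", connected=True)
    for instr in (mux, counter):
        instr.initialize()

    stop = threading.Event()
    results = {"mux": [], "counter": []}
    threads = [
        threading.Thread(target=sample_loop, args=(mux, 0.1, stop, results["mux"])),
        threading.Thread(target=sample_loop, args=(counter, 0.05, stop, results["counter"])),
    ]
    for thread in threads:
        thread.start()
    time.sleep(duration_s)
    stop.set()
    for thread in threads:
        thread.join()
    return results
```

The disconnected instrument's loop never touches the bus, so it cannot time out or hold anything the other loop needs.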

-

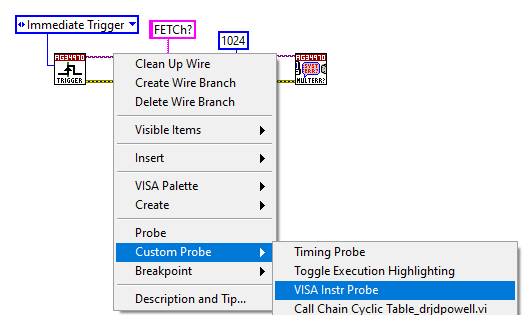

Does anyone know where the source code for the VISA Instr Probe (custom probe) is located? I'm interested in how the "Is session valid?" and "Is Instr?" Booleans are determined.

-

Thank you. One of these solutions should work well for me.

-

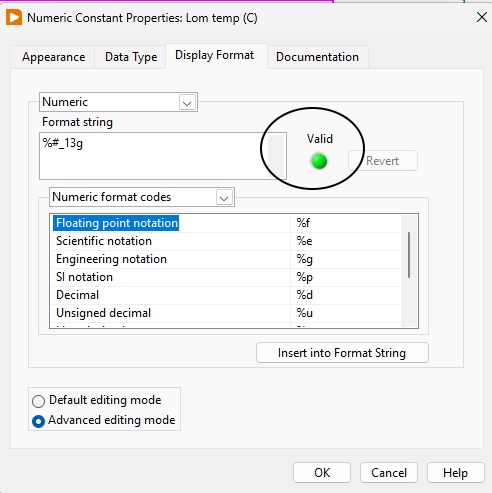

Does anyone know if there's a VI hidden away in the LabVIEW directory somewhere that returns whether a Format String is valid, e.g. the "Valid" Boolean in the screenshot? I'm terrible with regex. I can get an AI code generator to make a regular expression, but I'd rather just use a built-in LabVIEW VI to be "safe". FYI, this is the regex generated by AI, if anyone cares: `%(?:\d+\$)?(?:[-+#^0])?(?:\d+)?(?:[._]\d+)?(?:\{[^}]*\})?(?:<[^>]*>)?[a-zA-Z%]`
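A quick way to see what that regex accepts is to run it outside LabVIEW. The Python sketch below uses the pattern verbatim; the helper names are made up for illustration, and the pattern is only an approximation (it accepts any letter as the conversion code, so it will pass some strings LabVIEW would reject).

```python
import re

# Pattern copied from the post above. It is an AI-generated approximation,
# not LabVIEW's own format-string validation.
FORMAT_SPEC = re.compile(
    r"%(?:\d+\$)?(?:[-+#^0])?(?:\d+)?(?:[._]\d+)?(?:\{[^}]*\})?(?:<[^>]*>)?[a-zA-Z%]"
)


def is_single_format_spec(text):
    """Return True if text is exactly one specifier accepted by FORMAT_SPEC."""
    return FORMAT_SPEC.fullmatch(text) is not None


def find_format_specs(text):
    """Return every substring of text that FORMAT_SPEC matches, in order."""
    return FORMAT_SPEC.findall(text)
```

For example, `is_single_format_spec("%5.2f")` is true, while `is_single_format_spec("%5.2")` is false because the conversion code is missing.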

-

Does anyone know the status of GPM and/or GCentral? I went to gpackag.io and the website isn't working. The last commit here, https://gitlab.com/mgi/gpm/gpm, was a few years ago.

-

You could try this property saver. It's pretty old. See the examples in the palette that gets installed. konstantin_shifershteyn_lib_property_saver-1.5.0.4.vip

-

Hi @drjdpowell, before I reinvent the wheel, do you have any examples of Python modules sending messages to one of your LabVIEW TCP Event Messenger Servers (or a simple UDP Receiver / Sender pair)? I'm interested in extending an existing LabVIEW Messenger Actor to the guys on the Python side as an API. I'm not sure of the best way for them to send messages, but a simple one would be to send a string with the format (Message Label>>Msg Param1, Msg Param2....)
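To make the proposed string format concrete, here is a minimal Python sketch of the UDP variant. It is an assumption-laden illustration: the function names are invented, the "Label>>Param1, Param2" layout is just the one suggested above, and it is not the Messenger Library's actual wire format.

```python
import socket


def encode_message(label, *params):
    """Build 'Label>>Param1, Param2' as UTF-8 bytes (the format proposed above)."""
    text = f"{label}>>{', '.join(str(p) for p in params)}"
    return text.encode("utf-8")


def decode_message(data):
    """Split received bytes back into (label, [param strings])."""
    text = data.decode("utf-8")
    label, _, param_text = text.partition(">>")
    params = [p.strip() for p in param_text.split(",")] if param_text else []
    return label, params


def send_message(host, port, label, *params):
    """Send one message as a single UDP datagram (fire and forget, no reply)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(encode_message(label, *params), (host, port))
```

A Python user would call something like `send_message("127.0.0.1", 5005, "Set Gain", 2.5, "ch1")`, where the host and port are placeholders. Parameters that themselves contain commas or ">>" would break this simple format, so a real API would probably need escaping or something like JSON.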

-

Including solicitation of interest from potential acquirers

bbean replied to gleichman's topic in LAVA Lounge

I didn't see the word "LabVIEW" mentioned in any of those press releases. It just seems like Emerson is on a buying spree. Typically this happens at the end of a business cycle, when companies (CEOs) run out of ideas for how to improve their business from within. It will be interesting to see how Emerson executes and brings all these acquisitions together under one umbrella, and whether LabVIEW has any role.

-

Strange Development Environment Errors with RT Target

bbean replied to DTaylor's topic in LabVIEW General

I want to say I've seen this when one of the modules has gone bad. Have you tried pulling them all out and then installing them one by one to see if one of them causes the problem?

-

You can already do this in a limited fashion in Python. This is a simple example from a single prompt, but you can see where it's going: https://poe.com/s/BEWvBcTIEkrVXzTezpza The manual was probably online when the model was trained, so it already has knowledge of it in this example. But once the size limits on text entry increase, you will be able to just upload a manual. And here's a simple refactoring to make an abstract class: https://poe.com/s/MJp4t75WVrA5MR5SiS8B I believe the way they work is by predicting the text one token at a time, on the fly.

-

Sounds like they were too busy updating the NI logo and colors to implement VISA. Oh well.

-

Returning Python exceptions to LabVIEW

bbean replied to Phillip Brooks's topic in Calling External Code

That seems reasonable. I have a wrapper class and pass back a pass/fail numeric and a status string, but thinking back, it might have been more appropriate to just pass back a standard LabVIEW error cluster. In my case, the Python wrapper can be run either from LabVIEW or directly in Python. The thing I struggle with is passing back the real-time status that is normally provided in the terminal via Python print statements when the scripts run stand-alone. I'm testing an approach that just sends these via a UDP session, but I haven't found a way to override the Python print command so that it prints to both the terminal (Python scripts) and the UDP session (LabVIEW VIs).

-
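One possible way to get print output in both places is to swap `sys.stdout` for a small "tee" object rather than overriding `print` itself. The sketch below assumes a UDP listener on the LabVIEW side; `UdpTee` and `print_to_udp` are hypothetical names, and the host and port are whatever that listener uses.

```python
import socket
import sys
from contextlib import contextmanager, redirect_stdout


class UdpTee:
    """File-like object that writes to a stream and mirrors the text to UDP."""

    def __init__(self, stream, host, port):
        self._stream = stream
        self._addr = (host, port)
        self._sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)

    def write(self, text):
        written = self._stream.write(text)  # normal terminal output
        if text.strip():  # skip the bare newline that print() writes separately
            try:
                self._sock.sendto(text.encode("utf-8"), self._addr)
            except OSError:
                pass  # status mirroring is best effort; never break the script
        return written

    def flush(self):
        self._stream.flush()

    def close(self):
        self._sock.close()


@contextmanager
def print_to_udp(host, port):
    """Inside this block, print() goes to the terminal and to (host, port)."""
    tee = UdpTee(sys.stdout, host, port)
    try:
        with redirect_stdout(tee):
            yield tee
    finally:
        tee.close()
```

The wrapper would enter `with print_to_udp(host, port):` only when it is called from LabVIEW, so stand-alone runs keep printing to the terminal as before. Each `print` call becomes one datagram, and UDP gives no delivery guarantee, so this suits status text rather than results.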

What type of instrumentation and data acquisition systems does your lab work with? Mostly VISA-type serial/GPIB/LXI instruments, or mostly data acquisition cards / PXI chassis type stuff?

-

This isn't enticing you to upgrade from LV 2009?😀

-

I would investigate COSMOS. I haven't migrated a complete project to it yet, but I'm implementing data collection, storage and telemetry GUIs in it for a new project, working with Python and FPGA programmers. A lot of the Python programmers use Python and COSMOS as a replacement for LabVIEW/TestStand. I haven't used their Script-Runner (TestStand-ish stuff), but the current version is highly capable for data collection, saving, presentation and limit checking. They are working on COSMOS 5, which will run in a Docker container and have web-based GUIs.

-

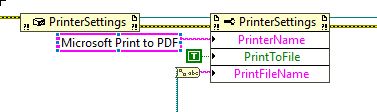

OK, I misunderstood. But maybe there's something in there that can help. I remember having to do a lot of workarounds with callback VIs to get printing a panel to work without a prompt for the filename. Attached is a 2014 version. Win10PrintPDF2014.llb

-

If you are using Win10, you can try the VI(s) in the attached LLB. We typically create a "print" VI that shows all the controls, decorations, logos, etc. we want to print, then run the VI in this LLB. I threw the LLB together real quick... hope everything works. Win10PrintPDF.llb

-

What do you think of the new NI logo and marketing push?

bbean replied to Michael Aivaliotis's topic in LAVA Lounge

💩

-

This is a long shot, but do they all need to run in the UI thread while their preferred execution system is set to "same as caller", so that something gets screwed up when they are called by dynamic dispatch in the runtime? What happens if you set their preferred execution system to "user interface" and retry?

-

Agreed. As part of my trials and tribulations with MAX, I had to repair NI-VISA. With NIPM 18.5(?) there was no option to do that, so they recommend uninstalling and reinstalling... fair enough. Tried that, and NIPM wanted to uninstall LabVIEW... wtf. Upgrading to the latest version of NIPM (19.6) provided a better experience: it now lets you repair installs.

-

Is the latest version 1.10.9.115? Is there a higher version somewhere? That one still seems to have the issue.

-

Has anyone used IPFS as a tool for storing and distributing test data (multiple gigabytes)? My use case would be to run tests that store data on local Windows machines and then distribute that data to other users, who may have Linux, Windows, etc., and also to a centralized archiving location. The users and test machines are in a relatively strict network environment, and most users' machines are locked down. Some of the Linux users may have elevated privileges to install things like IPFS, but I'm worried about a typical Windows user who may want to get the files easily, without having to request elevated privileges and then go through a bunch of command-line steps to install IPFS on their machine.