Posts: 5,008

Days Won: 312


Posts posted by ShaunR

-

1 hour ago, codcoder said:

Doesn't sound too hard?

The ComfyUI nodes are described by JSON in files called "Workflows", so we could import them and use scripting to create nodes. That's if we want parity. But we could also support nesting, which ComfyUI wouldn't understand.

The WebUIs are just interfaces for creating REST requests, which we can easily do already. I'm just trying to find a proper API specification, or something that tells me the JSON format of the various requests. Like most of these things, there are thousands of GitHub "apps", all doing something different because they use different plugins. Modern programmers can do wonderful things, but it's all built on tribal knowledge that you are expected to reverse-engineer. The only proper API documentation I have found so far is for the Web Services, which isn't what I want: I'm running it locally.
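Since the WebUIs are only front ends for REST calls, here is a minimal sketch of queuing a workflow against a locally running ComfyUI server. It assumes the server's default address `http://127.0.0.1:8188` and its `/prompt` endpoint, which takes a workflow exported in ComfyUI's "API format" (a dictionary of node IDs, each with a `class_type` and `inputs`, where a link is `[source_node_id, output_index]`). The checkpoint file name is hypothetical, and the exact request fields may vary between ComfyUI versions and plugins.

```python
import json
import urllib.request
import uuid
from typing import Optional

# Assumed default address of a local ComfyUI server; adjust to your setup.
COMFY_PROMPT_URL = "http://127.0.0.1:8188/prompt"


def build_prompt_request(workflow: dict, client_id: Optional[str] = None,
                         url: str = COMFY_PROMPT_URL) -> urllib.request.Request:
    """Wrap an API-format workflow in a JSON POST request (nothing is sent yet)."""
    payload = {"prompt": workflow, "client_id": client_id or str(uuid.uuid4())}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def queue_prompt(workflow: dict) -> dict:
    """Send the workflow to the server and return its JSON reply."""
    with urllib.request.urlopen(build_prompt_request(workflow)) as response:
        return json.loads(response.read())


# Fragment of an API-format workflow: node "6" takes output 1 (CLIP) of node "4".
example_workflow = {
    "4": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "model.safetensors"}},  # hypothetical file name
    "6": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "a lighthouse at dusk", "clip": ["4", 1]}},
}
```

Calling `queue_prompt()` needs a running server, and the fragment above has no sampler or output nodes, so a real server would likely reject it as it stands; it only shows the shape of the request.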

-

I've been playing around with an A.I. imaging program called Stable Diffusion. It's written in Python, but that's not the part that interests me...

There are a number of web-browser user interfaces for the Stable Diffusion back end: Forge, Automatic1111 and ComfyUI, to name a few. The last one, ComfyUI, is a graphical UI.

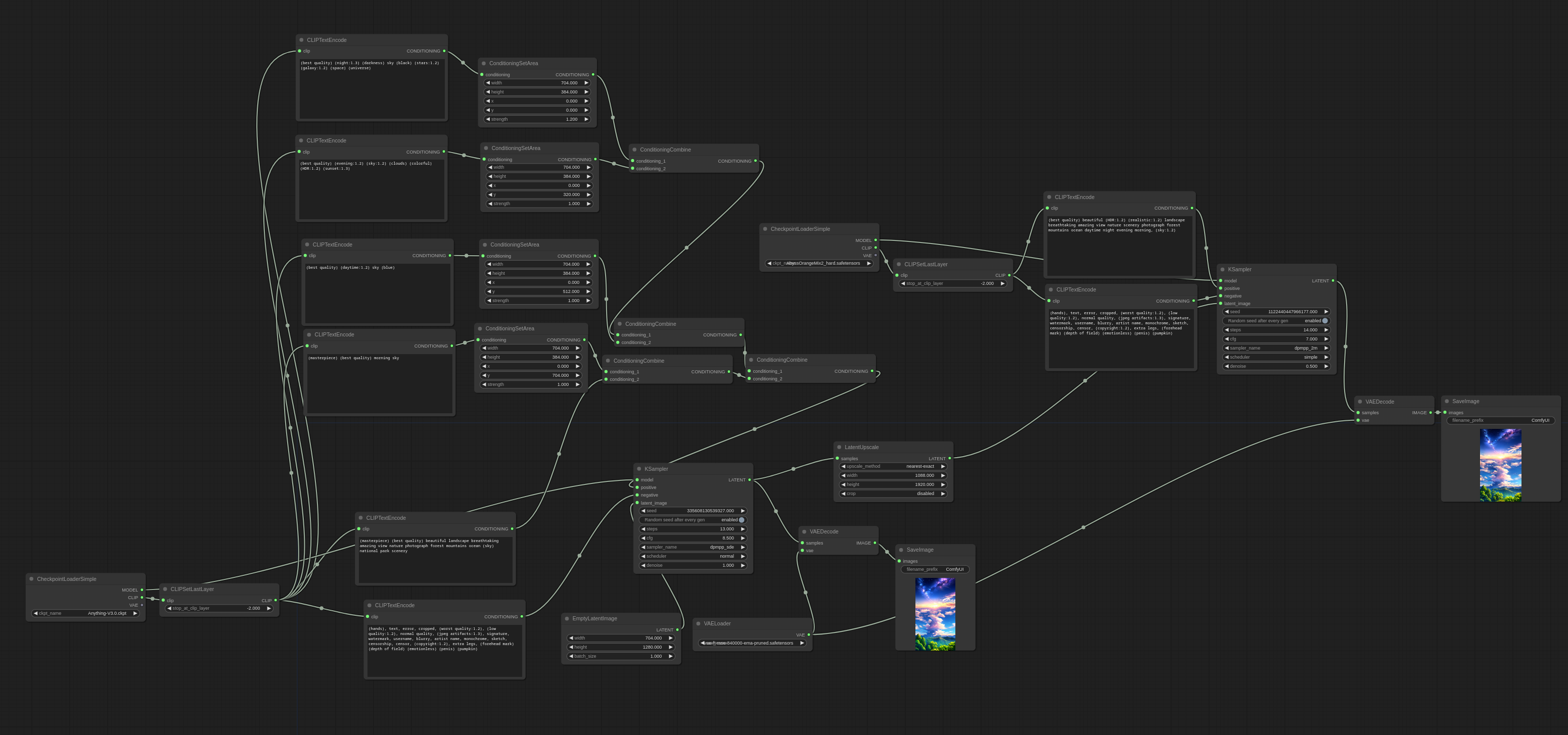

The ComfyUI block diagram can be saved as JSON or within a PNG image. That's great. The problem is you cannot nest block diagrams. Therefore you end up with a complete spaghetti diagram and a level of complexity that is difficult to resolve. You end up with the ComfyUI equivalent of:

In LabVIEW, the way we resolve the spaghetti problem is by encapsulating nodes in subVIs to hide the complexity (composition).

So, I was thinking that LabVIEW would be a better interface, where VIs would be the equivalent of the ComfyUI nodes and the LabVIEW nodes would generate the JSON. Where LabVIEW would be an improvement, however, is that we can create subVIs and nest nodes, whereas ComfyUI cannot! Furthermore, perhaps we may finally have a proper use case for Express VIs, instead of them just being "noob nodes".

This might be an interesting avenue to explore, bringing LabVIEW to a wider audience and to a new technology.
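To make the nesting idea concrete, here is a minimal sketch of how nested groups (the LabVIEW "subVIs") could be flattened into the flat node dictionary that ComfyUI consumes. The nested format is entirely hypothetical: a group node carries its own `group` dictionary, receives its inputs through `["$in", name]` links, and exposes its outputs as a list of inner links. Inner node IDs are prefixed with the group ID (for example `2.a`), which assumes the server accepts arbitrary string IDs; otherwise they would need renumbering.

```python
from typing import Any, Dict, List, Optional, Sequence, Tuple

Flat = Dict[str, dict]


def flatten(graph: Dict[str, dict], prefix: str = "",
            bound: Optional[Dict[str, Any]] = None,
            exports: Sequence[list] = ()) -> Tuple[Flat, List[Any]]:
    """Expand nested groups into one flat, API-format node dictionary.

    Returns the flat nodes plus the resolved links for `exports`
    (the outputs a group exposes to its parent diagram).
    """
    bound = bound or {}
    flat: Flat = {}
    group_outputs: Dict[Tuple[str, int], Any] = {}

    def resolve(value: Any) -> Any:
        if isinstance(value, list) and len(value) == 2 and isinstance(value[0], str):
            src, idx = value
            if src == "$in":                   # wired in from the enclosing diagram
                return bound[idx]
            if src in graph:
                if "group" in graph[src]:      # output terminal of a nested group
                    return group_outputs[(src, idx)]
                return [prefix + src, idx]     # ordinary node in this diagram
        return value                           # literal widget value

    # Groups first, so plain nodes can link to their outputs. A group may only
    # consume outputs of groups listed before it (no topological sort here).
    for node_id, node in sorted(graph.items(), key=lambda kv: "group" not in kv[1]):
        if "group" in node:
            inner_bound = {name: resolve(v) for name, v in node.get("inputs", {}).items()}
            inner, outs = flatten(node["group"], f"{prefix}{node_id}.",
                                  inner_bound, node.get("outputs", []))
            flat.update(inner)
            for k, link in enumerate(outs):
                group_outputs[(node_id, k)] = link
        else:
            flat[prefix + node_id] = {
                "class_type": node["class_type"],
                "inputs": {name: resolve(v) for name, v in node["inputs"].items()},
            }
    return flat, [resolve(link) for link in exports]


nested = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "model.safetensors"}},  # hypothetical file name
    "2": {  # a "subVI" that encodes two prompts from one CLIP input
        "inputs": {"clip": ["1", 1]},
        "group": {
            "a": {"class_type": "CLIPTextEncode",
                  "inputs": {"text": "a lighthouse", "clip": ["$in", "clip"]}},
            "b": {"class_type": "CLIPTextEncode",
                  "inputs": {"text": "at dusk", "clip": ["$in", "clip"]}},
        },
        "outputs": [["a", 0], ["b", 0]],
    },
    "3": {"class_type": "ConditioningCombine",
          "inputs": {"conditioning_1": ["2", 0], "conditioning_2": ["2", 1]}},
}
flat, _ = flatten(nested)
```

The nesting only exists on the authoring side; what gets sent to the server is the same flat structure ComfyUI already understands, which is why this could stay compatible with existing workflows.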

-

On 11/19/2024 at 11:48 AM, Rolf Kalbermatter said:

It just occurred to me that there is a potential problem. If your DLLs are always containing 32 in their name, independent of the actual bitness, as for instance many Windows DLLs do, this will corrupt the name for 64-bit LabVIEW installations.

I haven't checked if paths to DLL names in the System Directory are added to the Linker Info. If they are, and I would think they are, one would have to skip file paths that are only a library name (indicating to LabVIEW to let the OS try to find them through the standard search mechanism).

This of course still isn't failproof:

DLLs installed in the System directory (not from Microsoft, though) could still use the 32-bit/64-bit naming scheme, and DLLs not from there could use the fixed 32 naming scheme (or a fixed 64 name when VIPM itself is built as a 64-bit executable; I'm not sure whether the latest version is still built as 32-bit).

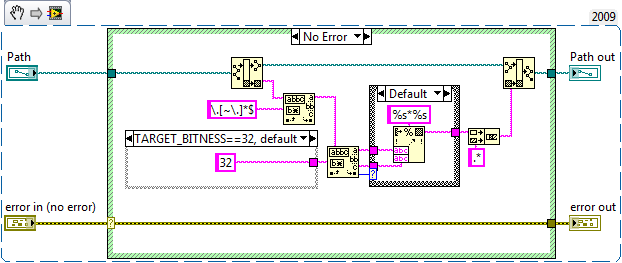

I've modified your subVI to check the actual file bitness (I think). If you target user32.dll, for example, the output filename is user32.*, which is what's expected. I need to think a bit more about what I want from the function (I may not want the full path), but it should fix the problem you highlighted (only on Windows).
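The idea of checking the actual file bitness can be sketched outside LabVIEW. This is not the modified subVI, only an illustration under stated assumptions: it reads the `Machine` field of the PE/COFF header (located through the pointer at offset `0x3C` of the `MZ` header) and treats a trailing `32`/`64` in the name as the bitness marker only when it matches the file's real bitness. Bare names without a directory (left to the OS search path, as Rolf suggested) only get the `.*` extension wildcard. The function names are hypothetical.

```python
import ntpath
import struct
from typing import Optional

# COFF machine types: i386, AMD64 and ARM64.
MACHINE_BITS = {0x014C: 32, 0x8664: 64, 0xAA64: 64}


def pe_bitness(image: bytes) -> int:
    """Return 32 or 64 from the COFF header of a Windows PE image (DLL/EXE)."""
    if image[:2] != b"MZ":
        raise ValueError("missing MZ header")
    (pe_offset,) = struct.unpack_from("<I", image, 0x3C)  # e_lfanew
    if image[pe_offset:pe_offset + 4] != b"PE\x00\x00":
        raise ValueError("missing PE signature")
    (machine,) = struct.unpack_from("<H", image, pe_offset + 4)
    if machine not in MACHINE_BITS:
        raise ValueError(f"unknown machine type 0x{machine:04X}")
    return MACHINE_BITS[machine]


def linker_name(path: str, file_bits: Optional[int]) -> str:
    r"""Turn a resolved DLL path into LabVIEW's wildcard form ('*' = 32/64, '.*' = extension).

    The numeric suffix is only replaced when it matches the file's real
    bitness, so C:\Windows\System32\user32.dll (a 64-bit file on 64-bit
    Windows) keeps its '32'. Pass file_bits=None for bare names that the OS
    resolves through its search path.
    """
    directory, filename = ntpath.split(path)
    stem = ntpath.splitext(filename)[0]
    if directory and file_bits is not None and stem.endswith(str(file_bits)):
        stem = stem[:-2] + "*"
    wildcard = stem + ".*"
    return ntpath.join(directory, wildcard) if directory else wildcard
```

One caveat: a 32-bit process reading System32 on 64-bit Windows is redirected to SysWOW64 by WOW64, so which copy of a system DLL gets inspected depends on the bitness of the process doing the check.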

-

11 minutes ago, Rolf Kalbermatter said:

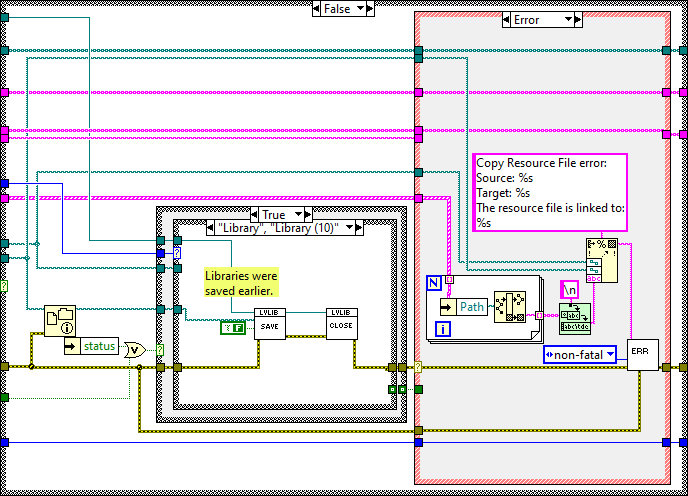

I checked on a system where I had VIPM 2013 installed and looked in support/ogb_2009.llb. Maybe your newer VIPM has an improved ogb_2009.llb. Also check out the actual post; I updated the image.

Yes. The path isn't passed through, but I figured out what it was supposed to be.

This is the one in my installation:

It's a trivial change, though. The important part is adding the extra case and your new VI. I was about to go all Neanderthal on "Write Linker Info" before you posted the proper solution.

-

25 minutes ago, Rolf Kalbermatter said:

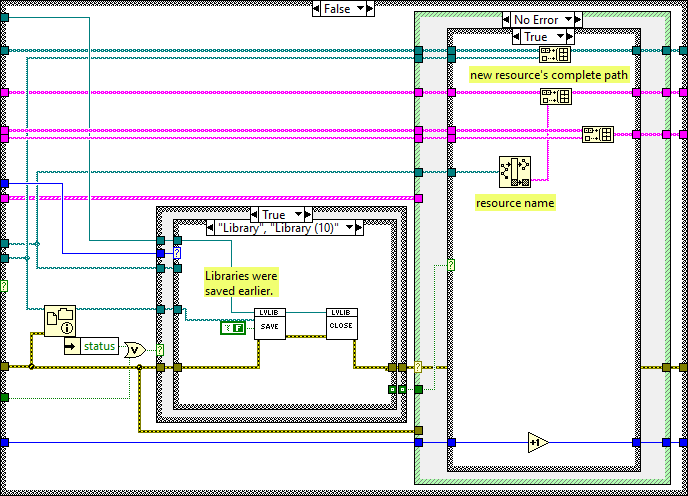

Not quite! It's better to actually modify the Copy Resource Files and Relink.vi. Just add an additional case structure to handle shared libraries.

The VI in question is this one:

This will unconditionally change the linking name of all shared libraries in your build. There is a possibility that that is not desired although I can't think of a reason why that could be a problem right now.

Sweet. It's not quite the same, but I'll figure the rest out. You've done the hard part.

Thanks.

-

3 hours ago, Rolf Kalbermatter said:

Deep in the belly of the OpenG Builder, in OpenG\build\ogb.llb\Copy Resource Files and Relink VIs__ogb.vi, I changed it so that for shared library names the file name is changed back to the previous <file name>*.*, with some magic to detect a 32 or 64 in the file name if present. It's not fail-safe, and for that reason not a fix I would propose for inclusion in a public tool, but it does the job for me. What basically goes wrong is this: when LabVIEW loads the VIs, it replaces the magic placeholders in the paths with the real values in memory. When you then Read the Linker Info to massage it for renaming VIs, you receive these fully resolved paths, and when you write back the modified linker info, you cement the non-platform-neutral naming into the VIs and save it to disk.

The OpenG Package Builder modifications mainly have to do with a more detailed selection of package content and special settings that make multi-platform support for shared libraries and other compiled binary content easier. In terms of user experience it is the total opposite of VIPM. It would overwhelm the typical user with way too many options and details for it to be useful for most. I had hoped to integrate the hierarchy renaming into the Package Builder too, since the information in the Package Builder would basically be enough to do that, but one look at the core of the OpenG Builder in Build Applciation__ogb.vi will give you the shivers at the thought of reimplementing it in any useful way. 😁

There is a "Copy Resource Files and Relink" in "<program files>\JKI\VI Package Manager\support\ogb_2009.llb" and "<program files>\JKI\VI Package Manager\support\ogb_2017.llb".

Is it "Write Linker Info from File__ogb.vi" that you have modified?

I'll have to have a closer look.
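The round-trip problem Rolf describes can be illustrated in a few lines. This is a sketch of the behaviour as described in the thread, not of the OpenG Builder code: `expand` mimics what LabVIEW does to the loaded VIs in memory (`*` becomes 32 or 64, `.*` becomes the platform extension), and `collapse` is the kind of heuristic "magic" needed to turn a resolved name back into `<file name>*.*` before the linker info is written back. The extension table and the function names are assumptions.

```python
import re

# Assumed platform extensions substituted for '.*'.
EXTENSIONS = {"windows": ".dll", "linux": ".so", "macos": ".framework"}

_RESOLVED = re.compile(r"^(?P<stem>.*?)(?P<bits>32|64)?(?P<ext>\.dll|\.so|\.framework)$")


def expand(placeholder: str, bits: int, platform: str) -> str:
    """Resolve 'name*.*' the way the loaded VIs end up seeing it."""
    if placeholder.endswith(".*"):
        placeholder = placeholder[:-2] + EXTENSIONS[platform]
    return placeholder.replace("*", str(bits))


def collapse(resolved: str, bits: int) -> str:
    """Heuristically restore the platform-neutral name before writing linker info.

    A trailing 32/64 is treated as the bitness marker only if it equals the
    bitness of the running LabVIEW; otherwise the digits are kept.
    """
    m = _RESOLVED.match(resolved)
    if m is None:
        return resolved  # not a shared library name: leave untouched
    if m["bits"] == str(bits):
        return m["stem"] + "*.*"
    return m["stem"] + (m["bits"] or "") + ".*"
```

Writing back `expand("mylib*.*", 64, "windows")`, i.e. `mylib64.dll`, is exactly what cements the 64-bit name; `collapse` undoes it. It is not fail-safe either: `collapse("user32.dll", 32)` yields `user*.*`, which would resolve to a nonexistent `user64.dll` in 64-bit LabVIEW.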

-

On 11/11/2024 at 10:06 AM, Rolf Kalbermatter said:

Also needed to fix up the linker info in the VIs after creating the renamed (with oglib postfix) VI hierarchy through the OpenG Builder functions.

Can the JKI builder be modified to do this? I've already hacked some of their VIs in ogb_2009.llb so that it doesn't take 6 hours to build. It's a huge problem for me when building. I have a solution that sort of works, sometimes, but not a full, proper solution.

Can you detail your process?

-

14 hours ago, Cat said:

Hellooo? Anybody home?

For those of you who don't remember (or weren't even born yet when I started posting here 😄), I work for the US Navy and use a whole bunch of LabVIEW code. We're being forced to "upgrade" to Windows 11, so figured we might as well bite the bullet and upgrade from LV2019 to LV2024 at the same time. And then the licensing debacle began...

Due to our operating paradigm, we currently use a LV2019 permanent disconnected license for our software development. This was very straightforward back then. But not so much with LV2024 and the SaaS situation. Add to this the fact that I can't talk to NI directly and have to go thru our govt rep for any answers. And he and I are not communicating very well.

I'm hoping someone here has an answer to what I think should be a really simple question: If I have a "perpetual" license with 1 year service duration for LabVIEW, at the end of that year, if I don't renew the service, can I still use LabVIEW like always, as if I still had my old permanent license? I realize I would not have any more support or upgrades, but that's fine.

I've read thru the threads here and in the NI forum about this, but they mostly ended back when no one really knew how it was all going to shake out. So are we locked into either our ancient LV versions forever, or are we going to be paying Emerson/NI every year for something we don't really need?

Cat

Welcome back. Retirement not all it was cracked up to be?

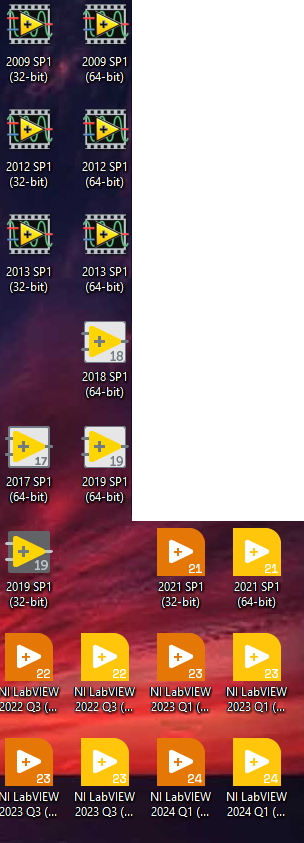

My only comment about this (because I still use LV 2009, the best version ever) is that, generally:

- Never do it in the middle of a project. Upgrading LabVIEW is a huge project risk.

- Don't upgrade if the software already works and you are adding to it (only use it on new projects).

- Only upgrade if everyone else in your team upgrades at the same time.

- Upgrade if there are specific features you cannot do without.

- Upgrade if it will greatly reduce the time to delivery (unlikely but it has been known).

- Upgrade if there is a project stopping bug that is addressed in the upgrade you are considering.

- Remember that you can have multiple versions on the same machine. You don't need to (and never should) go and recompile all your old projects.

-

20 hours ago, crossrulz said:

Is ShaunR actually a robot?

Nobody has met me, right? I might be A.I. without the I

-

22 hours ago, Bruniii said:

Now I'm looking for a way to read it using the public key in LabVIEW.

You want OpenSSL's EVP_PKEY_verify.

-

4 hours ago, Rolf Kalbermatter said:

It's doable with a Post Install VI in the package that renames the shared libraries after installation, except with VIPM 2017 and maybe others, which needs to run with root privileges to do anything useful.

I keep the binaries as byte arrays in the Post Install VI. I convince VIPM not to include the binary dependency and then write out the binary from the Post Install. In the Post Install you can check the bitness of the LabVIEW that invoked it and write out the binary with the matching bitness. Not sure if that would work on Linux, though.
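A rough equivalent of that Post Install logic, sketched in Python purely for illustration (the real thing is a LabVIEW VI): the binaries travel as embedded byte constants, the installer checks the bitness of the process running it (here the pointer size stands in for the bitness of the invoking LabVIEW), and it writes the matching image under the single shared file name. The payload contents and all names are placeholders.

```python
import struct
from pathlib import Path
from typing import Dict, Optional

# Placeholder payloads: in the package these would be the full DLL images
# stored as byte-array constants, one per bitness, under one file name.
PAYLOADS: Dict[int, bytes] = {32: b"<32-bit image>", 64: b"<64-bit image>"}


def host_bitness() -> int:
    """Bitness of the running process, derived from its pointer size."""
    return struct.calcsize("P") * 8


def select_payload(payloads: Dict[int, bytes], bits: Optional[int] = None) -> bytes:
    """Pick the binary that matches the caller's bitness."""
    bits = bits or host_bitness()
    if bits not in payloads:
        raise ValueError(f"no {bits}-bit binary in this package")
    return payloads[bits]


def install_binary(target: Path, payloads: Dict[int, bytes] = PAYLOADS,
                   bits: Optional[int] = None) -> None:
    """Write the matching binary to its (bitness-neutral) install location."""
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_bytes(select_payload(payloads, bits))
```

Because the package manager never sees the DLL as a dependency, it cannot install the wrong one; the trade-off is that uninstalling and updating the binary become the Post Install's (and a matching Pre Uninstall's) responsibility.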

-

39 minutes ago, Rolf Kalbermatter said:

I can just install the shared library with the 32- and 64-bit postfixes side by side and be done, without having to abandon support for 8.6!!

Installing both binaries, huh? Don't blame you. It takes me forever to get VIPM not to include a binary dependency so that I can place the binary with the correct bitness at install time with the Post Install (the 64-bit and 32-bit binaries have the same name, lol).

-

2 hours ago, hooovahh said:

I do occasionally have correspondence with him. He is aware of the spam issue and we've talked about turning on content approval. He seems to want to update the forums and just has limited bandwidth to do so. Until that happens I'll just keep cleaning up the spam that gets through.

The longer it is unresolved, the less likely users will bother to return. I used to check the forums every day. Now it is every couple of weeks. Soon it will be never.

Some might see that as a bonus

-

18 hours ago, Rolf Kalbermatter said:

It's a valid objection. But in this case with the full consent of the website operator. Even more than that, NI pays them for doing that.

The objection is that I (as a user) do not have end-to-end encryption (as advertised by the "https" prefix), and there is no guarantee that the traffic is not decrypted, logged and analysed before going on to the final server. And that's not just a single server; it's all the servers behind Cloudflare, which makes data-mining correlation particularly useful to adversaries.

Therefore I refuse to use any site that sits behind Cloudflare, and my browsers are configured in such a way that makes it very hard to access them, so that I know when a site uses it. If I need the NI site (to download the latest LabVIEW version, for example), then I have to boot up a VM configured with a proxy to do so. I refuse to use the NI site, and the sole reason is Cloudflare.

So now you know how you can get rid of me from Lavag.org: put it behind Cloudflare.

-

On 8/14/2024 at 10:37 AM, Rolf Kalbermatter said:

Now, spending substantial time on their forum is another topic that could spark a lot of discussion

Any site that uses Cloudflare is completely safe from me using it. As far as I'm concerned it is a MitM attack.

-

1 hour ago, Rolf Kalbermatter said:

Maybe there is an option in the forum software to add some extra users with limited moderation capabilities. Since I was promoted on the NI forums to be a shiny knight, I have one extra super power in that forum and that is to not just report messages to a moderator but to actually simply mark them as spam. As I understand it, it hides the message from the forum and reports it to the moderators who then have the final say if the message is really bad. Something like this could help to at least make the forum usable for the rest of the honorable forum users, while moderation can take a well deserved night of sleep and start in the morning with fresh energy. 🤗 It only would take a few trusted users around the globe to actually keep the forum fairly neat (unless of course a bot starts to massively target the forum. Then having to mark one message at a time is a pretty badly scaling solution).

That's not exactly a software solution, though. I wrote a plugin for my CMS that uses Project Honey Pot, so it's not that difficult, and this is supposed to be a software forum, right?

The problem in this case, however, seems to be that it's an exploit; it needs a patch. Demoting highly qualified (and expensive) software engineers to data-entry clerks sounds to me like an accountant's argument (leverage a free resource). I'd rather the free resource was leveraged to fix the software, or that we (the forum users) paid for the fix.

The sheer chutzpah of NI to make you a no-cost employee to clean up their spam is, to me, astounding. What's even more incomprehensible is that they have also convinced you it's a privilege.
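For reference, a Project Honey Pot check is mostly a DNS lookup. This sketch follows my understanding of the http:BL scheme: query `<access key>.<reversed IPv4 octets>.dnsbl.httpbl.org`; a listed address answers `127.<days since last seen>.<threat score>.<visitor type bitmask>`, and an unlisted one gives NXDOMAIN. It is not the CMS plugin mentioned above; the access key in the test and the rejection thresholds are hypothetical.

```python
import ipaddress
import socket
from typing import Optional

HTTPBL_ZONE = "dnsbl.httpbl.org"
# Visitor-type bits in the last octet of the answer (0 means search engine).
VISITOR_TYPES = {1: "suspicious", 2: "harvester", 4: "comment spammer"}


def httpbl_query_name(access_key: str, ip: str) -> str:
    """Build the DNS name to resolve for one IPv4 address."""
    octets = str(ipaddress.IPv4Address(ip)).split(".")
    return ".".join([access_key, *reversed(octets), HTTPBL_ZONE])


def parse_httpbl_answer(answer: str) -> dict:
    """Decode a 127.days.threat.type answer into its fields."""
    first, days, threat, vtype = (int(o) for o in answer.split("."))
    if first != 127:
        raise ValueError(f"unexpected http:BL answer {answer}")
    types = [name for bit, name in VISITOR_TYPES.items() if vtype & bit]
    return {"days": days, "threat": threat, "types": types or ["search engine"]}


def lookup(access_key: str, ip: str) -> Optional[dict]:
    """Return the decoded listing, or None if the lookup fails (normally: not listed)."""
    try:
        answer = socket.gethostbyname(httpbl_query_name(access_key, ip))
    except socket.gaierror:  # typically NXDOMAIN, i.e. the address is not listed
        return None
    return parse_httpbl_answer(answer)


def should_reject_signup(info: Optional[dict], min_threat: int = 25,
                         max_age_days: int = 30) -> bool:
    """Hypothetical policy: reject recent, non-search-engine listings above a threat score."""
    if info is None or info["types"] == ["search engine"]:
        return False
    return info["threat"] >= min_threat and info["days"] <= max_age_days
```

Running `should_reject_signup(lookup(key, ip))` at registration time is cheap (one DNS query) and needs no human in the loop, which is the point being made above.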

-

Port 139 is used by NetBIOS. The person in the video is using port 8006 which is not used by any other programs.
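Whether a port such as 8006 is actually free on a given machine is easy to check before settling on it. A minimal sketch, assuming TCP and a local check only: try to bind the port and treat a bind failure as "already in use". It says nothing about firewalls or other machines, results can differ by OS and by which interface the other program bound, and on Linux binding a port below 1024 (such as 139) usually needs elevated rights, which this sketch would also report as "in use".

```python
import socket


def port_in_use(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if the TCP port cannot be bound on this host, i.e. it is already taken."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        try:
            probe.bind((host, port))
        except OSError:
            return True
    return False
```

For example, `port_in_use(8006)` before starting a server on that port; a port can of course be taken the moment after the check, so the server should still handle a failed bind.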

-

12 hours ago, hooovahh said:

Also just so others know, you don't have to report every post and message by a user. When I ban an account it deletes all of their content so just bringing attention to one of the spam posts is good enough to trigger the manual intervention.

By now, you really shouldn't have to be deleting them manually.

If it's an exploit then it should have been patched already (within 24hrs is usual). If it's just spam bots beating CAPTCHA then maybe we can help with a proper spam plugin (coding challenge?). This is a software engineering forum and if we can't stop bots posting after a week then what kind of software engineers are we?

It's also quite clear to me that this is no more than a script kiddie. You can watch the evolution of the posts where originally they had unfilled template fields that, as time went on, became filled.

-

1 hour ago, LogMAN said:

They probably went to bed for a few hours, now they are back. Is CAPTCHA an option for posting new messages?

They are signing up when new registrations are disabled. It looks like an exploit.

-

3 hours ago, ensegre said:

Well, NSVs are out of the question here, first because it's a Linux distributed system, and second because of their own proven merits 😆...

The background is this, BTW. We have 17 PCs up and running as of now, expected to grow to 40ish. The main business logic, involving the production of control process variables, is done by tens of Matlab processes, for a variety of reasons. The whole system is a huge data producer (we're talking TBs per night), but that data is well handled by other pipelines. What I'm concerned with here is monitoring/supervision/alerting/remediation. Real-time requirements are not strict; latencies of the order of seconds could even be tolerated. Logging is a feature of any SCADA, but it's not the main or only goal here; this is why I'd be happy with a side Tango (or whatever) module dumping to a historical database, but I would not primarily look into a model of "first dump everything to local SQL databases, then reread them, merge them and ponder the data". I think local, in-memory PV stores, local first-level remediation clients and centralized system-health monitoring is the way to go.

As for the Jenga tower, the mix of data producers is a fact of life, but it's not as if EPICS or Tango come without a proven reliability pedigree! And of course I'd choose only one ecosystem; I'm at the stage of choosing which.

ETA: as for redis I ran into this. Any experience?

I've no experience with Redis.

For monitoring/supervision/alerting, you don't need local storage at all. MQTT might be worth looking at for that.

Sorry I couldn't be more helpful but looks like a cool project.
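With MQTT, each PC would publish its values to topics and the supervisory clients subscribe with wildcard filters, so the broker does the routing and nothing needs to be stored locally. The topic layout `plant/<host>/...` below is hypothetical; the sketch only illustrates the standard filter rules (`+` matches exactly one level, `#` matches the remaining levels including the parent level, and first-level wildcards do not match `$`-prefixed system topics). In practice the broker applies these rules and a client library such as Eclipse Paho handles the connection.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic matches a subscription filter."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    # Wildcards in the first level must not match '$' topics such as $SYS/...
    if topic.startswith("$") and filter_levels[0] in ("+", "#"):
        return False
    for i, level in enumerate(filter_levels):
        if level == "#":            # multi-level wildcard: this level and everything below
            return True
        if i >= len(topic_levels):  # filter is longer than the topic
            return False
        if level != "+" and level != topic_levels[i]:
            return False            # literal level mismatch ('+' accepts any single level)
    return len(filter_levels) == len(topic_levels)


# Hypothetical subscriptions of a central health-monitoring client.
SUBSCRIPTIONS = ["plant/+/alarms/#", "plant/+/heartbeat"]


def interested(topic: str) -> bool:
    """Would the monitoring client receive a message published on this topic?"""
    return any(topic_matches(f, topic) for f in SUBSCRIPTIONS)
```

A heartbeat topic per PC also gives dead-host detection almost for free, since MQTT brokers support a "last will" message published on the client's behalf when it disconnects unexpectedly.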

-

13 hours ago, ensegre said:

Reviving this thread. I'm looking for a distributed PV solution for a setup of some tens of Linux PCs, each one writing some tens of tags at a rate of a few per second, where the writing will mostly be done by Matlab bindings, and the supervisory/logging/alerting clients will be written in a variety of languages, not excluding LV. OSS is not strictly mandatory but essentially part of the culture.

I would be looking at Redis, EPICS and Tango-controls (with its annexes Sardana and Taurus) in the first place, but I haven't yet delved into them in order to compare their merits. In fact, I had a project where I interfaced with Tango some years ago, and I contributed to cleaning up the official set of LV bindings then. As for EPICS, Linux excludes the usual Network Shared Variables stuff (or the EPICS I/O module), but I found, for example, CALab, which looks spot on. Matlab bindings seem to be available for all three. The ability to handle structured data vs. just double or logical PVs may be a discriminant, if one solution is particularly limited in that respect.

Does anyone have recommendations? Is anyone aware of toolkits I could leverage?

My tuppence is that anything is better than Network Shared Variables. My first piece of advice is to choose one or two, not a Jenga tower of many.

What's the use case here? Is the data real-time (you know what I mean) over the network? Are the devices dependent on data from other devices, or is the data accumulated for exploitation later? If it's the latter, I would go with SQLite locally (for integrity and reliability) and periodic merges to MySQL [MariaDB] remotely (for exploitation). Both of those technologies have well-established APIs in almost all languages.
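A minimal sketch of the "SQLite locally, merge periodically" pattern using Python's built-in `sqlite3` module. To keep it self-contained, a second SQLite file stands in for the central MySQL/MariaDB server; the schema, table and function names are assumptions. The composite primary key plus `INSERT OR IGNORE` makes the merge idempotent, so it can simply be re-run after a network outage (MySQL's counterpart is `INSERT IGNORE`).

```python
import sqlite3
import time
from typing import Optional

SCHEMA = """CREATE TABLE IF NOT EXISTS samples (
    host  TEXT NOT NULL,
    tag   TEXT NOT NULL,
    ts    REAL NOT NULL,
    value REAL,
    PRIMARY KEY (host, tag, ts))"""


def open_db(path: str) -> sqlite3.Connection:
    db = sqlite3.connect(path)
    db.execute(SCHEMA)
    return db


def log_sample(db: sqlite3.Connection, host: str, tag: str, value: float,
               ts: Optional[float] = None) -> None:
    """Record one sample locally; each call is committed as its own transaction."""
    with db:
        db.execute("INSERT OR IGNORE INTO samples VALUES (?, ?, ?, ?)",
                   (host, tag, time.time() if ts is None else ts, value))


def merge_into_central(local_path: str, central_path: str) -> int:
    """Copy rows the central store does not have yet; returns the number added."""
    central = open_db(central_path)
    try:
        central.execute("ATTACH DATABASE ? AS src", (local_path,))
        with central:  # the whole copy commits (or rolls back) as one transaction
            cur = central.execute(
                "INSERT OR IGNORE INTO samples SELECT * FROM src.samples")
        return cur.rowcount
    finally:
        central.close()
```

Each device keeps logging even when the network is down, and the merge job only ever adds rows the central store is missing, so a failed or repeated merge cannot corrupt or duplicate data.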

-

4 minutes ago, Mads said:

Oh, I knew. I just like to poke dormant threads (keeps the context instead of duplicating it into a new one) until their issue is resolved. Only then can they die, and live on forever in the cloud😉

I can't even find threads I posted in last week. It's a skill, to be sure.

-

46 minutes ago, Mads said:

The "LVShellOpen"-helper executable seems to be the de-facto solution for this, but it seems everyone builds their own. Or has someone made a template for this and published it?

Seems like a good candidate for a VIPM package.

(The downside might be that NI then never bothers making a more integrated solution, but then again such basic features have not been on the roadmap for a very long time 🤦‍♂️...)

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

in OpenG General Discussions

Feel free to add them in.