Everything posted by Neil Pate

-

The forced font size change for clusters (and other things) meant it was an immediate uninstall for me.

-

Making a cluster a class is not going to help at all unless you also take some time to restructure your data.

-

Weird, sorry about that. Does this work? https://discord.gg/KaXKg5Jw

-

For now I would prefer to keep the link expiring. Here is the new one https://discord.gg/ZghDZsxZ

-

Request for LabVIEW Block Diagrams for Dataset Collection

Neil Pate replied to Elbek Keskinoglu's topic in LabVIEW General

I think you need to share some code of what you are trying to do, as this still does not really make sense to me. When you say "experimental setup" I think you mean some kind of configuration controls on the FP of a VI, but I do not see how that relates to some graphs and charts unless you look at the code also.

-

Request for LabVIEW Block Diagrams for Dataset Collection

Neil Pate replied to Elbek Keskinoglu's topic in LabVIEW General

Sorry, I do not follow you. The block diagram is the code; it is not represented by a cluster that you can wire in to your JSON writing code.

-

Request for LabVIEW Block Diagrams for Dataset Collection

Neil Pate replied to Elbek Keskinoglu's topic in LabVIEW General

@Elbek Keskinoglu are you trying to get a textual representation of a data structure or the block diagram itself? LabVIEW does actually have a way to save a VI as some kind of XML file or something, but it's a bit hidden away and I don't actually know how to make this work. Others in the forum probably will though. See here if you are curious.

-

Thanks, this is gold! I did actually browse to that (via ftp.ni.com which now redirects) but I did not find the `support` directory.

-

Thank you anyway for your attempt @dadreamer, I do appreciate the effort.

-

OK, got it. I got in touch with NI support and they helped me very quickly!

-

Hi, I am trying to help a colleague restore an old ATE and we are looking for the identical DAQmx driver that was present originally. This is DAQmx 8.0.1. Does anyone know where I can download this? Unfortunately the DAQmx drivers on ni.com only go back to version 9.0. Thanks!

-

Maybe you can make a channel on the Discord? https://discord.gg/fP3mmBty

-

I seem to recall you do need to start with one of the controls that has 6 pictures. I played around with this years ago but cannot remember how I started. Will try and remember! Did you try the System style controls? They have 6 pictures and are editable (I think). Edit 2: Sorry I don't think I read your original post properly...

-

Sure here you go https://discord.gg/cW4hEddg

-

As your application grows I would expect the need to pass additional state information between the frames of your VI. You could make a "Core Data" type cluster and put whatever you want inside that. Then you just have one wire that gets passed on the shift register.
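For anyone who thinks better in text, the same idea can be sketched in Python. This is only an analogy, not LabVIEW code: `CoreData`, its field names and the state names are all made up for illustration. The `while` loop stands in for the VI's loop, and the `data` variable plays the part of the single wire on the shift register.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class CoreData:
    """Hypothetical 'Core Data' cluster: everything the states share."""
    sample_count: int = 0
    last_error: str = ""
    log: tuple = ()


def init_state(data):
    # Each state takes the cluster in and hands an updated copy back out.
    return replace(data, log=data.log + ("init",)), "acquire"


def acquire_state(data):
    data = replace(
        data,
        sample_count=data.sample_count + 1,
        log=data.log + ("acquire",),
    )
    # Stop after three acquisitions (arbitrary number for the example).
    next_state = "stop" if data.sample_count >= 3 else "acquire"
    return data, next_state


HANDLERS = {"init": init_state, "acquire": acquire_state}


def run():
    data = CoreData()  # initial value wired into the shift register
    state = "init"
    while state != "stop":
        data, state = HANDLERS[state](data)
    return data


result = run()
```

Adding a new piece of shared state then means adding one field to `CoreData`, rather than adding another wire and another shift register.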

-

That means you are not reading from the DAQ quick enough. You can set the DAQ to continuous acquisition, and then read a constant number of samples from the buffer (say 1/10th of your sampling rate) every iteration of your loop and this will give you a nice stable loop period. Of course this assumes your loop is not doing other stuff that will take up 100 ms worth of time.
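The arithmetic behind that is simple enough to sketch in Python. This is just the sizing calculation, not DAQmx code; the function names and the 1 kHz rate are assumptions picked for the example.

```python
def samples_per_read(sample_rate_hz, reads_per_second=10):
    """Samples to request on each loop iteration (1/10th of the rate by default)."""
    return max(1, round(sample_rate_hz / reads_per_second))


def loop_period_s(sample_rate_hz, n_samples):
    """Time the hardware needs to produce n_samples, which paces the loop."""
    return n_samples / sample_rate_hz


rate_hz = 1000.0                           # assumed sampling rate
n = samples_per_read(rate_hz)              # 100 samples per read
period_s = loop_period_s(rate_hz, n)       # 0.1 s per iteration
```

Because the read blocks until the requested number of samples is available, the loop settles at roughly `period_s` per iteration, provided the rest of the loop body finishes inside that window.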

-

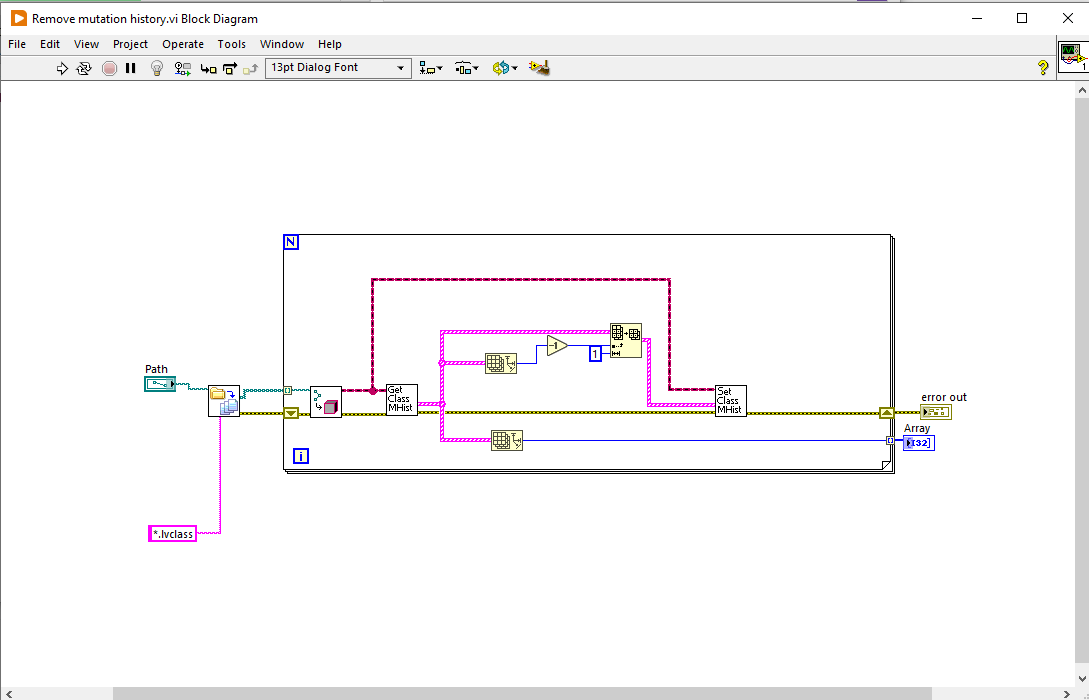

LabVIEW constant values change

Neil Pate replied to Tomi Maila's topic in Object-Oriented Programming

My understanding is that the class mutation history is present in case you try and deserialise from string. It is supposed to "auto-magically" work even if a previous version of the data is present. This is a terrible feature that I wish I could permanently turn off. I have had lots of weird bugs/crashes especially when I rename class private data to the same name as some other field that was previously in it. This feature also considerably bloats the .lvclass file size on disk. For those not aware, you can remove the mutation history like this

-

Set Menu Item Info - How to make the Shortcut menu input a no-op?

Neil Pate replied to bjustice's topic in LabVIEW General

The point is more about the difference in behaviour with connecting the cluster and not having it connected (even though the data in would appear to be the same). I would expect most LabVIEW developers to assume the code would function identically in both circumstances, as detailed in the OP. I cannot think of any other nodes that work like that. That is confusing and inconsistent behaviour and should not be present.

-

Totally tangentially... I had an interesting bug once, and I eventually tracked it down to stacked property nodes. Something which is probably expected when you think about it, but not obvious at first is that if one of the actions of a property node does give an error then the following property nodes do not execute. I guess this is related to the comment posted by @sam above regarding ignoring errors. I never expected a property node could fail if the reference was valid, but there are certain conditions where it can happen. I forget what caused it though.

-

Set Menu Item Info - How to make the Shortcut menu input a no-op?

Neil Pate replied to bjustice's topic in LabVIEW General

Rolf, what I meant was that the behaviour as manifested is strange and unexpected, and not even something we could recreate in our own code.

-

Set Menu Item Info - How to make the Shortcut menu input a no-op?

Neil Pate replied to bjustice's topic in LabVIEW General

I dunno, something about this feels fishy to me. I agree with @bjustice this feels like a bug (or perhaps just a badly designed API). This is not the same as polymorphism as the connector pane is not changing. We could not write any normal LabVIEW code that had this same behaviour.

-

What determines which monitor a dialog shows on?

Neil Pate replied to drjdpowell's topic in User Interface

That DMC deck is quite nicely done, thank you for sharing it.

-

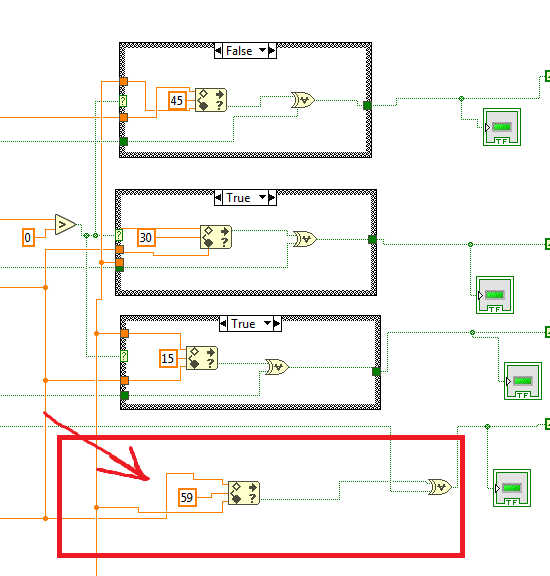

Well, given that this is some kind of (bad) timing logic, and 59 is suspiciously like the number of seconds in a minute, I would suspect this is some kind of check to see if the elapsed time is > 1 minute. I strongly suspect this code is overcomplicated and there are significantly simpler ways of doing things. These four sections of parallel code below look like they constantly evaluate if the elapsed time is greater than 45, 30, 15 and 59 seconds. The shift register on the boolean essentially latches (i.e. remembers) the previous value, and I think the XOR is used to reset the latch. I am not sure what is in the other cases of the Case Structures. Strangely the top one is active on a False, whereas the others are on True.
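To give an idea of what "simpler" could look like, here is a rough Python sketch of just the comparison part. It is my guess at the intent, not a translation of the diagram: the function name is made up, the thresholds are the four values I can read off the picture, and the latching/XOR reset is left out because I am not sure what it is meant to do.

```python
THRESHOLDS_S = (15, 30, 45, 59)  # values read off the diagram


def thresholds_exceeded(elapsed_s):
    """Map each threshold to whether the elapsed time is beyond it."""
    return {limit: elapsed_s > limit for limit in THRESHOLDS_S}


flags = thresholds_exceeded(31)
```

One loop over a list of limits replaces the four near-identical parallel sections, so changing or adding a threshold is a one-line edit.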

-

Ah right. Thanks for the clarification.