
Showing results for tags 'timeout'.

-

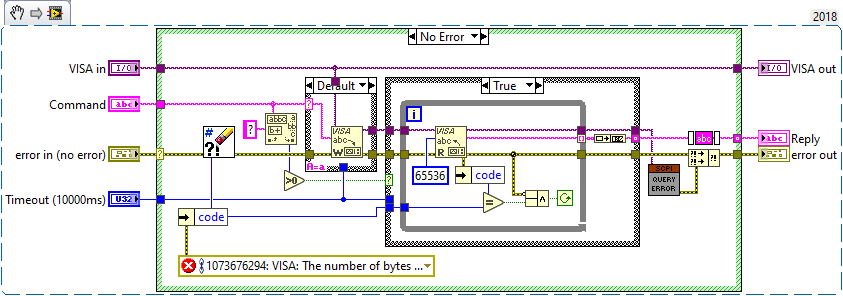

Hi, I'm connecting to a Rigol DS1000Z oscilloscope via USB and using the `:DISP:DATA? ON,0,PNG` command to grab a screenshot, reading out the data in blocks of 65535 bytes until there is no more (see attached VI). This normally works fine, but yesterday I was getting a timeout error. I fired up IO Trace and got this:

> 783. viRead (USB0::0x1AB1::0x04CE::DS1ZA201305475::INSTR (0x00000001), "#9000045852‰PNG.......", 65536 (0x10000), 45864 (0xB328))
> Process ID: 0x000039C8 Thread ID: 0x00001760
> Start Time: 13:13:54.1169 Call Duration 00:00:10.4323
> Status: 0xBFFF0015 (VI_ERROR_TMO)

You can see that 45864 bytes were received, which is exactly what the binary data header specifies (45852 data bytes + 11 header bytes + 1 termination character).

I dumped the reply string into a binary file and set the scope to run so it showed something else on the screen. Sure enough, the error went away. I also dumped a good result into a file. Then I tried to figure out what the problem might have been, but I didn't get anywhere.

Any ideas? It sure looks like a bug in VISA Read, or perhaps an incorrectly escaped reply from the scope? It's very easy to "convert" the reply into the screenshot: just remove the leading 15 bytes (4 bytes from Write to Binary File and 11 bytes from the scope header). And yes, both data files display just fine as PNG. I don't think PNG does an internal checksum, so byte errors would be hard to spot.

Any ideas what could have caused that timeout?
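
For checking the two dumped files offline, a small Python sketch can parse the `#9000045852` block header, strip the prefix bytes, and verify the PNG. Contrary to the guess above, PNG does carry integrity checks: every chunk ends with a CRC-32 computed over its type and data, so a corrupted byte in the image should be detectable. Assumptions: the dump starts with a 4-byte size prefix written by LabVIEW's Write to Binary File (its default "prepend array or string size" behaviour), and the function names are hypothetical.

```python
import struct
import zlib
from typing import Tuple

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def parse_block_header(reply: bytes) -> Tuple[int, int]:
    """Parse an IEEE 488.2 definite-length block header, e.g. b"#9000045852".

    Returns (header_length, data_length).
    """
    if reply[:1] != b"#":
        raise ValueError("reply does not start with '#'")
    ndigits = int(reply[1:2])  # b"9" -> nine length digits follow
    if ndigits == 0:
        raise ValueError("indefinite-length block (#0) not handled here")
    data_len = int(reply[2:2 + ndigits])
    return 2 + ndigits, data_len


def extract_png(dump: bytes, labview_prefix: int = 4) -> bytes:
    """Strip the assumed 4-byte Write to Binary File size prefix and the
    block header, returning exactly the announced number of data bytes."""
    reply = dump[labview_prefix:]
    header_len, data_len = parse_block_header(reply)
    png = reply[header_len:header_len + data_len]
    if len(png) != data_len:
        raise ValueError("dump is shorter than the block header announces")
    return png


def png_chunks_ok(png: bytes) -> bool:
    """Check the PNG signature and the CRC-32 stored after every chunk."""
    if not png.startswith(PNG_SIGNATURE):
        return False
    pos = len(PNG_SIGNATURE)
    while pos + 12 <= len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        end = pos + 12 + length
        if end > len(png):
            return False  # truncated chunk
        ctype = png[pos + 4:pos + 8]
        body = png[pos + 8:pos + 8 + length]
        (stored_crc,) = struct.unpack(">I", png[pos + 8 + length:end])
        # The CRC covers the chunk type and data, not the length field.
        if zlib.crc32(ctype + body) != stored_crc:
            return False
        if ctype == b"IEND":
            return True
        pos = end
    return False  # never reached the IEND chunk
```

Running `png_chunks_ok(extract_png(data))` on the bytes of each dump would show whether the reply from the timed-out read is intact; it does not explain why VISA Read timed out.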

-

I'm writing an application where users can plug in their own MATLAB code via a MathScript "wrapper". I need a way of timing out the MATLAB code in case the user creates an infinite loop. Does anyone know if MathScript provides a native timeout? (Google didn't find anything when I searched "mathscript timeout".) If not, does anyone have any good (hopefully simple) suggestions? Thanks!
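
This sketch does not answer whether MathScript itself offers a timeout. It illustrates a common general pattern instead: run the untrusted code in a separate OS process and kill that process at a deadline, since a runaway loop inside your own process usually cannot be interrupted cleanly. It uses the standard library's `subprocess.run` with its `timeout` parameter, and runs Python code as a stand-in for the user's script; the function name `run_with_timeout` is hypothetical.

```python
import subprocess
import sys
from typing import Tuple


def run_with_timeout(code: str, timeout_s: float) -> Tuple[bool, str]:
    """Run `code` in a separate Python interpreter and kill it if it overruns.

    Returns (finished, stdout); `finished` is False when the deadline hit.
    """
    try:
        result = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # subprocess.run() kills and reaps the child before re-raising.
        return False, ""
    return True, result.stdout
```

Killing a whole process is blunt but reliable: it needs no cooperation from the user's code, whereas a cooperative "check the clock inside the loop" approach only works if every user script includes the check.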

-

In my project I have two tasks: (1) read data from a sensor attached to an Arduino board over serial and display it in LabVIEW, and (2) control the output pins (just ON/OFF) of the Arduino from LabVIEW. For that I am using a Tab control in LabVIEW with two tabs, called Oscilloscope and Input. If I start with the Oscilloscope tab, it works well. But when I come back to the Oscilloscope tab after using the Input tab, it gives a timeout error (VISA Read). If I run it in Highlight Execution mode, it works well. Attached: tab--event.vi

-

Some of my code is giving me behavior I don't understand. I've been talking with NI tech support, but I'm trying to better understand what's going on, because a project down the road is going to push the limits of what TCP can transmit.

I have a PC connected directly to a cRIO-9075 with a cable (no switch involved). I've put together a small test application that, on the RT side, creates an array of 6,000 U32 integers, waits for a connection, and then transmits the length of the array (in bytes) followed by the array itself over TCP every 100 ms. The length and data go out in a single TCP Write. On the PC side, I have a TCP Open, a TCP Read of four bytes to get the length of the data, then a read of the data itself. The second TCP Read does not execute if the first one returns an error. Both reads have a 100 ms timeout.

The error I'm getting is a sporadic timeout (error 56) at the second TCP Read on the PC side. This causes the next read of the data length to come from the middle of my data, so everything I get from then on is invalid. The error occurs anywhere from seconds to hours after transmission starts.

As a sanity check, I did some math on how long it should take to transmit the data. Ignoring TCP overhead, each write should take ~2 ms to transmit.

A workaround seems to be an infinite timeout (-1) for the second TCP Read, but I'm rather leery of having an infinite (or very long) timeout there. Tech support was able to get this working with 250 ms on the second read.

Test VIs uploaded: Test Stream Data.zip
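
The ~2 ms figure checks out: 6,000 U32 values are 24,000 bytes, plus the 4-byte length gives 24,004 bytes, and at an assumed 100 Mbit/s link that is 24,004 × 8 / 10^8 ≈ 1.9 ms of wire time. The loss of sync, though, comes from what happens to bytes already received when the data read times out. A minimal Python sketch of a length-prefixed reader that keeps partial data across timeouts, so a timeout becomes recoverable instead of desynchronizing the stream. Assumptions: the length is a big-endian U32 (LabVIEW's default flattening byte order), and the class name `FrameReader` is hypothetical.

```python
import socket
import struct


class FrameReader:
    """Reads [4-byte big-endian length][payload] frames from a stream socket.

    Bytes received before a timeout stay in self._buf, so a timeout in the
    middle of a frame does not corrupt the next read.
    """

    def __init__(self, sock: socket.socket) -> None:
        self._sock = sock
        self._buf = bytearray()

    def _fill(self, n: int) -> None:
        # Receive until at least n bytes are buffered. A socket.timeout from
        # recv() propagates to the caller with the partial data preserved.
        while len(self._buf) < n:
            chunk = self._sock.recv(65536)
            if not chunk:
                raise ConnectionError("peer closed the connection")
            self._buf.extend(chunk)

    def read_frame(self) -> bytes:
        self._fill(4)
        (length,) = struct.unpack(">I", self._buf[:4])
        self._fill(4 + length)
        frame = bytes(self._buf[4:4 + length])
        del self._buf[:4 + length]  # keep any bytes of the next frame
        return frame
```

After a timeout the caller simply calls `read_frame()` again. Note that Python's socket timeout applies to each `recv()` call, not to the whole frame.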

-

Hi all, I'm new to LAVA, so (kindly) let me know if I screwed up my post.

I have a multi-user web application controlled by a LabVIEW web service. I keep track of all the users through the Web Service > Sessions VIs. This is pretty cool and works really well: I can give each user different levels of authentication, force them to use SSL, track where they go on the website and when, etc.

Now I'd like to keep track of when their session expires. Obviously I can provide a "Logout" link on the client side that ultimately calls the Destroy Session VI, but I can't ensure that every client will click it (how often do you actually sign out of Gmail versus just closing the browser?).

What's the best way to catch the session timeout that is specified in the Create Session VI? It appears that I need a "LV Web Service Request" object even to call the Destroy Session VI, which seems to make matters much more difficult. Is this even possible? Thanks for the help.
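
This sketch does not use LabVIEW's session VIs, and it does not settle whether Destroy Session can be called outside a request. It shows a common server-side pattern instead: record each session's last activity yourself and let a periodic sweep expire idle sessions, so cleanup does not depend on the client ever sending a logout. All names are hypothetical; `on_expire` stands in for whatever cleanup you would run.

```python
import time
from typing import Callable, Dict, List


class SessionTracker:
    """Records each session's last activity and expires sessions that
    have been idle for longer than timeout_s."""

    def __init__(self, timeout_s: float, on_expire: Callable[[str], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s
        self.on_expire = on_expire
        self.clock = clock  # injectable so the logic can be tested
        self.last_seen: Dict[str, float] = {}

    def touch(self, session_id: str) -> None:
        """Call on every request that carries this session."""
        self.last_seen[session_id] = self.clock()

    def logout(self, session_id: str) -> None:
        """Explicit logout: forget the session without calling on_expire."""
        self.last_seen.pop(session_id, None)

    def sweep(self) -> List[str]:
        """Call periodically (e.g. from a timed loop); expires idle sessions."""
        now = self.clock()
        expired = [sid for sid, t in self.last_seen.items()
                   if now - t > self.timeout_s]
        for sid in expired:
            del self.last_seen[sid]
            self.on_expire(sid)
        return expired
```

Because the sweep runs on the server's own schedule, a user who just closes the browser is still cleaned up once the idle time exceeds the timeout.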
