-

Posts

5,015 -

Joined

-

Days Won

312

Everything posted by ShaunR

-

OK. This is how I design systems like this.

1. TCP. Each subsystem has a TCP interface. This allows spinning up "instances" then connecting to them even across networks. You can rationalise the TCP API and I usually use a thin LabVIEW wrapper around SCPI (most of your devices will support SCPI). You can also use it to make non-SCPI-compliant devices into SCPI ones (your Environmental Chamber - EC - is probably one that doesn't support SCPI). If you do this right, you can even script entire tests from a text file just using SCPI commands.

2. Services. Each subsystem offers services. These are for when #1 isn't enough and we need state. A good example of this is your Environmental Chamber. It is likely you will have temperature profiles that control signals and measurements need to be synchronized with. While services may be devices (a DVM, for example), services can also be synchronization logic that sequences multiple devices. If you put that logic in your EC code, it will tie that code to that specific sequence so don't do that. Instead use services to glue other devices (like the DVM and EC) into synchronization processes. Along with #1, this will form the basis of recipes that can incorporate complex state and sequencing. In this way you will compartmentalize your system into reusable modules.

   First thing you should do is make a "Logging" service. Then when your devices error they can report errors for your diagnostics. The second thing should be a service that "views" the log in real-time so you can see what's going on, when it's going on. (This is why we have logging levels).

3. Global State. If you have 1 & 2 this can be anything. It can be a text file with a list of SCPI commands (#1). It can be a service you wrote in #2, TestStand, a web page or a bash/batch script. This is where you use your recipes to fulfill a test requirement.

4. You will need to think carefully about how the subsystems talk to each other. For example, using SCPI, a MEAS:VOLT:DC? command returns almost instantly (command-response pattern). However, for the EC you may want to wait until a particular temperature has been reached before issuing MEAS:VOLT:DC?. The problem here is that SCPI is command-response but the behavior required is event driven. One could make the TCP interface (#1) of the EC accept MEAS:TEMP? where the command doesn't return unless the target setpoint has been reached. However, this won't work reliably and requires internal state and checks for the edge cases. So it may actually be better in #2. There are a number of ways to address these things using #1, #2 or #3 and that is why you are getting the big bucks.

You will notice I haven't mentioned specific technologies here (apart from TCP). For #1 you shouldn't need anything other than reentrant VI's and VI Server. For #2 you can use your favourite foot-shooting method but notice that you are not limited to one type and can choose an architecture for the specific task (they don't all have to be QMH, for example). For #3 you don't even have to use LabVIEW.
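To make point 4 a bit more concrete, here is a rough sketch of a #2-style service that glues the EC and DVM together, written in Python only because a LabVIEW diagram can't be pasted as text. Everything named here is an assumption for illustration: `tcp_query`, `run_script` and `measure_at_setpoint` are made-up names, `SOUR:TEMP` is a hypothetical setpoint command for the wrapped EC, and the wrapper is assumed to answer every command with exactly one line.

```python
import socket
import time
from typing import Callable, Iterable, List

# A Query sends one SCPI line to a subsystem and returns its one-line reply.
Query = Callable[[str], str]


def tcp_query(host: str, port: int, timeout: float = 2.0) -> Query:
    """Build a Query for a subsystem's TCP interface (#1).

    Assumes the wrapper answers every command with exactly one line.
    """
    def query(command: str) -> str:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(command.encode("ascii") + b"\n")
            with sock.makefile("r", encoding="ascii") as reply:
                return reply.readline().strip()
    return query


def run_script(lines: Iterable[str], query: Query) -> List[str]:
    """Play SCPI commands from a text file, one per line; blank lines are skipped."""
    return [query(line.strip()) for line in lines if line.strip()]


def measure_at_setpoint(ec: Query, dvm: Query, target: float,
                        tolerance: float = 0.5, timeout: float = 600.0,
                        poll: float = 1.0,
                        sleep: Callable[[float], None] = time.sleep) -> float:
    """Synchronization service (#2): wait for the EC, then read the DVM."""
    ec(f"SOUR:TEMP {target}")  # hypothetical setpoint command for the EC wrapper
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        # The EC stays plain command-response; the waiting lives in the service.
        if abs(float(ec("MEAS:TEMP?")) - target) <= tolerance:
            return float(dvm("MEAS:VOLT:DC?"))
        sleep(poll)
    raise TimeoutError(f"EC did not reach {target} within {timeout} s")
```

With real devices this might be called as `measure_at_setpoint(tcp_query(ec_host, ec_port), tcp_query(dvm_host, dvm_port), 40.0)`, where the hosts and ports are whatever your wrappers listen on. Keeping the polling and timeout in the service means the EC interface needs no internal state, which is the argument for solving this in #2 rather than #1.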

-

You actually have 2 levels with LLB's (semantically) and that's more than enough for me. I also don't agree with all the private stuff. Protected should be the minimal resolution so people can override if they want to but still be able to modify everything without hacking the base. This only really makes sense in non-LabVIEW languages though so protected might as well be private in LabVIEW. And don't get me started with all that guff on "Friends". But in terms of containers, external users can call what they like as far as I'm concerned but just know only the published API is supported. So making stuff private is a non-issue to me. If I'm feeling generous and want them to call stuff then I make it a Top Level VI in the LLB. Everything else is support stuff for the top level VI's so call it at your peril. I still maintain PPL's are just LLB's wearing straitjackets and foot-shooting holsters.

-

*prerequisite. Packed libraries are another feature that doesn't really solve any problems that you couldn't do with LLB's. At best it is a whole new library type to solve a minor source code control problem.

-

There isn't anything really special about lvlibs. They are basically containers with a couple of bells and whistles. If you look at them with a text editor you will see it's basically a list of VI's in an XML format. The main reason I use them is that they can be protected with the NI 3rd Party Activation Toolkit. A secondary reason I use them is for organisation and partitioning. It would be frowned upon by many but I use lvlibs for the ability to add virtual directories and self-populating directories for organisation and contain the actual VI's in llb's for ease of distribution. I don't see them as a poor man's class, rather an llb with project-like features.

-

This is the modern 2020's equivalent of "works for me".

-

I find it interesting that spam really wasn't an issue until the forums were upgraded. I run old software on my website and I've noticed a reduction in spam attempts as time goes on and the scanners update to newer exploits.

I was getting spam through the on-site contact form as they were bypassing the CAPTCHA. It's prevented with a simple .htaccess RewriteCond but when I recently upgraded the website OS I turned it off. It took a month for a scanner to find it and start spamming and it only sent every hour. A few years ago it took something like 30 minutes and they sent every 5 minutes.

By far the most effective methods to stop spam are:

1. Checking for reverse DNS resolution.
2. Checking against known blacklists (like spamhaus.org).
3. Offering honeypot files or directories (spider traps).

#2 tends to have a low false positive rate but [IMHO] even 1 false positive is unacceptable for mail - although might be acceptable for a forum.

I also wrote a spam plugin for my CMS which basically did the first 2 things above and a couple of other things like checking against a list of common disposable email addresses, checking user agents and so on. The way those things work is they tend to ban the IP address for an amount of time but I didn't want to ban someone that was trying to send a message through the site, maybe because an email had bounced, so I turned it off.
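For anyone wanting to try the first two checks, a minimal Python sketch follows. It is not the plugin described above; the function names are made up for illustration. It relies on the usual DNSBL convention of reversing the IPv4 octets and appending the blacklist zone (`zen.spamhaus.org` is used as the example zone), where any answer means "listed". The resolver is passed in as a parameter so the logic can be exercised without touching the network.

```python
import ipaddress
import socket
from typing import Callable


def dnsbl_query_name(ip: str, zone: str = "zen.spamhaus.org") -> str:
    """Reverse the IPv4 octets and append the blacklist zone."""
    octets = str(ipaddress.IPv4Address(ip)).split(".")
    return ".".join(reversed(octets)) + "." + zone


def is_blacklisted(ip: str, zone: str = "zen.spamhaus.org",
                   resolve: Callable[[str], str] = socket.gethostbyname) -> bool:
    """Check #2: the address is listed if the DNSBL name resolves."""
    try:
        answer = resolve(dnsbl_query_name(ip, zone))
    except OSError:  # name does not resolve: not listed (or the lookup failed)
        return False
    # Spamhaus uses 127.255.255.x to signal a query error, not a listing.
    return answer.startswith("127.") and not answer.startswith("127.255.255.")


def has_reverse_dns(ip: str,
                    lookup: Callable[[str], tuple] = socket.gethostbyaddr) -> bool:
    """Check #1: does the address have a PTR record at all?"""
    try:
        hostname, _aliases, _addresses = lookup(ip)
    except OSError:  # socket.herror and socket.gaierror are both OSError
        return False
    return bool(hostname)
```

Note that a failed lookup is treated as "not listed" here, which errs on the side of letting a message through; that matches the concern above about false positives.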

-

There is no automatic garbage collector. It's an AQ meme that he used to rage about it.

-

Controlling PTZ functionality of an ONIF camera from LABVIEW

ShaunR replied to BTS_detroGuy's topic in Calling External Code

Indeed. It's not a full solution as it doesn't support multiple streams, audio or other encoding types. But if you want to get the audio then you need to add the decoding case (parse is the nomenclature used here) for the audio packets in the read payload case structure.

-

Controlling PTZ functionality of an ONIF camera from LABVIEW

ShaunR replied to BTS_detroGuy's topic in Calling External Code

IIRC there are a couple of RTSP libs around (a while ago now). Some are based on using the VLC DLL's and I even saw one that was pure LabVIEW. Might be worth having a look at them for "inspiration".

-

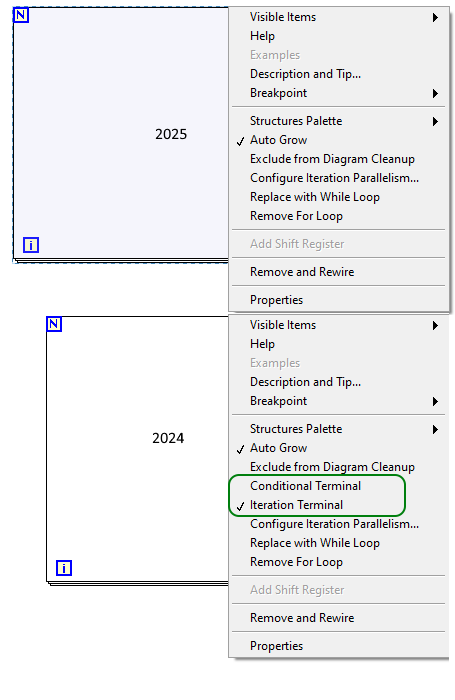

Where'd the conditional terminal go?

ShaunR replied to ShaunR's topic in Development Environment (IDE)

Makes sense. It just goes to show how ingrained workflows are and little things can trip you up. I was right-clicking over the N, over the I. Right-clicking 2 pixels down/up from the edge. Top edge, bottom edge, left, right.

-

Probably not the feedback you are expecting but we really should do something about the nasty root loop API calls in the input API. I have somewhat progressed with this over the years and have the Windows stuff all working for mouse and keyboard (and a little of the Linux) but I don't have a Mac so can't do anything on that. If there is some interest then let me know and I will see if I can allocate time to getting an API together.

-

It's not April yet so I must have missed a memo somewhere. How do I get the For Loop conditional terminal back?

-

-

Strange VIM conversion and break down upon Source Distribution build

ShaunR replied to X___'s topic in LabVIEW General

I have similar problems now and again but it's not VIM's. Some things to try to sniff out the problem:

* Check project files and lvlibs with a text editor. If you have full file paths then that's a problem. File paths should be relative BUT! LabVIEW cannot always use a relative path (for example if it's on a network, different drives et al.). Sometimes LabVIEW also gets confused but you need all paths to be relative even if it means reorganising how you structure your files.
* Do the above and put all dependencies in VI.lib. If you are using source control, checkout dependencies to the VI.lib directory, not your own file organisational preferences (some people like to have them on a different drive, for example). The litmus test for this is that you should be able to move your project to different locations without getting the VI searching dialogue. The search will invariably put a full path in when it finds the file, so don't let it.
* Recursion breaks the project conflict resolution. If you have recursive functions, make sure they are not broken by manual inspection. The usual symptom is that there are no errors in the error dialogue but conflicts exist and VI's are broken somewhere.
* Polymorphic VI's hate recursion. If you are using polymorphic VI's recursively, don't use the polymorphic container (the thing that gives you the drop-down selector)! Only use polymorphic VI's as interfaces to other code, not to themselves. If you are using the polymorphic VI recursively then use the actual VI, not the polymorphic container. If something gets broken you will go round in circles never getting to the broken code and multiple things will be broken.
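The first check (eyeballing project files and lvlibs for full paths) can be roughly automated. The Python sketch below is only that, a sketch, and it rests on assumptions about the file format that you should verify against your own files in a text editor: that item paths are stored in `URL="..."` attributes, that symbolic roots such as vi.lib appear as `/&lt;vilib&gt;/...`, and that a full path starts with `/`, a drive letter or a UNC prefix. The function name is made up.

```python
import re
from typing import List

# Assumption: .lvproj/.lvlib files store item paths as URL="..." attributes.
URL_ATTR = re.compile(r'URL="([^"]*)"')
DRIVE_PATH = re.compile(r"[A-Za-z]:[\\/]")


def find_absolute_urls(text: str) -> List[str]:
    """Return every URL value that looks like a full path rather than a relative one."""
    hits = []
    for url in URL_ATTR.findall(text):
        # Symbolic roots (e.g. /&lt;vilib&gt;/...) move with the install; skip them.
        symbolic = url.startswith(("/&lt;", "/<"))
        absolute = url.startswith(("/", "\\\\")) or bool(DRIVE_PATH.match(url))
        if absolute and not symbolic:
            hits.append(url)
    return hits
```

Feeding it the text of each project and library file (for example `find_absolute_urls(open(path, encoding="utf-8").read())`) and failing a pre-commit check on any hit is one way to catch the searching dialogue sneaking a full path in.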

Nice. If you replace the OpenG VI with the above then we don't need to install all those dependencies for your demo.

What do you expect the cancellation behaviour to be? Press escape while holding the mouse down? Mouse up cancels unless the dragged window is outside the main window? Both? Something else?

I wouldn't want this feature to be a "framework" though. It would need to be much easier than that. Maybe a VI that accepts control references and it just works like above on those controls?

-

You are probably getting a permissions error on the Open (Windows doesn't ordinarily allow writing to "c:") which will yield a null refnum and an err 8 on the open. Your err 5000 becomes a no-op as does the write. You're then clearing that error so when you come to close the null refnum it complains it's an invalid parameter - equivalent to the following. Is it expected behaviour? Yes. Should it report a different error code? Maybe.

-

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

ShaunR replied to PA-Paul's topic in OpenG General Discussions

Faster execution than scripting. Scripting is incredibly slow.

-

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

ShaunR replied to PA-Paul's topic in OpenG General Discussions

Well. You could get it from the file. It's under the block diagram heap (BDHP) which is a very old structure. I personally wouldn't bother for the reasons I stated earlier but it would be much faster than using scripting.

-

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

ShaunR replied to PA-Paul's topic in OpenG General Discussions

Yes. This is exactly what is required. The User32 problem can be resolved either with file path comparison (which you stated earlier) or a list of known DLL names. I could think of a few more ways to make it automatic but I would lean to the latter as the developer could add to the list in unforeseen edge cases. The former might just break the build with no recourse. You seem to have added that for no apparent reason, from what I can tell.
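The "list of known DLL names" option could look something like the Python sketch below. It is not the OpenG builder code; the function name and the contents of the list are hypothetical. It assumes the goal is to turn a trailing 32/64 in a developer's DLL name into LabVIEW's `*` bitness wildcard, while leaving names on the known list (such as user32.dll) alone.

```python
import re
from typing import Iterable

# Hypothetical starter list; the developer extends it for unforeseen edge cases.
KNOWN_SYSTEM_DLLS = ("user32.dll", "kernel32.dll", "shell32.dll", "advapi32.dll")

# A 32 or 64 immediately before the file extension.
_BITNESS_SUFFIX = re.compile(r"(32|64)(?=\.[^.]+$)")


def wildcard_name(file_name: str,
                  known: Iterable[str] = KNOWN_SYSTEM_DLLS) -> str:
    """Replace a trailing 32/64 with '*' unless the DLL is on the known list."""
    if file_name.lower() in {name.lower() for name in known}:
        return file_name  # not part of the distribution: leave untouched
    return _BITNESS_SUFFIX.sub("*", file_name)
```

The appeal over path comparison is that a wrong decision is fixed by editing a list rather than by changing the build logic.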

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

ShaunR replied to PA-Paul's topic in OpenG General Discussions

This is the same as naming a DLL x32 or x64 with extra steps. You are now adding a naming convention to a read linker and a modify linker. It's getting worse, not better.

-

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

ShaunR replied to PA-Paul's topic in OpenG General Discussions

That doesn't help you with user32.dll.

-

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

ShaunR replied to PA-Paul's topic in OpenG General Discussions

That is a given. The only issue I would have there is when VIPM is updated. However, you still have not explained why the "Extract Resources Info From Linker Info.vi" needs modification if all modifications can be achieved in the "Copy Resource Files and Relink VIs__ogb.vi".

-

OpenG LabVIEW Zip 5.0.0-1 - stuck at the readme

ShaunR replied to PA-Paul's topic in OpenG General Discussions

I think it was just tongue-in-cheek whimsy. I'm still not convinced it needs fixing around there at all. As far as I can tell, it only needs to be fixed at the original VI you proffered. The only issue you seemed to have is when a binary that isn't part of the developer's distribution has a 32 or 64 on it (like user32.dll). I'd be more inclined to think of your initial suggestion of comparing paths to circumvent that though.