Everything posted by Rolf Kalbermatter

Files end up as directories in zip archive

Rolf Kalbermatter replied to Mads's topic in OpenG General Discussions

Thanks, I'll have a look at this over the weekend. EDIT: I did have a look at this and got the newer code working a bit further, but I need to test it on a real x64 cRIO system. I hope to get my hands on one this week.

Direct access to camera memory

Rolf Kalbermatter replied to RayR's topic in Machine Vision and Imaging

Without documentation of the .Net interface for this component, there is no way to say whether that would work. The refnum that is returned could be just a .Net object wrapping an IntPtr memory pointer, as you hope, but it could also be a real object that is more than just a memory pointer. Without seeing the actual underlying object class name it's impossible to say anything about it. It is not clear to me whether that Memory object is just the description of the image buffer in the camera, with methods to transfer the data to the computer, or whether it only manages the memory buffer on the local computer AFTER the driver has moved everything over the wire. If it is the first, your IntPtr conversion has absolutely no chance to work, since the "address" that refnum contains is not a locally mapped virtual memory address on your computer but rather a description of the remote buffer in the camera, and the CopyToArray method does a lot more than just shuffling data between two memory locations on your local computer.

If it is indeed just a local memory pointer as you hope, I would not even try to copy anything into an intermediate array buffer, but rather use the Advanced IMAQ function "IMAQ Map Memory Pointer.vi" to retrieve the internal memory buffer of the IMAQ image and then copy the data directly in there. However, you usually cannot do that with a single memcpy() call for the entire image, since IMAQ images have extra border pixels, which give each image line several more bytes in memory than a line of your source image contains. So without access to the documentation for your .Net component we really can't say anything more about this.
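If the pointer does turn out to be local, the line-by-line copy could look roughly like this C sketch. It assumes you already have a pointer to the first visible pixel of the IMAQ image and its line width including the border pixels (which IMAQ Map Memory Pointer.vi should report; check its documentation), and that the source image is tightly packed with no line padding. `copy_rows_to_strided` is a hypothetical helper, not part of any NI API.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: copies a tightly packed source image into a
 * destination buffer whose lines are longer than the visible width
 * (for example because of IMAQ border pixels).
 *
 * dst           pointer to the first visible pixel of the destination
 * dstLineBytes  bytes from one destination line to the next
 *               (line width including border * bytes per pixel)
 * src           pointer to the first pixel of the packed source image
 * rowBytes      visible bytes per line (width * bytes per pixel)
 * height        number of lines to copy
 */
void copy_rows_to_strided(unsigned char *dst, size_t dstLineBytes,
                          const unsigned char *src, size_t rowBytes,
                          size_t height)
{
    for (size_t y = 0; y < height; y++) {
        /* One memcpy per line; the bytes between rowBytes and
           dstLineBytes (the border area) are left untouched. */
        memcpy(dst + y * dstLineBytes, src + y * rowBytes, rowBytes);
    }
}
```

A single memcpy() of `rowBytes * height` bytes would only be correct if `dstLineBytes == rowBytes`, which is exactly what the border pixels prevent.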

Files end up as directories in zip archive

Rolf Kalbermatter replied to Mads's topic in OpenG General Discussions

How do you look at those ZIP files? Through the OpenG library again, or with a Unix ZIP command line utility?

CINTOOLS for ARM exists? Resize String in c++

Rolf Kalbermatter replied to x y z's topic in Calling External Code

The LabVIEW PDA Toolkit is no longer supported, as you may know, and it is the only way to support the Windows CE platform. That said, labview.lib basically does something like the following, which you can easily program yourself for a limited number of LabVIEW C functions:

```c
#include <windows.h>
#include "extcode.h"  /* LabVIEW cintools: MgErr, CStr, ProcPtr, noErr, rFNotFound */

MgErr GetLVFunctionPtr(CStr lvFuncName, ProcPtr *procPtr)
{
    /* First try the executable itself (development system or an
       application that has the runtime linked in). */
    HMODULE libHandle = GetModuleHandle(NULL);

    *procPtr = NULL;
    if (libHandle) {
        *procPtr = (ProcPtr)GetProcAddress(libHandle, (LPCSTR)lvFuncName);
    }
    /* Fall back to the LabVIEW runtime DLL. */
    if (!*procPtr) {
        libHandle = GetModuleHandle(TEXT("lvrt.dll"));
        if (libHandle) {
            *procPtr = (ProcPtr)GetProcAddress(libHandle, (LPCSTR)lvFuncName);
        }
    }
    if (!*procPtr) {
        return rFNotFound;
    }
    return noErr;
}
```

The runtime DLL lvrt.dll may have a different name on the Windows CE platform, or maybe it isn't even a DLL but gets linked entirely into the LabVIEW executable. I never worked with the PDA toolkit, so I don't know about that.

Call Library Node Crashes LV in Edit Mode

Rolf Kalbermatter replied to viSci's topic in LabVIEW General

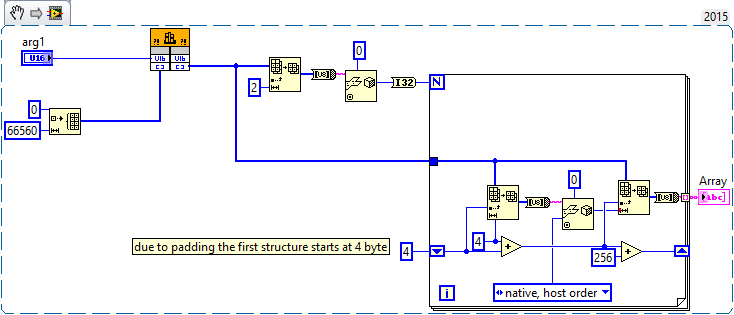

You should probably simply pass a byte array (or string) of 2 bytes + 2 bytes of padding + 256 * (4 + 256) bytes, i.e. 66564 bytes, as an array data pointer, and then extract the data from that byte array through indexing and Unflatten From String.
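Since the original C declaration is only in the screenshot, here is a hypothetical C struct that matches the sizes given (a 16-bit count, 2 padding bytes, then 256 records of a 32-bit value plus a 256-byte name). It shows where the padding comes from and at which offsets you would index the flat byte array. The type and field names are assumptions. On the LabVIEW side, keep in mind that Unflatten From String defaults to big-endian byte order, while the DLL writes the buffer in the host's native order (little-endian on x86), so set the byte order input accordingly.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define ENTRY_COUNT 256
#define NAME_LEN    256

/* Hypothetical record layout: 4 + 256 = 260 bytes. */
typedef struct {
    int32_t value;
    char    name[NAME_LEN];
} Entry;

/* The compiler inserts 2 padding bytes after 'count' so that the
   int32_t in the first Entry is 4-byte aligned:
   2 + 2 + 256 * 260 = 66564 bytes. */
typedef struct {
    uint16_t count;
    Entry    entries[ENTRY_COUNT];
} EntryTable;

/* Offsets inside the flat byte buffer passed as array data pointer. */
#define OFFSET_ENTRIES 4u
#define ENTRY_SIZE     260u
#define TABLE_SIZE     (OFFSET_ENTRIES + ENTRY_COUNT * ENTRY_SIZE)

/* Reads the value of record i, like indexing the byte array on the
   diagram and unflattening 4 bytes in native byte order. */
int32_t entry_value(const unsigned char *buf, size_t i)
{
    int32_t v;
    memcpy(&v, buf + OFFSET_ENTRIES + i * ENTRY_SIZE, sizeof v);
    return v;
}

/* Returns the NUL-terminated name of record i. */
const char *entry_name(const unsigned char *buf, size_t i)
{
    return (const char *)(buf + OFFSET_ENTRIES + i * ENTRY_SIZE + sizeof(int32_t));
}
```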

Create objects in different LabVIEW versions

Rolf Kalbermatter replied to Leif's topic in Object-Oriented Programming

If you talk about patterns, then this follows the factory pattern. The parent class here is the interface, the child classes are the specific implementations, and yes, you of course cannot invoke methods that are only present in one of the child classes, as you only ever call the parent (interface) class. Theoretically you could try to cast the parent class to a specific class and then invoke a class-specific property or method on it, but this doesn't work in this specific case: the cast would load that specific class explicitly and break the code when you try to execute it in a LabVIEW version other than the one the class you cast to was made for.
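For readers more at home in C, the same factory idea can be sketched with a table of function pointers. All names are hypothetical: implementations A and B stand in for the version-specific child classes, and `Device` plays the role of the parent (interface) class. Callers only ever see what the factory returns. Code that referenced implementation B's symbols directly would need B to be present at link or load time, which is roughly the C equivalent of why casting to a version-specific class breaks loading in the other LabVIEW version.

```c
#include <stddef.h>

typedef struct Device Device;

/* The "interface": the only operations callers may use. */
typedef struct {
    const char *(*describe)(const Device *self);
} DeviceVTable;

struct Device {
    const DeviceVTable *vt;
};

/* Two hypothetical implementations (the "child classes"). */
static const char *describe_a(const Device *self) { (void)self; return "implementation A"; }
static const char *describe_b(const Device *self) { (void)self; return "implementation B"; }

static const DeviceVTable vt_a = { describe_a };
static const DeviceVTable vt_b = { describe_b };
static Device dev_a = { &vt_a };
static Device dev_b = { &vt_b };

/* Factory: picks the implementation at run time. */
Device *create_device(int version)
{
    switch (version) {
    case 1:  return &dev_a;
    case 2:  return &dev_b;
    default: return NULL;   /* no implementation for this version */
    }
}
```

Usage: `Device *d = create_device(2); d->vt->describe(d);` calls implementation B without the caller ever naming it.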

Error accessing site when not logged in.

Rolf Kalbermatter replied to ShaunR's topic in Site Feedback & Support

I'm also seeing it in Chrome on Windows when not logged in.

TortoiseSVN (plus command line tools for a few simple LabVIEW tools), both at work and on a private Synology NAS at home.

Just Downloaded and Installed LabVIEW NXG.....

Rolf Kalbermatter replied to smarlow's topic in LAVA Lounge

Well, Windows IoT must be based on Windows RT or its successor, as typical IoT devices do not use an Intel x86 CPU but usually an ARM or some similar CPU. And the Windows IoT page says: "Windows 10 IoT Core supports hundreds of devices running on ARM or x86/x64 architectures." Now I don't think they can limit that one to MS Store app installs only, so they presumably don't apply that restriction on IoT, but technically it would seem to be based on the same .Net-centric kernel as Windows RT. And in order to provide the touted "write once, run on all of them", they will push the creation of .Net IL assemblies rather than any native binary code. Maybe it's not even possible to use native binary code for this platform. At some point they did promise an x86 emulator for the Windows RT platform (supposedly slated for a Windows RT 8.1 update) in order to lessen the pain of a very limited offering in the App Store, but I don't think that ever really materialized. CPU architecture emulation has been tried many times, and while it can work, it never was a real success, except for the 68k emulator in the PPC Macs, which worked amazingly well for most applications that didn't make use of dirty tricks.

Just Downloaded and Installed LabVIEW NXG.....

Rolf Kalbermatter replied to smarlow's topic in LAVA Lounge

Windows RT and Windows Embedded are two very different animals. Windows Embedded is a more modular, configurable version of desktop Windows for x86, while Windows RT is a .Net based system that contains a kernel specifically designed for a .Net system, without any of the normal Win32 API interface. Windows Embedded only runs on x86 hardware, while Windows RT can run on ARM and potentially other embedded CPU hardware. On the other hand, Windows Embedded can run .Net CLR applications and x86 native applications, while Windows RT only runs .Net CLR applications. RT here doesn't stand for RealTime but is assumed to refer to the WinRT system, which basically uses the .Net intermediate code representation for all user space applications to isolate application code from the CPU specific components. Windows RT was officially only released for Windows 8 and 8.1, but the new Windows S system that they plan to release seems to be built on the same principle, strictly limiting application installation to the Microsoft Store and only for apps that are fully .Net CLR compatible, meaning they can't really include CPU native binary components. These limitations make it hard for anyone to release hardware devices based on this architecture, as only Microsoft Store apps are supported. But I think NI might be big enough to negotiate a special deal with Microsoft for a customized version that can install applications from an NI App Store. Perfect monetization! However, given the track record Microsoft has with Windows CE, Phone, Mobile, RT and now S, all of which except S (which has yet to be released) were basically discontinued after some time, I would like to think that NI is very wary of betting on such an approach.
For the current realtime platforms NI sells, I would guess that ARM support is still pretty important for the lower cost hardware units, so using Windows Embedded alone is not really feasible. If NI could use the Windows RT based approach for those units, they might get away with implementing an LLVM to .Net IL code backend for them, but since Windows RT is already kind of dead again, that is not going to happen. Therefore I guess NI Linux RT is not going away anytime soon. Yes, the new WebVI technology based on HTML5 is likely the horse NI is betting on for future cross-platform UI applications that will also run on Android, iOS and other platforms with a working web browser. Development for that, however, is likely going to be Windows only for a long time.

Just Downloaded and Installed LabVIEW NXG.....

Rolf Kalbermatter replied to smarlow's topic in LAVA Lounge

No, .Net Core is open source, but .Net is quite a different story. The difference is akin to saying that MacOS X is open source because the underlying Mach kernel is BSD licensed!

Just Downloaded and Installed LabVIEW NXG.....

Rolf Kalbermatter replied to smarlow's topic in LAVA Lounge

That would mean trashing NI Linux RT and going with a special variant of Windows RT for RT (sic). I'm not yet sure that NI is prepared to trash NI Linux RT and introduce yet another RT platform. But stranger things have happened.

Just Downloaded and Installed LabVIEW NXG.....

Rolf Kalbermatter replied to smarlow's topic in LAVA Lounge

There was some assurance in the past that classic LabVIEW will remain a fully supported product for 10 years after the first release of NXG. With 1.0 being released at this NI Week, that would end LabVIEW classic support with LabVIEW 2026. And yes, .Net is a pretty heavy part of the new UI of LabVIEW NXG (the whole backend with code compiler and execution support is for the most part the same as in current LabVIEW classic). Supposedly this makes integrating .Net controls a lot easier, but it makes my hopes for a non-Windows version of LabVIEW NXG go down the drain. Sure, they will have to support RT development in LabVIEW NXG, which means porting the whole host part of LabVIEW RT to 64 bit too, but I doubt it will support more than a very basic UI like nowadays on the cRIOs with onboard video capability. Full .Net support on platforms like Linux, Mac, iOS and Android is most likely going to remain a wet dream forever, despite Microsoft's open source .Net Core initiative (another example of "if you can't beat them, embrace and isolate them").

Accessing Microsoft Sharepoint with LabVIEW

Rolf Kalbermatter replied to Rixa79's topic in Database and File IO

Definitely! Directly accessing the Sharepoint SQL Server database is a deadly sin that immediately puts your entire Sharepoint solution into fully unsupported mode as far as Microsoft is concerned, even if you only do queries.

Load lvlbp from different locations on disk

Rolf Kalbermatter replied to pawhan11's topic in Development Environment (IDE)

You usually need to scroll down the list until you find issues that have at least one valid resolution option. Higher-level conflicts that depend on lower-level conflicts can't be resolved before those lower-level conflicts are resolved.

Load lvlbp from different locations on disk

Rolf Kalbermatter replied to pawhan11's topic in Development Environment (IDE)

It doesn't need to. The LabVIEW project is only one of several places that store the location of the PPL. Each VI using a function from a PPL stores its entire path too, and will then see a conflict when it is loaded inside a project that has a PPL with the same name at another location. There is no trivial way to fix that other than going through the resolve conflict dialog and confirming for each conflict where the VI should be loaded from from now on. Old LabVIEW versions (long before PPLs even existed) did not do such path-restrictive loading: if a VI with the wanted name was already loaded, they happily relinked to that VI, which could easily get you into very nasty cross-linking issues with little or no indication that this had happened. The result was often a completely messed up application if you accidentally confirmed the save dialog when closing the VI. The solution was to only link to a subVI if it was found at the same location as when the calling VI was saved. With PPLs this got more complicated, and they chose the most restrictive mode for relinking in order to prevent inadvertently cross-linking your VI libraries. The alternative would be that, with two libraries of the same name in different locations, you could end up loading some VIs from one of them and others from the other library, potentially creating a total mess.
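The difference between the old name-based relinking and path-restrictive relinking can be modelled in a few lines of C. This is a conceptual sketch only, with hypothetical types and function names; it does not reflect how LabVIEW actually implements VI linking.

```c
#include <stddef.h>
#include <string.h>

/* Conceptual model: a VI that is already in memory. */
typedef struct {
    const char *name;   /* e.g. "Util.vi" */
    const char *path;   /* full path it was loaded from */
} LoadedVI;

/* Old behavior: any loaded VI with the same name satisfies the link,
   no matter where it came from, so cross-linking goes unnoticed. */
const LoadedVI *link_by_name(const LoadedVI *vis, size_t n, const char *name)
{
    for (size_t i = 0; i < n; i++)
        if (strcmp(vis[i].name, name) == 0)
            return &vis[i];
    return NULL;
}

/* Path-restrictive behavior: only link if the subVI is found at the
   location stored when the caller was saved; NULL means a conflict
   the user has to resolve explicitly. */
const LoadedVI *link_by_path(const LoadedVI *vis, size_t n,
                             const char *name, const char *savedPath)
{
    for (size_t i = 0; i < n; i++)
        if (strcmp(vis[i].name, name) == 0 &&
            strcmp(vis[i].path, savedPath) == 0)
            return &vis[i];
    return NULL;
}
```

With `Util.vi` loaded from one library, a caller saved against a same-named VI in another library silently links under the first policy but reports a conflict under the second.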

How do you debug your RT code running on Linux targets ?

Rolf Kalbermatter replied to Zyl's topic in Real-Time

Unfortunately, 27 kudos is very little! Many of the ideas that got implemented had at least 400, and even that doesn't at all guarantee that something gets implemented.

How do you debug your RT code running on Linux targets ?

Rolf Kalbermatter replied to Zyl's topic in Real-Time

That's of course another possibility, but the NI Syslog Library works well enough for us. It doesn't plug directly into the Linux syslog, but that is not a big problem in our case. It depends. In a production environment it can be pretty handy to have a live view of all the log messages, especially if you end up having multiple cRIOs all over the place that interact with each other. But it is always a tricky decision between logging as much as possible and then not seeing the needle in the haystack, or limiting logging and possibly missing the most important event that shows where things go wrong. With a live viewer you get a quick overview, but if you log a lot it will usually not be very useful, and you need to look at the saved log file afterwards anyhow to analyse the whole operation. Generally, once debugging is done and the debug message generation has been disabled, a live viewer is very handy to get an overview of the system, where only very important system messages and errors still get logged.

How do you debug your RT code running on Linux targets ?

Rolf Kalbermatter replied to Zyl's topic in Real-Time

Well, as far as the syslog functionality itself is concerned, we simply make use of the library provided by NI Systems Engineering that you can download through VIPM. It is a pure LabVIEW VI library using the UDP functions, and that should work on all systems. As to having a system console on Linux, Linux actually comes with many ways to do that, so I'm not sure why it couldn't be done. The problem under Linux is not that there are none, but rather that there are so many different solutions that NI maybe decided not to use any specific one, as Unix users can be pretty particular about what they want to use and easily find everything else simply useless.
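As an illustration of how little is needed on the wire, here is a C sketch of sending one syslog message over UDP with POSIX sockets (as available on NI Linux RT). It assumes the RFC 5424 header layout and the standard syslog UDP port 514; the NI library is written in LabVIEW and may format its messages differently, and the function names are hypothetical.

```c
#define _POSIX_C_SOURCE 200112L
#include <stdio.h>
#include <string.h>
#include <sys/types.h>
#include <sys/socket.h>
#include <netinet/in.h>
#include <arpa/inet.h>
#include <unistd.h>

/* Builds a minimal RFC 5424 line:
   <PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID SD MSG
   using "-" (nil value) for timestamp, procid, msgid and structured data.
   PRI = facility * 8 + severity. */
int format_syslog(char *out, size_t outLen, int facility, int severity,
                  const char *host, const char *app, const char *msg)
{
    return snprintf(out, outLen, "<%d>1 - %s %s - - - %s",
                    facility * 8 + severity, host, app, msg);
}

/* Sends one formatted line as a single UDP datagram to port 514.
   Returns 0 on success, -1 on any error. */
int send_syslog(const char *serverIp, const char *line)
{
    struct sockaddr_in addr;
    memset(&addr, 0, sizeof addr);
    addr.sin_family = AF_INET;
    addr.sin_port = htons(514);
    if (inet_pton(AF_INET, serverIp, &addr.sin_addr) != 1)
        return -1;                      /* not a dotted IPv4 address */

    int fd = socket(AF_INET, SOCK_DGRAM, 0);
    if (fd < 0)
        return -1;
    ssize_t n = sendto(fd, line, strlen(line), 0,
                       (const struct sockaddr *)&addr, sizeof addr);
    close(fd);
    return n < 0 ? -1 : 0;
}
```

For example, facility local0 (16) and severity informational (6) give PRI 134, so `format_syslog(buf, sizeof buf, 16, 6, "crio1", "myapp", "started")` yields `<134>1 - crio1 myapp - - - started`. Because it is plain UDP, any syslog viewer on the network can pick these messages up.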

How do you debug your RT code running on Linux targets ?

Rolf Kalbermatter replied to Zyl's topic in Real-Time

We don't use VeriStand, but we definitely use syslog in our RT applications quite extensively. In fact, we use a small Logger class library that implements either file or syslog logging. I'm not sure what you would consider a pain in getting such a solution working in VeriStand, though. Somewhere during your initialization you configure and enable the syslog (or file log), and then you simply have a Logger VI that you can drop in anywhere you want. Ours is a polymorphic VI, with one version acting as a replacement for the General Error Handler.vi and the other for simply reporting arbitrary messages to the logging engine. After that you can use any of the various syslog viewer applications to get live updates of the messages on your development computer or anywhere else on the local network.
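The design described here (one entry point, an exchangeable backend, and a severity threshold so that debug messages can be switched off once debugging is done) can be sketched in C as follows. The Logger itself is a LabVIEW class library, so this is only an analogy: the names, the severity values (borrowed from the syslog numbering) and the message format are assumptions, and only a file backend is shown; a syslog backend would plug into the same `write` function pointer.

```c
#include <stdio.h>

/* Syslog-style severities: lower numbers are more important. */
enum { SEV_ERROR = 3, SEV_WARNING = 4, SEV_INFO = 6, SEV_DEBUG = 7 };

typedef struct Logger Logger;
struct Logger {
    int   threshold;   /* messages with severity > threshold are dropped */
    void (*write)(Logger *self, int severity, const char *msg);
    FILE *file;        /* used by the file backend only */
};

/* File backend: one line per message. */
static void write_file(Logger *self, int severity, const char *msg)
{
    fprintf(self->file, "[%d] %s\n", severity, msg);
    fflush(self->file);
}

/* The single "Logger VI" that can be dropped in anywhere. */
void log_msg(Logger *lg, int severity, const char *msg)
{
    if (severity <= lg->threshold)
        lg->write(lg, severity, msg);
}

/* Error variant, in the spirit of a General Error Handler replacement:
   does nothing when there is no error. */
void log_error(Logger *lg, int code, const char *source)
{
    char buf[256];
    if (code == 0)
        return;
    snprintf(buf, sizeof buf, "Error %d at %s", code, source);
    log_msg(lg, SEV_ERROR, buf);
}
```

Raising `threshold` to `SEV_DEBUG` during debugging and lowering it to `SEV_WARNING` afterwards is what keeps a live viewer readable in production.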

View Executable on Web browser

Rolf Kalbermatter replied to Cat's topic in Remote Control, Monitoring and the Internet

That sounds a bit optimistic considering that all major web browsers nowadays disable Flash by default and some have definite plans to remove it altogether. The same applies to the Silverlight plugin, which Microsoft stopped developing years ago and whose support is marginal today (security fixes only).

That is not entirely true, depending on your more or less strict definition of a garbage collector. You are correct that LabVIEW does allocate and deallocate memory blocks explicitly, rather than just depending on a garbage collector to scan all the memory objects periodically and determine what can be deallocated. However, LabVIEW does some sort of memory retention on the diagram, where blocks are not automatically deallocated whenever they go out of scope, because they can then simply be reused in the next loop iteration or the next run of the VI. And there is also some sort of low-level memory management where LabVIEW usually doesn't return memory to the system heap whenever it is released inside LabVIEW, but instead holds onto it for future memory requests. However, this part has been changed several times in the history of LabVIEW, with early versions having a very elaborate memory manager scheme built in, at some point even using a third-party memory manager called Great Circle, in order to improve on the rather simplistic memory management scheme of Windows 3.1 (and MacOS Classic) and also to allow much more fine-grained debugging options for memory usage. More recent versions of LabVIEW have shed many of these layers and rely much more on the memory management capabilities of the underlying host platform. For good reasons! Creating a good, performant and, most importantly, flawless memory manager is an entire art in itself.
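The idea of holding onto released memory for future requests, instead of returning it to the system heap, can be illustrated with a minimal free-list cache for fixed-size blocks. This is a generic technique written in C for illustration, with hypothetical names; it is not LabVIEW's actual memory manager.

```c
#include <stdlib.h>

/* A released block is reused to store the link to the next free block. */
typedef struct FreeNode { struct FreeNode *next; } FreeNode;

typedef struct {
    size_t    blockSize;   /* must be >= sizeof(FreeNode) */
    FreeNode *freeList;    /* blocks retained for reuse */
} BlockCache;

void *cache_alloc(BlockCache *c)
{
    if (c->freeList) {               /* reuse a retained block */
        FreeNode *n = c->freeList;
        c->freeList = n->next;
        return n;
    }
    return malloc(c->blockSize);     /* otherwise ask the system heap */
}

/* Keeps the block on the free list instead of calling free(). */
void cache_release(BlockCache *c, void *p)
{
    FreeNode *n = p;
    n->next = c->freeList;
    c->freeList = n;
}

/* Finally hands all retained blocks back to the system heap. */
void cache_destroy(BlockCache *c)
{
    while (c->freeList) {
        FreeNode *n = c->freeList;
        c->freeList = n->next;
        free(n);
    }
}
```

The trade-off is the one described in the post: reuse is fast and avoids heap fragmentation, but memory stays reserved by the application until the cache is explicitly emptied.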

A Rolf Kalbermatter Article - External Code in LabVIEW

Rolf Kalbermatter replied to Tomi Maila's topic in Announcements

I have recently resurrected these articles under https://blog.kalbermatter.nl

Communicate with Omron E5CC using Modbus

Rolf Kalbermatter replied to Nathan_MerlinIC's topic in Hardware

That's the status return value of the viRead() function and is meant as a warning: "The number of bytes transferred is equal to the requested input count. More data might be available." And as you can see, viRead() is called for the session COM12 with a request for 0 bytes, so something is not quite set up right, since a read of 0 bytes is pretty much a "no operation".
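To avoid the 0-byte read, the byte count passed to VISA Read (viRead()) should match the reply you expect. The C sketch below assumes the E5CC is used in Modbus RTU mode over the serial port and that you read holding registers with function 0x03; the helper names are hypothetical, while the frame layout and the CRC-16/MODBUS parameters follow the Modbus RTU specification.

```c
#include <stddef.h>
#include <stdint.h>

/* Bytes to request for a "Read Holding Registers" (0x03) reply:
   address(1) + function(1) + byte count(1) + 2*N data + CRC(2). */
size_t modbus_rtu_read_reply_len(unsigned registerCount)
{
    return 5u + 2u * registerCount;
}

/* An exception reply is always address + (function | 0x80)
   + exception code + CRC(2) = 5 bytes. */
#define MODBUS_RTU_EXCEPTION_LEN 5u

/* CRC-16/MODBUS: initial value 0xFFFF, reflected polynomial 0xA001.
   The result is appended to the frame low byte first. */
uint16_t modbus_crc16(const uint8_t *data, size_t len)
{
    uint16_t crc = 0xFFFF;
    for (size_t i = 0; i < len; i++) {
        crc ^= data[i];
        for (int bit = 0; bit < 8; bit++)
            crc = (crc & 1u) ? (uint16_t)((crc >> 1) ^ 0xA001u)
                             : (uint16_t)(crc >> 1);
    }
    return crc;
}
```

Reading 2 registers therefore means requesting 9 bytes. Checking the CRC of what arrives tells you whether you got a complete, valid frame or need to look at the exception length instead.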