Everything posted by Rolf Kalbermatter

-

Problem with dll for Image processing

Rolf Kalbermatter replied to S_1's topic in Machine Vision and Imaging

You definitely need to show your DLL function prototype and the diagram that calls it, preferably as a VI and not just an image. What you are asking us to do is like showing us a picture of your car and asking why its engine doesn't run!

I understand your concerns, but the reality is that any multiplatform widget library (be it wxWidgets, Qt, Java, LabVIEW or whatever else you can come up with) is always going to be limited to the least common denominator of all supported platforms. Anything that doesn't exist natively on even a single platform has to be either left out or simulated in usually very involved and complicated ways that make the code difficult to understand and maintain. The LabVIEW window manager does contain quite a few such hacks to make things like subwindows, Z ordering, menus and common message handling at least somewhat uniform for the upper layers of LabVIEW. They are hacky enough to make opening the low-level APIs of this manager to other users a pretty nasty business. I'm sure there are other, marketing-driven reasons too why Apple didn't include a cfWindow interface to NSWindow in its CoreFoundation libraries, but even if they had, they certainly wouldn't have tried to map it to the WinAPI as they did with most other CoreFoundation libraries.

-

And that is exactly what LabVIEW does. Its window manager component is a somewhat hairy piece of code that deals with the very platform-specific issues and tries to merge highly diverging paradigms into one common interface. It makes part of that functionality available through VI Server, and a little more through an undocumented C interface, which lacks a few fundamental accessors to make it useful outside of LabVIEW's internal needs. Yes, "one" could! And what you describe is mostly how it would need to be done. But are you volunteering? Be prepared to deal with highly diverging paradigms of what a window means on the different platforms and how it is managed by the OS and/or your application! And many hours of solitude in an ivory tower with lots of caffeine, torn-out hair and swearing when dealing with threading issues like the LabVIEW root loop and other platform-specific limitations. I don't see the benefit of that exercise and won't go there! What for? For MDI applications? Sorry, but I find MDI one of the worst implementation choices for almost every application out there.

-

"/usr/lib" should be in the standard search path for libraries, and if the library was properly installed with ldconfig (which I suppose should be done automatically by the OPKG script) then LabVIEW should be able to find it. Note that on Linux platforms LabVIEW will automatically try to prepend "lib" to the library name if it can't find the library with its wildcard name. So even "sqlite3.*" should work. However I'm not entirely sure about if that also happens when you explicitedly wire the library path to the Call Library Node. But I can guarantee you that it is done for library names directly entered in the Call Library Node configuration.

-

Ahh licensing! Well, I'm also in the last stage of finalizing a license solution for all LabVIEW platforms. Yes, including all possible NI RT targets!

-

Good luck with that! On Linux the native handle is supposedly the X Window System Window datatype. On Mac I would guess (I haven't looked at it, so this is based entirely on assumptions) that for the 32-bit version it is the Carbon WindowPtr and for the 64-bit version it is an NSWindow pointer (and yes, this seems to require Objective-C; there doesn't seem to be a CoreFoundation C API for this in 64-bit). So you have at least 4 ENTIRELY different datatypes for the native window handle, each with an ENTIRELY different API to deal with! And no, the Call Library Node cannot access Objective-C APIs, for the same reason it can't access C++ APIs: there is no publicly accepted standard for the ABI of compiled OOP object code. I guess the Objective-C interface could be regarded as a sort of standard, at least on the Mac, since anyone doing it differently from the GNU C toolchain included in Xcode is going to isolate himself. For C++ there unfortunately exists no such unofficial standard.

-

That's actually covered in that document. Any child references of the front panel are "static" references. When VI Server was pretty new (around LabVIEW 5.1), those were in fact dynamic references that always had to be closed explicitly. However, in a later version of LabVIEW that was changed for performance reasons. If you attempt to close such a reference, the Close Reference node is in fact simply a no-op. This is documented, and some people like to go to great lengths to always know exactly which references need to be closed and which don't, in order not to use the Close Reference node unnecessarily. My personal opinion is that this is a pretty useless way to spend your time and energy, and simply always using the Close Reference node anyway is the quickest and simplest solution. One way to reliably detect whether a returned refnum is static or dynamic is to place the node that produces the refnum into a loop that executes at least twice and typecast the refnum to an int32. If the numeric value stays the same, it is a static refnum; otherwise it is dynamic and needs to be closed explicitly to avoid unnecessarily hogging system resources.
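The loop-and-typecast check can be modelled in a few lines of Python. The two refnum sources below are hypothetical stand-ins for LabVIEW nodes (no LabVIEW API is involved), and `ctypes.c_int32` only mimics the Type Cast to int32.

```python
import ctypes
import itertools

def is_static_refnum(get_refnum, iterations=2):
    """Call a refnum-producing 'node' repeatedly and compare the int32 values.

    Same value every time -> static refnum (closing it is a no-op).
    Different values -> dynamic refnum that must be closed explicitly.
    """
    if iterations < 2:
        raise ValueError("the loop must execute at least twice")
    # Emulate 'Type Cast to int32' on each refnum returned inside the loop
    values = {ctypes.c_int32(get_refnum()).value for _ in range(iterations)}
    return len(values) == 1

# Hypothetical refnum sources, for illustration only
def static_source():
    return 0x2C600001                      # same value on every call

_next_ref = itertools.count(0x1A000001)
def dynamic_source():
    return next(_next_ref)                 # a new value on every call

print(is_static_refnum(static_source), is_static_refnum(dynamic_source))  # True False
```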

-

As if that would be any better! I have seen very bizarre behaviour at customers' sites with both McAfee and Norton, and I have had a few pretty bad experiences myself with McAfee, bad enough that we did not renew the license. On one hand they like to be notoriously present in everything you do; on the other hand their UI offers few or no configuration options anymore. LabVIEW does all kinds of things when starting up, which involves opening many files all over the place. Virus scanners like Norton and McAfee like to intercept every one of those accesses, and not in a way whose performance impact can be ignored. That works fine if only a few files are involved, but it goes completely awry when a high number of files is queried during a programmatic operation. Unfortunately the behaviour doesn't seem to be consistent from machine to machine. These virus scanners cause trouble on some machines while the same version seems to work fine on others, and it is almost impossible to analyze why. But removing them also makes such problems go away, so go figure.

-

One reason why EtherCAT slave support isn't trivial is that you need to license it from the EtherCAT consortium. EtherCAT masters are pretty easy to implement with standard network interfaces and a little low-level programming, but EtherCAT slave interfaces require special circuitry in the Ethernet hardware to work properly. One fairly easy way to incorporate EtherCAT slave functionality into a device is to buy the dedicated EtherCAT silicon chips from Beckhoff and others, which also include the license to use the standard. However, those chips are designed to be used in devices, not controllers, so there is no trivial way to use them as generic Ethernet interfaces. That makes it pretty hard to support EtherCAT slave functionality on a controller that might also need general Ethernet connectivity, unless you add a dedicated EtherCAT slave port in addition to the generic Ethernet interface. That is a pretty high additional cost for something that is seldom used by the majority of users of such PC-type controllers.

-

How to use camera not listed under MAX

Rolf Kalbermatter replied to Sharon_'s topic in LabVIEW General

I wasn't aware of the Toshiba Teli product line. Googling "TeliCam Toshiba" didn't bring up any relevant links :-) and "TeliCam" alone only showed some analog cameras! Since it's indeed an entire range of cameras with all kinds of interfaces, we definitely need to know more about the model actually used before we can say anything more specific about the best way to use it from within LabVIEW.

How to use camera not listed under MAX

Rolf Kalbermatter replied to Sharon_'s topic in LabVIEW General

To add to what Jordan and Tomas already said, the camera itself is pretty unimportant here. Since it is an analog camera, you also need some sort of frame grabber interface that converts the analog signal to a digital computer image. That interface is what determines how you can access your camera. Unfortunately NI has discontinued all their analog frame grabber interfaces; otherwise the simplest solution would be to buy an NI IMAQ device and connect your camera to that. Instead, there are supposedly still some Alliance Members that sell third-party analog frame grabbers with LabVIEW drivers. Other possible interfaces that claim to have LabVIEW support: http://www.theimagingsource.com/de_DE/products/grabbers/dfgmc4pcie/ http://www.bitflow.com/products/details/alta-an http://www.i-cubeinc.com/pdf/frame%20grabbers/TIS-DFGUSB2.pdf And as has been mentioned, if the frame grabber has a DirectX driver, you should be able to access it from IMAQdx too, possibly with a little configuration effort.

Calling GetValueByPointer.xnode in executable

Rolf Kalbermatter replied to EricLarsen's topic in Calling External Code

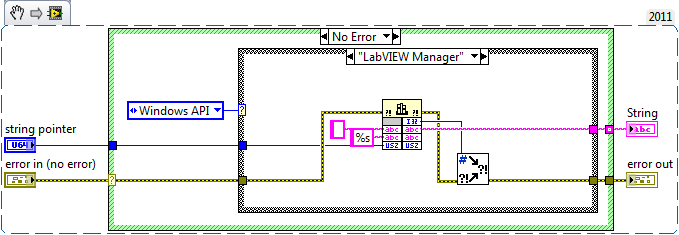

This most likely calls libtiff, and there the function TIFFGetField() for one of the string tags. That function returns a pointer to a character string, which indeed cannot be configured directly in the LabVIEW Call Library Node. The libtiff documentation is not clear about whether the returned memory should be deallocated explicitly afterwards or whether it is managed by libtiff and properly deallocated when you close the handle. If it doesn't mention it, the second is most likely true, but it is definitely something to keep in mind, or you might create serious memory leaks! As to returning the string information from that pointer, there are in fact many solutions to the problem. The attached VI snippet shows two of them. "LabVIEW Manager" calls a LabVIEW manager function very much like the ominous MoveBlock() function and has the advantage that it doesn't require any DLL beyond what is already present in the LabVIEW runtime itself. "Windows API" calls similar Windows API functions instead.
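Outside LabVIEW, the same idea (take the pointer-sized integer returned by the library, find the string length, then copy that many bytes, which is essentially what the MoveBlock approach does) can be illustrated with Python's ctypes. In this sketch a ctypes buffer stands in for the string TIFFGetField() would return; libtiff is not actually called. Following the reasoning above, the helper only copies the data and never frees the pointer.

```python
import ctypes

def read_c_string(address, max_len=65536):
    """Copy a NUL-terminated C string from a raw pointer value.

    Step 1 finds the length (like strlen), step 2 copies the bytes
    (like MoveBlock). The memory itself is left untouched, since it is
    assumed to be owned by the library that returned it.
    """
    if not address:
        return b""
    length = 0
    # Step 1: scan for the terminating NUL, bounded by max_len as a safety net
    while length < max_len and ctypes.c_char.from_address(address + length).value != b"\x00":
        length += 1
    # Step 2: copy exactly 'length' bytes into a Python bytes object
    return ctypes.string_at(address, length)

# Stand-in for the char* a library would return (hypothetical content)
buf = ctypes.create_string_buffer(b"ImageDescription text")
ptr_value = ctypes.addressof(buf)   # what a pointer-sized integer in LabVIEW would hold
print(read_c_string(ptr_value))     # b'ImageDescription text'
```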

-

Get Volume Info, but of which volume?

Rolf Kalbermatter replied to ThomasGutzler's topic in LabVIEW General

The problem is that you are using reparse points (the Microsoft equivalent, or more precisely attempt at an equivalent, of Unix symbolic or hard links). Reparse points were only really added with NTFS 3.0 (W2K), Windows itself only sort of learned to work with them in XP, and even in W7 support is pretty limited and far from being properly recognized by many Windows components that aren't directly part of the Windows kernel. LabVIEW has never heard of them and treats them logically as whatever the reparse point is used for, namely the file or directory it points at. LabVIEW for Linux and Mac OS X can properly deal with symbolic links (hard links are fully transparent as far as applications are concerned anyhow). On Windows, LabVIEW does actually support shortcuts (the Win95 placebo for path redirection support) but doesn't offer any functionality that would allow an application to influence how LabVIEW deals with them. When you pass a path that contains one or more shortcuts to the File Open/Create or the File/Directory Info function, LabVIEW automatically resolves every shortcut in the path and accesses the actual file or directory. But it will not attempt to do anything special for reparse points, and it doesn't really need to, as that is done automatically by the Windows kernel when it is passed a path containing reparse points. It only gets complicated when you want to do something in LabVIEW that needs to be aware of the real nature of those symbolic links/reparse points, such as the OpenG ZIP Library. That is what I'm currently working on, and it seems the only way to do it is by accessing the underlying operating system functions directly, since LabVIEW abstracts too much away here. But it shouldn't be a problem for any normal LabVIEW application, as long as you are mostly interested in the contents of files and not the underlying hierarchy of the file system.
Incidentally, my tests with the List Folder function showed that LabVIEW properly places elements that point to directories (reparse points, symbolic links and shortcuts) into the folder names list, while elements that point to files are placed into the filenames list. And that is true for LabVIEW versions as far back as 7.0. But there is an exported C function FListDir(), which is even (poorly) documented in the External Code Reference online documentation, that returns shortcuts as files but also returns an extra file types array which marks them as type 'slnk' for softlink, which was the MacOS signature for alias files. Presumably List Folder internally uses FListDir() to do its work and processes the returned information properly to place these softlinks into the right list. Unfortunately FListDir() doesn't know about reparse points, which is quite understandable if you realize that even Windows 8 only has one single API to create a symbolic link. If you want to create hard links or retrieve the actual redirection information of a symbolic or hard link, you have to call directly into kernel space using pretty sparsely documented information.
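For comparison, here is a short Python sketch of the List Folder behaviour described above: entries are sorted into folder and file lists by what they point at, while links are also reported separately. It relies on os.scandir(), whose is_dir()/is_file() follow symbolic links by default and whose is_symlink() reports the link itself. This is only an illustration, not a reparse-point handler: on Windows, junctions are not reported as symlinks (Python 3.12 added os.path.isjunction() for those), shortcut (.lnk) files are plain files to this code, and creating symbolic links may require Developer Mode or administrator rights.

```python
import os
import tempfile

def list_folder(path):
    """Split a directory listing the way List Folder does (sketch).

    Returns (folders, files, links): entries are classified by the type of
    their target, and entries that are symbolic links are also collected
    in 'links'. Dangling links end up only in 'links'.
    """
    folders, files, links = [], [], []
    with os.scandir(path) as it:
        for entry in it:
            if entry.is_symlink():
                links.append(entry.name)
            if entry.is_dir():        # follows links: a link to a directory counts as a folder
                folders.append(entry.name)
            elif entry.is_file():     # follows links: a link to a file counts as a file
                files.append(entry.name)
    return sorted(folders), sorted(files), sorted(links)

def demo():
    """Build a small tree with a directory, a file and links to both."""
    with tempfile.TemporaryDirectory() as root:
        os.mkdir(os.path.join(root, "sub"))
        with open(os.path.join(root, "a.txt"), "w") as f:
            f.write("data")
        os.symlink(os.path.join(root, "sub"), os.path.join(root, "link_to_sub"),
                   target_is_directory=True)
        os.symlink(os.path.join(root, "a.txt"), os.path.join(root, "link_to_a"))
        return list_folder(root)

print(demo())
# (['link_to_sub', 'sub'], ['a.txt', 'link_to_a'], ['link_to_a', 'link_to_sub'])
```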

Keeping track of licenses of OpenG components

Rolf Kalbermatter replied to Mellroth's topic in OpenG General Discussions

While this might be a possible option, and is done in other software projects to get around the problem of different authors for different components, I think it is complicated by the fact that there would have to be some sort of body that actually incorporates the "OpenG Group". For several open source projects that I know of which use such a catch-all copyright notice, there actually exists a registered non-profit organization under that name that can act as the copyright holder umbrella for the project. Just making up an OpenG Group without some organizational entity may legally not be enough. Personally I would be fine with letting my copyright on OpenG components be represented by such an entity. Now, even if such an entity existed, there would be one serious problem at the moment: you can't just declare that any code provided to OpenG in the past falls under this new regime. Every author would have to be contacted and would have to approve being represented in such a way through the entity. Code from authors who don't give permission or can't be contacted would need to remain as is or be removed from the next distribution that uses this new copyright notice. And there would need to be an agreement that every new submitter accepts, stating that any newly submitted code falls under this rule. All in all, it's doable, but quite a bit of work, and I'm not sure the OpenG community is still active enough that anyone would care enough to pick this up.

That display is really a computer terminal. It was released in 1974, about 12 years before LabVIEW 1.0 was even invented/released. So I would guess that even LabVIEW 3.0 is very unlikely to have ever had a specific library for this thing. What are you trying to do there? Read the display contents over the optional RS-232 interface or something? That would probably involve knowing exactly which type of interface is installed in that thing and then using the corresponding protocol. In this catalog on page 273 you can see that the interface was selectable as an option for many popular computers of that time, each with its own specific terminal data protocol. That was before DEC's VT100 terminal set a sort of de facto terminal protocol standard. It might be pretty hard nowadays to find protocol definitions for some of those interfaces. Interesting to see that this thing cost almost $9,000 at its introduction, excluding any interface options.

-

No, LabVIEW for Linux is only compiled for x86 (and, as of 2014, also x64), meaning it will only run on Intel x86-compatible processors. The NI Linux RT version for their ARM targets (myRIO, cRIO 906x) could theoretically be made to work on this, but not without some serious effort. Unfortunately you can't just copy the image over; you would rather have to download the source code of the NI Linux RT distribution, adapt it to the hardware resources available on this board, and compile your own Linux kernel image and libraries for this target. Even if that succeeds (which, given enough determination, would be possible), there is another problem: licensing! When you buy an NI RT hardware platform, you also buy a LabVIEW runtime license. NI does want a license fee if you plan to install the LabVIEW RT runtime kernel (nirt.so and other stuff) on non-NI embedded hardware!

-

Dynamically calling Mathscript .m file in EXE

Rolf Kalbermatter replied to drjdpowell's topic in Calling External Code

Yes, when developing the LabPython interface, which also has an option to use a script node. That is why I then added a VI interface to LabPython, which made it possible to execute Python scripts that are determined at runtime rather than at compile time. However, I'm not sure how to do that for MathScript.

Why do these two subVIs behave differently?

Rolf Kalbermatter replied to DTaylor's topic in LabVIEW General

It's not an overzealous optimization but one that is made on purpose. I can't find the reference at the moment, but there was a discussion of this in the past with some input from a LabVIEW developer about why they did that. I believe it has been like that since at least LabVIEW 7.1 or so, but possibly even earlier. And LabVIEW 2009 doing it differently would be a bug! Edit: I did a few tests in several LabVIEW versions with the attached VIs, and they all behaved consistently by resetting the value when the false case was executed: LV 7.0, 7.1.1f2, 8.0.1, 2009 SP1 64-bit, 2012 SP1f5 and 2013 SP1f5. Attachments: FP Counter.vi, Test.vi

It's not an upgrade code but a Product ID. Technically it is a GUID (globally unique identifier), which is virtually guaranteed to be different each time a new one is generated. This Product ID is stored inside the Build Spec for your executable. Each time you create a new Build Spec, a new Product ID is generated; if you clone a Build Spec, the Product ID is cloned too. The installer stores the Product ID in the registry, and when installing a new product it searches for that Product ID; if it finds it, it considers the current install to be the same product. It then checks the version, and if the new version is newer than the already installed version, it proceeds to install over the existing application. Otherwise it silently skips the installation of that product. Now, please forgive me, but your approach of cloning Build Specs to make a new version is most likely useless. You usually create a new version of your application because you changed some functionality of your code. But even though your old Build Spec is retained, it still points to the VIs on disk as they are now, most likely overwritten by your latest changes. So even if you go back and launch an older Build Spec, you will most likely build the new code (or maybe a mix of new and old code, which has even more interesting effects to debug), with the only change being that it claims to be an older version. The best way to maintain a history of older versions is to use proper source code control. That way you always use the same Build Spec for each version (with modifications as needed for your new version), but if you need to, you can always go back to an earlier version of your app. A poor man's solution is to copy the ENTIRE source tree, including the project file and whatever else, for each new version. I did that before using proper source code control, also zipping up the entire source tree, but while it is a solution, it is pretty wasteful and cumbersome.
Here again, you don't create a new Build Spec for each version but rather keep the original Build Spec in your project.
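The installer behaviour described above boils down to a lookup by Product ID followed by a version comparison. The Python sketch below models only that decision, with a dictionary standing in for the registry; it is a simplified illustration under those assumptions, not the actual NI installer logic, and the function names are hypothetical.

```python
import uuid

def new_product_id():
    """A new Build Spec gets a freshly generated GUID; cloning copies it."""
    return "{" + str(uuid.uuid4()).upper() + "}"

def install(registry, product_id, version):
    """Return the action taken for this install (simplified model).

    registry maps Product ID -> installed version tuple.
    """
    installed = registry.get(product_id)
    if installed is None:
        registry[product_id] = version
        return "fresh install"
    if version > installed:
        registry[product_id] = version
        return "upgrade"
    return "skipped"  # same or older version: silently not installed

registry = {}
pid = new_product_id()
print(install(registry, pid, (1, 0, 0)))               # fresh install
print(install(registry, pid, (1, 1, 0)))               # upgrade
print(install(registry, pid, (1, 0, 0)))               # skipped
print(install(registry, new_product_id(), (1, 0, 0)))  # fresh install (different product)
```

Because the comparison uses version tuples, (1, 10, 0) correctly ranks above (1, 9, 0), which a plain string comparison would get wrong.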

-

The Generate button generates a new Product ID. A Product ID identifies a product, and as long as the installer uses the same Product ID, it will update previous versions with that Product ID. However, the installer will NOT overwrite a product with the same Product ID but a newer version. If you really want to install an older version over a newer version on your machine, the easiest solution is to completely uninstall your product and run your previous installer to install your application from scratch. Trying to trick the installer into installing your older version over a newer version is a pretty complicated and troublesome operation that will go wrong more often than right.

-

Ok guys, I managed to organize a nice iMac in order to be able to compile and test a shared library of the OpenG ZIP Toolkit. However, I have run into a small roadblock. I downloaded the LabVIEW 2014 for Mac Evaluation version, and although it tells me that it is the 32-bit version, it contains the 64-bit version of the import library in the cintools directory. Therefore I would like to ask if someone could send me the lvexports.a file from the cintools directory of an earlier LabVIEW for Mac (Intel) installation, so that I can compile and test the shared library in the Evaluation version on this iMac. I'm afraid that even a regular LabVIEW 2014 for Mac installation might contain a 64-bit library in both installations, so the lvexports.a file from LabVIEW 2010 up to 2013 would probably be safer, as those versions were 32-bit only and are therefore more likely to contain a 32-bit library file.

-

And what references are you talking about here?

-

I have created a new package with an updated version of the OpenG ZIP library. The VI interface should be unchanged from the previous versions; the bigger changes are under the hood. I updated the C code for the shared library to use the latest zlib sources (version 1.2.8) and made a few other changes to the way the refnums are handled in order to support 64-bit targets. Another significant change is the added support for NI Realtime targets. This was already sort of present for Pharlap and VxWorks targets, but in this version all current NI Realtime targets should be supported. When the OpenG package is installed into a LabVIEW 32-bit for Windows installation, an additional setup program is started during the installation to copy the shared libraries for the different targets to the realtime image folder. This setup will normally cause a password prompt for an administrative account even if the current account already has local administrator rights, although in that case it may just be a prompt asking whether you really want to allow the program to make changes to the system, without requiring a password. This setup program is only started when the target is a 32-bit LabVIEW installation, since so far only 32-bit LabVIEW supports realtime development. After the installation has finished, it should be possible to go in MAX to the actual target and select to install new software. Select the option "Custom software installation", find "OpenG ZIP Tools 4.1.0" in the resulting utility and let it install the necessary shared library to your target. This is a preliminary package and I have not been able to test everything.
What should work:

Development System: LabVIEW for Windows 32-bit and 64-bit, LabVIEW for Linux 32-bit and 64-bit

Realtime Target: NI Pharlap ETS, NI VxWorks and NI Linux Realtime targets

Of these, I haven't been able to test Linux 64-bit at all, nor the NI Pharlap and NI Linux RT for x86 (cRIO 903x) targets. If you happen to install it on any of these systems, I would be glad if you could report any success. If there are any problems, I would like to hear about them too.

Todo: In a following version I want to try to add support for character translation of filenames and comments inside the archive if they contain characters other than 7-bit ASCII. Currently all characters outside that range get messed up.

Edit (4/10/2015): Replaced the package with the B2 revision, which fixes a bug in the installation files for the cRIO-903x targets.

oglib_lvzip-4.1.0-b2.ogp
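Regarding the Todo about non-ASCII file names: the ZIP format (PKWARE APPNOTE) defines general purpose bit 11 to mark file names and comments as UTF-8; without it, names are traditionally interpreted as IBM code page 437. Python's zipfile module follows this convention, which makes it handy for checking what an archive actually declares. This is only an illustration of the flag, not how the OpenG ZIP library implements or will implement its character translation; the helper names are hypothetical.

```python
import io
import zipfile

UTF8_FLAG = 0x800  # general purpose bit 11: file name and comment are UTF-8

def name_encodings(zip_bytes):
    """Map each member name to the encoding its header declares."""
    result = {}
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as zf:
        for info in zf.infolist():
            result[info.filename] = "utf-8" if info.flag_bits & UTF8_FLAG else "cp437"
    return result

def build_archive():
    """Create an in-memory archive with an ASCII and a non-ASCII name."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("plain.txt", b"ascii name")
        zf.writestr("Grüße.txt", b"non-ascii name")  # zipfile sets bit 11 for this one
    return buf.getvalue()

print(name_encodings(build_archive()))  # {'plain.txt': 'cp437', 'Grüße.txt': 'utf-8'}
```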

-

Reading the string output of a DLL

Rolf Kalbermatter replied to eberaud's topic in Calling External Code

This can't be! The DLL knows nothing about whether the caller provides a byte buffer or a uInt16 array buffer and consequently can't interpret the pSize parameter differently. And as ned told you, this is basically all C knowledge. There is nothing LabVIEW can do to make this any easier. The DLL interface follows C rules, and those are very open (C is considered only slightly above assembly programming); the C syntax is the absolute minimum that allows a C compiler to create legitimate code. It is not, and never was, meant to describe all aspects of an API in more detail than what a C compiler needs to pass the bytes around correctly. How the parameters are formatted and used is mostly left to the programmer using that API. In C you do that all the time, and in LabVIEW you have to do it too if you want to call DLL functions. LabVIEW uses normal C packing rules too; it just uses different default values than Visual C. While Visual C has a default alignment of 8 bytes, LabVIEW for 32-bit Windows always uses 1-byte alignment. This is legitimate on an x86 processor, since a significant number of extra transistors have been added to the operand fetch engine to make sure that unaligned operand accesses in memory don't incur a huge performance penalty. All this to support the holy grail of backwards compatibility, where even the greatest octa-core CPU still must be able to execute original 8086 code. Other CPU architectures are less forgiving, with Sparc having been really bad if you did unaligned operand accesses. However, on all current platforms other than Windows 32-bit, including the Windows 64-bit version of LabVIEW, LabVIEW does use the default alignment. Basically this means that if you have structures in C code compiled with default alignment, you need to adjust the offsets of cluster elements to align on the natural element size when programming for LabVIEW 32-bit, possibly by adding filler bytes. Not really that magic.
Of course a C programmer is free to add #pragma pack() statements to his source code to change the alignment for parts or all of his code, and/or to change the default alignment of the compiler through a compiler option, throwing off your assumptions about the Visual C 8-byte default alignment. This special default for LabVIEW for Windows 32-bit does make it a bit troublesome to interface to DLL functions that use structure parameters if you want the code to run equally on 32-bit and 64-bit LabVIEW. However, so far I have always solved that by creating wrapper shared libraries anyhow, and I usually also make sure that the structures I use in the code are really platform independent by explicitly aligning all elements in a structure to their natural size.
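The effect of the different packing rules can be checked with Python's ctypes, where `_pack_ = 1` plays the role of the 1-byte packing used by LabVIEW for 32-bit Windows. The cluster layout (an int8, a double and an int32) is a made-up example. The third structure shows the filler-byte approach: with explicit padding, the 1-byte-packed layout matches the naturally aligned one on platforms where a double is 8-byte aligned (which is not the case, for example, on 32-bit x86 Linux).

```python
import ctypes

class Natural(ctypes.Structure):
    """Default (natural) alignment, as a C compiler would lay it out."""
    _fields_ = [("a", ctypes.c_int8), ("b", ctypes.c_double), ("c", ctypes.c_int32)]

class Packed1(ctypes.Structure):
    """1-byte packing, like a cluster in LabVIEW for Windows 32-bit."""
    _pack_ = 1
    _fields_ = [("a", ctypes.c_int8), ("b", ctypes.c_double), ("c", ctypes.c_int32)]

class Padded(ctypes.Structure):
    """1-byte packing plus explicit filler bytes that restore natural offsets
    (assuming 8-byte alignment for double, as on Windows x86 and x64)."""
    _pack_ = 1
    _fields_ = [("a", ctypes.c_int8), ("_fill0", ctypes.c_uint8 * 7),
                ("b", ctypes.c_double), ("c", ctypes.c_int32),
                ("_fill1", ctypes.c_uint8 * 4)]  # trailing padding to a multiple of 8

def layout(cls):
    """Return (offset of b, offset of c, total size) for a structure class."""
    return cls.b.offset, cls.c.offset, ctypes.sizeof(cls)

for cls in (Natural, Packed1, Padded):
    print(cls.__name__, layout(cls))
```

On x86-64, Natural and Padded both report (8, 16, 24), while Packed1 reports (1, 9, 13). That is exactly the mismatch a 1-byte-packed cluster would have with a default-aligned C structure if no filler bytes were added.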

Reading the string output of a DLL

Rolf Kalbermatter replied to eberaud's topic in Calling External Code

A proper API would specifically document that one can call the function with a NULL pointer as the buffer to receive the necessary buffer size, and then call the function again. But having to specify the input buffer size in bytes while getting back the number of returned characters would make for a really brain-damaged API. I would check again! What happens if you pass in the number of int16 elements (so half the number of bytes)? Does it truncate the output at that position? And you should still be able to define the buffer as an int16 array. That way you don't need to decimate it afterwards.
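The two-call pattern (first call with a NULL buffer to learn the required size, then call again with a buffer of that size) can be sketched with Python's ctypes. The function `fake_get_string()` is a hypothetical stand-in for the DLL export, and it assumes that pSize counts UTF-16 code units (int16 elements) including the terminating NUL; a real API must document which unit it uses. Declaring the buffer as a uint16 array, as suggested above, avoids having to decimate a byte array afterwards.

```python
import ctypes

_TEXT = "Temperature sensor 1"  # hypothetical string held by the "DLL"

def fake_get_string(buf, psize):
    """Hypothetical stand-in for: int32 GetString(uint16_t *buf, int32_t *pSize).

    pSize is assumed to count UTF-16 code units (int16 elements) including the
    terminating NUL. With buf == None (NULL) only the required size is returned.
    Returns 0 on success and 1 if the output had to be truncated.
    """
    encoded = (_TEXT + "\0").encode("utf-16-le")
    needed = len(encoded) // 2
    if buf is None:
        psize.value = needed          # size query: report the required element count
        return 0
    n = min(psize.value, needed)
    if n <= 0:
        psize.value = 0
        return 1
    ctypes.memmove(buf, encoded, n * 2)
    if n < needed:
        buf[n - 1] = 0                # keep the truncated result NUL-terminated
    psize.value = n
    return 0 if n == needed else 1

def decode_utf16(buf):
    """Interpret a uint16 array as a NUL-terminated UTF-16LE string."""
    return bytes(buf).decode("utf-16-le").split("\0", 1)[0]

def read_string():
    size = ctypes.c_int32(0)
    fake_get_string(None, size)                 # 1st call: NULL buffer -> required size
    buf = (ctypes.c_uint16 * size.value)()      # allocate an int16 array, not a byte array
    fake_get_string(buf, size)                  # 2nd call: fill the buffer
    return decode_utf16(buf)

print(read_string())  # Temperature sensor 1
```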