Everything posted by dadreamer

-

From what I can remember, in LV 5.0.x and older the RTE (i.e., a loader plus a small subset of resources) was included in the EXE automatically during the build process. In LV 5.1.x there was a choice: include the RTE in the build or use an external RTE. And since LV 6.0 only an external RTE was supposed to be used. I can say more: such a trick is still possible for all modern versions on all three platforms (Win, Mac, Linux). The latest version I tested it on was LV 2018, but I'm pretty sure the technique hasn't changed much.

I can't remember from which version NI started to use Visual Studio 2015, but since then each EXE requires the Universal CRT, which is contained in the Microsoft Visual C++ 2015 Redistributable. One could install that redistributable on a clean machine or copy all these files from a machine where the CRT is already installed. Besides those, the application will also require this minimal subset of folders/files (true for LV 2018 64-bit):

On Linux it goes much easier (true for LV 2014 64-bit):

For LV 2018 64-bit with a "dark" RTE it also wants

And for Mac OS you can embed the RTE into the application with this script: Standalone LabVIEW-built Mac Application with Post-Build Action.

Of course (and I'm sure everyone understands that), the technique described above is applicable to very simple 'a la calculator' apps and hardly, if at all, to more or less complex projects. The more functions are called, the more dependencies you get. If something from MKL is used, you need lvanlys.dll and LV##0000_BLASLAPACK.dll; if VISA is used, you need visa32.dll, NiViAsrl.dll and maybe others, and so on and so forth.

-

I have LabVIEW 5 and 6 on my USB stick too and they both run OK on Windows 10. Initially LV 5 was hanging at the start, so I had to disable multithreading: ESys.StdNParallel=0. Not that I really need LabVIEW to be on hand all the time. But sometimes it's useful to have an advanced calculator around for quick-n-dirty prototyping, and sometimes to look at how things were back then. Considering the age and bugs, using these versions for serious projects is, to put it mildly, unwise. I also don't like that LabVIEW re-registers file associations for itself every time it starts, but I'm more or less used to this. I also believe those versions didn't really need any pirate tools. Just the owner's personal data and serial number were needed. If those were not available, it was possible to use 'an infinite trial' mode: start, click OK and do everything you want.

-

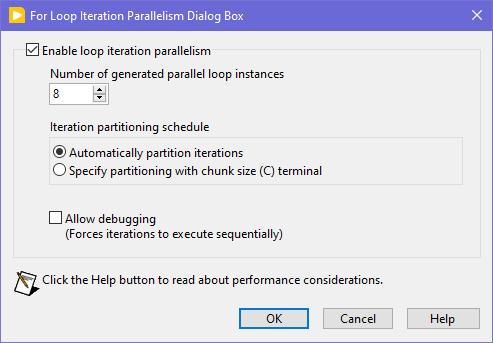

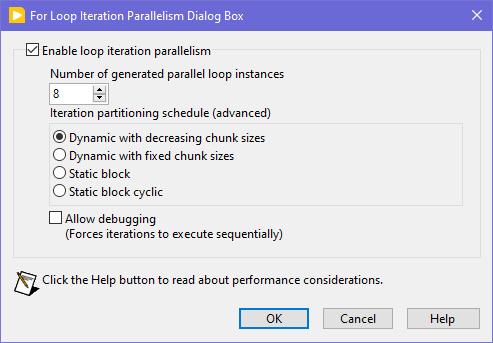

Seems like this one has "escaped everyone's grasp" too: ParallelLoop.ShowAllSchedules=True. Probably because it is only checked from the password-protected diagram of ParallelForLoopDialog.vi (LabVIEW 20xx\resource\dialog). It has been present since LabVIEW 2010. When activated, it allows applying a more advanced iteration partitioning schedule. In other words, instead of this you will get this. Could this be useful? I can't say. Maybe in some very specific use cases. In my quick tests I didn't manage to get any performance increase. It's easy to mess things up with those options and make them worse than the defaults. It can also be changed by this scripting counterpart.

-

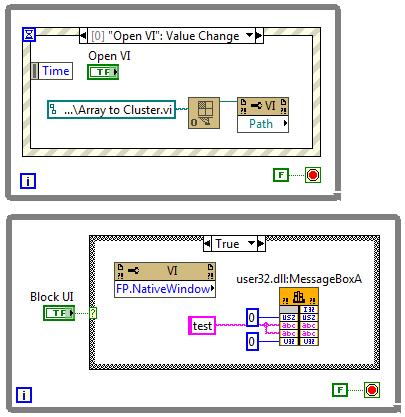

This is true. But! If the VI to open is a member of a lvclass or lvlib and the typedefs input is False (default value), then almost any subsequent action on that VI ref leads to the whole hierarchy loading into memory. This is not good in most cases, as with large hierarchies loading takes several seconds and LabVIEW displays the loading window during that, no matter which options are passed. The only easy way to escape that is to set the typedefs input to True. Another option would be putting the VI in a bad (broken) state, but the function suitable for this is not exported. Frankly, this Open.VI Without Refees method acts a bit odd: when typedefs is False, it sets the viBadVILibrary flag, yet that does not give the desired effect; when typedefs is True, the flag is not set, yet no dependencies are loaded.

Sometimes I use Open.VI Without Refees to scan VIs for some objects on their BD's / FP's, and when there are thousands of VIs, it all takes many minutes to scan, even with typedefs = True. I managed to speed things up 3-4 times by compiling my traversal VI and running it on a Full Featured RTE instead of a standard RTE to get the scripting working. Using that, ~20 000 VIs are scanned in about 5 minutes.

-

I haven't had much time to investigate this until this month, but I think I've found the cause. The XNodes on the production computer were not designed optimally. In the AdaptToInputs ability I was unconditionally passing a GenerateCode reply, thinking that AdaptToInputs is only called when interacting with the XNode (connecting/disconnecting wires). It turned out that LabVIEW also calls the AdaptToInputs ability once when the VIs are loaded and any single change is made, no matter whether it touches the XNode or not. As I had many such non-optimal XNodes in many places, it was causing code regeneration in all of them. Besides that, some of my VIs had very high code complexity (11 to 13) because of a bunch of nested structures. When the XNode regeneration occurred simultaneously with the VI recompilation, it took about a minute. After I added extra conditions to my AdaptToInputs ability (issue a GenerateCode reply only when the Term Types have changed), the edits in my VIs started to take 1.5 seconds. Hierarchy saves can still be slow when some 'heavy' VIs are changed, but it's a task for me to refactor those VIs so that their complexity decreases to 10 or less. By the way, my example from the previous page was not suitable for demonstrating the situation, as its code complexity is low and the Match Regular Expression XNode does not issue a GenerateCode reply in AdaptToInputs.
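The "only reply when the terminal types changed" condition is just a cached comparison. XNode abilities are G VIs, so the following is only a language-neutral sketch in C of that idea; `NodeState`, `Reply`, `adapt_to_inputs` and the integer type codes are hypothetical names made up for illustration and have nothing to do with LabVIEW's actual data structures.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

#define MAX_TERMS 8

/* Hypothetical stand-in for the XNode state: terminal types seen last time. */
typedef struct {
    int    term_types[MAX_TERMS]; /* illustrative type codes, not LabVIEW's */
    size_t term_count;
} NodeState;

typedef enum { REPLY_NONE, REPLY_GENERATE_CODE } Reply;

/* Ask for code regeneration only when the terminal types differ from the
 * cached ones; otherwise report that nothing needs to be done. */
Reply adapt_to_inputs(NodeState *state, const int *types, size_t count)
{
    assert(count <= MAX_TERMS);

    bool changed = state->term_count != count ||
                   memcmp(state->term_types, types,
                          count * sizeof types[0]) != 0;
    if (!changed)
        return REPLY_NONE;

    /* Remember the new types so the next unrelated edit is a no-op. */
    memcpy(state->term_types, types, count * sizeof types[0]);
    state->term_count = count;
    return REPLY_GENERATE_CODE;
}
```

With this shape, the first call after a type change requests regeneration and every later call with the same types returns `REPLY_NONE`, which is the behaviour that brought the edit time down in the post above.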

-

Sort (search) Array of cluster by one cluster element

dadreamer replied to Bobillier's topic in LabVIEW General

You want an ability to override the Equality or Comparison operators? I'm unsure whether it really existed in OpenG packages, but now you have those neat malleable VIs that let you do that: Search Unsorted 1D Array, Sort 1D Array, Search Sorted 1D Array. They have an additional input to specify your own equals or less function in the form of a custom comparison class or a VI refnum. There's an article to help: Creating a Custom Sorting Function in LabVIEW

-

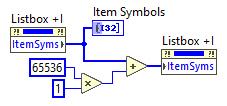

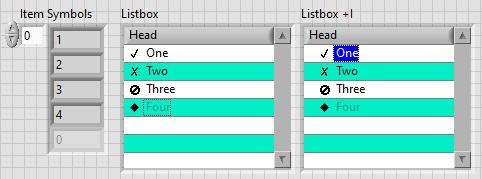

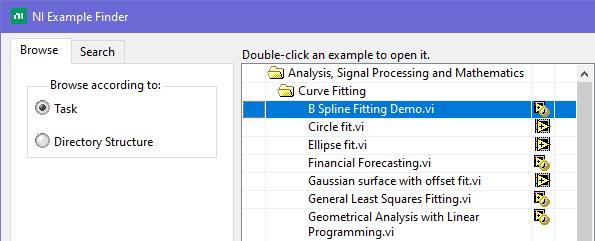

This is exactly what was said in that ancient thread: Tree control in labview. So if you add 65536*N to the Item Symbols property of the Listbox and have the "Enable Indentation" option activated, you shift the symbol/glyph and the text N levels to the right. Could be useful for simple 'parent-child' relationships if you don't want to use a Tree. And it's still used in the Find Examples / NI Example Finder window:
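The 65536*N arithmetic can be sketched in C as follows. The only fact taken from the thread is that each indentation level adds 65536 to the symbol value; the function names, the assumption that the value is a 32-bit signed integer, and the assumption that glyph indices stay below 65536 (so the two parts can be separated again) are mine.

```c
#include <assert.h>
#include <stdint.h>

#define INDENT_UNIT 65536 /* from the post: one level = 65536 */

/* Combine a glyph index with an indentation level N (hypothetical helper). */
int32_t item_symbol_with_indent(int32_t glyph, int32_t levels)
{
    assert(glyph >= 0 && glyph < INDENT_UNIT);  /* assumption: small glyph index */
    assert(levels >= 0 && levels < 32768);      /* keeps the sum inside int32_t */
    return glyph + INDENT_UNIT * levels;
}

/* Recover the two parts again, under the same assumptions. */
int32_t item_symbol_levels(int32_t value) { return value / INDENT_UNIT; }
int32_t item_symbol_glyph(int32_t value)  { return value % INDENT_UNIT; }
```

For example, glyph 3 shifted two levels to the right gives 3 + 2*65536 = 131075.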

-

How to extract a circular ring from a Image?

dadreamer replied to Wang xiang yang002's topic in LabVIEW Community Edition

The IMAQ Unwrap VI example is at \LabVIEW 20xx\examples\Vision\Image Management\Unwrap Barcode.vi. For U16 images also see this: IMAQ Unwrap Example - not working properly with U16 image type?

-

About a minute for a change, that's what I've seen on a production machine. On my home computer it's the same as on yours though: a single edit takes 1-2 seconds. I'm still investigating it. I will try different options such as enabling those ini keys or disabling the auto-save feature. To me it looks like a whole recompilation is triggered sometimes. By the way, there are more keys to fine-tune XNodes:

I haven't yet figured out the details.

-

Looks like the Block Diagram Binary Heap (BDHb) resource took 1.21 MB and the rest is for the others. There are 120 Match Regular Expression XNodes on the diagram. If each XNode instance is approximately 10 KB, and they all get embedded into the VI, we get 10 * 120 = 1200 KB. The XNode's icon is copied many times as well (DSIM fork). So, the conclusion is that we shouldn't use XNodes for multiple parallel calls. The less, the better, right? Ok. The load time seems to reduce with these tokens:

Still the editing is sluggish tho.

-

4. WinAPI version using ChooseColor function. NativeColors.rar Far from ideal, don't kick too hard. 🙂 Determine Clicked Array Element Index is from here.

-

Well, I see no issues when running XNodes at run time, when everything is generated and compiled. What I see is some noticeable lag at edit time. Say, I have 50 or even 100 instances of one or two XNodes in one VI, each set to its own parameters. When compiled, all is fine. But when I make some minor change (create a constant, for example), LabVIEW starts to regenerate code for all the XNodes in that VI. And it can take a minute or so! Even on a top-notch computer with an NVMe SSD and loads of RAM. Has anyone experienced this? I've never seen such behaviour when dealing with VIM's. I tried to reproduce this with a bunch of Match Regular Expression XNodes in a single VI. Not on such a large scale, but the issue remains. Moreover, the whole VI hierarchy opens super slowly, but this I had already noticed before, when dealing with third-party XNodes. xn.vi

-

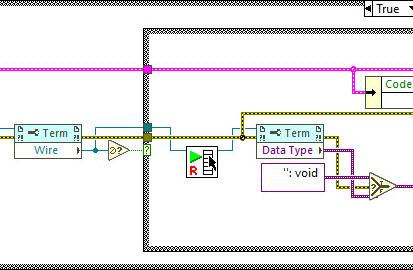

I kind of liked this idea and wished VIM's could allow for such a backpropagation. I even had a thought of posting an idea on the dark forums. But then I played a while with the Variant To Data node. It doesn't play well. It can't determine a sink if a polymorphic VI is connected or even when a LV native (yellow) node is connected. Borders of structures are another issue, obviously. So, it'd require making two ideas at least: to implement VIM backpropagation and to enhance the Variant To Data node. (As a hack one could eliminate the Variant To Data in their code with the coerceFromVariant=TRUE token, but then the diagram starts to look odd and no error handling is performed.)

If someone still wants the code shown in the very first post, it's here: https://code.google.com/archive/p/party-licht-steuerung/source/default/source?page=3 (\trunk\PLS-Code\PLS Main.vi). And these are the papers to progress through the lessons: LabVIEW Intermediate I Successful Development Practices Course Manual. Nothing interesting there for an experienced LV'er though.

XNodes demonstrated here work much better and could be a good alternative (if you're OK with unsupported features, of course). As I tried to adapt them for my own purposes, I decided to improve the sink search technique. It surprised me a bit that there's still no complete code to walk through all the nested structures to determine a source/sink by its wire. Maybe I didn't search well, but all I found was this popup plugin: Find Wire Source.llb. It stops on Case structures though. I have reversed its logic to search for a sink instead of a source and tried to apply recursion when it encounters a Case structure. Well, it's still not ideal, but now it works in most of my cases. There are some cases when it cannot find a sink, e.g. wire branches with void terms: Too many scenarios to process them all. Nevertheless, this little VI might be useful for someone. You may use it as a popup plugin, of course, or pull out that Execute Find Wire Destination (R).vi and use it in your XNodes. As an example: Find Wire Destination.llb

I have already tried such nodes in a work project. I must admit that back-propagation is not always suitable, so it's about 50/50. But when it's used, it works.

-

Find most recently created or modified image(file)

dadreamer replied to mooner's topic in LabVIEW General

In addition to the LV native method, there are options with .NET and command prompt: Get Recently Modified Files.

-

LabVIEW Build Array Bug #1: Unexpected Array Growth

dadreamer replied to Joel Foster's topic in LabVIEW Bugs

I remember I even had an idea that would make it easier to track such situations: Add Array Size(s) Indicator. At design time it would cost almost nothing. Although I admit its use cases are quite rare.

-

Started playing with XNodes a bit and noticed the same behaviour as well. Really upsetting. But there is a solution. Just send a FailTransaction reply in the Cancel case of the OnDoubleClick ability of your XNode and that 'dirty dot' never appears! That's exactly what the Timed Loop XNode does internally. Looking at this description, I get the impression that this reply was invented precisely to overcome that bug (it was even given its own CAR #571353). Similar thread for cross-reference: LabVIEW Bug Report: Error Ring Edit + Cancel modifies the owning VI

-

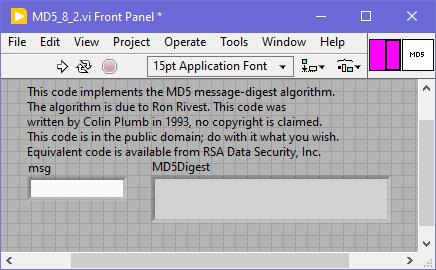

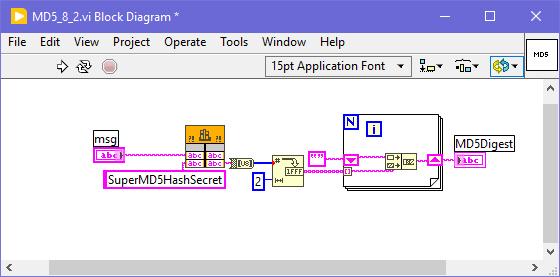

For some odd reason I don't see such a function in LabVIEW 5.x, 6.x and 7.x. It appears only in 8.2; maybe it's available since 8.0 too, can't check right now. Well, I ran a quick binary search for the word "GetMD5Digest" and found that such a function was being used in ...\LabVIEW 8.2\vi.lib\imath\engines\lvmath\Utility\MD5.vi. That VI was password-protected, the password was calvinthegorilla (😁) and, of course, it was removed like 1-2-3. Here's the VI to play with: MD5_8_2.vi As shown, the prototype is still correct, just some extra "secret" / key was applied. Works well in LV 2023 Q3 64-bit.

-

The Create NI GUID.vi is using App.CreateGUID. On Windows App.CreateGUID private method calls CoCreateGuid function, which could be easily called directly from BD, as shown in that cross-posted link.

-

The task of intercepting the WM_DDE_EXECUTE message has been around for a while here and there. Apart from that private OS Open Document event, there are two more ways to get this done:

1. Using the Windows Message Queue Library
2. Using DDEML of WinAPI and a self-written callback library

More details can be found in this thread. I now think that it could even be simplified a bit if one tried to hack that internal LinkDdeCallback function and reuse it like LabVIEW does. But I feel too lazy to check it now.

-

It's just a cosmetic token to get "Run At Any Loop" option visible in the IDE mode. After the flag is set, it's sticky to VIs, on which it was activated. No need to add the token to the EXE's settings.

-

There's an obscure "Run At Any Loop" option, activated with this ini token: showRunAtAnyLoopMenuItem=True. First mentioned by @Sparkette in this thread:

I've just tested it with this quick-n-dirty sample, and it works. Also some (all?) property and invoke nodes receive the "Run At Any Loop" option when you RMB click on them. But from what I remember, not all of them really support bypassing the UI thread, so it needs to be tested by trial and error before using it somewhere.

-

Is this what you are looking for?

Front Panel Window:Alignment Grid Size

VI class/Front Panel Window.Alignment Grid Size property

LabVIEW Idea Exchange: Programmatic Control of Grid Alignment Properties (Available in LabVIEW 2019 and later)

-

I have seen LabWindows 2.1 on old-dos website. Don't know if it's of any interest for you tho'. As to BridgeVIEW's, I still didn't find anything, neither the scene release nor the customer distro. Seems to be very rare. Sure some collectors out there would appreciate, if you archive them somewhere on the Wayback Machine. 🙂

-

It's utilizing the PCRE library, that is incorporated into the code. It's a first incarnation of PCRE, 8.35 for a 32-bit lvserial.dll and 8.45 for a 64-bit one. When configuring the serial port, you can choose between four variants of the termination:

```c
/* CommTermination2
 *
 * Configures the termiation characters for the serial port.
 *
 * parameter
 *   hComm              serial port handle
 *   lTerminationMode   specifies the termination mode, this can be one of the
 *                      following value:
 *                        COMM_TERM_NONE    no termination
 *                        COMM_TERM_CHAR    one or more termination characters
 *                        COMM_TERM_STRING  a single termination string
 *                        COMM_TERM_REGEX   a regular expression
 *   pcTermination      buffer containing the termination characters or string
 *   lNumberOfTermChar  number of characters in pcTermination
 *
 * return
 *   error code
 */
```

Now when you read the data from the port (lvCommRead -> CommRead function), it works this way:

```c
//if any of the termination modes are enabled, we should take care
//of that. Otherwise, we can issue a single read operation (see below)
if (pComm->lTeminationMode != COMM_TERM_NONE)
{
    //Read one character after each other and test for termination.
    //So for each of these read operation we have to recalculate the
    //remaining total timeout.
    Finish = clock()
        + pComm->ulReadTotalTimeoutMultiplier*ulBytesToRead
        + pComm->ulReadTotalTimeoutConstant;

    //nothing received: initialize fTermReceived flag to false
    fTermReceived = FALSE;

    //read one byte after each other and test the termination
    //condition. This continues until the termination condition
    //matches, the maximum number bytes are received or an if
    //error occurred.
    do
    {
        //only for this iteration: no bytes received.
        ulBytesRead = 0;

        //calculate the remaining time out
        ulRemainingTime = Finish - clock();

        //read one byte from the serial port
        lFnkRes = __CommRead(
            pComm,
            pcBuffer+ulTotalBytesRead,
            1,
            &ulBytesRead,
            osReader,
            ulRemainingTime);

        //if we received a byte, we shold update the total number of
        //received bytes and test the termination condition.
        if (ulBytesRead > 0)
        {
            //update the total number of received bytes
            ulTotalBytesRead += ulBytesRead;

            //test the termination condition
            switch (pComm->lTeminationMode)
            {
                case COMM_TERM_CHAR: //one or more termination characters
                    //search the received character in the buffer of the
                    //termination characters.
                    fTermReceived = memchr(
                        pComm->pcTermination,
                        *(pcBuffer+ulTotalBytesRead-1),
                        pComm->lNumberOfTermChar) != NULL;
                    break;

                case COMM_TERM_STRING: //termination string
                    //there must be at least the number of bytes of
                    //the termination string.
                    if (ulTotalBytesRead >= (unsigned long)pComm->lNumberOfTermChar)
                    {
                        //we only test the last bytes of the receive buffer
                        fTermReceived = memcmp(
                            pcBuffer + ulTotalBytesRead - pComm->lNumberOfTermChar,
                            pComm->pcTermination,
                            pComm->lNumberOfTermChar) == 0;
                    }
                    break;

                case COMM_TERM_REGEX: //regular expression
                    //execute the precompiled regular expression
                    fTermReceived = pcre_exec(
                        pComm->RegEx,
                        pComm->RegExExtra,
                        pcBuffer,
                        ulTotalBytesRead,
                        0,
                        PCRE_NOTEMPTY,
                        aiOffsets,
                        3) >= 0;
                    break;

                default: //huh ... unknown termination mode
                    _ASSERT(0);
                    fTermReceived = 1;
            }
        }

    //Repeat this until
    // - an error occurred or
    // - the termination condition is true or
    // - we timed out
    } while (!lFnkRes && !fTermReceived &&
        ulTotalBytesRead < ulBytesToRead && Finish > clock());

    //adjust the result code according to the result of
    //the read operation.
    if (lFnkRes == COMM_SUCCESS)
    {
        if (!fTermReceived)
        {
            //termination condition not matched, so we test, if the max
            //number of bytes are received.
            if (ulTotalBytesRead == ulBytesToRead)
                lFnkRes = COMM_WARN_NYBTES;
            else
                lFnkRes = COMM_ERR_TERMCHAR;
        }
        else
            //termination condition matched
            lFnkRes = COMM_WARN_TERMCHAR;
    }
}
else
{
    //The termination is not activated. So we can read all
    //requested bytes in a single step.
    lFnkRes = __CommRead(
        pComm,
        pcBuffer,
        ulBytesToRead,
        &ulTotalBytesRead,
        osReader,
        pComm->ulReadTotalTimeoutMultiplier*ulBytesToRead
            + pComm->ulReadTotalTimeoutConstant
    );
}
```

As shown in the code above, when the termination is activated, the library reads data one byte at a time in a do ... while loop and tests it against the term char / string / regular expression on every iteration. I can't say how good that PCRE engine is as I never really used it.
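To try the termination test without a serial port, the char and string modes can be isolated into a small standalone sketch. This is not lvserial code: `TermMode`, `term_reached` and `read_until_term` are hypothetical names, an in-memory buffer stands in for the port, and the timeout handling and the regex mode are left out on purpose.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

typedef enum { TERM_NONE, TERM_CHAR, TERM_STRING } TermMode; /* hypothetical */

/* Test whether the bytes received so far end with a terminator. */
static bool term_reached(TermMode mode, const char *buf, size_t len,
                         const char *term, size_t term_len)
{
    if (len == 0)
        return false;
    switch (mode) {
    case TERM_CHAR:   /* last byte is any one of the termination characters */
        return memchr(term, buf[len - 1], term_len) != NULL;
    case TERM_STRING: /* tail of the buffer equals the termination string */
        return len >= term_len &&
               memcmp(buf + len - term_len, term, term_len) == 0;
    default:          /* TERM_NONE: never terminates early */
        return false;
    }
}

/* Take bytes one at a time from src (the stand-in for the port) until the
 * terminator matches or max_bytes are stored. Returns the byte count and
 * reports through *matched whether the terminator was seen. */
size_t read_until_term(const char *src, size_t src_len,
                       char *out, size_t max_bytes,
                       TermMode mode, const char *term, size_t term_len,
                       bool *matched)
{
    size_t n = 0;

    assert(matched != NULL);
    *matched = false;
    while (n < src_len && n < max_bytes && !*matched) {
        out[n] = src[n];
        n++;
        *matched = term_reached(mode, out, n, term, term_len);
    }
    return n;
}
```

Reading "OK\r\nEXTRA" with the termination string "\r\n" stops after four bytes with the flag set, while hitting `max_bytes` first leaves the flag cleared, which corresponds to the two result branches after the loop in the original.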

-

And what's the image data type (U8, U16, RGB U32, ...)? You need to know this as well to calculate the buffer size to receive the image into. Now, I assume, you first call the CaptureScreenshot function and get the image pointer, width and height. Second, you allocate the array of proper size and call MoveBlock function - take a look at Dereferencing Pointers from C/C++ DLLs in LabVIEW ("Special Case: Dereferencing Arrays" section). If everything is done right, your array will have data and you can do further processing.
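The buffer size calculation and the copy step can be sketched in plain C like this. `PixelType`, `bytes_per_pixel`, `image_buffer_size` and `copy_image` are hypothetical helpers, the sketch assumes tightly packed rows (no line padding or stride, which your DLL may well have), and `memcpy` only plays the role that MoveBlock plays on the LabVIEW side.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

typedef enum { PIX_U8, PIX_U16, PIX_RGB_U32 } PixelType; /* hypothetical */

/* Bytes occupied by one pixel of the given type. */
size_t bytes_per_pixel(PixelType t)
{
    switch (t) {
    case PIX_U8:      return 1;
    case PIX_U16:     return 2;
    case PIX_RGB_U32: return 4;
    }
    return 0;
}

/* Size of the receiving buffer, assuming rows are tightly packed. */
size_t image_buffer_size(size_t width, size_t height, PixelType t)
{
    return width * height * bytes_per_pixel(t);
}

/* Allocate a buffer of the right size and copy the image out of the
 * pointer returned by the DLL. The caller frees the result. */
uint8_t *copy_image(const void *src, size_t width, size_t height, PixelType t)
{
    size_t size = image_buffer_size(width, height, t);

    assert(src != NULL);
    uint8_t *dst = malloc(size);
    if (dst != NULL)
        memcpy(dst, src, size);
    return dst;
}
```

For a 640 x 480 image this gives 307200 bytes for U8 and 1228800 bytes for RGB U32, so getting the pixel type wrong changes the array size by a factor of up to four.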