Leaderboard

Popular Content

Showing content with the highest reputation since 06/09/2025 in Posts

-

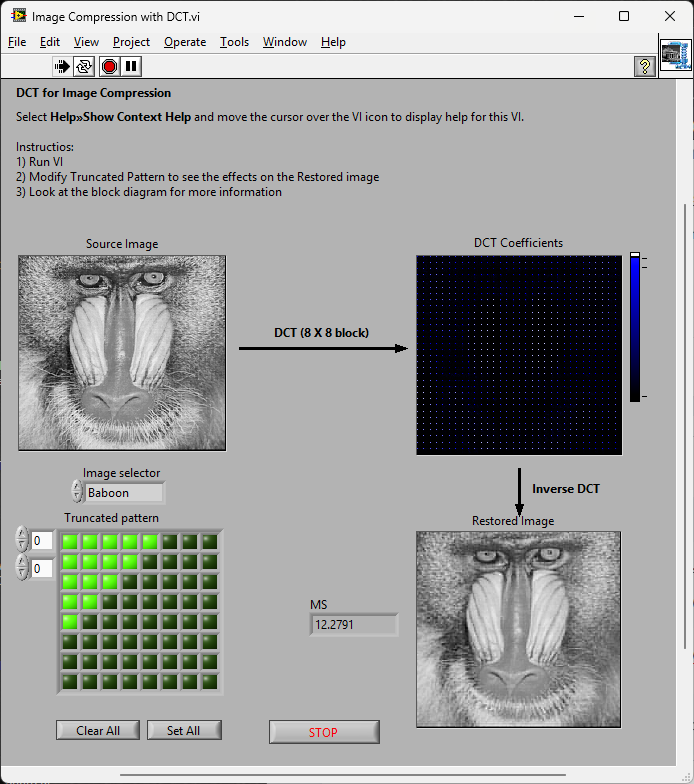

So a couple of years ago I was reading the ZLIB documentation on compression, along with an interesting blog post going into how it works and what compression algorithms like zip really do. It uses LZ77 and Huffman tables. It was very educational and I thought it might be fun to try to write some of it in G. Calling the deflate function in ZLIB as external code is very well understood, so the only place, ever so slightly, where a pure G version made sense in my head was on LabVIEW RT. The wonderful OpenG Zip package has support for Linux RT in version 4.2.0b1 as posted here. For now this is the version I will be sticking with because of the RT support.

Still, I went on my little journey trying to make my own in pure LabVIEW to see what I could do. My first attempt failed badly, and I did not have the knowledge to understand what was wrong or how to debug it. As a test of AI progression I decided to dig up this old code and start asking AI what I could do to improve it and finally get it working properly. Well, over the holiday break Google Gemini delivered. It was very helpful for the first 90% or so. It was great having a back-and-forth dialog about edge cases and how things are handled. It gave examples and knew what the next steps were. Admittedly it is a somewhat academic problem, so maybe that's why the AI did so well. I did still reference some of the other content online. The last 10% was a bit of a pain. The AI hallucinated several times, giving wrong information or analyzing my byte streams incorrectly. But this did help me understand it even more, since I had to debug it.

So attached is my first go at it in 2022 Q3. It requires some packages from VIPM.IO: Image Manipulation, for making some debug tree drawings (actually disabled at the moment), and the new version of my Array package, 3.1.3.23. So how is performance?
Well, I only have the deflate function, and it only supports the dynamic table, which only gets used when there is roughly 1K of data or more. I tested it with random data containing lots of repetition: my 700k string took about 100ms to process, while the OpenG method took about 2ms. Compression was similar, but the OpenG output was about 5% smaller too. It was a lot of fun, I learned a lot, and I will probably apply things I learned, but realistically I will stick with OpenG for real work. If there are improvements to make, the largest time sink is in detecting the patterns. It is a 32k sliding window and I'm unsure what techniques can be used to make it faster.

ZLIB G Compression.zip

5 points
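On the question of speeding up pattern detection in the 32k window: a common approach, and the one zlib's deflate is generally described as using, is to index every 3-byte sequence already seen and only compare against those candidates, with a cap on how many candidates are tried. Below is a minimal Python sketch of that idea, not the attached G code and not zlib's actual implementation. The names `find_matches`, `expand`, and `MAX_CHAIN` are made up for illustration, and the candidate lists are never pruned, which a real implementation would do.

```python
from collections import defaultdict

WINDOW_SIZE = 32 * 1024   # DEFLATE's maximum back-reference distance
MIN_MATCH = 3             # shortest match DEFLATE can encode
MAX_MATCH = 258           # longest match DEFLATE can encode
MAX_CHAIN = 32            # assumed cap on candidates tried per position


def find_matches(data: bytes):
    """Yield ("lit", byte) or ("match", length, distance) tokens for data."""
    positions = defaultdict(list)  # 3-byte prefix -> earlier positions, oldest first
    n = len(data)
    i = 0
    while i < n:
        best_len, best_dist = 0, 0
        if i + MIN_MATCH <= n:
            key = data[i:i + MIN_MATCH]
            limit = min(MAX_MATCH, n - i)
            # Try the most recent candidates first, up to the chain cap.
            for cand in reversed(positions[key][-MAX_CHAIN:]):
                if i - cand > WINDOW_SIZE:
                    break  # remaining candidates are even further back
                length = MIN_MATCH  # the 3-byte key already matches
                while length < limit and data[cand + length] == data[i + length]:
                    length += 1
                if length > best_len:
                    best_len, best_dist = length, i - cand
                    if length == limit:
                        break  # cannot do better than this
        if best_len >= MIN_MATCH:
            yield ("match", best_len, best_dist)
            step = best_len
        else:
            yield ("lit", data[i])
            step = 1
        # Index every position consumed so later data can point back at it.
        for j in range(i, min(i + step, n - MIN_MATCH + 1)):
            positions[data[j:j + MIN_MATCH]].append(j)
        i += step


def expand(tokens) -> bytes:
    """Rebuild the original bytes from tokens (used to check the sketch)."""
    out = bytearray()
    for tok in tokens:
        if tok[0] == "lit":
            out.append(tok[1])
        else:
            _, length, dist = tok
            for _ in range(length):
                out.append(out[-dist])  # byte-by-byte so overlapping copies work
    return bytes(out)
```

The cost per position is bounded by `MAX_CHAIN` comparisons instead of a scan of the whole window, which is where the time/ratio trade-off comes from: a larger cap finds longer matches but runs slower.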

-

3 points

-

Phew, that is a pretty strong opinion! Although I personally am not a fan of the overall style of DQMH, none of my problems are with the scripting/wizards or placeholder text. I think any framework that tries to do "a lot" will be complicated... your own personal framework (which you likely find trivial to use) is likely to be a bit weird to others. DQMH is extremely popular for a reason... To paraphrase the words of a wiser person than I, "please don't yuck someone else's yum"

3 points

-

It is not that LabVIEW MAY unregister the reference, but that it WILL unregister the reference as soon as the top level VI in whose hierarchy the reference was created goes idle. This is by design, and the only way to prevent it is to either keep that hierarchy active until every other user of that refnum has finished, or delegate creation of the refnum to the place where it is needed, for instance through an LV2 style global that maintains the reference in a shift register and, when called, creates the refnum if the shift register contains an invalid refnum. True Actor Framework design kind of mandates that all refnums are created in the context where they are used, not in some other global instance that may or may not keep running for as long as some Actor is using the refnum.

2 points

-

Hi everyone, Just want to share our open source project "Labview Python Bridge". Connect LabVIEW apps with Python apps in real time with multi-processing data queues. https://github.com/jmor2000/labview_python_bridge If anyone has any questions or suggestions for new developments / features, let me know. Cheers Jeff

2 points

-

Are you seriously expecting anyone to install a random executable on their system from an unknown publisher, provided by an anonymous person on the web, where one can't even get a proper link in Google to the actual company page? Sorry, but anyone doing that should not be allowed within 5m of a computer system!

2 points

-

Absolutely echo what Shaun says. Nobody banned them. But most who tried to use them have, after a more or less short time, run from them with many hairs ripped out of their head, a few nervous tics from too much caffeine consumption, and swearing never to try them again. The idea is not really bad, and if you are willing to suffer through it you can make pretty impressive things with them, but the execution of that idea is anything but ideal and feels in many places like a half thought out idea that was eventually abandoned when it was kind of working but before it was a really easily usable feature.

2 points

-

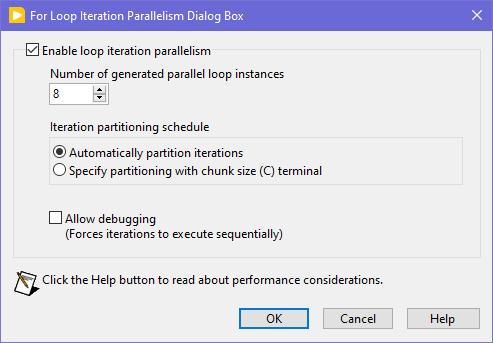

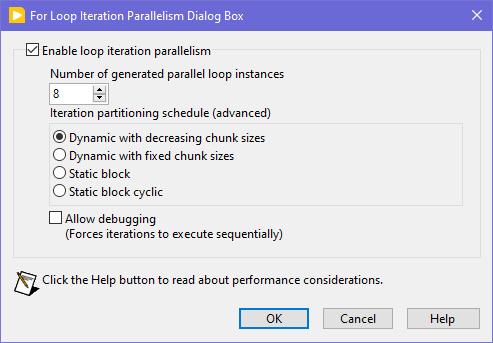

Seems like this one has "escaped everyone's grasp" too.

ParallelLoop.ShowAllSchedules=True

Because it was only checked from the password-protected diagram of ParallelForLoopDialog.vi (LabVIEW 20xx\resource\dialog). Present since LabVIEW 2010. When activated, it allows you to apply a more advanced iteration partitioning schedule. In other words, instead of this you will get this (see the two images above). Could this be useful? I can't say. Maybe in some very specific use cases. In my quick tests I didn't manage to get any increase in performance. It's easy to mess up with those options and make things worse than the default. It can also be changed by this scripting counterpart.

2 points

-

Look at this new download on VIPM https://www.vipm.io/package/bjm_lib_request_power/

2 points

-

You want the ability to override the Equality or Comparison operators? I'm unsure whether it really existed in the OpenG packages, but now you have those neat malleable VIs that let you do that: Search Unsorted 1D Array, Sort 1D Array, Search Sorted 1D Array. They have an additional input to specify your own equals or less function in the form of a custom comparison class or a VI refnum. There's an article to help: Creating a Custom Sorting Function in LabVIEW

2 points

-

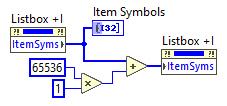

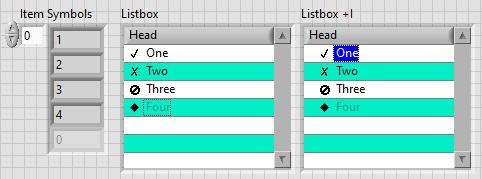

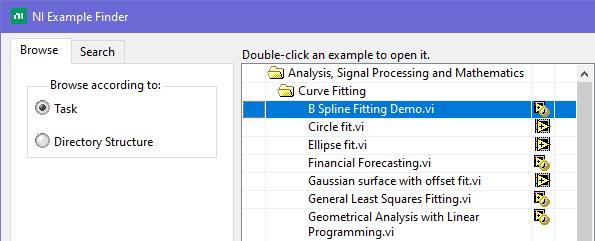

This is exactly what was said in that ancient thread: Tree control in labview. So if you add 65536*N to the Item Symbols property of the Listbox and have the "Enable Indentation" option activated, you shift the symbol/glyph and the text N levels to the right. Could be useful for simple 'parent-child' relationships, if you don't want to use a Tree. And it is still used in the Find Examples / NI Example Finder window.

2 points
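To make the 65536*N arithmetic concrete, here is a small Python sketch of how a symbol index and an indent level could be packed into, and unpacked from, one Item Symbols value. The helper names are hypothetical, and the unpacking assumes the symbol index itself stays below 65536, which the post does not state.

```python
INDENT_UNIT = 65536  # one indentation level, as described in the post


def with_indent(symbol_index: int, levels: int) -> int:
    """Return the Item Symbols value for a symbol shifted `levels` to the right."""
    return symbol_index + INDENT_UNIT * levels


def split_indent(value: int) -> tuple[int, int]:
    """Split a value into (levels, symbol_index), assuming symbol_index < 65536."""
    return divmod(value, INDENT_UNIT)


# A parent row at level 0 and two child rows at level 1, all using symbol 5.
rows = [with_indent(5, 0), with_indent(5, 1), with_indent(5, 1)]
print(rows)
print([split_indent(v) for v in rows])
```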

-

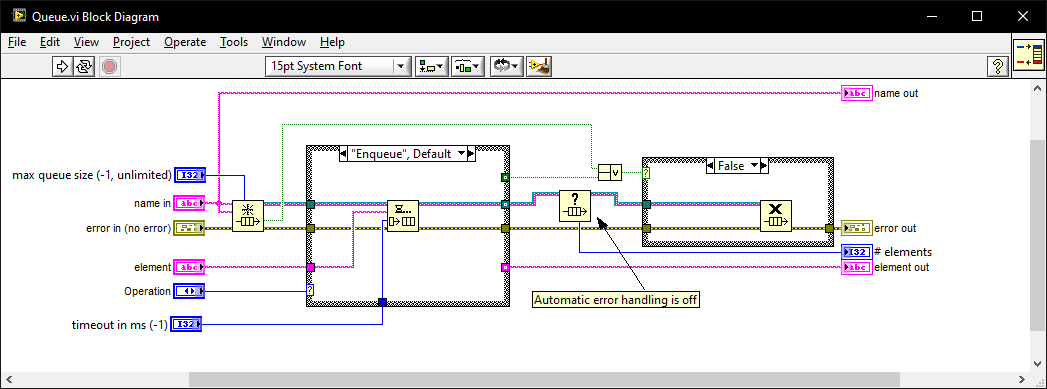

I once went for an interview where they gave me a coding test and asked me to modify it. It was a very long time ago so I don't remember the exact modification they wanted (nothing to do with memory leaks), but I do remember the obtain queue and read queue inside a while loop with the release queue outside. I asked if they wanted me to fix the memory leak as well as make the modifications, and they were a little puzzled until I explained what you have just said. I must have seen (and fixed) this while-loop bug pattern a thousand times since then in various code bases. I also created this VI, which I generally use instead of the primitives, as it initialises on first call, can be called from anywhere, and prevents most foot-shooting by rolling them all into a single VI and ensuring all references but 1 are closed after use.

Queue.vi

2 points

-

1 point

-

1 point

-

Bit of a long shot, but is your FPGA initialising Trig_0 in any way, e.g. setting it to F on startup to get it into a known state? I've just recently fixed an issue on my 1085 system where everything was running normally until I used an FPGA card to set a trigger. Once this had happened, the trigger was reserved by the FPGA and I could no longer control it through DAQmx, even if I closed and restarted the DAQmx tasks, but I also didn't get any DAQmx errors.

1 point

-

My part of the world! Unfortunately, I don't have any connections to the realm you are talking about. But I would look at Northern Kentucky University (just across the Ohio river) as they have a good CS program (or at least used to). There is also Miami University (not to be confused with the one in Florida), Wright State University (where I got my MSE), and University of Dayton about an hour north of here in the Dayton area. University of Kentucky is about 2 hours south (Lexington, Kentucky), which is about the same distance from Ohio University (Athens) or THE Ohio State University (Columbus).

1 point

-

1 point

-

There should be a forum on the dark side for that, but anyway, here you go.

LabGRAD_21.zip

1 point

-

For fun. 😄 "Science isn't about why; it's about why not!" - Cave Johnson

1 point

-

1 point

-

1 point

-

A Timestamp is a 128-bit fixed point number. It consists of a 64-bit signed integer representing the seconds since January 1, 1904 GMT and a 64-bit unsigned integer representing the fractional seconds. As such it has a range of something like +-3*10^11 years relative to 1904. That's about +-300 billion years, about 20 times the current age of our universe and long after our universe will have either died or collapsed. And the resolution is about 1/(2*10^19) seconds, that's a fraction of an attosecond. However, LabVIEW only uses the most significant 32 bits of the fractional part, so it is "only" able to have a theoretical resolution of 2^-32 seconds, or roughly 200 picoseconds. Practically, the Windows clock has a theoretical resolution of 100ns. That doesn't mean that you can get incremental values that increase by 100ns, however. It's how the timebase is calculated, but there can be bigger increments than 100ns between two subsequent readings (and no increment at all).

A double floating point number has an exponent of 11 bits and 52 fractional bits. This means it can represent about 2^53 seconds, or some 285 million years, before its resolution gets coarser than one second. Scale down accordingly to 285 000 years for 1 ms resolution and still 285 years for 1us resolution.

1 point
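The figures above can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: the year length of 365.25 days and the variable names are my own choices, not anything defined by LabVIEW.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # assumed average year length


def to_years(seconds: float) -> float:
    return seconds / SECONDS_PER_YEAR


# 64-bit signed seconds: range on either side of the 1904 epoch.
timestamp_range_years = to_years(2**63)

# 64-bit fraction (full format) versus the upper 32 bits LabVIEW actually uses.
full_resolution_s = 2.0**-64
used_resolution_s = 2.0**-32

# Double precision: 53 significant bits, so integer seconds stay exact up to 2**53.
double_1s_years = to_years(2**53)
double_1ms_years = double_1s_years / 1e3   # span that still resolves 1 ms
double_1us_years = double_1s_years / 1e6   # span that still resolves 1 us

print(f"timestamp range:       +-{timestamp_range_years:.3g} years")
print(f"full 64-bit fraction:  {full_resolution_s:.3g} s")
print(f"32-bit fraction used:  {used_resolution_s:.3g} s")
print(f"double, 1 s steps:     {double_1s_years:.3g} years")
print(f"double, 1 ms steps:    {double_1ms_years:.3g} years")
print(f"double, 1 us steps:    {double_1us_years:.3g} years")
```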

-

😅 You might be waiting a while, I'm mostly interested in compression, not decompression. That being said, in the post I made there is a VI called Process Huffman Tree and Process Data - Inflate Test under the Sandbox folder. I found it on the NI forums at some point and thought it was neat, but I wasn't ready to use it yet. It isn't complete, obviously, but it does the walking through the bits of the tree to get bytes. EDIT: Here is the post on NI's forums I found it on.

1 point

-

1 point

-

The thing I loved about the original LabVIEW was that it was not namespaced or partitioned. You could run an executable and share variables without having to use things like memory maps. I used to have a toolbox of executables (DVM, Power Supplies, oscilloscopes, logging etc.) and each test system was just launching the appropriate executable[s] at the appropriate times. It was like OOP composition for an entire test system but with executable modules. Additionally, crashes were unheard of. In the 1990's I think I had 1 insane object in 18 months and didn't know what a GPF was until I started looking at other languages. We could run out of memory if we weren't careful though (remember the Bulldozer?). Progress!

1 point

-

I have always used this library to prevent the screensaver and Windows lock from occurring. Our IT locks down the computer so the screensaver and lock screen settings cannot be changed. This library basically tells Windows it's in Presentation mode (e.g., slideshow, watching a movie, etc.) so that the screen will not go to the screensaver or lock screen.

1 point

-

1 point

-

You might have more success posting this on the Discord. Most of the conversations happen there these days.

1 point

-

I don't know what drivers are used under the hood, but I've recently used G-Audio to interface to the mic/speakers for a LabVIEW application I was working on.

1 point

-

Thanks, I'll be honest, I'm allergic to Discord. Vehemently so. To the point where I refuse to use it. Just seems like a lot of unfiltered noise to this old man. I'm gonna play with NodeRed and see if it's the tool of choice. And oh, back in the day I was a National Instruments Alliance Member. Dunno if that's still a thing or not. Cheers,

1 point

-

1 point

-

Hi,

My advice for managing multiple versions of LabVIEW is always the same:

>>> Install only one LabVIEW version per partition if you also need to install any driver, toolkit or module, or need other software that integrates with LabVIEW in some way. No exceptions.

I do have VMWare installed with Windows XP to be able to open ancient LabVIEW versions like 6.1 or read the old CHM help files, accepting the sluggish performance of the VM environment. I avoid using it for anything 'serious'. To manage the span between LabVIEW 2018 and 2024 I would divide the disk into two partitions, install two copies of Windows, and then install LabVIEW. To manage multiple partitions and select which one to boot from by default, I recommend installing EasyBCD. But you don't have to. Windows creates a simple multiboot menu itself. There are other options too, but they require some dedication to the art of multiboot management.

¤ You can install Windows on an external USB3 connected disk, SSD or FlashDisk. Microsoft abandoned the concept in 2020, but a program called Rufus revived it and now there are many tools that offer this option. Works splendidly even with Windows 11.

¤ Some laptops (and desktops of course) support easy swapping of the disk, sometimes using a replaceable disk cradle instead of the DVD drive.

Good luck

1 point

-

There is an Application property called Display->All Monitors. It will give you the pixel ranges of the monitors in your system. What I've done is to use the calling VI's position to figure out which monitor it was on and then place the new VI window as needed. You could use a win32 dll call to get the mouse position as well, if that better meets your requirements.

1 point

-

Discussion forums like LAVA and ni.com are challenged these days by LLMs that can answer in a well structured manner very quickly and handle follow-up questions on the spot. Forums have their strengths, but when it comes to basic questions that LLMs can find a lot about, spread across multiple forums, the LLMs win. It is a bit of a catch 22 though, as the LLMs need the forums alive to stay up to date 😮

------------------------------

Here is Grok answering your question of what a DVR is:

DVRs as References: In LabVIEW, DVRs (Data Value References) are a mechanism to access data by reference rather than by value. This means that instead of creating copies of the data (as is typical in LabVIEW's dataflow paradigm), a DVR provides a pointer-like reference to a single memory location where the data resides. This avoids unnecessary data duplication, which can be critical for large datasets or performance-sensitive applications.

Avoiding Copies: By using DVRs, you can manipulate the same data in memory without creating copies, which is especially useful for large arrays, clusters, or other complex data structures. This reduces memory usage and improves performance.

Parallel Operations and Race Conditions: When multiple parallel operations (e.g., parallel loops or VIs) access the same DVR, there is a potential for race conditions if the access is not properly synchronized. LabVIEW provides the In-Place Element Structure to safely access and modify DVR data. This structure ensures that only one operation can read or write to the DVR at a time, preventing race conditions. Without this, simultaneous read/write operations could lead to unpredictable results or data corruption.

Key Points to Add:

Thread Safety: DVRs are not inherently thread-safe. You must use the In-Place Element Structure (or other synchronization mechanisms like semaphores) to avoid race conditions when multiple parallel tasks access the same DVR.
Use Cases: DVRs are commonly used in scenarios where you need to share data between parallel loops, modules, or processes without copying, such as in real-time systems or when managing shared resources. Limitations: DVRs are only valid within the application instance where they are created, and the data they reference is freed when the reference is deleted or the application stops. --------------------- Before DVRs we typically had to resort to cloning of functional globals (VIs), but DVRs make this a little more dynamic and slick. You can have a single malleable VI operate on multiple types of DVRs too e.g., that opens up a lot of nice reuse. If you have an object that requires multiple circular buffers e.g. you can include circular buffer objects in the private data of that object, with the circular buffer objects containing a DVR to an array acting as that buffer... -------------------- Here is ChatGPT comparing functional globals with DVRs: Functional Globals (FGs) and Data Value References (DVRs) are both techniques used in programming (particularly in LabVIEW) to manage shared data, but they offer different approaches and have different strengths and weaknesses. FGs encapsulate data within a VI that provides access methods, while DVRs provide a reference to a shared memory location. Functional Globals (FGs): Encapsulation: FGs encapsulate data within a VI, often a subVI, that acts as an interface for accessing and modifying the data. This encapsulation can help prevent unintended modifications and promote better code organization. Control over Access: The FG's VI provides explicit methods (e.g., "Get" and "Set" operations) for interacting with the data, allowing for controlled access and potential validation or error handling. 
Potential for Race Conditions: While FGs can help avoid some race conditions associated with traditional global variables, they can still be susceptible if not implemented carefully, particularly if the access methods themselves are not synchronized. Performance: FGs can introduce some overhead due to the VI calls, but this can be mitigated by using techniques like inlining and careful design. Example: An FG could be used to manage a configuration setting, with a "Get Configuration" and "Set Configuration" VI providing access to the settings. Data Value References (DVRs): Shared Memory Reference: DVRs are references to a memory location, allowing multiple VIs to access and modify the same data. This is a more direct way of sharing data than FGs. Flexibility: DVRs can be used with various data types, including complex data structures, and can be passed as parameters to subVIs. Potential for Race Conditions: DVRs, like traditional globals, can be prone to race conditions if not handled carefully. Proper synchronization mechanisms (e.g., queues, semaphores, or action engines) are often needed to prevent data corruption. Performance: DVRs can be very efficient, especially when used with optimized data access patterns. Example: A DVR could be used to share a large array between different parts of an application, with one VI writing to the array and another reading from it. Key Differences and Considerations: Control vs. Flexibility: FGs offer more control over data access through their defined interface, while DVRs offer more flexibility in terms of the data types and structures that can be shared. Race Condition Mitigation: While both can be susceptible, FGs can be designed with built-in synchronization mechanisms (like action engines), while DVRs require explicit synchronization mechanisms to prevent race conditions. 
Performance Trade-offs: DVRs can offer better performance in many cases, especially when dealing with large data sets, but this can be offset by the complexity of managing synchronization. Code Readability and Maintainability: FGs can make code more readable and maintainable by encapsulating data access logic, but poorly designed FGs can also lead to confusion.

In summary: Use Functional Globals when: You need controlled access to data, want to encapsulate data management logic, or need to ensure some level of synchronization. Use Data Value References when: You need to share data efficiently between multiple parts of your application, need flexibility in the data types you are sharing, or when performance is critical and synchronization can be handled externally.

It's worth noting that in many cases, a combination of both techniques might be used to leverage the strengths of each approach. For example, a DVR might be used to share data, while a functional global (or an action engine) is used to manage access to that data in a controlled and synchronized manner.

1 point
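For readers who think in text languages, the "reference plus exclusive in-place access" idea from the quoted answers can be approximated in Python. This is only a rough analogy, not LabVIEW's implementation: the `DataValueRef` class and its `in_place` method are invented here, with a lock standing in for the exclusive access that the In-Place Element Structure is described as providing.

```python
import threading
from contextlib import contextmanager


class DataValueRef:
    """Hypothetical stand-in for a DVR: one shared value behind a lock."""

    def __init__(self, value):
        self._value = value
        self._lock = threading.Lock()

    @contextmanager
    def in_place(self):
        """Give one caller at a time a mutable box holding the value."""
        with self._lock:
            box = [self._value]
            yield box
            self._value = box[0]  # write back whatever the caller left in the box

    def read(self):
        with self._lock:
            return self._value


def worker(ref: DataValueRef, count: int) -> None:
    for _ in range(count):
        with ref.in_place() as box:
            box[0] += 1  # read-modify-write happens entirely under the lock


def run_demo(threads: int = 4, count: int = 1000) -> int:
    ref = DataValueRef(0)
    pool = [threading.Thread(target=worker, args=(ref, count)) for _ in range(threads)]
    for t in pool:
        t.start()
    for t in pool:
        t.join()
    return ref.read()


if __name__ == "__main__":
    print(run_demo())
```

Because every increment goes through `in_place`, no updates are lost even though four threads share one value. Doing the read and the write as two separately locked calls would reintroduce the race.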

-

Some people might be tempted to use Obtain Queue and Obtain Notifier with a name and assume that, since the queue is named, each Obtain function returns the same refnum. That is however not true. Each Obtain returns a unique refnum that references a memory structure of a few 10s of bytes, which in turn references the actual Queue or Notifier. So the underlying Queue or Notifier does indeed exist only once per name, BUT each refnum still consumes some memory. And to make matters more tricky, there is only a limited number of refnum IDs of any sort that can be created. This number lies somewhere between 2^20 and 2^24. Basically, for EVERY Obtain you also have to call a Release. Otherwise you leak memory and unique refnum IDs.

1 point
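The Obtain/Release bookkeeping described above can be modelled with a toy registry in Python. Everything here (`NamedQueueRegistry`, `obtain`, `release`) is invented for illustration and only mirrors the behaviour as described in the post: one shared queue per name, a new handle for every obtain, and the queue going away when its last handle is released.

```python
import itertools


class NamedQueueRegistry:
    """Toy model: one shared queue per name, one unique handle per obtain."""

    def __init__(self):
        self._queues = {}    # name -> shared queue contents
        self._handles = {}   # handle id -> name
        self._ids = itertools.count(1)

    def obtain(self, name: str) -> int:
        self._queues.setdefault(name, [])  # created only on the first obtain
        handle = next(self._ids)           # every obtain gets a fresh handle
        self._handles[handle] = name
        return handle

    def release(self, handle: int) -> None:
        name = self._handles.pop(handle)
        if name not in self._handles.values():
            del self._queues[name]         # last handle gone, queue destroyed

    def open_handles(self) -> int:
        return len(self._handles)

    def queue_count(self) -> int:
        return len(self._queues)


registry = NamedQueueRegistry()

# The leaky pattern: obtain inside a loop, never release.
for _ in range(1000):
    registry.obtain("data")
print(registry.open_handles(), registry.queue_count())

# The balanced pattern: every obtain is paired with a release.
balanced = NamedQueueRegistry()
for _ in range(1000):
    h = balanced.obtain("data")
    balanced.release(h)
print(balanced.open_handles(), balanced.queue_count())
```

In the first loop the handle count grows with every iteration even though there is still only one queue, which is the leak the post warns about.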

-

I cannot look at your file, but I suggest saving the data to TDMS or any binary format of your choice. Once the file is saved, you can then convert it to text.

1 point

-

1 point

-

4. WinAPI version using ChooseColor function.

NativeColors.rar

Far from ideal, don't kick too hard. 🙂 Determine Clicked Array Element Index is from here.

1 point

-

Technically it is a resource collector, but not exactly in the same way typical garbage collectors work. Normal garbage collectors work in a way where the runtime system somehow tracks variable usage at runtime by monitoring when variables go out of scope, and then attempts to deallocate any variable that is not a value type in terms of the stack space or scope space it consumes. The LabVIEW resource collector works in a slightly different way: whenever a refnum gets created, it is registered with a refnum resource manager, together with the current top level VI in the call chain and a destroy callback. When a top level VI stops executing, either by being aborted or by simply executing its last diagram element, it informs the refnum resource manager that it is going idle, and the refnum resource manager then scans its registered refnums to see if any is associated with that top level VI and, if so, calls its destroy callback. So while it is technically not a garbage collector in the exact same way as what Java or .Net does, it still is for most practical purposes a garbage collector. The difference is that a refnum can be passed to other execution hierarchies through globals and similar, and as such might still be used elsewhere, so technically it isn't really garbage yet. There are three main solutions for this:

1) Don't create the refnum in an unrelated VI hierarchy to be passed to another hierarchy for use.
2) If you do create it in one VI hierarchy for use in another, keep the initial hierarchy non-idle (running) until you do not need that refnum anymore anywhere.
3) If the refnum is a resource that can be named (e.g. Queues, Notifiers), obtain a separate refnum to the named resource in each hierarchy. The underlying object will stay alive for as long as at least one refnum is still valid. Each obtained refnum is an independent reference to the object, and destroying one (implicitly or explicitly) won't destroy any of the other refnums.

1 point

-

There is a "best practices" document (this too) but I suspect you are looking for a less abstract set of guidelines.

1 point

-

@Ravi Beniwal, I have the same problem; VIPM reports that missing dependency for me too. If it isn't required, could it be removed? If it is required, could it be included? I suspect it is included in version 1.7.028, which does not report the error. I spent (wasted!) time looking for the dependency and downloading it, only to realise it might not be needed, as it launches OK from the LV IDE menu Tools\LabVIEW Task Manager.... Peter

1 point

-

We use the MPSSE.dll LabVIEW driver from Benoit. We are trying I2C reads of 1 byte and of multiple bytes. We expect an ACK for all bytes except the last byte, which should get a NAK. During a read, we understand that the I2C master drives the ACK/NAK. However, the ACKs and NAKs happen randomly. Does anybody have any suggestions? Thank you, Dan

1 point

-

MAT files are now just H5 (HDF) files. Look at the library https://h5labview.sourceforge.io/ and find the example for writing a MAT file. You just need to add a special header at the beginning. I assume the DLLs needed will work on Windows Server, but I am not sure.

1 point

-

Here is a VI that gets the title of the window that is active. You could then continually loop until the title you expect is active, then perform operations. https://forums.ni.com/t5/LabVIEW/Get-Current-Active-Window/m-p/3930389#M1116926

1 point

-

Looks like someone beat me to it! Oh well, I already exported it (also for 2009, incidentally) so I'll post it here in case it'd be more convenient to use a regular VI file.

0 to -4096.vi

1 point

-

I think I have this fixed. Tried a compiled version of the transport library; this worked without issue. I made the timeout changes, which did not seem to have an impact on performance. I then recompiled everything (the server-side code is used in multiple applications on the system); the delay I was seeing with the one message/response went to expected amounts in tests. I've been waiting to test this with the whole system up and going; unfortunately, we've been battling drive issues that are stopping everything else. Can't definitively say it's fixed. Can't point to a smoking gun. I'm appreciating this forum and the people on it right now.

1 point

-

Mwuhahahahaha! Three config tokens have escaped your grasp! I modified them specifically for folks like Flarn! They don't appear as plain text anywhere in the EXE (or in any VI for that matter). Do they guard any great secret of LabVIEW? I'm not telling! But you can have fun poring through the code and looking for interesting bits and trying to figure out what you need to put in your config file. LabVIEW 2013 or later. Good luck.

1 point

-

Basically you need 2 more Property nodes if you want to keep your header color. You must do what QueueYueue said first.

Then:
Active Cell.Active Column Number = -2 (this selects all columns)
Active Item.Row Number = -1 (this selects the column headers)
Active Cell.Background Color = Desired color

Then:
Active Cell.Active Column Number = -1 (this selects row header)
Active Item.Row Number = -2 (this selects all rows)
Active Cell.Background Color = Desired color

1 point

-

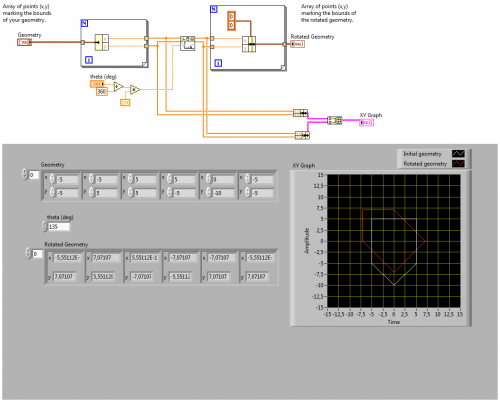

I think that's what the function does behind the scenes. A rectangle is simply one case of any number of geometries you can make with this function's inputs. The NI Vision rotation algorithm is more complete because it will interpolate colours when the rotated pixel positions are not integers, but otherwise it's the same. The rotation matrix in 2D is exactly what you state above.

Rotation of points.vi

1 point
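For reference, here is what the standard 2D rotation matrix looks like when applied to a list of points in Python. It is a sketch of the general formula, not a transcription of the attached VI; the function name and the optional `center` argument are my own additions.

```python
import math


def rotate_points(points, angle_deg, center=(0.0, 0.0)):
    """Rotate (x, y) points by angle_deg around center.

    Uses the standard 2D rotation matrix
        [cos t  -sin t]
        [sin t   cos t]
    which is counter-clockwise when the y axis points up.
    """
    theta = math.radians(angle_deg)
    c, s = math.cos(theta), math.sin(theta)
    cx, cy = center
    rotated = []
    for x, y in points:
        dx, dy = x - cx, y - cy  # move the centre of rotation to the origin
        rotated.append((cx + c * dx - s * dy, cy + s * dx + c * dy))
    return rotated


# Corners of a 4 x 2 rectangle, rotated 30 degrees about its centre.
rectangle = [(0.0, 0.0), (4.0, 0.0), (4.0, 2.0), (0.0, 2.0)]
for x, y in rotate_points(rectangle, 30.0, center=(2.0, 1.0)):
    print(f"({x:.3f}, {y:.3f})")
```

In image coordinates, where y increases downward, the same positive angle appears as a clockwise rotation on screen. The results are generally non-integer, which is where the colour interpolation mentioned above comes in when rotating actual pixels.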