Posts: 5,010

Days Won: 312

Everything posted by ShaunR

-

And the stage is set! In the blue corner, we have the "Triple Buffering Triceratops from Timbuktoo". In the red corner, we have the "Quick Queue Quisling from Quebec". And, at the last minute, in the green corner, we have the old geezers' favourite: the "Dreaded Data Pool From Global Grange". Attachment: Dreaded Data-pool From Global Grange.llb. Tune in next week to see the results.

-

Obviously you don't. Looking forward to your triple buffering and benchmarking it. Maybe put it in the CR?

-

Are you currently using IMAQ Extract Buffer VI and finding it is not adequate?

-

Everything we said before, and use a lossy queue. You are overthinking it because LabVIEW is a "Dataflow paradigm". Synchronisation is inherent! Double/triple buffering is a solution to get around a language problem with synchronising asynchronous processes that LabVIEW just doesn't have. If you do emulate a triple buffer, it will be slower than the solutions we are advocating, because they use dataflow synchronisation, and all the critical sections, semaphores and other techniques required in other languages are not needed. C++ triple buffering is not the droid you are looking for.
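For readers coming from text languages, here is a minimal sketch of what "lossy" means here: when the queue is full, the oldest element is thrown away to make room for the new one. It uses Python's `collections.deque` as a stand-in, not LabVIEW's queue primitives, and the helper name `lossy_enqueue` and the frame names are made up for illustration.

```python
from collections import deque


def lossy_enqueue(queue, item):
    """Append item; if the queue is full, the oldest element is discarded.

    Returns the discarded element, or None if nothing was dropped.
    """
    dropped = queue[0] if len(queue) == queue.maxlen else None
    queue.append(item)  # deque(maxlen=n) silently drops from the left when full
    return dropped


frames = deque(maxlen=3)  # fixed-length, lossy queue
dropped = [lossy_enqueue(frames, f"frame{i}") for i in range(5)]
```

The producer never blocks. A slow consumer simply misses the oldest frames and always sees the most recent ones.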

-

Yes. You are right. I had forgotten about those. What's the betting it's just a queue of IMAQ refs?

-

Can you elaborate on that a bit more? Lossy, how?

-

I think you are overthinking this. The inherent nature of a queue is your lock. Only place the IMAQ ref on the queue when the grab is complete, and make the queue a maximum length of 3 (although why not make it more?). The producer will wait until there is at least one space left when it tries to place a 4th ref on the queue (because it is a fixed-length queue). If you have multiple grabs that represent 1 consumer retrieval (3 grabs, then the consumer takes all three), then just pass an array of IMAQ refs as the queue element. As
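The fixed-length queue idea above can be sketched in a text language roughly like this. It is only an analogy, using Python's `queue.Queue` and threads rather than LabVIEW queues, and the strings like `image_ref_0` are placeholders standing in for IMAQ refs.

```python
import queue
import threading

ref_queue = queue.Queue(maxsize=3)  # fixed length of 3, as suggested above
received = []


def producer(n_frames):
    for i in range(n_frames):
        # Stand-in for a completed grab. put() blocks while the queue is
        # full, so the producer waits without any explicit lock.
        ref_queue.put(f"image_ref_{i}")
    ref_queue.put(None)  # sentinel: no more frames


def consumer():
    while True:
        ref = ref_queue.get()  # blocks until a ref is available
        if ref is None:
            break
        received.append(ref)


threads = [
    threading.Thread(target=producer, args=(6,)),
    threading.Thread(target=consumer),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Nothing in the producer or consumer touches a mutex or semaphore directly. The queue does all the waiting.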

-

DVRs (for the buffers) and semaphores (LabVIEW's "condition variable"). However. You only have one writer and reader, right? So push the DVRs into a queue and you will only copy a pointer. You can then either let LabVIEW handle memory by creating and destroying a DVR for each image or have a round-robin pool of permanent DVRs if you want to be fancy (n-buffering). You were right in your original approach, you just didn't use the DVR so had to copy the data.
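A rough sketch of the round-robin pool variant, assuming Python object references play the role of the DVRs (only the reference travels through the queue, the buffer itself is never copied). The names `free_q`, `ready_q`, `grab` and `consume` and the buffer sizes are invented for the example.

```python
import queue

N_BUFFERS = 3      # n-buffering: size of the permanent pool
FRAME_BYTES = 8    # tiny stand-in for an image buffer

pool = [bytearray(FRAME_BYTES) for _ in range(N_BUFFERS)]
free_q = queue.Queue()   # buffers available to the writer
ready_q = queue.Queue()  # filled buffers waiting for the reader
for buf in pool:
    free_q.put(buf)


def grab(frame_no):
    buf = free_q.get()  # would block if the reader held every buffer
    buf[:] = bytes([frame_no % 256]) * FRAME_BYTES  # fill in place, no new buffer
    ready_q.put(buf)    # only the reference is queued


def consume():
    buf = ready_q.get()
    value = buf[0]      # "process" the frame
    free_q.put(buf)     # hand the buffer back to the pool
    return value


seen = []
for n in range(5):
    grab(n)
    seen.append(consume())
```

With one writer and one reader, the two queues are the only synchronisation needed, and the same three buffers are reused for every frame.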

-

https://www.youtube.com/watch?v=OdB2dy59Waw

-

You don't need to do partial matching. You translate the string "The remaining time is %d Seconds" and use the Format Into String primitive. You might want to take a look at Passa Mak. It will generate all the language files for translation and switch all the UI controls. (Good luck with the Chinese one. I'm not brave enough to attempt the East Asian translations.)
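In text form the idea looks something like the sketch below: the whole format string, placeholder included, is the lookup key, and the number is only substituted after translation. The dictionary layout and the German string are illustrative assumptions of mine, not the format Passa Mak uses.

```python
# Hypothetical translation table: the key is the complete format string.
TRANSLATIONS = {
    "de": {
        "The remaining time is %d Seconds": "Die verbleibende Zeit beträgt %d Sekunden",
    },
}


def translate(template, lang):
    """Return the translated template, or the original if none is known."""
    return TRANSLATIONS.get(lang, {}).get(template, template)


def remaining_time_message(seconds, lang):
    # Translate first, then format, so no partial matching is ever needed.
    return translate("The remaining time is %d Seconds", lang) % seconds


english = remaining_time_message(42, "en")
german = remaining_time_message(42, "de")
```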

-

I think the OP was just having difficulties figuring out how to do #5 and #6. He'll be back again when he runs into #2 between modules.

- 20 replies

Tagged with: modular, application design (and 1 more)

-

MoveFileWithProgress problem from kernel32.dll

ShaunR replied to Houmoller's topic in Calling External Code

Integrating LabVIEW code -

Functional Dataflow Programming with LabVIEW

ShaunR replied to Tomi Maila's topic in LabVIEW Feature Suggestions

I hate strict typing. It prevents so much generic code. I like the SQLite method of dynamic typing where there is an "affinity" but you can read and write as anything. I also like PHP's dynamic typing, but that is a bit looser than SQLite and therefore a bit more prone to type cast issues, though they are still few and far between. That is why you sometimes see things like value+0.0, to make sure that the value is stored in the variable as a double, say. Generally, though, I have spent a lot of time writing code to get around strict typing. A lot of my code would be a lot cleaner and more generic if it didn't have to be riddled with Variant To Data with hard-coded clusters and conversion to every datatype under the sun. You wouldn't need a polymorphic VI for every datatype or a humungous case statement for the same. It's why I choose strings, which are the next best thing, with variants coming in 3rd. <rant> I mean, we have a primitive for string to int, one for string to double, another for exponential (and again, all the same in reverse). Really? Why can't we connect a string straight to an integer indicator and override the auto-guessed type if we need to? </rant> But yes, I think it can be done in LabVIEW. They could do it with variants. Not as good as dynamic typing, but it'd be closer. A variant knows what type the data is and you can connect anything to it, but they failed to do the other end, when you recover the value (I think it was a conscious decision). That is why I call variants "the feature that never was": they crippled them. I think recovery of data is a bit of a blind spot with NI. Classes suffer the same problem. It's always easy getting stuff into a format/type/class, but getting it out again is a bugger.

- 7 replies
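To make the SQLite "affinity" point in the post above concrete, here is a small sketch using Python's built-in `sqlite3` module. The table and column names are arbitrary. The column is declared INTEGER, yet it accepts anything: text that looks like a number is converted, everything else is stored as it is.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE t (v INTEGER)")  # INTEGER is an affinity, not a constraint

# Write a numeric-looking string, a plain string and a float to the same column.
conn.executemany("INSERT INTO t (v) VALUES (?)", [("123",), ("abc",), (4.5,)])

# typeof() reports the storage class SQLite actually used for each value.
rows = conn.execute("SELECT v, typeof(v) FROM t ORDER BY rowid").fetchall()

# Reading "as anything": the same values cast to text on the way out.
as_text = [r[0] for r in conn.execute("SELECT CAST(v AS TEXT) FROM t ORDER BY rowid")]
conn.close()
```

The string "123" comes back as the integer 123, while "abc" and 4.5 keep their own types, all in one "integer" column.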

Tagged with: functional programming, dataflow (and 1 more)

-

Need help: want to build a real-time GPS tracker for multiple movers

ShaunR replied to Aristos Queue's topic in Hardware

Thinking about it: if you go the microcontroller route for the detector, you might as well go for Bluetooth to get the extra range and tell people to keep their IDs visible. I once used a similar idea to upload results data to engineers' phones when they passed by inspection machines on the factory floor. It continuously scanned for Bluetooth phones (not many tablets in those days) and, if it found one, compared the MAC address to a user list. It then pushed the files to their SD card. You may remember the OPP Push software that was in the CR a while ago. That was part of it (the bit that detected and pushed the files).

-

Need help: want to build a real-time GPS tracker for multiple movers

ShaunR replied to Aristos Queue's topic in Hardware

Well, apart from the fact that the phone doesn't need to be out and can stay in their pocket (they only have to visit a page once that auto-refreshes and leave the browser open without killing it), you'll need 3 USRPs to do that passively via their GSM signal (not cheap). But sure. There are lots of ways, from using their phones (which we have covered), RFID tags, GPS pedometers and Wifi triangulation to a myriad of custom solutions. I could carry on for weeks giving solutions with the tech available. It depends on your budget, timescale and the amount of effort you want to put in, and only you currently know that. I get the feeling, though, that you have been asked to do it as the "tech guy" and gone "sheesh, I hope I don't have to build one".

-

Need help: want to build a real-time GPS tracker for multiple movers

ShaunR replied to Aristos Queue's topic in Hardware

No. I'm suggesting you just use a normal webserver with PHP (or LabVIEW if you really must), JavaScript and the phone's browser (HTML5 geolocation). Thanks to the Russians, we now have much better accuracy if your phone can use GNSS. I guess yours can't.

-

I've thought for a long time that we've needed another option apart from just Error and Warning. Errors break VIs, but warnings are overwhelming in number and completely trivial. So much so that they are usually ignored and/or turned off, which makes them ineffective. I think most warnings should be regarded as "hints", and things like your race condition are actual warnings, but they shouldn't break the VI, mainly for Mje's reason that it may not matter but also for "out there" edge cases like the RNG. Personally, I'd love to see warnings (my definition of them) about race conditions. If you could pull it off, it would prevent quite a few of us stepping in those bear traps.

-

Need help: want to build a real-time GPS tracker for multiple movers

ShaunR replied to Aristos Queue's topic in Hardware

Sure, if you just want to use a router as a proximity device and say they are in the building. If you want to know which room they are in, then use RFID and send the data via that Wifi router. You can then have 100 scattered throughout the building and know who's in all the rooms. If, on the other hand, you want to know which room they are in with Wifi, you need 3 of them to do triangulation, with less accuracy and dead rooms. It depends on the granularity required and how much time you want to put in. You have 4 technologies which can all interface to each other and can be used to pinpoint people. Combinations of those technologies will give you differing accuracies and capabilities. I'd want to be able to resolve who was talking to whom, how many are grouped and where, and who's wandered off to where they shouldn't. That's just me, though. If you want a cheap, cheerful and quick solution, then you could just go for them using their own phones (or let them borrow some). Then you don't need Wifi or any fancy hardware (although if you borrowed a USRP from NI, you could set up your own cellphone base station). You could then just track them on Google Maps with a bit of PHP and JavaScript on a webserver. But where's the fun in that?

-

Need help: want to build a real-time GPS tracker for multiple movers

ShaunR replied to Aristos Queue's topic in Hardware

It's unusual to find "buy now" buttons for hardware solutions. Here's one for RFID. Many mobile phones also come with Near Field technologies now, too. It really has to be GPS for long range, though. I think Wifi on a few acres will give huge blind spots (just a gut feeling).

-

Streaming timestamped data to chart, but ignoring gaps in acquisition

ShaunR replied to Mike Le's topic in User Interface

You could use the "Picture Plot" to draw it. If you are heavily dependent on cursors and annotations, then you'd have to handle all that yourself, so that may put you off. Another alternative is to have two graph controls side by side with their scales hidden and use a Picture Plot function to draw the scale. You won't have to worry about alignment, but cursors won't cross the boundaries, so you'd have to "fake" it. In a similar vein, you could have two controls, one on top of the other, and manipulate the start and maximum scales so that they appear to be contiguous. You may get flicker with this, though. With the exception of the first, these are all variations on "don't put them all in one graph", so I expect there are others. We've all gotten used to the built-in features of the graphs, so generally we balk at having to write the code to get the features back. This puts many people off the first option, but you can do some fantastic graphs with the Picture Plots (gradient-shaded limit areas behind the data, anyone?).

-

Need help: want to build a real-time GPS tracker for multiple movers

ShaunR replied to Aristos Queue's topic in Hardware

There probably are things off the shelf (maybe look into car fleet trackers). This sounds like a fun project that you should just do because you can, though. Maybe later your charity can sell it to other organisations (paintball?) to raise some funds. If you get the users to use their own phones, you will even get cell location enhancement, and cell location when GPS is unavailable. Remember that mobile phones are tracking devices that make telephone calls. You could use RFID in addition to GPS. GPS is accurate to a few meters (10?) and RFID is cheap. RFID tags would be great for detecting when a room is occupied and by whom if the GPS cannot distinguish. I would even be tempted to make my own RFID senders with an Arduino or similar, but it really depends on your timescale. How long have you got? What are the time constraints for this project? I'll give your charity some licences for the Websocket API, gratis, if you want to stream the data via websockets to people's browsers on their tablets or phones (you only need them for development). Webservices? Data Dashboard? Meh! I thought you wanted real-time. That technology is sooooo 2000.

-

Well. You mention that you don't want to use LVOOP because it is difficult for novices to grasp, but then advocate a Muddled Verbose Confuser (MVC) architecture where even experts on that design pattern can't agree on what should be in which parts when it comes to real code. As it needs to be simple for novices, I also suggest you throw rotten tomatoes at anyone that mentions "The Actor Framework". Since there may be many people who build on the code, and many will have limited experience, have you thought about a service-oriented architecture? With this approach you only need to define the interfaces to external code written by "the others". They can write their code any way they like, but it won't affect your core code if they stuff it up. You can then create a plugin architecture that integrates their "modules", which communicate with your core application via the interfaces. The module writers don't need to know any complicated design patterns or architecture, or even the details of your core code (however you choose to write it). They will only need to know the API and how to call the API functions.
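As a very rough text-language sketch of that idea (in LabVIEW it would be VIs and a messaging interface, not classes): the core defines one small interface, modules implement it however they like, and the core isolates itself from modules that misbehave. All names here (`Module`, `Core`, `Adder`, `Broken`) are hypothetical.

```python
from abc import ABC, abstractmethod


class Module(ABC):
    """The only thing a module writer needs to know: this interface."""

    @abstractmethod
    def name(self) -> str: ...

    @abstractmethod
    def handle(self, request: dict) -> dict: ...


class Core:
    """Core application: talks to modules only through the interface."""

    def __init__(self):
        self._modules = {}

    def register(self, module: Module) -> None:
        self._modules[module.name()] = module

    def call(self, module_name: str, request: dict) -> dict:
        try:
            return self._modules[module_name].handle(request)
        except Exception as exc:  # a broken module must not take the core down
            return {"ok": False, "error": str(exc)}


class Adder(Module):
    def name(self) -> str:
        return "adder"

    def handle(self, request: dict) -> dict:
        return {"ok": True, "result": request["a"] + request["b"]}


class Broken(Module):
    def name(self) -> str:
        return "broken"

    def handle(self, request: dict) -> dict:
        raise RuntimeError("module author stuffed it up")


core = Core()
core.register(Adder())
core.register(Broken())
good = core.call("adder", {"a": 1, "b": 2})
bad = core.call("broken", {})
```

The broken module returns an error result through the same interface, and the core carries on serving the other modules.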

- 20 replies

Tagged with: modular, application design (and 1 more)

-

Xilinx14_7? Did you install that or did it come with your FPGA?

-

Real-time acquisition and plotting of large data

ShaunR replied to wohltemperiert's topic in LabVIEW General

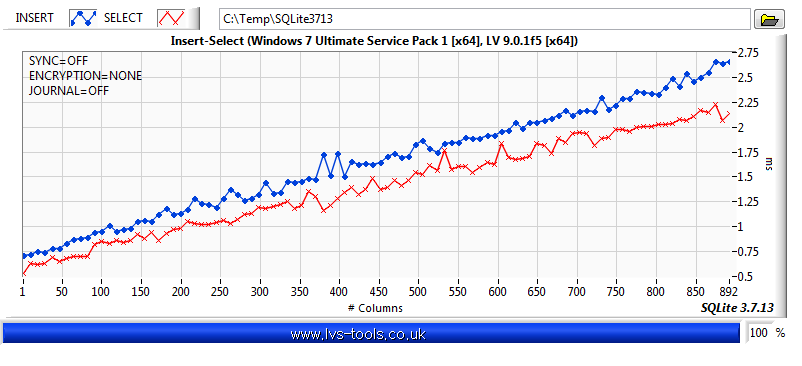

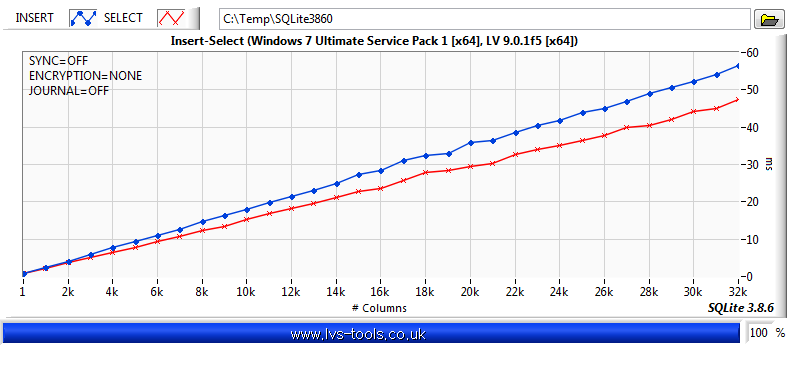

Yup. It is linear. A while later... That was up to the default maximum number of columns (1000 ish for that version). As I was building version 3.8.6 for uploading to LVs-Tools, I thought I would abuse it a bit and compile the binaries so that SQLite could use 32,767 columns (3.8.6 is a bit faster than 3.7.13, but the results are still comparable). I think I've taken the thread too far off from the OP now, so that's the end of this rabbit hole. Meanwhile, back at the ranch...