Everything posted by Rolf Kalbermatter

-

New VIs should simply inherit the default setting you made in Tools->Options->Environment->General, unless you create them from inside a project, in which case they inherit the setting made in the project properties. The bug mentioned by Yair may be related to the fact that auto-saved VIs are restored not in the project context but in the global LabVIEW context, and therefore use the global settings from the Tools menu.

-

The wrapping may be done anyway, even if it is a clean pass-through of all parameters, simply because this was how the VIs always worked and it was actually easier. The lvanlys.dll had to be modified anyway, so just leave the original exports and redirect them to MKL wherever necessary, with or without any parameter massaging. This makes the tedious work of going into every single LabVIEW VI to edit the Call Library Node superfluous. And yes, I have experience with wrapping DLLs from LabVIEW, and I can assure you that the last thing you want when changing something is a change that requires you to go into every single VI and make more or less minor edits. Aside from being a mind-numbing job, it is also VERY, VERY easy to make stupid mistakes by forgetting a certain change in some of the VIs. And then you have to open each and every VI again to make sure that you really changed everything correctly, and, to be safe, do that again and again. Tedious, painful and utterly unnecessary. Instead, just leave the VIs alone, change the underlying DLL in whatever way you need, and you are done. There is still a lot of testing after that, but at least there is one potential source of errors less.
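A minimal C sketch of this idea, assuming a hypothetical legacy export `LV_DotProduct` and a local stand-in `backend_ddot` in place of the real MKL call (MKL is not linked here, and Windows export decorations such as `__declspec(dllexport)` are omitted). The point is that the exported name and parameter list the VIs were configured for stay exactly the same; only the body changes, forwarding the call and reordering parameters where the new library expects something else.

```c
#include <stddef.h>

/* Hypothetical stand-in for the new backend routine, using a BLAS-style
   argument order (n, x, incx, y, incy). In the real DLL this would be a
   call into the vendor library instead. */
static double backend_ddot(int n, const double *x, int incx,
                           const double *y, int incy)
{
    double sum = 0.0;
    for (int i = 0; i < n; i++)
        sum += x[i * incx] * y[i * incy];
    return sum;
}

/* Hypothetical legacy export: same name and signature the Call Library
   Nodes were configured for, so no VI has to be touched. */
double LV_DotProduct(const double *x, const double *y, int n)
{
    /* Guard for empty input (an assumed choice for this sketch). */
    if (n <= 0 || x == NULL || y == NULL)
        return 0.0;
    /* Parameter massaging: reorder the arguments and add unit strides. */
    return backend_ddot(n, x, 1, y, 1);
}
```

Only the DLL is rebuilt; every existing Call Library Node keeps calling `LV_DotProduct` unchanged.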

-

You might be right, but please note that more or less all of those LabVIEW VIs call "internal" functions in lvanlys.dll. In reality, all those functions do is massage the parameters from a LabVIEW-friendly format into the Intel MKL C(++) API format and then call the actual MKL library. So the fact that a VI calls LV_something in that DLL says absolutely nothing about whether it is ultimately executed inside lvanlys.dll or simply forwarded to MKL to do the heavy number crunching. It could be implemented fully in lvanlys.dll because MKL doesn't provide this function, or not in the way the old NI library did, so for compatibility reasons NI maintained the old code. But in most cases it is simply a forward to MKL with minor parameter datatype translations. Even if there is some real implementation in lvanlys.dll for a function, it will still very often call MKL for lower-level functions, and so may still depend on a corrected MKL to fix a bug.
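To illustrate the kind of "parameter massaging" such an LV_ function typically does, here is a sketch that unpacks a LabVIEW-style 1D array handle (a pointer to a pointer to a block holding an int32 element count followed by the data) into the plain pointer-plus-length form that C libraries usually expect. The struct is a simplified stand-in for what LabVIEW generates for a 1D DBL array; real code would include LabVIEW's extcode.h and use its memory manager. `LV_ArraySum`, `backend_dsum` and `make_dbl_array` are hypothetical names.

```c
#include <stdint.h>
#include <stdlib.h>

/* Simplified stand-in for a LabVIEW 1D DBL array: element count first,
   then the data. Such arrays are passed as handles (pointer to pointer). */
typedef struct {
    int32_t dimSize;
    double elt[];              /* flexible array member holding the data */
} DblArray;
typedef DblArray **DblArrayHdl;

/* Hypothetical stand-in for a library routine taking (length, pointer, stride). */
static double backend_dsum(int n, const double *x, int incx)
{
    double sum = 0.0;
    for (int i = 0; i < n; i++)
        sum += x[i * incx];
    return sum;
}

/* Hypothetical LV_ export: translate the handle, then forward. */
double LV_ArraySum(DblArrayHdl arr)
{
    /* LabVIEW treats a NULL handle like an empty array, so do the same. */
    if (arr == NULL || *arr == NULL || (*arr)->dimSize <= 0)
        return 0.0;
    return backend_dsum((int)(*arr)->dimSize, (*arr)->elt, 1);
}

/* Hypothetical demo helper: plain malloc instead of LabVIEW's memory manager. */
DblArray *make_dbl_array(int32_t n, const double *values)
{
    DblArray *a = malloc(sizeof *a + (size_t)n * sizeof(double));
    if (a == NULL)
        return NULL;
    a->dimSize = n;
    for (int32_t i = 0; i < n; i++)
        a->elt[i] = values[i];
    return a;
}
```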

-

Ethercat for LabView2020 on Linux

Rolf Kalbermatter replied to Yaw Mensah's topic in LabVIEW General

No! We used it on an IC-3173 NI Linux RT controller. It should supposedly work on all NI Linux RT hardware.

-

I'm not exactly privy to the details, but most likely NI doesn't even build MKL themselves. They simply take the binaries as released by Intel, package them with their LabVIEW wrapper and are done with it. And there are a number of issues with this approach: 1) NI indeed cannot patch that library themselves anymore but has to wait for Intel to make bug fixes. 2) NI won't pick up a new release every time Intel decides to make some more or less relevant change to that library. Instead they will likely review the list of changes since the last release they picked and decide if it is worth the hassle to rebuild a new MKL + LabVIEW package. This is not a one-hour process of just adding the new DLLs to the old package build; it involves a lot of extra work to make sure everything is correct, and lots and lots of testing too. The moment for such a review is likely a few months before a new LabVIEW release. If Intel happens to make this one important change one month after that, NI will most likely not pick it up until the next review, a few months before the next full LabVIEW release, and you can easily see how it can take 2 years.

-

Making USB-8451 work with PXI running LabVIEW RT / Phar Lap ETS

Rolf Kalbermatter replied to codcoder's topic in Hardware

Almost certainly. This device almost certainly does not use a standard USB device class, which means you will have to program against NI-VISA USB Raw to emulate the commands that the native Windows driver sends to and receives from the device through direct USB device driver calls. Possible? Yes, but certainly not easy or pretty. If it weren't such a pain to use VISA USB Raw on modern OSes, I would long ago have released a driver for the FTDI chips using pure VISA calls.

-

Ethercat for LabView2020 on Linux

Rolf Kalbermatter replied to Yaw Mensah's topic in LabVIEW General

If you had used a LabVIEW Real-Time controller, you could have used the NI Industrial Communication for EtherCAT driver. It does have a learning curve for sure, but I have used it successfully in the past. If you use other libraries, you will have to make sure that they are compiled as 64-bit in order to interface with them through the LabVIEW Call Library Node, since LabVIEW for Linux has only been available as a 64-bit version since 2016. Both libraries you mention are GPL, which can have very significant consequences for using them in a project that you can't or don't want to make open source itself.

-

Actually, not exactly. NI set this compile define to make the shared library multithreading safe, trusting the library developers to have done everything correctly to get this to work as it should, but somehow it doesn't. Still, there is something seriously odd here. I could understand things getting nasty if you had other code running in the background that also accesses this library while you do this test, but if this is the only code accessing the shared library, something is definitely off. There is still only one call into the shared library at the time your PQfinish() executes, so the actual protection against multiple threads accessing this library is really irrelevant. So how did you configure the PGconn "handle"? Is it a pointer-sized integer? You are executing on an IC-7173, which is a Linux x64 target, so these "handles" are 64 bits wide on your target, but only 32 bits if you execute the code on 32-bit LabVIEW for Windows! I'm just throwing out ideas here, but a crash from calling one single function of a library in a reentrant CLN really doesn't make much sense. The only other thing that could be relevant is if this library used thread-local storage, but that would be brain damaged considering that it uses "handles" for its connections and can therefore store everything relevant in there instead.

And a warning anyway: while I doubt that you would find PG libraries that are not compiled as multithreading safe (building without thread safety only really makes sense on targets that lack proper threading support such as Windows threads or Unix pthreads), there obviously is a chance that it could happen. You can choose to implement everything reentrant and, on creation of a new connection, call the function that Shaun showed you. But what then? If that function returns false, all you can do is abort and return an error, since you cannot dynamically reconfigure the CLNs to use the UI thread instead (well, you can by using scripting, but I doubt you want to do that on every connection establishment, and scripting is also not available in a built application). So it does make your library potentially unusable if someone uses a binary shared library that was not compiled to be multithreading safe.
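A small C illustration of why the CLN parameter for such a handle must be pointer-sized: a 64-bit pointer value survives a round trip through a 64-bit (or pointer-sized) integer, but passing it through a 32-bit integer keeps only the low 32 bits. `Conn` is a hypothetical stand-in for an opaque type such as PGconn, and the helper names are made up for this sketch.

```c
#include <stdint.h>

/* Hypothetical opaque connection type, standing in for e.g. PGconn. */
typedef struct Conn { int id; } Conn;

/* Store a connection pointer in a 64-bit integer, as a pointer-sized
   integer CLN parameter would on a 64-bit target, and convert it back. */
uint64_t conn_to_u64(Conn *c) { return (uint64_t)(uintptr_t)c; }
Conn *u64_to_conn(uint64_t h) { return (Conn *)(uintptr_t)h; }

/* What a parameter misconfigured as a 32-bit integer does to a 64-bit
   handle value: the upper 32 bits are silently dropped. */
uint64_t through_int32_param(uint64_t handle_value)
{
    uint32_t truncated = (uint32_t)handle_value;
    return truncated;
}
```

With a made-up handle value such as 0x00007F1234567890, only 0x34567890 arrives on the other side, and any later call using it dereferences garbage.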

-

What do you mean by "threadsafe CLN"? It is rather bogus terminology in this context. What you have is "reentrant", which requires the library to be multithreading safe, and "UI Thread", which allows the library to do all kinds of non-multithreading-safe things. That calling PQisthreadsafe() from any context does not crash is to be expected. This function simply reads read-only information that was created at compile time and put into the library. There is absolutely nothing in that function that could cause threading issues. That every other function simply crashes, even if you observe proper data flow dependency so that functions can never attempt to access the same information at the same time, would be utterly strange. That would not just be the reentrant setting causing multithreading issues but something much more serious and basic. I assume that you at least also tried this with execution highlighting while single-stepping? Does it still crash then?
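A sketch of why such a query function is harmless from any thread, combined with the connect-time check discussed above: the answer is a constant fixed when the library is built, so there is no shared mutable state to race on. `MYLIB_THREAD_SAFE`, `mylib_is_threadsafe` and `mylib_checked_connect` are hypothetical names; libpq itself is not used here.

```c
/* Hypothetical build option; a real library would set its equivalent
   from its build configuration. */
#ifndef MYLIB_THREAD_SAFE
#define MYLIB_THREAD_SAFE 1
#endif

/* Returns a value baked in at compile time: nothing is written, so
   concurrent calls cannot interfere with each other. */
int mylib_is_threadsafe(void)
{
    return MYLIB_THREAD_SAFE;
}

/* Hypothetical connect wrapper: refuse to hand out a connection when the
   library was built without thread safety, because reentrant CLNs cannot
   be switched to the UI thread at run time. Returns 0 on success. */
int mylib_checked_connect(int *out_handle)
{
    if (!mylib_is_threadsafe())
        return -1;               /* caller reports an error and aborts */
    *out_handle = 1;             /* placeholder for a real connection */
    return 0;
}
```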

-

Is it? Then there would indeed be a discrepancy between when the front panel update is executed and when the debug mechanism considers the data to have finally gone through the wire. Strictly speaking, that could be considered a bug, but one on the fringes of "who cares". I guess after working 25+ years in LabVIEW, such minor issues long ago ceased to even bother me. My motto with such things is usually "get over it and live with it; anything else is bound to give you ulcers and high blood pressure for nothing".

-

Reset Low level TCP connection on LV2018

Rolf Kalbermatter replied to Bobillier's topic in LabVIEW General

Ahhh I see, that one however had no string input at that point. But now it's important to know on which platform this executes!! I don't think this VI is a good choice for implementing a protocol driver, given LabVIEW's multiplatform nature. The appended EOL depends on the platform this code runs on, while the device you are talking to most likely does not care whether it is contacted by a program running on Windows, Mac or Linux, but simply expects a specific EOL. Any other EOL is bound to cause difficulties!
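In text-based code the same principle looks like this: take the terminator from the device protocol, never from the host platform. This C sketch assumes a device that expects "\r\n"; `DEVICE_EOL` and `build_command` are hypothetical names, and the actual terminator must come from the device documentation.

```c
#include <string.h>

/* Terminator defined by the (assumed) device protocol, identical on
   Windows, Mac and Linux hosts. */
#define DEVICE_EOL "\r\n"

/* Hypothetical helper: copies cmd plus DEVICE_EOL into buf. Returns the
   number of bytes to send, or -1 if buf is too small. */
int build_command(char *buf, size_t size, const char *cmd)
{
    size_t len = strlen(cmd);
    size_t eol = strlen(DEVICE_EOL);
    if (len + eol + 1 > size)
        return -1;
    memcpy(buf, cmd, len);
    memcpy(buf + len, DEVICE_EOL, eol + 1);   /* also copies the NUL */
    return (int)(len + eol);
}
```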

internal warning 0x occurred in MemoryManager.cpp

Rolf Kalbermatter replied to X___'s topic in LabVIEW General

You either have a considerably corrupted LabVIEW installation or are executing some external code (through a Call Library Node, or possibly even as an ActiveX or .Net component) that is not behaving properly and corrupts memory! At least for the Call Library Node, it could also be an incorrect configuration of the node itself that causes this. Good luck hunting for this. The best way, if you have no suspicion about possible corrupting candidates, is to "divide and conquer". Start by excluding part of your application from executing, then run it for some time and see if those errors disappear. Once you have found a code part that seems to be the culprit, disable smaller parts of that code and test again. It ain't easy, but you have to start somewhere. Just because your program (or the test VIs that come with such an external code library) doesn't crash right away when executing does not guarantee that it is fully correct and not corrupting memory anyhow. Not every corruption leads immediately to a crash. Some may just cause slight (or not so slight) artefacts in measurement data, some may corrupt memory that is accessed later (sometimes as late as when you try to close your VIs or shut down LabVIEW, and LabVIEW dutifully wants to clean up all resources and then stumbles over corrupted pointers), and usually only when serious things like stack corruption happen do you get an immediate crash.
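The "divide and conquer" search can be sketched as a bisection over the parts of an application. This C sketch assumes exactly one culprit part and a symptom that reproduces reliably whenever that part runs; real memory corruption is often less cooperative, so each step may need long test runs. `find_culprit` is a hypothetical name, and `simulated_symptom` merely fakes a test run for demonstration.

```c
#include <stdbool.h>

/* Runs the application with only parts [lo, hi) enabled and reports
   whether the symptom appeared. */
typedef bool (*symptom_fn)(int lo, int hi, void *ctx);

/* Hypothetical helper: bisects parts [0, n) and returns the index of the
   suspect part, assuming a single, reliably reproducible culprit. */
int find_culprit(int n, symptom_fn shows_symptom, void *ctx)
{
    int lo = 0, hi = n;
    while (hi - lo > 1) {
        int mid = lo + (hi - lo) / 2;
        if (shows_symptom(lo, mid, ctx))
            hi = mid;       /* symptom with only the first half enabled */
        else
            lo = mid;       /* otherwise assume it is in the second half */
    }
    return lo;
}

/* Demo only: pretends that part *ctx is the one corrupting memory. */
bool simulated_symptom(int lo, int hi, void *ctx)
{
    int bad = *(const int *)ctx;
    return bad >= lo && bad < hi;
}
```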

-

You are trying to force your mental model onto LabVIEW data flow. But data flow does not mandate or promise any specific order of execution beyond what data flow itself strictly defines. A LabVIEW diagram typically processes all input terminals (controls) and all constants on the top-level diagram (outside any structure) first and then goes on to the rest of the diagram. The last thing it does is process all indicators on the top-level diagram. There is no violation of any rule in doing so. Updating front panel indicators before the entire VI is finished is only necessary if the corresponding terminal is inside a structure. Clumping the update of all indicators on the top-level diagram into one single action at the end of the VI execution does not delay the time the VI finishes, but can save some performance. It also relates to the old rule that it is better to put pass-through input and output terminals on the top-level diagram and not bury them somewhere inside structures, aside from other factors such as readability and the problem of output indicators in subVIs potentially not being updated at all, retaining some data from a previous execution and passing that out.

-

Reset Low level TCP connection on LV2018

Rolf Kalbermatter replied to Bobillier's topic in LabVIEW General

What is that icon with the carriage return/line feed doing? Any platform-specific code in there? If your other side specifically expects a carriage return, a line feed or both together, and just ignores other commands, you could get similar behaviour.

-

Something is surely off here: you say that the checksum is in the 7th byte and the count in the 8th. But aside from the fact that it is pretty stupid to add the count of the message at the end (it is very hard to parse a binary message if you don't know the length, and you only know that length if you read exactly the right amount of data), those 70, 71, 73 and so on bytes definitely have nothing to do with the count of bytes in your message. Besides, what checksum are you really dealing with? A typical CAN frame uses a 15-bit CRC checksum. This is what the SAE_J1850 fills in on its own, and you can't even modify it when using that chip. It would seem that what you are dealing with is a very device-specific encoding. There could be a CRC in there of course, but that is totally independent of the normal CAN CRC. As far as the pure CAN protocol is concerned, the 8 bytes of your message are the pure data payload, and there is some extra CAN framing around those 8 bytes that you usually never see at the application level. As such, adding an 8-bit CRC to the data message is likely a misjudgement by the device manufacturer. It adds basically nothing to the 15-bit CRC check that the CAN protocol already performs one protocol layer lower.
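For reference, this is roughly the CRC a CAN controller computes on its own: a bitwise sketch of the classic CAN CRC-15 with generator polynomial x^15 + x^14 + x^10 + x^8 + x^7 + x^4 + x^3 + 1 (0x4599 without the top bit). It runs over the unstuffed bit stream from start of frame through the data field, which the application normally never sees, so it is entirely separate from any checksum byte inside the 8 data bytes. The one-bit-per-byte input format and the name `can_crc15` are simplifications chosen for this sketch.

```c
#include <stddef.h>
#include <stdint.h>

/* Bitwise classic CAN CRC-15. `bits` holds one bit per byte (0 or 1):
   the unstuffed frame bits from start of frame through the data field. */
uint16_t can_crc15(const uint8_t *bits, size_t nbits)
{
    uint16_t crc = 0;
    for (size_t i = 0; i < nbits; i++) {
        int next = (bits[i] & 1) ^ ((crc >> 14) & 1);  /* bit shifted out */
        crc = (uint16_t)((crc << 1) & 0x7FFF);         /* keep 15 bits */
        if (next)
            crc ^= 0x4599;
    }
    return crc;
}
```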

-

Is that other application a normal Windows app or a LabVIEW executable? In most normal Windows apps each control (button, numeric etc) is really another window that is a child window of the main window. LabVIEW applications manage their controls entirely themselves and don't use a window for each of them. They only use a window for the main window of each front panel and for ActiveX/.Net controls.

-

1D Array to String not compiling correctly

Rolf Kalbermatter replied to G-CODE's topic in OpenG General Discussions

LabVIEW for Mac OS Classic actually used a "\r" character for this, since the old Mac OS for some reason used yet another EOL indicator. Strictly speaking, this is not equivalent to the originally intended implementation, since it would remove multiple empty lines at the end while the original one only removed one EOL. However, the Array to Spreadsheet String function should not produce empty lines (except maybe if you enter a string array of n * 0 elements), and that does not apply to this 1D array use case.
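The difference described above, removing exactly one trailing EOL versus removing all of them (and with them any empty lines at the end), can be sketched in C as follows. Both function names are hypothetical, and both treat "\r\n", "\n" and "\r" as end-of-line markers.

```c
#include <string.h>

/* Hypothetical helper: removes exactly one trailing EOL ("\r\n", "\n" or
   "\r") in place; any earlier empty lines are kept. */
void strip_one_eol(char *s)
{
    size_t n = strlen(s);
    if (n >= 2 && s[n - 2] == '\r' && s[n - 1] == '\n')
        s[n - 2] = '\0';
    else if (n >= 1 && (s[n - 1] == '\n' || s[n - 1] == '\r'))
        s[n - 1] = '\0';
}

/* Hypothetical helper: removes every trailing '\r' and '\n', which also
   swallows empty lines at the end. */
void strip_all_eols(char *s)
{
    size_t n = strlen(s);
    while (n > 0 && (s[n - 1] == '\n' || s[n - 1] == '\r'))
        s[--n] = '\0';
}
```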

Importing shared Library .so in Linux.

Rolf Kalbermatter replied to Yaw Mensah's topic in Code Repository (Certified)

LabVIEW doesn't handle name mangling at all. It would be an exercise in futility to try, since GCC does it differently than MSVC, just to name two. There is no standard for how names should be mangled, and each compiler uses its own scheme. There is some code in LabVIEW that tries to work around some common decorations of function names, such as a prepended underscore: if the Call Library Node can't find a function in the library, it prepends an underscore to the name and tries again. Stdcall-compiled functions also have, by default, a decoration at the end of the function name: an at sign (@) followed by a number that indicates the number of bytes that have to be pushed on the stack for this function call. LabVIEW tries to match undecorated names to names with the stdcall decoration if possible, but that can go wrong if you have multiple functions with the same name but differently sized parameters. That is not possible in standard C, and while C++ could theoretically do it, I have never seen it in the wild so far.

One other speciality of LabVIEW under Windows is when a function name consists only of a decimal number and nothing else. In that case it interprets the name as an ordinal number and calls the function through its ordinal. This is an obscure Windows feature that is seldom used nowadays, but Windows does have some undocumented functions that are only exported by ordinal. Every exported function has an ordinal number, but not every exported function needs an exported name. This was supposedly mostly done to save some executable file size, since the names in the export table can add up to shockingly many kB of data. What the OP describes seems to be one of these algorithms, trying to match a non-existing function name to one that is present in the library, somehow going haywire.
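A simplified C sketch of the kind of fallback lookup described above, applied to a list of export names: exact name first, then a prepended underscore, then a stdcall-style "name@N" or "_name@N" match that is only accepted if it is unique, plus a check for purely numeric names that would be treated as ordinals. This is not LabVIEW's actual code; `resolve_export` and `looks_like_ordinal` are hypothetical names, and a real loader would query the DLL's export table instead of a string list.

```c
#include <ctype.h>
#include <stddef.h>
#include <string.h>

static int all_digits(const char *s)
{
    if (*s == '\0')
        return 0;
    for (; *s != '\0'; s++)
        if (!isdigit((unsigned char)*s))
            return 0;
    return 1;
}

/* A name consisting only of decimal digits would be used as an ordinal. */
int looks_like_ordinal(const char *name) { return all_digits(name); }

/* True if exp is exactly name followed by "@<digits>". */
static int is_stdcall_of(const char *exp, const char *name)
{
    size_t n = strlen(name);
    return strncmp(exp, name, n) == 0 && exp[n] == '@' && all_digits(exp + n + 1);
}

/* Hypothetical lookup: returns the index of the matching export, -1 if
   nothing matches, -2 if several stdcall-decorated names match. */
int resolve_export(const char *const *exports, int count, const char *name)
{
    for (int i = 0; i < count; i++)              /* 1. exact match */
        if (strcmp(exports[i], name) == 0)
            return i;
    for (int i = 0; i < count; i++)              /* 2. "_name" */
        if (exports[i][0] == '_' && strcmp(exports[i] + 1, name) == 0)
            return i;
    int found = -1;                              /* 3. "name@N" or "_name@N" */
    for (int i = 0; i < count; i++) {
        const char *e = exports[i];
        if (is_stdcall_of(e, name) || (e[0] == '_' && is_stdcall_of(e + 1, name))) {
            if (found >= 0)
                return -2;                       /* ambiguous decoration */
            found = i;
        }
    }
    return found;
}
```

The ambiguity case (two `_Read@N` exports with different N) is exactly where such guessing can pick the wrong function, so this sketch refuses instead.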

Communication with hardware using Serial connection (RS232)

Rolf Kalbermatter replied to Shaun07's topic in LabVIEW General

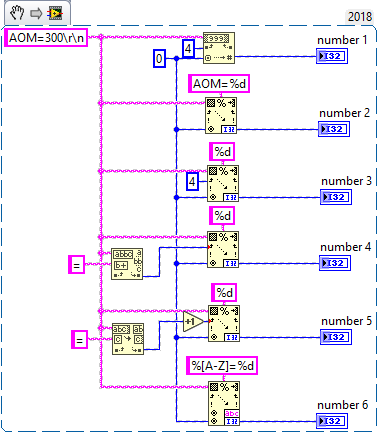

There are about 500+ ways to tackle this. You could use a Scan from String with the format string AOM=%d. Or use the format string %d with an offset of 4 wired. Or, a little more flexible and error-proof, first check where the = sign is and use the position just past that sign as the offset input to the Scan from String above.
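For comparison, the three approaches map onto C's sscanf roughly like this. The reply format "AOM=<number>" comes from the question; the three function names are hypothetical.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helpers: each returns 1 on success and stores the value in *out. */

/* 1. Fixed format string, like Scan from String with "AOM=%d". */
int parse_fixed(const char *reply, int *out)
{
    return sscanf(reply, "AOM=%d", out) == 1;
}

/* 2. Skip a known 4-character prefix, like wiring offset 4 with "%d". */
int parse_offset(const char *reply, int *out)
{
    return strlen(reply) >= 4 && sscanf(reply + 4, "%d", out) == 1;
}

/* 3. Locate the '=' first, then scan whatever follows it. */
int parse_after_equals(const char *reply, int *out)
{
    const char *eq = strchr(reply, '=');
    return eq != NULL && sscanf(eq + 1, "%d", out) == 1;
}
```

The third variant still works if the device inserts spaces around the "=", where the fixed format string fails.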

[CR] MPSSE.dll LABview driver

Rolf Kalbermatter replied to Benoit's topic in Code Repository (Uncertified)

It does for me.

-

There are various packages around that do different things to different degrees. Most of them are actually APIs that already existed in the early days of Windows. Things that come to mind are querying the current computer name or user name, or actually authenticating with the standard Windows credentials. Others deal with disk functionality, although the current LabVIEW File nodes offer many of those functionalities since they were reworked in LabVIEW 8.0. Then there are the ubiquitous window APIs that allow controlling LabVIEW and other application windows. As far as LabVIEW is concerned, most of those things have long been possible through VI Server too, but the possibility to integrate an external app as a child window can be interesting sometimes (although in many cases it is a questionable design choice). There are of course many newer Windows APIs too, but a lot of them are either too specialised or too complex to be of much interest to most people. For instance, there have been many attempts at accessing the Bluetooth Low Energy APIs in Windows, but that API is complex, badly documented and riddled with compatibility issues, which would make a literal translation with one LabVIEW VI per API function a very useless idea for almost every LabVIEW user. But once you start down the path of creating a more LabVIEW-user-friendly interface, it is usually easier to develop a wrapper library in C that takes care of all the low-level hassles and provides a more concise and clean API to the LabVIEW environment, so that one does not have to perform very involved C compiler acrobatics on the LabVIEW diagram to satisfy the sometimes rather complicated memory management requirements of those APIs. The problem with the original idea of a metaformat for importing Windows APIs, however, is that the difficulty of using such an API is very often not limited to making it available in LabVIEW through a Call Library Node.
That is indeed specialised work and can require a deep understanding of C compiler details and memory management techniques, but the really difficult part is understanding how to use those APIs properly, and that use often also influences how you should actually import the API, as dadreamer pointed out with the example of string or array pointers that can be passed either a NULL value or an actual pointer. It would be almost impossible to provide this functionality in the current Call Library Node implementation, since LabVIEW does not distinguish between an empty string (or array) and a NULL pointer (in fact, to the outside LabVIEW has nothing like a NULL pointer, since it does not provide pointers). Internally, one of the many optimizations is that all LabVIEW functions happily accept a NULL handle to mean the same as a valid handle with the length value set to 0. How do you tell LabVIEW that an empty string should pass a NULL value in one case and a pointer to an empty string in the other? Some APIs will crash when passed a NULL pointer; others will do weird things when receiving an empty C string pointer. And that might not even be something you want in the Call Library Node configuration, since there are APIs that give a NULL pointer a very different meaning (such as using a specific default value) than an empty C string (such as omitting this parameter). This has to be programmed explicitly anyway, using a case structure for both cases, and only a human can make this decision at this point, based on the prose in the function documentation. A metaformat can say whether a pointer is allowed to be NULL or not, but being allowed to be NULL doesn't automatically mean that an empty string should be passed as a NULL value. And all of that is not really possible to describe in a metaformat in a way that is parsable by an automated tool.
It can be described in the prose of the Programmer's Manual for that function, but often isn't, or only in a way that says nothing more than what the parameter names already suggest. And as mentioned, Windows APIs make up only a small fraction of the functions that I have imported through the Call Library Node in my over 25 years of LabVIEW work.
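A minimal C sketch of why this decision belongs in a wrapper (or in explicit diagram code) rather than in the Call Library Node configuration: a hypothetical API gives NULL and "" different meanings, and the wrapper maps an empty LabVIEW string to one or the other depending on what the documentation says. `api_set_label`, `wrap_set_label` and the `label_mode` values are all made up for illustration.

```c
#include <stddef.h>

/* Hypothetical API: NULL selects a built-in default, "" means no label,
   anything else is a custom label. */
enum label_mode { LABEL_DEFAULT, LABEL_NONE, LABEL_CUSTOM };

enum label_mode api_set_label(const char *label)
{
    if (label == NULL)
        return LABEL_DEFAULT;
    if (label[0] == '\0')
        return LABEL_NONE;
    return LABEL_CUSTOM;
}

/* Hypothetical wrapper. Assumed: the CLN passes a pointer to a possibly
   empty C string, not NULL. Whether "empty" should become NULL is a
   per-parameter decision taken from the API documentation (empty_as_null). */
enum label_mode wrap_set_label(const char *lv_string, int empty_as_null)
{
    if (empty_as_null && lv_string[0] == '\0')
        return api_set_label(NULL);
    return api_set_label(lv_string);
}
```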

-

NI abandons future LabVIEW NXG development

Rolf Kalbermatter replied to Michael Aivaliotis's topic in Announcements

That was very lame of Indy! 😆

-

NI abandons future LabVIEW NXG development

Rolf Kalbermatter replied to Michael Aivaliotis's topic in Announcements

While I prefer non-violence, I definitely feel more for a sword than a gun. It has some style. 😎

-

The potential is there, and if done well (which always remains the question) it might indeed be possible to integrate this into the Import Shared Library wizard. But it would be a lot of work in an area that very few people really use. The Win32 API is powerful, but not the complete answer to God, the universe and everything. Also, there are areas in Windows you cannot really access through that API anymore. In addition, I wonder what Microsoft's motivation for this is, considering their push to go more and more towards UWP, which is an expensive abbreviation for a .Net-only Windows kernel API without an underlying Win32 API layer as in the normal desktop version. And at the same time, purely for users' safety (of course 😄), also a limitation to only install apps from the online Windows Store. 😀 But the Win32 API is only a very small portion of the external code you will typically want to interface to. It does not cover your typical device instrument driver that comes in DLL form, often with its latest update 10 years ago and with support only in the form of obscure online posts full of guesswork and wrong assumptions. Also, it won't help with shared libraries from open source projects, unless some brave contributor dives into the topic and creates that meta description for them. But creating such a meta description for an API is a lot of very specialised work that is almost impossible to automate. Microsoft may really do it, although I'm still sceptical whether it will ever really be finished, but most others will probably throw their hands in the air in despair the moment they lay eyes on the documentation of this meta description format. 😎 In the best case it will be several years before there is any significant adoption of this by parties other than Microsoft, and only if Microsoft actively lobbies other major software vendors for it.
In reality, someone at Microsoft will likely invent some hand brakes in the form of patents or special signing authorities that make adoption of this by other parties extra difficult. And it won't help one iota on the Linux and Mac platforms, which are also interesting targets for the Call Library Node.