Everything posted by Rolf Kalbermatter

-

Ah, the so-called system color indices. I forgot about them. They are determined on startup based on the current OS scheme, or possibly again when Windows sends a system message that the OS scheme has changed.

-

Well, a color in LabVIEW is simply an int32 with the lower 24 bits being an RGB color when the most significant byte is 0. There are a few special constants when the most significant byte is exactly 1: 0x1000000 indicates transparent, 0x1000001 is a special value for the most recent color, and 0x1000002 indicates an invalid color. I don't think there are any other values above 0x1000002 that have any meaning in LabVIEW.

The color box is a control with special display logic that shows the transparent color with the T on top. It is meant as a color chooser more than a visual object, and if it just displayed transparent as, well, transparent, it would be hard for a user to know that it is a special color. You can do that with a boolean whose color is set to transparent if you need to. The boolean doesn't have special logic to display the T to indicate that it is transparent.
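As a rough sketch of the encoding described above, the following C helpers split such a value into its parts. The names (`lv_color_to_rgb`, `LV_COLOR_TRANSPARENT` and so on) are made up for illustration and are not LabVIEW API, and the 0x00RRGGBB byte order is an assumption on my side. The value is treated as unsigned only to keep the bit shifts well defined.

```c
#include <stdbool.h>
#include <stdint.h>

/* Special values from the post above (most significant byte == 1).
   The constant names are hypothetical, not taken from any LabVIEW header. */
#define LV_COLOR_TRANSPARENT 0x01000000u
#define LV_COLOR_MOST_RECENT 0x01000001u
#define LV_COLOR_INVALID     0x01000002u

typedef struct {
    uint8_t r, g, b;
} Rgb;

/* Returns true and fills *out when the value is a plain RGB color
   (most significant byte is 0). Returns false for the special constants
   and for any other value with a non-zero top byte.
   Assumes the layout 0x00RRGGBB. */
static bool lv_color_to_rgb(uint32_t color, Rgb *out)
{
    if ((color >> 24) != 0)
        return false;
    out->r = (uint8_t)((color >> 16) & 0xFF);
    out->g = (uint8_t)((color >> 8) & 0xFF);
    out->b = (uint8_t)(color & 0xFF);
    return true;
}

/* True only for the one value that means "transparent". */
static bool lv_color_is_transparent(uint32_t color)
{
    return color == LV_COLOR_TRANSPARENT;
}
```

A caller would check `lv_color_is_transparent()` first and only then try to interpret the value as RGB.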

-

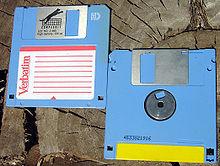

LabVIEW 2.5 was distributed on 3.5" floppy disks, about 4 or 5 of them in 1.44MB high density format. I ditched them several years ago as it is pretty hard to find a drive nowadays to read them. Later releases came on substantially more floppy disks. I don't think CD-ROM was an option before LabVIEW 6.0.

-

Thousands of releases? I kind of doubt it. Leaving aside LabVIEW prior to the multiplatform version (2.2.x and earlier, which were Macintosh only) there have been 2.5, 3.0, 3.1, 4.0, 5.0, 5.1, 6.0, 7.0, 7.1, 8.0, 8.2, 8.5, 8.6, 2009, 2010, 2011, 2012, 2013, 2014, 2015, 2016, 2017, 2018, 2019 releases so far. Of each of them there was usually one and rarely two maintenance releases, and of each maintenance release between 1 and 8 bug fix releases. This probably only amounts to about 100 releases in total and maybe another 50 for beta releases of these versions (a beta usually has 2 to 4 intermediate releases, although that tends to be no more than 2 in the last 10 years or so).

I'm not aware of any LabVIEW release that had the debug symbols exposed. PDBs were used even in Microsoft Visual C 6.0, which was probably the first version used by NI to create a released LabVIEW version (NI only switched to Microsoft C for the standard 32-bit builds for Windows NT; the Windows 3.1 versions of LabVIEW were created using Watcom C 10.x, which was the only compiler able to create full 32-bit executables that could run on 16-bit Windows 3.1 through the built-in DOS extender runtime). Microsoft makes this pretty hard to happen by accident anyhow, as such DLL/EXE files would normally link to the debug version of the Microsoft C Runtime library, and you can't install that legally on a computer without installing the entire Microsoft C Compiler contained in the Visual Studio software. There is definitely no downloadable installer for the debug version of the C runtime engine.

The only early leak I'm aware of was that the original LabVIEW 2.5 prerelease contained a huge extcode.h file in the cintools directory that showed much more than the officially documented LabVIEW manager functions. About half of it was still pretty much unusable, as you needed other functions that were not exposed in there to make use of some of the features, and a lot of those functions were removed from the exported functions around LabVIEW 4.0 and 5.0 as they were considered obsolete or undesirable pollution of the exported symbols list. But it did contain a few interesting functions that are still exported from the LabVIEW kernel but not declared in the current extcode.h file. They fixed that extcode.h bug before the official release of LabVIEW 3.0, which was the first non-beta version of LabVIEW running on computers other than Macintosh. (2.5 was basically a beta release, called a prerelease version, to have something to show for NI Week 1992 that runs on Windows 3.1, and there was a 2.5.1 and I believe a 2.5.2 bug fix release of it in 1993.)

Also, lvrt.dll is a development that only got introduced around LabVIEW 6.0. As this was released in 2000 it most likely used at least Microsoft Visual Studio C++ 6.0. Before that the application builder concatenated the generated runtime LLB to a stub executable that contained the entire LabVIEW runtime engine. That was a pretty neat feature as it created a single file executable, but as LabVIEW was extended and more and more functionality was implemented in external files and DLLs that get linked dynamically, this became pretty much unmaintainable in the long run and the entire runtime engine was externalized.

-

Coining a phrase: "a left-handed scissors feature"

Rolf Kalbermatter replied to Aristos Queue's topic in LabVIEW General

Part of this is the Macintosh legacy. You could make a case that Microsoft is at fault, as they had to invent differences to the Mac, either for the sake of being different or maybe also to not give too much fodder for a court case about plagiarism.

DLL Linked List To Array of Strings

Rolf Kalbermatter replied to GregFreeman's topic in Calling External Code

There were a few errors in how you created the linked list. I did this and did a quick test and it seemed to work. The junk in the debugger is expected. The debugger does not know anything about LabVIEW Long Pascal handles and that they contain an int32 cnt parameter in front of the string and no NULL termination character. So it sees a char array element and tries to interpret it until it sees a NULL value, which is of course more or less beyond the actual valid information.

```c
#include "extcode.h"
#include <stdlib.h>
#include <string.h>

typedef struct IIR_USB_RELAY_DEVICE_INFO
{
    char *serial_number;
    struct IIR_USB_RELAY_DEVICE_INFO *next;
} IIR_USB_RELAY_DEVICE_INFO_T;

/* Build a small linked list with three serial numbers for testing */
static IIR_USB_RELAY_DEVICE_INFO_T* iir_usb_relay_device_enumerate(void)
{
    int i, len;
    IIR_USB_RELAY_DEVICE_INFO_T *ptr = NULL, *deviceInfo = NULL;
    const char* sn[] = { "abcd", "efgh", "ijkl", NULL };

    for (i = 0; sn[i]; i++)
    {
        IIR_USB_RELAY_DEVICE_INFO_T* info = (IIR_USB_RELAY_DEVICE_INFO_T*)malloc(sizeof(IIR_USB_RELAY_DEVICE_INFO_T));
        /* Copy the string including its terminating NULL character */
        len = (int32)strlen(sn[i]) + 1;
        info->serial_number = (char*)malloc(len);
        memcpy(info->serial_number, sn[i], len);
        info->next = NULL;
        if (!deviceInfo)
        {
            deviceInfo = info;
        }
        else
        {
            ptr->next = info;
        }
        ptr = info;
    }
    return deviceInfo;
}

static void iir_usb_relay_device_free_enumerate(IIR_USB_RELAY_DEVICE_INFO_T *deviceInfo)
{
    IIR_USB_RELAY_DEVICE_INFO_T *ptr;

    while (deviceInfo)
    {
        ptr = deviceInfo;
        deviceInfo = deviceInfo->next;
        free(ptr->serial_number);
        free(ptr);
    }
}

#define EXPORT_API __declspec(dllexport)

/* Make sure to wrap any data structure definitions that are passed from and to LabVIEW
   with the two include files that make sure to set and reset the memory alignment to
   what LabVIEW expects for the current platform */
#include "lv_prolog.h"
typedef struct
{
    int32 cnt;
    LStrHandle elm[];
} **LStrArrayHandle;
#include "lv_epilog.h"

/* define a typecode that depends on the bitness of the platform to indicate the pointer size */
#if IsOpSystem64Bit
#define uPtr uQ
#else
#define uPtr uL
#endif

MgErr EXPORT_API iir_get_serial_numbers(LStrArrayHandle *arr)
{
    MgErr err = mgNoErr;
    LStrHandle *pH = NULL;
    int len, i, n = (*arr) ? (**arr)->cnt : 0;
    IIR_USB_RELAY_DEVICE_INFO_T *ptr, *deviceInfo = iir_usb_relay_device_enumerate();

    /* This only works reliably if there is guaranteed that the deviceInfo linked list
       won't change in the background while we are in this function! */
    for (i = 0, ptr = deviceInfo; ptr; ptr = ptr->next, i++)
    {
        /* Resize the array handle only in power of 2 intervals to reduce the potential
           overhead for resizing and reallocating the array buffer every time! */
        if (i >= n)
        {
            if (n)
                n = n << 1;
            else
                n = 8;
            err = NumericArrayResize(uPtr, 1, (UHandle*)arr, n);
            if (err)
                break;
        }
        len = (int32)strlen(ptr->serial_number);
        pH = (**arr)->elm + i;
        err = NumericArrayResize(uB, 1, (UHandle*)pH, len);
        if (!err)
        {
            MoveBlock(ptr->serial_number, LStrBuf(**pH), len);
            LStrLen(**pH) = len;
        }
        else
            break;
    }
    iir_usb_relay_device_free_enumerate(deviceInfo);

    /* If we did not find any device AND the incoming array was empty it may be NULL as
       this is the canonical empty array value in LabVIEW. So check that we have not such
       a canonical empty array before trying to do anything with it! It is valid to return
       a valid array handle with the count value set to 0 to indicate an empty array! */
    if (*arr)
    {
        /* If the incoming array was bigger than the new one, make sure to deallocate
           superfluous strings in the array! This may look superstitious but is a very valid
           possibility as LabVIEW may decide to reuse the array from a previous call to this
           function in any Call Library Node instance! */
        n = (**arr)->cnt;
        for (pH = (**arr)->elm + (n - 1); n > i; n--, pH--)
        {
            if (*pH)
            {
                DSDisposeHandle(*pH);
                *pH = NULL;
            }
        }
        (**arr)->cnt = i;
    }
    return err;
}
```

DLL Linked List To Array of Strings

Rolf Kalbermatter replied to GregFreeman's topic in Calling External Code

uQ is the LabVIEW typecode for an unsigned 8 byte integer (uInt64). uL is the typecode for a uInt32. These are the sizes of a pointer in the respective environment, and a LabVIEW handle is a pointer! You are right of course. That was a typo! But the real typo is in the declaration: LStrHandle *pH = NULL; The rest of the code is meant to have this variable be a reference to the handle, not the handle itself.

DLL Linked List To Array of Strings

Rolf Kalbermatter replied to GregFreeman's topic in Calling External Code

A string array is simply an array handle of string handles! Sounds clear, doesn't it? 😄 In reality we didn't just arbitrarily create this data structure; LKSH simply defined it knowing how a LabVIEW array of string handles is defined. You can actually get this definition from LabVIEW by creating the Call Library Node with the parameter configured to Adapt to Type, Pass Array Handle Pointer, then right clicking on the Call Library Node and selecting "Create .c file". LabVIEW then creates a C file with all datatype definitions and an empty function body.

DLL Linked List To Array of Strings

Rolf Kalbermatter replied to GregFreeman's topic in Calling External Code

That entirely depends on the implementation. If the API just returns a pointer to a globally maintained list then there is no way to make this safe unless: 1) It only ever updates this list when calling this function, just before returning the first element pointer. This means that updating this list because of OS events for unplugging devices is not a safe option. 2) It provides some locking such that getFirstDeviceInfo() acquires a lock and you have to unlock the list after you are done, for instance by calling a function unlockDeviceInfo() or similar. Another option is that the API returns a newly allocated list and you need to call freeDeviceInfo() or a similar function every time afterwards to deallocate the entire list. If any of these is true you SHOULD be safe, otherwise there is no way to make it safe.
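To illustrate option 2, here is a minimal sketch of what such a locking API could look like inside the library. It is not the real driver code: the `DeviceInfo` type, the globals and `addDevice()` are invented for the example, and it assumes POSIX threads are available. getFirstDeviceInfo() and unlockDeviceInfo() are just the placeholder names used above.

```c
#include <pthread.h>
#include <stddef.h>

/* Hypothetical list node, only for this sketch */
typedef struct DeviceInfo {
    const char *serial_number;
    struct DeviceInfo *next;
} DeviceInfo;

static pthread_mutex_t g_list_lock = PTHREAD_MUTEX_INITIALIZER;
static DeviceInfo *g_devices = NULL;  /* only touched while holding g_list_lock */

/* Acquires the lock and returns the list head.
   The caller MUST call unlockDeviceInfo() when done walking the list. */
static DeviceInfo *getFirstDeviceInfo(void)
{
    pthread_mutex_lock(&g_list_lock);
    return g_devices;
}

static void unlockDeviceInfo(void)
{
    pthread_mutex_unlock(&g_list_lock);
}

/* What a hot-plug handler would do: it takes the same lock, so it can
   never modify the list while a reader is in the middle of walking it. */
static void addDevice(DeviceInfo *info)
{
    pthread_mutex_lock(&g_list_lock);
    info->next = g_devices;
    g_devices = info;
    pthread_mutex_unlock(&g_list_lock);
}

/* Example reader: walks the list strictly between lock and unlock. */
static size_t countDevices(void)
{
    size_t n = 0;
    DeviceInfo *p;

    for (p = getFirstDeviceInfo(); p; p = p->next)
        n++;
    unlockDeviceInfo();
    return n;
}
```

The important part is that every code path touching the list goes through the same lock, including the one that reacts to OS plug and unplug events.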

DLL Linked List To Array of Strings

Rolf Kalbermatter replied to GregFreeman's topic in Calling External Code

There is a serious problem with this if you ever intend to compile this code for 64 bit. Then alignment comes into play (LabVIEW 32 bit uses packed data structures but LabVIEW 64 bit uses default alignment), so the array of handles requires sizeof(int32) + 4 + n * sizeof(LStrHandle) bytes. More universally it is really:

```c
#define RndToMultiple(nDims, elmSize) \
    (((((nDims) * sizeof(int32)) + (elmSize) - 1) / (elmSize)) * (elmSize))

#if IsOpSystem64Bit || OpSystem == Linux /* probably also || OpSystem == MacOSX */
#define ArrayHandleSize(nDims, nElms, elmSize) (RndToMultiple(nDims, elmSize) + (nElms) * (elmSize))
#else
#define ArrayHandleSize(nDims, nElms, elmSize) ((nDims) * sizeof(int32) + (nElms) * (elmSize))
#endif
```

But NumericArrayResize() takes care of these alignment troubles for the platform you are running on! Personally I solve this like this instead:

```c
#include "extcode.h"
#include <string.h>

/* Make sure to wrap any data structure definitions that are passed from and to LabVIEW
   with the two include files that make sure to set and reset the memory alignment to
   what LabVIEW expects for the current platform */
#include "lv_prolog.h"
typedef struct
{
    int32 cnt;
    LStrHandle elm[];
} **LStrArrayHandle;
#include "lv_epilog.h"

/* define a typecode that depends on the bitness of the platform to indicate the pointer size */
#if IsOpSystem64Bit
#define uPtr uQ
#else
#define uPtr uL
#endif

MgErr iir_get_serial_numbers(LStrArrayHandle *strArr)
{
    MgErr err = mgNoErr;
    LStrHandle *pH = NULL;
    deviceInfo_t *ptr, *deviceInfo = getFirstDeviceInfo();
    int len, i = 0, n = (*strArr) ? (**strArr)->cnt : 0;

    /* This only works reliably if there is guaranteed that the deviceInfo linked list
       won't change in the background while we are in this function! */
    for (ptr = deviceInfo; ptr; ptr = ptr->next, i++)
    {
        /* Resize the array handle only in power of 2 intervals to reduce the potential
           overhead for resizing and reallocating the array buffer every time! */
        if (i >= n)
        {
            if (n)
                n = n << 1;
            else
                n = 8;
            err = NumericArrayResize(uPtr, 1, (UHandle*)strArr, n);
            if (err)
                break;
        }
        len = (int32)strlen(ptr->serial_number);
        pH = (**strArr)->elm + i;
        err = NumericArrayResize(uB, 1, (UHandle*)pH, len);
        if (!err)
        {
            MoveBlock(ptr->serial_number, LStrBuf(**pH), len);
            LStrLen(**pH) = len;
        }
        else
            break;
    }
    if (deviceInfo)
        freeDeviceInfo(deviceInfo);

    /* If we did not find any device AND the incoming array was empty it may be NULL as
       this is the canonical empty array value in LabVIEW. So check that we have not such
       a canonical empty array before trying to do anything with it! It is valid to return
       a valid array handle with the count value set to 0 to indicate an empty array! */
    if (*strArr)
    {
        /* If the incoming array was bigger than the new one, make sure to deallocate
           superfluous strings in the array! This may look superstitious but is a very valid
           possibility as LabVIEW may decide to reuse the array from a previous call to this
           function in any Call Library Node instance! */
        n = (**strArr)->cnt;
        for (pH = (**strArr)->elm + (n - 1); n > i; n--, pH--)
        {
            if (*pH)
            {
                DSDisposeHandle(*pH);
                /* Clean out the handle pointer to indicate it was disposed */
                *pH = NULL;
            }
        }
        /* The array now contains exactly the i strings that were filled in */
        (**strArr)->cnt = i;
    }
    return err;
}
```

This is untested code but should give an idea!

-

In the case of the libraries that I contributed to OpenG, I tried to add all the names to the copyright notice who provided more than a trivial bug fix. I also happened to add my name to a few VIs in other OpenG packages when I felt it was more than a trivial bug fix.

-

Should I abandon LVLIB libraries?

Rolf Kalbermatter replied to drjdpowell's topic in LabVIEW General

Well there could be two that apply! "Killing me softly" and "Ready or Not, here I come you can't hide" 😀 -

Should I abandon LVLIB libraries?

Rolf Kalbermatter replied to drjdpowell's topic in LabVIEW General

The upgraded LLB almost certainly never ever will happen. The lvclassp or whatever it would be called probably neither, because you can basically do that today by wrapping one or more lvclasses into a lvlib and then turning that into a lvlibp. While these single file containers are all an interesting feature, they have many potential troubles as can be seen with lvlibp. Some are unfortunate and could be fixed with enough effort, others are fundamental problems that are hard to almost impossible to really get right. Even Microsoft has been basically unable to plug an archive system like a ZIP archive into its file explorer in a way that feels fully natural and doesn't limit all kinds of operations that a user would expect to be able to do in a normal directory. I'm not saying it's impossible, although the Windows Explorer file system extension interface is basically a bunch of different COM interfaces that are both hard to use right and incomplete and limited in various ways. A bit of a bolted-on extension with more extensions bolted on at the side whenever the developers found they needed a new feature. It works most of the time, but even the Microsoft ZIP extension has weird issues from using the COM interfaces in certain ways that were not originally intended. It works well enough that nobody has to spend more time on it to fix real bugs, or to axe the feature and let users rely on external archive viewers like 7-Zip, but it is far from seamless.

At least for classic LabVIEW I think the time has come where NI won't spend any time on adding such features anymore. They will limit future improvements to features that can be relatively easily developed for NXG and then backported to classic LabVIEW with little effort. Something like a new file format is not such a thing. It would require a rewrite of substantial parts of the current code, and they are pretty much afraid of touching the existing code substantially, as it is in large parts very old code with programming paradigms that are completely the opposite of what they use nowadays with classes and other modern C++ programming features. Basically the old code was written in standard C in ways that were meant to fit into the constrained memory of those days, with various things that defy modern programming rules completely. Was it wrong? No, it was what was necessary to get it to work on the hardware that was available then, without waiting another 10 years to work on the architecture and hope to get hardware able to run a modern system, with programming paradigms that were used nowhere at that time.

-

In general you are working here with unreleased, undocumented features in LabVIEW. You should read the Rusty Nails in Attics thread sometime. Basically LabVIEW has various areas that are like an attic. There exist experimental parts, unfinished features and other things in LabVIEW that were never meant for public consumption, either because they are not finished and tested, are an aborted experiment, or are a quick and dirty hack for a tool required for NI internal use. There are ways to access some of them, and the means to do so have been published many times. NI does not forbid anyone to use them although they do not advertise it. Their stance on them is: if you want to use it, then do, but don't come to us screaming because you stepped on a rusty nail in that attic! The fact that the node has a dirty brown header is one indication that it is a dirty feature.

-

Yep I know! On Linux it is a file ID, except there are at least two different types of identifiers: one is a socket-like one and one is a POSIX file IO one. Mac is nasty. For 32 bit it seemed to be a Carbon API FS number, later changed to the POSIX file IO number for 64 bit. Not sure they changed anything for 32 bit too. The differences and uncertainties make it not a safe bet to just ASSume things and HOPE it will always remain like this, sorry.

But nooooo, a file refnum is NOT a Windows file handle. Please repeat after me: IT IS NOT! You need the file manager function FRefNumToFD() to retrieve the underlying file descriptor handle.

The File primitives do quite a bit more than just calling the corresponding Windows API function. A lot sits in the path resolution, where things like shortcuts will automatically be resolved. It won't do anything special about symlinks, and all the Windows APIs except CreateFile() with special flags and GetFileAttributes() are made by Microsoft explicitly to not do special things with symlinks, in the name of maximum backwards compatibility. You need to call special functions to deal with symlinks, and some are still not officially available, such as reading the target of a symlink explicitly.

-

It's called bindings to non-native libraries and functionality. It's a standard problem in every programming environment. The perceived difficulty always has a direct relation to the distance between the programming paradigm of the calling environment and that of the callee. In C it is almost nonexistent, since you have to bother about memory management, thread management, etc. no matter what you try to call. In higher level languages with a managed environment like LabVIEW or .Net, it seems a lot more complicated. It isn't really, but the difference from what you normally have to do in the calling environment is much bigger when calling such non-native entities.

And each environment has of course a few special subtleties. The one currently causing me a lot of extra work for the OpenG ZIP library is the fact that LabVIEW always has assumed and still does assume that STRING==BYTEARRAY and that encoding does not exist in a LabVIEW platform. A ZIP file can contain encoding in the stored file names and nowadays regularly does. So the strings that are returned as filenames in an archive need to be treated with care. Except when I then try to turn one into a LabVIEW path to create the file, all that care goes down the drain, as the file path either will alter the name to something else or possibly even attempt to create a file with invalid characters.

So the solution is to replace Open File, Create File and Create Directory, along with some other functions (like Delete File), with my own versions that can handle the paths properly. Great idea, except that LabVIEW does not document and hence does not guarantee how the underlying file system object is mapped into a file refnum. So in order to be safe here I also have to create the Read File, Write File, Close File, File Size and similar functions. All doable, but serious work. I'm basically rewriting a considerable amount of the LabVIEW File Manager and Path Manager functionality.

-

Sssssht! My first version was without that sequence structure and I was for a brief moment wondering if maybe my ability to do the pointer juggling had failed me. After looking over it once more I figured the problem must be elsewhere and then it struck me that the control assignment was happening right after the NumericArrayResize() call. LabVIEW has a preference to do terminal assignments always as soon as possible.

-

That's just to force execution of the copying of the array size before assigning the handle to the control. Looks strange when you have created an array with elements but the control shows an empty array. For use as subVI it wouldn't really matter as by the time the subVI returns the array it is correctly sized but when you test run it from the front panel it looks weird.

-

Well it is when you look at how the equivalent looks in C 😄

```c
MgErr AllocateArray(LStrHandle *pHandle, size_t size)
{
    MgErr err = NumericArrayResize(uB, 1, (UHandle*)pHandle, size);
    if (size && !err)
        LStrLen(**pHandle) = (int32)size;
    return err;
}
```

Very simple! The complexity comes from what in C is that easy LStrLen() macro, which does some pointer voodoo that is tricky to reproduce in LabVIEW.
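To make that pointer voodoo a bit more concrete, here is a standalone sketch that mimics the shape of a string handle with plain malloc(), without extcode.h. Everything prefixed with Fake is invented for this example and only assumed to resemble the real types; the real handles are managed by the LabVIEW memory manager, not by malloc(). Unlike the function above it also sets the length when the size is 0.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Stand-in for a LabVIEW string: an int32 length followed by the bytes,
   with no terminating NULL character. A handle is a pointer to a pointer. */
typedef struct {
    int32_t cnt;
    unsigned char str[1];
} FakeLStr, *FakeLStrPtr, **FakeLStrHandle;

#define FakeLStrLen(p) ((p)->cnt)   /* same idea as LStrLen(): the length field */
#define FakeLStrBuf(p) ((p)->str)   /* same idea as LStrBuf(): the first byte   */

/* Makes *h a handle with room for `size` bytes and records that length.
   Returns 0 on success, -1 when memory could not be allocated. */
static int fake_allocate_string(FakeLStrHandle *h, size_t size)
{
    size_t bytes = offsetof(FakeLStr, str) + (size ? size : 1);
    FakeLStrPtr block;

    if (!*h) {
        /* No handle yet: create the master pointer first */
        *h = (FakeLStrHandle)malloc(sizeof(FakeLStrPtr));
        if (!*h)
            return -1;
        **h = NULL;
    }
    block = (FakeLStrPtr)realloc(**h, bytes);
    if (!block)
        return -1;
    **h = block;                       /* the handle stays valid even if the block moved */
    FakeLStrLen(**h) = (int32_t)size;  /* the equivalent of LStrLen(**pHandle) = size */
    return 0;
}

/* Copies a C string into the handle, without its terminating NULL. */
static int fake_set_string(FakeLStrHandle *h, const char *s)
{
    size_t len = strlen(s);

    if (fake_allocate_string(h, len) != 0)
        return -1;
    memcpy(FakeLStrBuf(**h), s, len);
    return 0;
}

static void fake_dispose_string(FakeLStrHandle h)
{
    if (h) {
        free(*h);
        free(h);
    }
}
```

The two dereferences in `FakeLStrLen(**h)` are the part that has no natural counterpart on a LabVIEW diagram.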

-

That was my first thought too 😆. But!!!! The Call Library Node only allows for Void, Numeric and String return types, and the String is restricted to C String Pointer and Pascal String Pointer. The String Handle type is not selectable. -> Bummer! And the logic with the two MoveBlock functions to tell the array in the handle what size it actually has needs to be done anyway. Otherwise the handle might be resized automatically by LabVIEW at various places when passing through Array nodes, such as the Replace Array Subset node. Also, Replace Array Subset would not copy data into an array beyond the indicated array size either. Handle size and array size are not strictly coupled beyond the obvious requirement handle size >= dimensions * sizeof(int32) + array size * array element size
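As a rough illustration of that size requirement for a 1D array of pointer-sized elements, here is a small standalone sketch. The struct and function names are invented for the example, and the two layouts only approximate what LabVIEW uses: packed structures on 32-bit Windows and default alignment on 64-bit, where padding can appear between the count and the first element. `#pragma pack` is a compiler extension, but GCC, Clang and MSVC all accept it.

```c
#include <stddef.h>
#include <stdint.h>

/* 1D array of pointer-sized elements with default alignment */
typedef struct {
    int32_t cnt;
    void *elm[1];
} NaturalArray;

/* The same array with byte packing, so no padding after cnt */
#pragma pack(push, 1)
typedef struct {
    int32_t cnt;
    void *elm[1];
} PackedArray;
#pragma pack(pop)

/* Minimum number of bytes a handle needs in order to hold n elements */
static size_t natural_array_size(size_t n)
{
    return offsetof(NaturalArray, elm) + n * sizeof(void *);
}

static size_t packed_array_size(size_t n)
{
    return offsetof(PackedArray, elm) + n * sizeof(void *);
}

/* The handle may be larger than needed, but never smaller */
static int handle_is_big_enough(size_t handle_bytes, size_t n)
{
    return handle_bytes >= natural_array_size(n);
}
```

On a typical 64-bit compiler `natural_array_size(0)` is larger than `packed_array_size(0)` because of the padding, which is exactly the kind of detail NumericArrayResize() hides from you.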

-

Nope, sorry. Still, trying to find out whether a memory allocation might succeed by looking at whatever memory statistics might be available can never be a foolproof approach. It has the classical race that between checking if you can and doing it, the statistics might no longer be current and you still fail. The only foolproof approach is to actually do the allocation and deal with its failure.

Of course for memory allocations that is always tricky, as seen here. We want to read in a 900MB file and want to be sure we can read it in. Checking if we can and then trying can still fail. We have to allocate the entire buffer beforehand and then copy the file piece by piece into this buffer. Another approach might be a memory mapped file, but trying to trick the LabVIEW built-in functions into using such a beast is an entire exercise of its own. You basically invert the complete execution flow from calling a function that returns some data, to first preparing a buffer and handing it to a function that uses it to eventually return that data.

If you have ever dealt with streams (in Java, or in .Net, which took not only the whole stream concept verbatim from Java) you will know this problem. It's super handy and normally quite easy but internally quite complex. And you always end up with two distinct types that can't be easily connected without some intermediate proxy: Input Streams and Output Streams. And such a proxy will always involve copying data from one stream to the other, adding significant overhead to the originally very simple and seemingly beautiful idea.

Now, one solution in hindsight that would be beneficial in the OP's case would be if those LabVIEW low level functions returned an error 2 or so in these cases rather than throwing up a dialog that gives you only the option to quit, crash or puke. With the error cluster now present almost everywhere and its consistent handling throughout LabVIEW, this would seem the logical choice. Back when LabVIEW was invented however, error clusters were not even thought of yet, and error handling for things like out of memory conditions was an end of story condition in almost all cases anyhow, since once that happened LabVIEW would almost surely run into other out of memory conditions when trying to handle the previous error conditions. When LabVIEW for Windows came out, most users found 8MB of memory an outrageously expensive requirement and were insisting that LabVIEW should be able to fly to the moon and back with the 4MB it was claiming to work with in the marketing material.
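Outside of LabVIEW, the "allocate first, then copy piece by piece" idea from above could look roughly like this in plain C. It is only a sketch: the function name, the chunk size and the error codes are arbitrary choices for the example, and using ftell() limits it to files that fit into a long.

```c
#include <stdio.h>
#include <stdlib.h>

#define READ_CHUNK (1024u * 1024u)  /* arbitrary piece size for this sketch */

/* Reads a whole file into one buffer that is allocated up front.
   Returns 0 on success, -1 if the file cannot be opened or sized,
   -2 if the allocation fails, -3 if reading fails part way.
   On success the caller owns *data and must free() it. */
static int read_whole_file(const char *path, unsigned char **data, size_t *size)
{
    FILE *f = fopen(path, "rb");
    unsigned char *buf;
    size_t done = 0, total;
    long len;

    *data = NULL;
    *size = 0;
    if (!f)
        return -1;
    if (fseek(f, 0, SEEK_END) != 0 || (len = ftell(f)) < 0 ||
        fseek(f, 0, SEEK_SET) != 0) {
        fclose(f);
        return -1;
    }
    total = (size_t)len;

    /* Do the allocation itself instead of guessing from memory statistics.
       If it fails we know right here and can report a normal error. */
    buf = (unsigned char *)malloc(total ? total : 1);
    if (!buf) {
        fclose(f);
        return -2;
    }

    /* Copy the file piece by piece into the buffer we already own */
    while (done < total) {
        size_t chunk = total - done;
        size_t got;

        if (chunk > READ_CHUNK)
            chunk = READ_CHUNK;
        got = fread(buf + done, 1, chunk, f);
        if (got == 0)
            break;
        done += got;
    }
    fclose(f);
    if (done != total) {
        free(buf);
        return -3;
    }
    *data = buf;
    *size = total;
    return 0;
}
```

The out of memory case becomes an ordinary return code that the caller can handle, which is the behavior wished for above.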