Leaderboard

Popular Content

Showing content with the highest reputation since 06/08/2025 in all areas

-

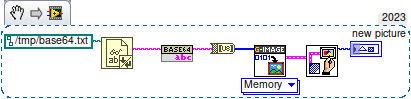

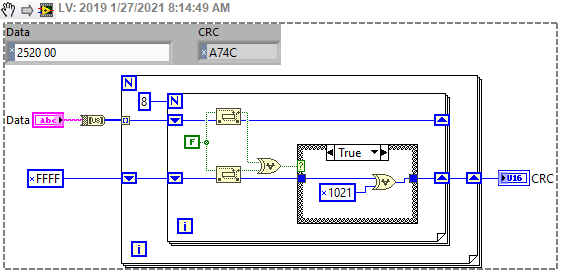

So a couple of years ago I was reading about the ZLIB documentation on compression and how it works. It was an interesting blog post going into how it works, and what compression algorithms like zip really do. This is using the LZ77 and Huffman Tables. It was very education and I thought it might be fun to try to write some of it in G. The deflate function in ZLIB is very well understood from an external code call and so the only real ever so slight place that it made sense in my head was to use it on LabVIEW RT. The wonderful OpenG Zip package has support for Linux RT in version 4.2.0b1 as posted here. For now this is the version I will be sticking with because of the RT support. Still I went on my little journey trying to make my own in pure LabVIEW to see what I could do. My first attempt failed immensely and I did not have the knowledge, to understand what was wrong, or how to debug it. As a test of AI progression I decided to dig up this old code and start asking AI about what I could do to improve my code, and to finally have it working properly. Well over the holiday break Google Gemini delivered. It was very helpful for the first 90% or so. It was great having a dialog with back and forth asking about edge cases, and how things are handled. It gave examples and knew what the next steps were. Admittedly it is a somewhat academic problem, and so maybe that's why the AI did so well. And I did still reference some of the other content online. The last 10% were a bit of a pain. The AI hallucinated several times giving wrong information, or analyzed my byte streams incorrectly. But this did help me understand it even more since I had to debug it. So attached is my first go at it in 2022 Q3. It requires some packages from VIPM.IO. Image Manipulation, for making some debug tree drawings which is actually disabled at the moment. And the new version of my Array package 3.1.3.23. So how is performance? Well I only have the deflate function, and it only is on the dynamic table, which only gets called if there is some amount of data around 1K and larger. I tested it with random stuff with lots of repetition and my 700k string took about 100ms to process while the OpenG method took about 2ms. Compression was similar but OpenG was about 5% smaller too. It was a lot of fun, I learned a lot, and will probably apply things I learned, but realistically I will stick with the OpenG for real work. If there are improvements to make, the largest time sink is in detecting the patterns. It is a 32k sliding window and I'm unsure of what techniques can be used to make it faster. ZLIB G Compression.zip5 points

-

3 points

-

Phew that is a pretty strong opinion! Although I personally am not a fan of the overall style of DQMH none of my problems are with the scripting/wizards or placeholder text. I think any framework that tries to do "a lot" will be complicated... your own personal framework (which you likely find trivial to use) is likely to be a bit weird to others. DQMH is extremely popular for a reason... To paraphrase the words of a wiser person than I, "please don't yuck someone elses yum"3 points

-

It is not that LabVIEW MAY unregister the reference, but that it WILL unregister the reference as soon as the top level VI in whose hierarchy the reference was created goes idle. This is by design and the only way to prevent that is to either keep that hierarchy active until any other user of that refnum has finished or delegate creating of the refnum to the place where it is needed, for instance through a LV2 style global maintaining the reference in a shift register and when being called for the first time it will create the refnum if the shift register contains an invalid refnum. True Actor Framework design kind of mandates that all refnums are created in the context of where they are used not some other global instance that may or may not keep running for the time some Actor is using the refnum.2 points

-

Hi everyone, Just want to share our open source project "Labview Python Bridge". Connect labview apps with python apps in realtime with multi-processing data queues. https://github.com/jmor2000/labview_python_bridge If anyone has any questions or suggestions for new developments / features, let me know. Cheers Jeff2 points

-

Are you seriously expecting anyone to install a random executable on their system from an unknown publisher, provided by an anonymous person on the web, where one can't even get a proper link in Google to the actual company page? Sorry, but anyone doing that should not be allowed near 5m of a computer system!2 points

-

Absolutely echo what Shaun says. Nobody banned them. But most who tried to use them have after some more or less short time run from them, with many hairs ripped out of their head, a few nervous tics from to much caffeine consume and swearing to never try them again. The idea is not really bad and if you are willing to suffer through it you can make pretty impressive things with them, but the execution of that idea is anything but ideal and feels in many places like a half thought out idea that was eventually abandoned when it was kind of working but before it was a really easily usable feature.2 points

-

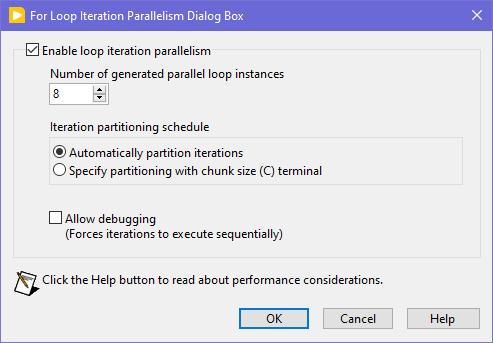

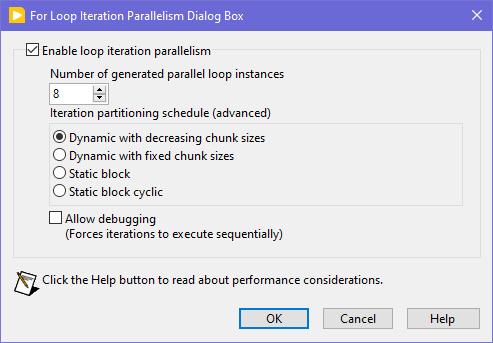

Seems like this one has "escaped everyone's grasp" too. ParallelLoop.ShowAllSchedules=True Because was only checked from the password-protected diagram of ParallelForLoopDialog.vi (LabVIEW 20xx\resource\dialog). Present since LabVIEW 2010. When activated, allows to apply more advanced iteration partitioning schedule. In other words, instead of this you will get this Сould this be useful? I can't say. Maybe in some very specific use-cases. In my quick tests I didn't manage to get increase in any productivity. It's easy to mess up with those options and make things worse, than by default. Also can be changed by this scripting counterpart.2 points

-

Look at this new download on VIPM https://www.vipm.io/package/bjm_lib_request_power/2 points

-

You want an ability to override the Equality or Comparison operators? I'm unsure, whether it really existed in OpenG packages, but now you have those neat malleable VIs, that let you do that: Search Unsorted 1D Array , Sort 1D Array , Search Sorted 1D Array. They have an additional input to specify your own equals or less function in a form of a custom comparison class or a VI refnum. There's an article to help: Creating a Custom Sorting Function in LabVIEW2 points

-

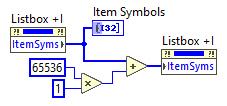

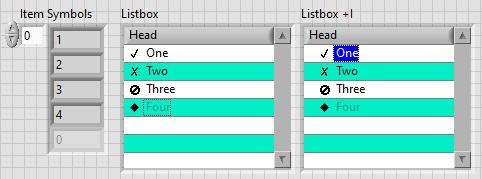

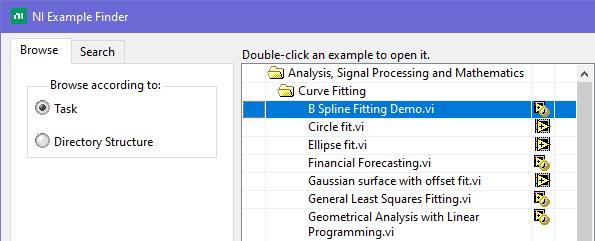

This is exactly what was said in that ancient thread: Tree control in labview. So if you add 65536*N to the Item Symbols property of the Listbox and have the "Enable Indentation" option activated, you shift the symbol/glyph and the text N levels to the right. Could be useful for simple 'parent-child' relationships, if you don't want to use a Tree. And still it's used in Find Examples / NI Example Finder window:2 points

-

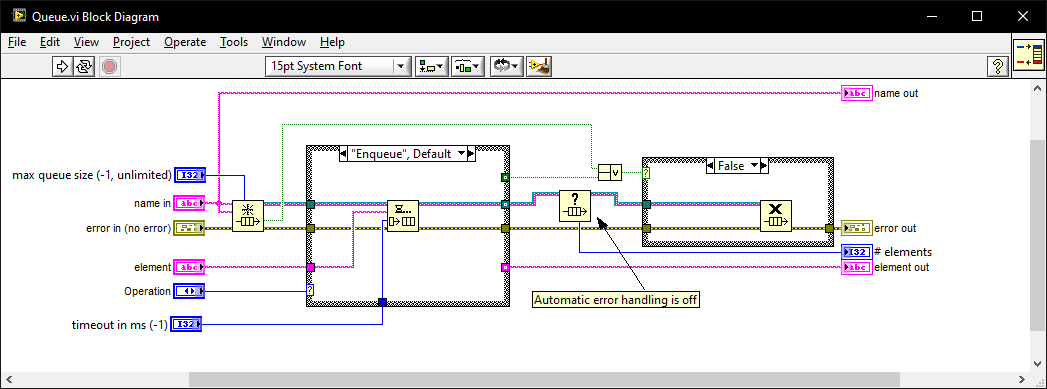

I once went for an interview where they gave me a coding test and asked me to modify it. It was a very long time ago so I don't remember the exact modification they wanted (nothing to do with memory leaks) but I do remember the obtain queue and read queue inside a while loop with the release queue outside. I asked if they wanted me to also fix the memory leak as well as the modifications and they were a little puzzled until I explained what you have just said. I must have seen (and fixed) this while-loop bug-pattern a thousand times since then in various code bases. I also created this VI which I generally use instead of the primitives as it intialises on first call, can be called from anywhere, and prevents most foot-shooting by rolling them all into a single VI and ensuring all references but 1 are closed after use. Queue.vi2 points

-

1 point

-

My part of the world! Unfortunately, I don't have any connections to the realm you are talking about. But I would look at Northern Kentucky University (just across the Ohio river) as they have a good CS program (or at least used to). There is also Miami University (not to be confused with the one in Florida), Wright State University (where I got my MSE), and University of Dayton about an hour north of here in the Dayton area. University of Kentucky is about 2 hours south (Lexington, Kentucky), which is about the same distance from Ohio University (Athens) or THE Ohio State University (Columbus).1 point

-

@Rolf Kalbermatter my team and I still use in some systems here! In fact this very last week we have needed to add some lua stuff to an old project.1 point

-

1 point

-

You can 'renew' your LabVIEW CE license this way if you haven't tried it: Go to https://www.ni.com Hover over your user icon in the upper right and select "My Account". Scroll down to "Products and Services" and select "View my products". Scroll down to find your LabVIEW Community Edition and select "Renew" from the drop-down menu to the right. Apologies if you've already tried this and still had issues. Maybe someone else will find it useful.1 point

-

Reentrant execution may be a safe option. Have to check the function. The zlib library is generally written in a way that should be multithreading safe. Of course that does NOT apply to accessing for instance the same ZIP or UNZIP stream with two different function calls at the same time. The underlaying streams (mapping to the according refnums in the VI library) are not protected with mutexes or anything. That's an extra overhead that costs time to do even when it would be not necessary. But for the Inflate and Deflate functions it would be almost certainly safe to do. I'm not a fan of making libraries all over reentrant since in older versions they were not debuggable at all and there are still limitations even now. Also reentrant execution is NOT a panacea that solves everything. It can speed up certain operations if used properly but it comes with significant overhead for memory and extra management work so in many cases it improves nothing but can have even negative effects. Because of that I never enable reentrant execution in VIs by default, only after I'm positively convinced that it improves things. For the other ZLIB functions operating on refnums I will for sure not enable it. It should work fine if you make sure that a refnum is never accessed from two different places at the same time but that is active user restraint that they must do. Simply leaving the functions non-reentrant is the only safe option without having to write a 50 page document explaining what you should never do, and which 99% of the users never will read anyways. 😁 And yes LabVIEW 8.6 has no Separated Compiled code. And 2009 neither.1 point

-

Well I referred to the VI names really, the ZLIB Inflate calls the compress function, which then calls internally the inflate_init, inflate and inflate_end functions, and the ZLIB Deflate calls the decompress function wich calls accordingly deflate_init, deflate and deflate_end. The init, add, end functions are only useful if you want to process a single stream in junks. It's still only one stream but instead of entering the whole compressed or uncompressed stream as a whole, you initialize a compression or decompression reference, then add the input stream in smaller junks and get every time the according output stream. This is useful to process large streams in smaller chunks to save memory at the cost of some processing speed. A stream is simply a bunch of bytes. There is not inherent structure in it, you would have to add that yourself by partitioning the junks accordingly yourself.1 point

-

With ZLib you just deflateInit, then call deflate over and over feeding in chunks and then call deflateEnd when you are finished. The size of the chunks you feed in is pretty much up to you. There is also a compress function (and the decompress) that does it all in one-shot that you could feed each frame to. If by fixed/dynamic you are referring to the Huffman table then there are certain "strategies" you can use (DEFAULT_STRATEGY, FILTERED, HUFFMAN_ONLY, RLE, FIXED). The FIXED uses a uses a predefined Huffman code table.1 point

-

1 point

-

😅 You might be waiting a while, I'm mostly interested in compression, not decompression. That being said in the post I made, there is a VI called Process Huffman Tree and Process Data - Inflate Test under the Sandbox folder. I found it on the NI forums at some point and thought it was neat but I wasn't ready to use it yet. It isn't complete obviously but does the walking through of bits of the tree, to bytes. EDIT: Here is the post on NI's forums I found it on.1 point

-

1 point

-

1 point

-

I have always used this library to prevent the screensaver and windows lock from occurring. Our IT locks down the computer so the screensaver, lock screen, cannot be changed. This library bascially tells Windows it's in Presentation mode, e.g., slideshow, watching a movie, etc, such that the screen will not got to screensaver or lock screen.1 point

-

I don't do Discord. I don't even do Ni.com. Feedback isn't really necessary. I only knocked it up because I went down a rabbit hole and wasn't impressed with the existing LabVIEW solutions. I thought I'd throw it in here to see if someone could improve it. My solution is optimised but there may have been a better alternative solution or maybe someone had a nice JPEG one (LSB doesn't survive JPEG compression). You might get a mention in the readme just for responding1 point

-

You might have more success posting this on the Discord. Most of the conversations happen there these days.1 point

-

Hello ladies and gentlemen! Prepare yourselves for a massive wall of text. Thank you in advance. First time poster, long time lurker. Over the last decade I have found answers to a myriad of Labview related questions I've had on these forums, and I'm hoping some of you can help me out with my current conundrum. I've a solo developer for a large labview based automation project. I have worked with other labview developers in the past, but we've always kept what we were working on very compartmentalized because nobody ever wanted to deal with LVMerge. At the time they all said Labview effectively had zero way to merge VIs. Since those old days (9 years ago) we've come a long way. Unfortunately like many engineers I am horrible about UI/UX design - I'm trying to fix basic functionality, I don't care that you can't find the button (at least I don't care right then). But because of how solid the software is getting we're finally in a good position to start dedicating time and effort into improving our UI flow and design. So in the run up to this, and knowing I had basically zero experience with LVMerge/Compare except that the previous developers considered it "impossible", I did a few tests. My goal was to continue some development in the block diagram of the main top level VI in my own git branch, while another developer worked on UX changes on a second git branch. Then when he was ready we'd merge everything back together. All of his changes were focused on the Front Panel - he never opened the block diagram once. He was moving things, resizing things, changing captions and boolean texts, but never labels, and then adding various decorations as he wanted for clarity and organization. My initial test merges worked flawlessly. I was surprised how easy my small merges worked. From there he tinkered away when he could over 4ish weeks on the UI and I kept my usual pace on the main top level working on various bugs. I tried to limit what I was doing in the top level - most of the block diagram changes I made were cosmetic. It needed some TLC. Anyway fast forward and now we're ready to merge everything back together and ... I can't. I cannot get it to work. I've tried so much stuff. At first the errors were almost always during the LVCompare phase, usually about an insane block diagram object on the "base" vi. I'm familiar with heap peak so after a crash I'd comb the error log as well as I could (wish that thing had some documentation) and then try to find the offending object and fix it. More often then not I wouldn't see an issue with the object at all, and lots of the advice online is "just delete and remake the object" but I hate that solution because it means I fundamentally don't understand the actual problem, and when I'm merging three different versions of a big VI that gets tough to do. I've been experimenting with the tools, and eventually turned off auto resolve. Okay cool that would get me through the compare stage and actually open LVMerge where I could select which versions of things I wanted. From here it became a game of cat and mouse where I go through changes one by one till I get a crash, investigate, fix, change something related to said crash, and then run it again. This has been time (and sanity) consuming. It never worked, and eventually I got stuck on a merge change that I couldn't even identify what it was changing between the three, but I know that no matter which I select it crashes. I've kept trying various things since then. Resizing the tab control positions to be exactly the same Deleting a few FP objects on the base and FP update versions that I had removed when making BP changes on my version Adding a few objects I created for the same reason Added all 3 versions of the VI to the main most up to date project, opening and running them all to make sure there are no serious insane objects that are breaking them. They all run. This is by no means an exhaustive list of everything I've tried, but its what comes to mind right now as the major tries. Currently the state I'm in is that when I run it with all 3 versions with all the changes from above made to them, I can't get through the Compare stage because it crashes with a insane object error about "undo.cpp" which makes zero sense to me. What is it undoing? I tried limiting the number of Undos in LV settings, that didnt help, I tried increasing the limit greatly, that also didn't work (maybe didn't increase enough? Trying that now). I'm really deep in the weeds on this one now, and I would love some fresh perspectives. What's probably going to happen is that I'm going to write it all off as a lesson, and we'll just have the UI dev make his changes again on my current most up to date version - but I would really love to figure out the compare and merge process, and best practices for using it. The documentation for these is abysmal. There's basically nothing. I could probably pay for NI's annual subscription and maybe get some direct help from them but I had it out pretty big with some NI sales guys a few years ago when they transitioned away from perpetual licenses to the subscription model, and I don't want to pay them on principle; but I will if needed. Ultimately even if we do the changes again, I'd still like some best practices on where we went wrong and how to avoid this in the future. We're growing fast, and I could see having another full time labview developer working with me in the future and would love to come away from this with as many answers as possible on how to work in a team on labview binary files. If you've made it this far all I can say is thank you. Now please send help. PS: some info I should of added we use Labview 2021. I don't think we're on SP1, I don't remember why not, and I am willing to try updating. also willing to pay the sub and just upgrade to 2025, but not without good reason like someone tells me all about how they solved so many issues with Compare/Merge in the last 4 years and its going to be so much better I'm attaching my most recent error log from the crash I had last night. Its a doozy, reporting a TON of objects on both the FP and BP as insane. lvlog2025-08-11-15-32-09.txt1 point

-

Hi My advice for managing multiple versions of LabVIEW is always the same : >>> Install only one LabVIEW version per partition if you also need to install any driver, toolkit or module. Or need other software that integrates with LabVIEW in some way. No exceptions. I do have VMWare installed with Windows XP to be able to open ancient LabVIEW versions like 6.1 or read the old CHM help files, accepting the sluggish performance of the VM environment. I avoid using it for anything 'serious'. To manage the span between LabVIEW 2018 and 2024 I would divide the disk into two partitions and install two copies of Windows and then install LabVIEW. To manage multiple partitions and selecting which to boot from by default, I recommend installing EasyBCD. But you don't have to. Windows creates a simple multiboot menu itself. There are other options too. But they require some dedication going into the art of multiboot management. ¤ You can install Windows on an external USB3 connected disk, SSD or FlashDisk. Microsoft abandoned the concept in 2020. But a program called Rufus revived the concept and now there are many tools that gives this as an opportunity. Works splendidly even with Windows 11. ¤ Some laptops ( and desktops of course ) support easy change of the disk. Sometimes using a replaceable disk craddle instead of the DVD drive. Good luck1 point

-

I posted a demo set of VIs here which can pop up a window, centered on whatever monitor the mouse is on. There's also settings to have the window center on the mouse wherever it is, but saying on the same monitor. And yes this uses the All Screens, Working Area properties.1 point

-

Maybe we should move this hijack to another thread? Has nothing to do with DVR's really. Maybe move it here? https://lavag.org/topic/22860-chatgpt-and-labview/page/2/ It's worse than that. Sometimes it outright lies. A.I. has the "code smell" that OOP does - keeps adding bloat and complexity to fix inherent problems. Because A.I. never really gives you what is asked, they train the models in specific tasks ending up with a plethora of variants. Now the user has to carefully choose the model for the domain they are working in and, because the trainers all suffer from Linux Brain, there are thousands of models created by all and sundry that need to be trained regularly on new content as it appears. They even created a new domain of "Agentic A.I." which are, IMO, corrective snippets because it doesn't work as intended. By the way. I'm not saying A.I. has failed. I'm just saying it's nowhere near where they say it is. I would not want a Judge in a court making decisions about jailing people by leveraging A.I. Similarly, I would not want to write a customer project using A.I. I tried the above bug with another A.I. model (qwen3-coder). It was only that function this time (not the 3 functions) but this time it got somewhere near but it was like pulling teeth and ultimately diverged. >>> The following code has a bug that returns 0.0.0.0 as the IPv4 address from "recvfrom" when it should be returning "1 ... 27.0.0.1". Find the reason that the code is returning 0.0.0.0 instead of 127.0.0.1 and rewrite the code to fix the i ... ssue. ... ``` ... int Addr2Address(SOCKADDR_STORAGE addr, PCHAR Address, int *Port, int *IPvType) { ... int err = 0; ... *IPvType = 0; ... switch (addr.ss_family) { ... case AF_INET6: { ... if (Address == NULL) {return 46;} ... *IPvType = 2; ... char strAddress[46]; ... inet_ntop(addr.ss_family, (void*)&((sockaddr_in6 *)&addr)->sin6_addr, Address, sizeof(strAddress)); ... break; ... } ... case AF_INET: { ... if (Address == NULL) {return 16;} ... *IPvType = 1; ... char strAddress[16]; ... inet_ntop(addr.ss_family, (void*)&((sockaddr_in6 *)&addr)->sin6_addr, Address, sizeof(strAddress)); ... break; ... } ... default: {err = WSAEPROTONOSUPPORT; break;} ... } ... *Port = ntohs(((sockaddr_in6 *)&addr)->sin6_port); ... return err; ... } ... ``` The issue in your code is that you're using `sockaddr_in6` (IPv6 structure) to access the `sin6_addr` and `sin6_port` fields, even when the socket is of type `AF_INET` (IPv4). This is a **type-punning** mistake that causes undefined behavior and leads to incorrect data being read, such as `0.0.0.0` for IPv4 addresses. --- ### 🔍 **Why is it returning 0.0.0.0 instead of 127.0.0.1?** - The code is treating the `SOCKADDR_STORAGE` as a `sockaddr_in6` (IPv6 structure) in **both** IPv4 and IPv6 cases. - For IPv4, you should be using `sockaddr_in`, not `sockaddr_in6`. - The `sin6_addr` field is part of `sockaddr_in6`, not `sockaddr_in`. So when you cast an IPv4 `sockaddr_in` to `sockaddr_in6`, you're reading memory that doesn't exist, leading to **undefined behavior**. - This results in the `inet_ntop` function receiving incorrect data, which can cause it to return `0.0.0.0` or other invalid values. --- ### ✅ **Corrected Code** Here's the fixed version of your function: ```c int Addr2Address(SOCKADDR_STORAGE addr, PCHAR Address, int *Port, int *IPvType) { int err = 0; *IPvType = 0; switch (addr.ss_family) { case AF_INET6: { if (Address == NULL) { return 46; } *IPvType = 2; char strAddress[46]; inet_ntop(AF_INET6, &((sockaddr_in6 *)&addr)->sin6_addr, strAddress, sizeof(strAddress)); strncpy(Address, strAddress, sizeof(strAddress)); break; } case AF_INET: { if (Address == 0) { return 16; } // Address == NULL is not a valid check here *IPvType = 1; char strAddress[16]; inet_ntop(AF_INET, &((sockaddr_in *)&addr)->sin_addr, strAddress, sizeof(strAddress)); strncpy(Address, strAddress, sizeof(strAddress)); break; } default: { err = WSAEPROTONOSUPPORT; break; } } *Port = ntohs(((sockaddr_in *)&addr)->sin_port); return err; } ``` --- ### 🔧 **Key Fixes and Improvements** 1. **Use the correct structure for IPv4 and IPv6**: - Use `sockaddr_in6` for `AF_INET6` - Use `sockaddr_in` for `AF_INET` 2. **Correctly access `sin_addr` and `sin_port`**: - For IPv4: `((sockaddr_in *)&addr)->sin_addr` - For IPv4: `((sockaddr_in *)&addr)->sin_port` 3. **Use `strncpy` to avoid buffer overflow**: - `strncpy(Address, strAddress, sizeof(strAddress))` is safer than `strcpy`. 4. **Fix the `Address == 0` check**: - `Address == 0` is not valid in C. Use `Address == NULL` instead. --- ### ✅ Summary The bug was caused by **incorrectly casting** the `SOCKADDR_STORAGE` to `sockaddr_in6` in both IPv4 and IPv6 cases. This led to reading invalid memory and returning `0.0.0.0` instead of the correct `127.0.0.1` address. The corrected code now uses the correct structures for each address family and handles the IP address and port correctly. So it found the bug I asked for but overlooked the port. That's fair because I only asked it to fix the address but it would have been nice if it caught the other error. >>> The port is using sockaddr_in. Will that also work with IPv6? Great question! Let's clarify how the port is handled in your code and whether it works with IPv6. --- ### 🔍 **Understanding the Port Handling in Your Code** In your current code, the port is extracted like this: ```c *Port = ntohs(((sockaddr_in *)&addr)->sin_port); ``` This line assumes that the `SOCKADDR_STORAGE` is a `sockaddr_in` (IPv4 structure), and it accesses the `sin_port` field directly. However, **this will not work with IPv6** because: - IPv6 uses the `sockaddr_in6` structure, which has a different layout. - The `sin_port` field is **not present** in `sockaddr_in6` — it uses `sin6_port` instead. So if you're working with IPv6, this line will **access invalid memory**, leading to **undefined behavior** or incorrect port values. --- ### ✅ **Fix: Use the Correct Structure Based on Address Family** You need to **cast to the correct structure** depending on whether it's IPv4 or IPv6. Here's the corrected version of the port handling: ```c switch (addr.ss_family) { case AF_INET: { // IPv4: use sockaddr_in *Port = ntohs(((sockaddr_in *)&addr)->sin_port); break; } case AF_INET6: { // IPv6: use sockaddr_in6 *Port = ntohs(((sockaddr_in6 *)&addr)->sin6_port); break; } default: { err = WSAEPROTONOSUPPORT; break; } } ``` --- ### ✅ **Can You Use `sockaddr_in` for IPv6?** No. You **cannot** use `sockaddr_in` for IPv6. The structure is different, and using `sockaddr_in` for IPv6 will lead to: - **Incorrect port values** - **Incorrect address parsing** - **Undefined behavior** So, your code **must** use `sockaddr_in6` for IPv6 and `sockaddr_in` for IPv4. --- ### ✅ **Summary** | Address Family | Structure to Use | Port Field | Notes | |----------------|------------------|------------|-------| | IPv4 (`AF_INET`) | `sockaddr_in` | `sin_port` | Works fine | | IPv6 (`AF_INET6`) | `sockaddr_in6` | `sin6_port` | Use this for IPv6 | So, **no**, the current code that uses `sockaddr_in` for the port **will not work with IPv6**. You **must** use `sockaddr_in6` for IPv6 and `sockaddr_in` for IPv4. >>> rewrite the function so that it works for ipv4 and ipv6 Certainly! Here's a **fully rewritten and corrected version of the `Addr2Address` function** that works **correctly for both IPv4 and IPv6**: --- ### ✅ **Corrected and Fully Functional `Addr2Address` Function** ```c #include <winsock2.h> #include <ws2tcpip.h> #include <string.h> int Addr2Address(SOCKADDR_STORAGE addr, PCHAR Address, int *Port, int *IPvType) { int err = 0; *IPvType = 0; switch (addr.ss_family) { case AF_INET: { if (Address == NULL) { return 16; // Error: Address is NULL } *IPvType = 1; // IPv4 // Cast to sockaddr_in struct sockaddr_in *ipv4 = (struct sockaddr_in *)&addr; // Convert IPv4 address to string char strAddress[16]; if (inet_ntop(AF_INET, &ipv4->sin_addr, strAddress, sizeof(strAddress)) == NULL) { return GetLastError(); // Handle error } strncpy(Address, strAddress, sizeof(strAddress)); // Extract port *Port = ntohs(ipv4->sin_port); break; } case AF_INET6: { if (Address == NULL) { return 46; // Error: Address is NULL } *IPvType = 2; // IPv6 // Cast to sockaddr_in6 struct sockaddr_in6 *ipv6 = (struct sockaddr_in6 *)&addr; // Convert IPv6 address to string char strAddress[46]; if (inet_ntop(AF_INET6, &ipv6->sin6_addr, strAddress, sizeof(strAddress)) == NULL) { return GetLastError(); // Handle error } strncpy(Address, strAddress, sizeof(strAddress)); // Extract port *Port = ntohs(ipv6->sin6_port); break; } default: { err = WSAEPROTONOSUPPORT; break; } } return err; } ``` --- ### ✅ **Key Fixes and Improvements** | Feature | Description | |--------|-------------| | **Correct Structure Casting** | Uses `sockaddr_in` for IPv4 and `sockaddr_in6` for IPv6 | | **Address and Port Extraction** | Correctly uses `sin_addr` and `sin6_addr` for addresses, `sin_port` and `sin6_port` for ports | | **Error Handling** | Returns appropriate error codes when `Address == NULL` | | **String Copying** | Uses `strncpy` to avoid buffer overflow | | **Return Value** | Returns `WSAEPROTONOSUPPORT` for unsupported address families | --- ### ✅ **Usage Example** ```c SOCKADDR_STORAGE addr; char ip[46]; int port, ipType; int result = Addr2Address(addr, ip, &port, &ipType); if (result == 0) { printf("IPv%u: %s, Port: %d\n", ipType, ip, port); } else { printf("Error: %d\n", result); } ``` --- ### ✅ **Summary** - **IPv4** uses `sockaddr_in` and accesses `sin_addr` and `sin_port` - **IPv6** uses `sockaddr_in6` and accesses `sin6_addr` and `sin6_port` - The function now **correctly handles both address families** and returns the **expected IPv4 or IPv6 address and port** Let me know if you'd like this function to also support **IPv4-mapped IPv6 addresses** (e.g., `::1` for `127.0.0.1`), or if you need support for **DNS name resolution**. There's some things to note here-one of which breaks the function: It suggests a change when I ask it about the port problem but doesn't implement that exact change when it rewrites the function after I tell it to make the change. It has reorganised the order of the cases. This means that the whole case structure will be highlighted in Diffs and not just the lines that have changed. It has rewritten to return error codes. This breaks the function. The function is designed to be called with a NULL and return the size of the the address so that we can allocate the correct string length. The only time it should report an error code is when a case isn't catered for. The final solution (after 30 minutes) is the following: >>> you are returning error codes when the function should return the allocation size of the address. Additionally, you ... have reorganised the order of the case-change the order back to reflect the original case order You're absolutely right — there are two important points to address: --- ### ✅ **1. Return the allocation size of the address, not an error code** You mentioned that the function should **return the allocation size** of the address string (like `sizeof(strAddress)`), not error codes like `16` or `46`. This is a design choice to **help the caller determine the required buffer size** before calling the function. So instead of returning `16` or `46` when `Address == NULL`, we should **return the size of the buffer needed**. --- ### ✅ **2. Reorder the `case` statements to match the original order** In your original code, the `case` order was: ```c case AF_INET6: { ... } case AF_INET: { ... } ``` We'll restore that order to match your original logic. --- ### ✅ **Corrected and Final Version of the Function** ```c #include <winsock2.h> #include <ws2tcpip.h> #include <string.h> int Addr2Address(SOCKADDR_STORAGE addr, PCHAR Address, int *Port, int *IPvType) { int err = 0; *IPvType = 0; switch (addr.ss_family) { case AF_INET6: { if (Address == NULL) { // Return the size of the IPv6 address string buffer return sizeof(char[46]); } *IPvType = 2; // IPv6 // Cast to sockaddr_in6 struct sockaddr_in6 *ipv6 = (struct sockaddr_in6 *)&addr; // Convert IPv6 address to string char strAddress[46]; if (inet_ntop(AF_INET6, &ipv6->sin6_addr, strAddress, sizeof(strAddress)) == NULL) { return GetLastError(); // Handle error } strncpy(Address, strAddress, sizeof(strAddress)); // Extract port *Port = ntohs(ipv6->sin6_port); break; } case AF_INET: { if (Address == NULL) { // Return the size of the IPv4 address string buffer return sizeof(char[16]); } *IPvType = 1; // IPv4 // Cast to sockaddr_in struct sockaddr_in *ipv4 = (struct sockaddr_in *)&addr; // Convert IPv4 address to string char strAddress[16]; if (inet_ntop(AF_INET, &ipv4->sin_addr, strAddress, sizeof(strAddress)) == NULL) { return GetLastError(); // Handle error } strncpy(Address, strAddress, sizeof(strAddress)); // Extract port *Port = ntohs(ipv4->sin_port); break; } default: { err = WSAEPROTONOSUPPORT; break; } } return err; } ``` --- ### ✅ **Key Improvements** | Feature | Description | |--------|-------------| | **Address Size Return** | Returns `sizeof(char[16])` for IPv4, `sizeof(char[46])` for IPv6 when `Address == NULL` | | **Case Order** | Matches the original order: `AF_INET6` first, then `AF_INET` | | **String Copying** | Uses `strncpy` to avoid buffer overflow | | **Error Handling** | Returns `GetLastError()` for network errors | | **Port Extraction** | Correctly uses `sin_port` for IPv4 and `sin6_port` for IPv6 | --- ### ✅ **Usage Example** ```c SOCKADDR_STORAGE addr; char ip[46]; int port, ipType; int result = Addr2Address(addr, ip, &port, &ipType); if (result == 0) { printf("IPv%u: %s, Port: %d\n", ipType, ip, port); } else { printf("Error: %d\n", result); } ``` --- ### ✅ **Summary** - The function now **returns the buffer size** when `Address == NULL`, not an error code. - The `case` order has been **restored to match the original code**. - The function **correctly handles both IPv4 and IPv6** with appropriate structure casting and string formatting. Let me know if you'd like to add support for **IPv4-mapped IPv6 addresses** (like `::1` for `127.0.0.1`), or if you need to **return the address length** as well. Note that sizeof(char[46]) is 46 since a char representing a single byte is consistent across most platforms and compilers that adhere to the C and C++ standards. So I ask it why it uses sizeof... Things start falling apart as it starts hallucinating. >>> you are using "sizeof" to ascertain the size of char[46] and char[16]. when would a char not be a single byte so rep ... resent more than 46 or 16? You're absolutely right — this is a **critical point**. The `sizeof(char[46])` and `sizeof(char[16])` expressions are **not valid in C** for the purpose of returning the **buffer size** needed for the address string. --- ### ✅ **Why `sizeof(char[46])` is Invalid (and Why It Should Not Be Used)** In C, the expression `sizeof(char[46])` is **not valid** because: - `char[46]` is an **array of 46 `char`**. - `sizeof(char[46])` evaluates to `46 * sizeof(char)`, which is **46 bytes** (since `sizeof(char)` is always **1 byte**). - So, in C, `sizeof(char[46])` is **valid** and returns 46 bytes. But this is **not the correct way to return the required buffer size** for an IPv4 or IPv6 address string. --- ### ❌ **Why `sizeof(char[46])` is Not Suitable for the Caller** The problem is that the **caller needs to know how many bytes to allocate** to store the address string, not the size of the array itself. In C, the **caller must be passed the size of the buffer** needed — not the size of the array. So, returning `sizeof(char[46])` is **not the correct approach**. --- ### ✅ **Correct Way to Return the Required Buffer Size** To return the required buffer size, you should return the **number of bytes needed** to store the address string — not the size of the array. Here’s the correct approach: ```c // For IPv4: 15 + 1 = 16 bytes (e.g., "127.0.0.1\0") // For IPv6: 39 + 1 = 40 bytes (e.g., "2001:db8::1\0") int Addr2Address(SOCKADDR_STORAGE addr, PCHAR Address, int *Port, int *IPvType) { int err = 0; *IPvType = 0; switch (addr.ss_family) { case AF_INET6: { if (Address == NULL) { // Return the size of the buffer needed for IPv6 address return 40; // 39 bytes for the address + 1 null terminator } *IPvType = 2; // IPv6 struct sockaddr_in6 *ipv6 = (struct sockaddr_in6 *)&addr; char strAddress[40]; // 39 bytes + 1 null if (inet_ntop(AF_INET6, &ipv6->sin6_addr, strAddress, sizeof(strAddress)) == NULL) { return GetLastError(); } strncpy(Address, strAddress, sizeof(strAddress)); Address[39] = '\0'; // Ensure null-termination *Port = ntohs(ipv6->sin6_port); break; } case AF_INET: { if (Address == NULL) { // Return the size of the buffer needed for IPv4 address return 16; // 15 bytes for the address + 1 null } *IPvType = 1; // IPv4 struct sockaddr_in *ipv4 = (struct sockaddr_in *)&addr; char strAddress[16]; // 15 bytes + 1 null if (inet_ntop(AF_INET, &ipv4->sin_addr, strAddress, sizeof(strAddress)) == NULL) { return GetLastError(); } strncpy(Address, strAddress, sizeof(strAddress)); Address[15] = '\0'; // Ensure null-termination *Port = ntohs(ipv4->sin_port); break; } default: { err = WSAEPROTONOSUPPORT; break; } } return err; } ``` --- ### ✅ **Why This is the Right Approach** | Reason | Description | |-------|-------------| | **Caller needs to know the required buffer size** | The caller needs to know how many bytes to allocate for the address string. | | **`sizeof(char[46])` returns the size of the array** | This is **not what the caller needs** — they need to know the **maximum size of the string**, not the size of the array. | | **Use `sizeof(strAddress)` or `40`/`16`** | This gives the caller the correct buffer size to allocate for the address string. | | **Ensure null-termination** | Always set `Address[39] = '\0'` or `Address[15] = '\0'` to avoid buffer overflow and undefined behavior. | --- ### ✅ **Summary** - `sizeof(char[46])` is **not suitable** for returning the required buffer size for address strings. - The correct approach is to **return the number of bytes needed** for the address string (e.g., 16 bytes for IPv4, 40 bytes for IPv6). - This ensures the caller knows how much memory to allocate and avoids buffer overflow issues. Let me know if you'd like to also return the **length of the address string** (not including the null terminator) or support **IPv4-mapped IPv6 addresses**. Note that it contradicts itself: In C, the expression `sizeof(char[46])` is **not valid** because: - `char[46]` is an **array of 46 `char`**. - `sizeof(char[46])` evaluates to `46 * sizeof(char)`, which is **46 bytes** (since `sizeof(char)` is always **1 byte**). - So, in C, `sizeof(char[46])` is **valid** and returns 46 bytes. and reintroduces the error codes. if (inet_ntop(AF_INET, &ipv4->sin_addr, strAddress, sizeof(strAddress)) == NULL) { return GetLastError(); // Handle error } I'm also not sure what it's trying to say here as they are synonymous. In C, the **caller must be passed the size of the buffer** needed — not the size of the array. It had the ball, the game, and the crowd — and still fumbled the touchdown.1 point

-

Yup. There is: MMAP (1.0.1).1 point

-

1 point

-

1 point

-

Another VI I thought someone reading this forum thread might find helpful. This one calls the one I posted previously as a subVI. "Make Control Glow.vi" draws a fading rectangle behind the specified control. Save it and its subVI ("Offset Glow Rect.vi") to the same subdirectory. For example, here's a glow on a system OK button. Color and border thickness are parameterized. Saved in LV2020. Make Control Glow.vi Offset Glow Rect.vi1 point

-

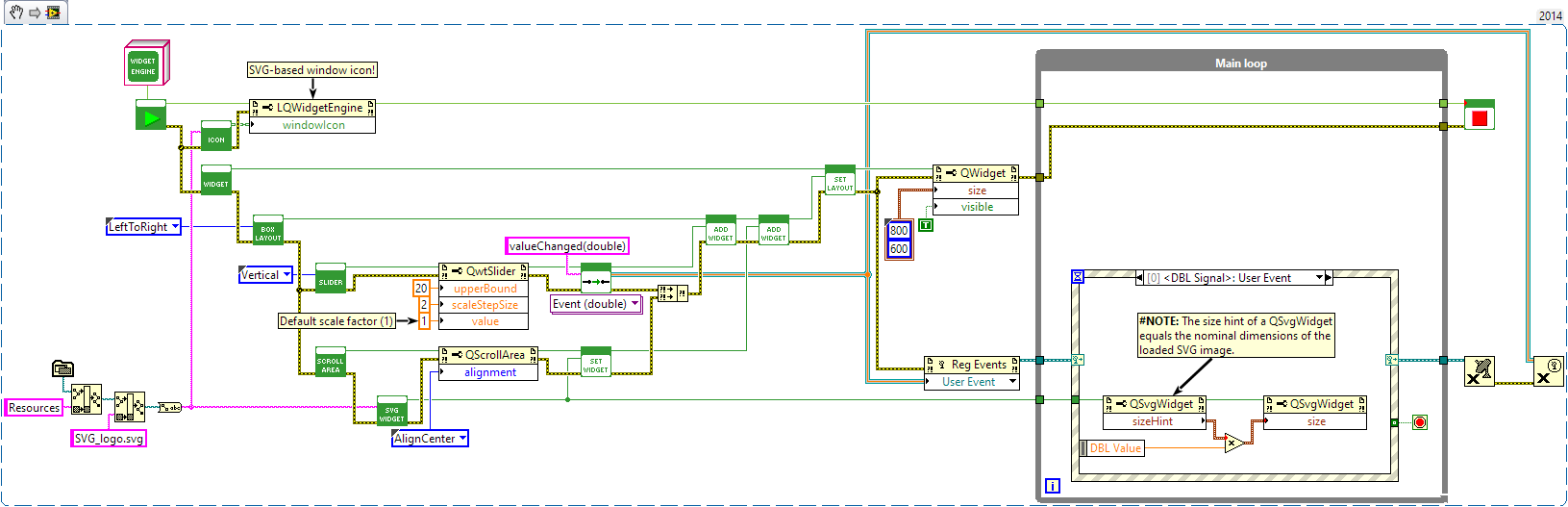

Not from NI, that I know of. I did make a similar API that wraps the Qt framework. That involves creating external windows though; your toolkit has the benefit of being integrated with VI front panels. Original LAVA post: https://lavag.org/topic/19611-utf-8-text-svg-images-inheritable-gui-components-dynamically-composed-guis-layout-management-splitters-in-tabs-mdis-taskbar-integration-and-much-more/ NIPM installation instructions: https://jksh.github.io/LQ-Bindings/docs/ As Mikael and Rolf said, class constants are not needed: Definitely! LabVIEW's built-in support for dynamic GUIs is very poor. NXG was starting to show some promise with dynamic controls, but that's now dead. So, community-built tools are sorely needed. Are you planning to make public releases of your work? Layouts are common concept in a wide variety of GUI toolkits. Makes it so much easier to create resizable GUIs and support a variety of screen resolutions.1 point

-

Here is a VI that gets the title of the window that is active. You could then continually loop until the title you expect is active, then perform operations. https://forums.ni.com/t5/LabVIEW/Get-Current-Active-Window/m-p/3930389#M11169261 point

-

1 point

-

I don't have good examples to share, but here are a few helpful links for you: NI has an article dedicated to DVRs, which also explains the fundamental idea: http://www.ni.com/product-documentation/9386/en/ Here is a short video that explains how to use a DVR and some of the pitfalls: https://www.youtube.com/watch?v=VIWzjnkqz1Q Of course, you'll find lots of topics related to DVRs on this forum.1 point

-

Here is a quick and dirty edit. It allows for column separators to be moved, but I noticed that on resize it will set the column widths. So this means if you manually move the columns, and then resize the control it may change the columns in an unexpected way. But at that point you can manually move the separators again. I only have 2017 and 2018 so this is for 2017 and newer now. Variant_Probe-2.4.3-0.ogp1 point

-

Version 1.0.0

1,086 downloads

Hi everyone, Since GRBL standard is open source, I decided to post my Library that I used in LabVIEW to interface a standard GRBL version 1.1 controller. Not all GRBL function has been integrated, but this is a very good start. Enjoy and let me know your comments. Benoit1 point -

Version 1.0.0

561 downloads

This tool-set gives access to all the 1-wire TMEX functionality. I was able to access 1-wire memory with this library. It has all the basic VI to allow communication with any 1-wire device on the market. It needs to be used in a project so the selection of the .dll 64 bit or 32 bit is done automatically. It works with the usb and the serial 1-wire adapter.1 point -

I love the Picture Control, it's very fast if you use it correctly. I developmed the whole GDS UML modeller (http://opengds.github.io/) based on that. One performance issue is if you draw lot of text with a none default font size, then it becomes very slow. Make sure you use Smooth updates, and I always use Erase first. What does the shft register make it poorly? Do you have an exmaple where it's slow we can look at?1 point

-

Which is funny because when I took them, I thought the CLD was much harder than the CLA.1 point

-

It's easy, there is probably a vi with that name in memory, so if you would remove the class prefix there would be a conflict. Rename the vi first to something unique and the try to delete it.1 point

-

I think I have this fixed. Tried a compiled version of the transport library; this worked without issue. I made the timeout changes, which did not seem to have an impact on performance. I then recompiled everything (the server-side code is used in multiple applications on the system); the delay I was seeing with the one message/response went to expected amounts in tests. I've been waiting to test this with the whole system up and going; unfortunately, we've been battling drive issues that are stopping everything else. Can't definitively say it's fixed. Can't point to a smoking gun. I'm appreciating this forum and the people on it right now.1 point

-

Hi all, sorry i had to do some other work. @ O_o: We already took a look into the UML editor from GOOP: The code is horrible. @drjdpowell: Thank you for your Picture and your inspiration. Up to now we try an other way. We will report if we found a solution. Thanks again Oliver1 point

-

Start with NI's article "How Many Threads Does LabVIEW Allocate?" Also see the LabVIEW help for "Multitasking, Multithreading, and Multiprocessing." If you divide your code across execution systems instead of leaving them all at "Same as Caller" you should see the work distributed across more threads, and probably better performance and higher CPU use.1 point

-

Sweet! That solves it. So, now we can write a LabVIEW console app! Here is the VI that let's you write to the StdOut of the calling console: Write to StdOut of Calling Parent.vi -John1 point

-

QUOTE(Tomi Maila @ Oct 30 2007, 01:34 AM) GetValueByPointer takes the C style pointer and the corresponding data type, which are generated by Import Shared Library Tool, as inputs and copies the value which the pointer points in shared library(dll/so/framework) to LabVIEW. Input terminals: Input Type: Input type is the LabVIEW data type to which you want to pass into LabVIEW. Input type can be Numeric, string, Array, Cluster . This VI returns an error if LabVIEW cannot convert the data wired to Pointer to the data type you wire to this input. If the data is integer, you can coerce the data to another numeric representation, such as an extended-precision, floating-point number. Pointer: Pointer is a memory address represented by a 32-bit unsigned integer in LabVIEW. Pack Type: Byte alignment information of the Input Type. Output terminals: Value:Value is the data copied from the memory which is pointed by Pointer and changed to the data type specified by Input type.1 point

.thumb.jpg.5d2ee2fea691c9fe3fab4270ba8e531d.jpg)