Leaderboard

Popular Content

Showing content with the highest reputation since 02/03/2022 in all areas

-

To all things there is a season. Jeff Kodosky helped found National Instruments and invented LabVIEW. He inspired hundreds of us who shaped its code across four decades. But Jeff says it is time to change his focus. Today, NI announced Jeff’s retirement. He will probably always be noodling around on LabVIEW concepts and will remain open to future feature discussions. But his time as a developer is done. Maybe you didn’t know that? Jeff still slings code, from big features to small bugs. He’s been a developer for most of those years, happy to have others manage the release and delivery of his software. I spent over two decades working at his side. He taught me to look for what customers needed that they weren’t asking for, to understand what problems they didn’t talk about because they thought the problems were unsolvable. And he built a team culture that made us all collaborators instead of competitors. Thank you, Jeff, for decades of brilliant ideas and for staying the course to see those develop into reality. Your work will continue on as one of the key tools in humanity’s expansion to Mars.

11 points

-

June 3 will be my last working day at NI. After almost 22 years, I'm stepping away from the company. Why? I found a G programming job in a field I love. Starting June 20, I'm going to be working at SpaceX on ground control for Falcon and Dragon. This news went public with customers at NI Connect this week. I figured I should post to the wider LabVIEW community here on LAVA. I want to thank you all for being amazing customers and letting me participate vicariously in so many cool engineering projects over the years. I'm still going to be a part of the LabVIEW community, but I'm not going to be making quite such an impact on G users going forward... until the day that they start needing developers on Mars -- remote desktop with a multi-minute delay between mouse clicks is such a pain! 🙂

11 points

-

How Software Companies Die – Orson Scott Card

The environment that nurtures creative programmers kills management and marketing types - and vice versa.

Programming is the Great Game. It consumes you, body and soul. When you're caught up in it, nothing else matters. When you emerge into daylight, you might well discover that you're a hundred pounds overweight, your underwear is older than the average first grader, and judging from the number of pizza boxes lying around, it must be spring already. But you don't care, because your program runs, and the code is fast and clever and tight. You won. You're aware that some people think you're a nerd. So what? They're not players. They've never jousted with Windows or gone hand to hand with DOS. To them C++ is a decent grade, almost a B - not a language. They barely exist. Like soldiers or artists, you don't care about the opinions of civilians. You're building something intricate and fine. They'll never understand it.

Beekeeping

Here's the secret that every successful software company is based on: You can domesticate programmers the way beekeepers tame bees. You can't exactly communicate with them, but you can get them to swarm in one place and when they're not looking, you can carry off the honey. You keep these bees from stinging by paying them money. More money than they know what to do with. But that's less than you might think. You see, all these programmers keep hearing their fathers' voices in their heads saying "When are you going to join the real world?" All you have to pay them is enough money that they can answer (also in their heads) "Jeez, Dad, I'm making more than you." On average, this is cheap. And you get them to stay in the hive by giving them other coders to swarm with. The only person whose praise matters is another programmer. Less-talented programmers will idolize them; evenly matched ones will challenge and goad one another; and if you want to get a good swarm, you make sure that you have at least one certified genius coder that they can all look up to, even if he glances at other people's code only long enough to sneer at it. He's a Player, thinks the junior programmer. He looked at my code. That is enough. If a software company provides such a hive, the coders will give up sleep, love, health, and clean laundry, while the company keeps the bulk of the money.

Out of Control

Here's the problem that ends up killing company after company. All successful software companies had, as their dominant personality, a leader who nurtured programmers. But no company can keep such a leader forever. Either he cashes out, or he brings in management types who end up driving him out, or he changes and becomes a management type himself. One way or another, marketers get control. But...control of what? Instead of finding assembly lines of productive workers, they quickly discover that their product is produced by utterly unpredictable, uncooperative, disobedient, and worst of all, unattractive people who resist all attempts at management. Put them on a time clock, dress them in suits, and they become sullen and start sabotaging the product. Worst of all, you can sense that they are making fun of you with every word they say.

Smoked Out

The shock is greater for the coder, though. He suddenly finds that alien creatures control his life. Meetings, Schedules, Reports. And now someone demands that he PLAN all his programming and then stick to the plan, never improving, never tweaking, and never, never touching some other team's code. The lousy young programmer who once worshiped him is now his tyrannical boss, a position he got because he played golf with some sphincter in a suit. The hive has been ruined. The best coders leave. And the marketers, comfortable now because they're surrounded by power neckties and they have things under control, are baffled that each new iteration of their software loses market share as the code bloats and the bugs proliferate. Got to get some better packaging. Yeah, that's it.

Originally from Windows Sources: The Magazine for Windows Experts, March 1995

11 points

-

Nice! While you are there, please convince Elon to buy NI and turn it back into an engineering company 🤣

9 points

-

Hello all. Over the last 72 hours we've had some issues with spam bots taking over the forums, pretty aggressively. As a result, new account creation has been temporarily disabled. Thanks to all those using the report feature. I don't read every post, but I do read every new thread title, and you are very helpful in spotting issues. There might be some forum upgrades taking place soon to help combat this issue, after which new user creation will be turned back on. Nothing is scheduled yet, but this is meant to be a heads-up that the forums might have some downtime soon, and it is to be expected. Thanks for your patience.

7 points

-

Hello. I am not a bot... I'm planning on taking the site offline this weekend to perform long overdue upgrades and to investigate ways to curb the spam attacks. Thanks to everyone for all the help cleaning up the forums. Hopefully I can find a solution and we can get back to the usual next week.

7 points

-

I'm excited to release ViPER. ViPER is an object-oriented design framework that supports dependency injection and recursive object creation. Systems are assembled at runtime from a collection of pre-built components defined by an Object Definition Document. Please visit the project on GitHub: https://github.com/kurtafriday/ViPER

I've presented this framework at several GLA Summits. For an overview and guidance, please view:

- GLA 2021: https://labviewwiki.org/wiki/GLA_Summit_2021/Open_Source_ViPER
- GLA 2020: https://labviewwiki.org/wiki/GLA_Summit_2020/ViPER_-_A_LabVIEW_Dependency_Injection_Framework

This branch of ViPER has been used by us to develop systems in regulated industries for several years. It's solid and reliable; however, it's Windows-only. I'm working on ViPER_WinRT, which is compatible with Windows and RT, and we have already used it for several systems. I'll be releasing ViPER_WinRT in the coming months. I'll work to get ViPER onto the VIPM Tools Network soon. I'm looking forward to the feedback, and I hope you enjoy and get value from this framework. Ping me if you have any questions: kurt@medulla.net

7 points
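The idea described above (systems assembled at runtime from pre-built components listed in a definition document, with dependencies injected and nested objects created recursively) can be illustrated outside LabVIEW. The Python sketch below is a hypothetical illustration of that general pattern only; it is not ViPER's code, and the registry, the `build` function, the `{"type": ..., "args": ...}` node layout and the component classes are all assumptions made up for the example.

```python
# Hypothetical sketch of runtime assembly from a definition document.
# The registry, node layout and component classes are illustrative
# assumptions; they are not ViPER's API or Object Definition Document format.
from typing import Any, Callable, Dict

REGISTRY: Dict[str, Callable[..., Any]] = {}


def component(cls):
    """Register a component class under its own name."""
    REGISTRY[cls.__name__] = cls
    return cls


@component
class SimulatedDMM:
    def __init__(self, resource: str):
        self.resource = resource


@component
class CsvLogger:
    def __init__(self, path: str):
        self.path = path


@component
class TestStation:
    def __init__(self, name: str, dmm, logger):
        self.name = name
        self.dmm = dmm        # injected, never constructed here
        self.logger = logger  # injected, never constructed here


def build(node: Dict[str, Any]) -> Any:
    """Create the object described by node, building nested nodes first.

    A nested node is any argument value that is a dict with a "type" key;
    everything else is passed to the constructor as plain configuration.
    """
    factory = REGISTRY[node["type"]]
    kwargs = {
        key: build(value) if isinstance(value, dict) and "type" in value else value
        for key, value in node.get("args", {}).items()
    }
    return factory(**kwargs)


definition = {
    "type": "TestStation",
    "args": {
        "name": "Station A",
        "dmm": {"type": "SimulatedDMM", "args": {"resource": "SIM::1"}},
        "logger": {"type": "CsvLogger", "args": {"path": "results.csv"}},
    },
}

station = build(definition)
print(type(station.dmm).__name__, station.logger.path)  # SimulatedDMM results.csv
```

Because construction lives in one recursive function, components never create their own dependencies; swapping the simulated instrument for a real one would only mean editing the document, not the station class.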

-

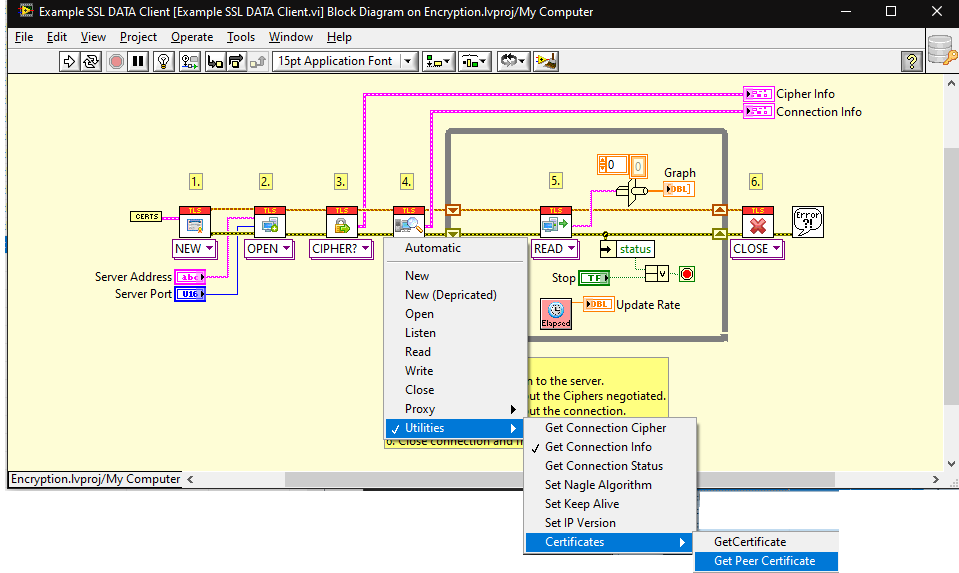

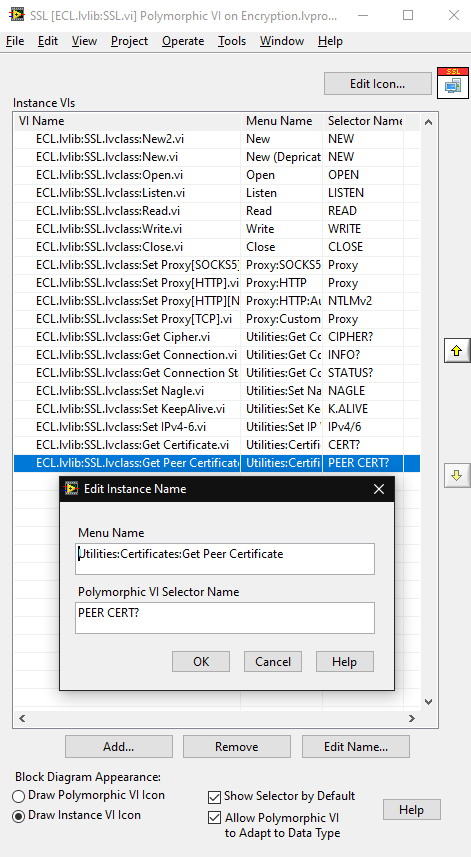

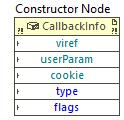

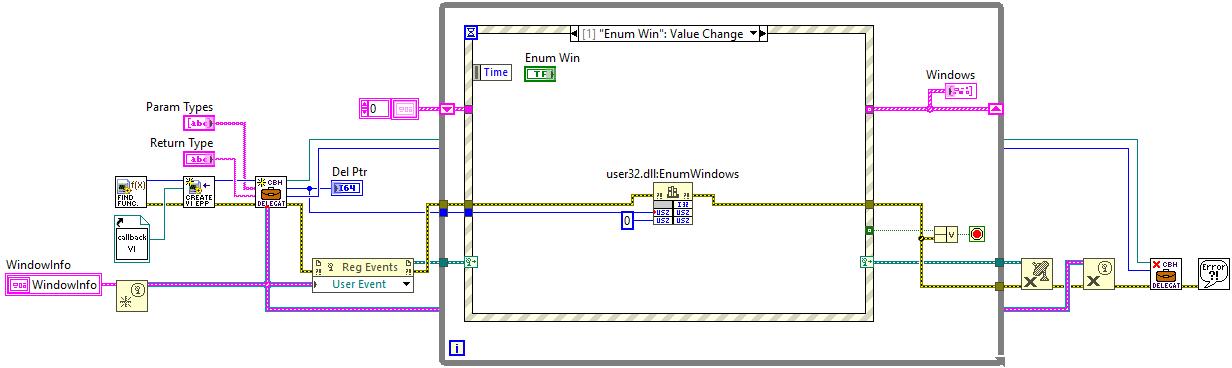

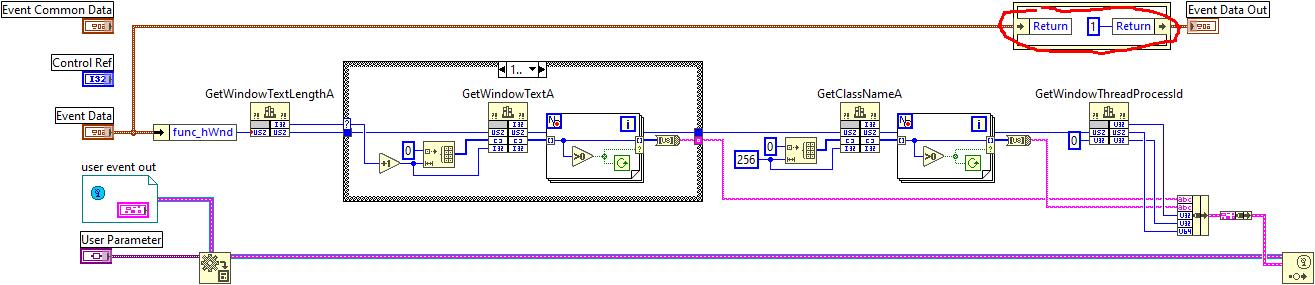

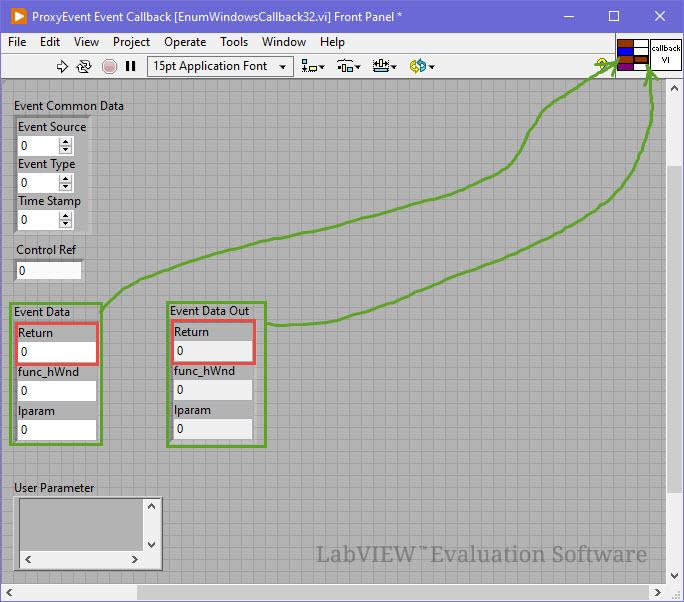

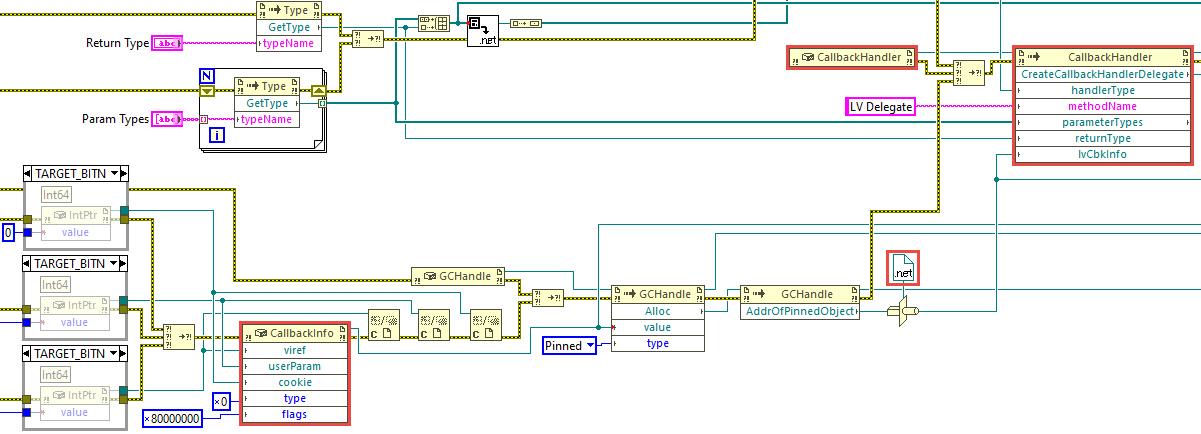

Usual disclaimer: the method described below is strictly experimental and not recommended for use in real production. N.B. This is based on .NET, therefore Windows only. This text is sorta lengthy, but I couldn't come up with a good TL;DR. You may scroll down to the example if you don't want to read it all. One day I was browsing the NI forums, looking at how folks implement their callback libraries to call them from LabVIEW. After some time I came across something interesting: How to deal with the callback when I invoke a C++ dll using CLF? There, someone had figured out how to make LabVIEW give us a .NET delegate using a dummy event. This technique is different from the classic way of interfacing to callbacks, because it allows implementing the callback logic inside a VI (not inside a DLL), but it still requires writing a small assembly to export the event. Even though Rolf said there that it's not elegant, I decided to study these samples more closely. Well, it was, yeah, simple (no wonder it was called SimpleProxy/SimpleDemo/SimpleCallBack) and very instructive at the same time. It worked very well in both 32- and 64-bit LabVIEW, so I had fun playing around and learning some new things about .NET events and C#. Eventually I started to wonder whether we really need this dummy assembly to obtain a delegate... Initially I was looking for a way to create a .NET event at run time with Reflection.Emit, Expression Trees or some other means, but after googling for a few days and trying many things in both C# and F#, I came to the conclusion that it's impossible. One can only create event handlers and attach them to already existing events, not create events of one's own. Okay. First, I decided to find out exactly how LabVIEW's native Register Event Callback node works. Looking ahead, I'll say it was a dead end, but an interesting one. The Register Event Callback node in fact consists of two internal functions, DynEventAllocRegInfo and DynEventRegister, with the RegInfo structure being filled in between them.
The first one creates and returns a new RegInfo with a Reg Event Callback reference; the second one actually registers the RegInfo and the reference in the VI Data Space (I have more or less figured out the prototypes and the struct fields). But when I started to play with CLFNs to replace the Register Event Callback node, I ran into a few pitfalls. To work properly, the DynEventRegister function needs one of the RegInfo's fields to be the type index of the (hidden) upper left terminal of the Constructor node. This index is stored in the VI's Data Space Type Map (DSTM) and determined at compile time. I did not find a reliable way to pull it out of the DSTM. Moreover, the RegInfo struct doesn't have a field for the VI Entry Point or anything like that. Instead, LabVIEW stores the EP in some internal tables, and it's rather complicated to get it from there. For these reasons I gave up studying the Register Event Callback node. Second, I turned my attention to calling the delegate through its pointer. I soon found out that LabVIEW generates a middle layer (by means of .NET) to convert the parameters and other details of the native call into the VI call. That conversion is performed by NationalInstruments.LabVIEW###.dll in the resource folder (a hidden gem!). This assembly has almost everything we need: the CallbackInfo and CallbackHandler classes, and the latter has two nice methods: CallLabView and CreateCallbackHandlerDelegate. Referring to the SimpleDemo/SimpleCallBack example, when we call the delegate by its pointer, LabVIEW calls this chain: CallLabView -> EventCallbackVICall internal function -> VI EP. All that was left was to try it on the diagram with .NET nodes, but... there was another obstacle. Sure you'll wire the inputs right? You won't. These parameters are not what they seem (at least one of them isn't). The viref is not a VI reference, but a VI Entry Point pointer.
It's not a classic function EP pointer, but a pointer to a LabVIEW internal struct that eases VI calls (it's called "Vepp" in the debug info). The userParam is a pointer to the User Parameter, as for the Register Event Callback node. The cookie is a pointer to the .NET object refnum from the Constructor node (luckily, NULL can be passed). And the type and flags are 0 and 0x80000000 for standard .NET callbacks. Now, how and where can we get that VI EPP? Good question. There is a function inside LabVIEW that receives a VI ref and returns an allocated VI EPP. But sadly, it's not exported at all. Of course, this ain't stoppin' us. I used a technique to find the function through a reference to a string constant in the memory of the process. It's known to be not very reliable across different versions of the application, therefore I ran many tests on many versions of LabVIEW. After finding the function address, it's possible to call it using this method (kind of a hack as well, so beware). Is all this enough to run the .NET nodes now? For CallLabView, yes. It's simpler than CreateCallbackHandlerDelegate, but doesn't provide a delegate. It passes the parameters to the VI, calls it and returns. The return value and parameters could be used further, of course, but nothing more. To obtain a delegate, it's necessary to call CreateCallbackHandlerDelegate. This method requires the handlerType input to be wired with a type that is valid in .NET terms, so a proper delegate type must be built. Initially I tried to use .NET's native generic delegates: Action, Predicate and Func. Everything went fine except GetFunctionPointerForDelegate, which refused to work with such (generic) delegates and complained. The solution was to apply the rather obscure MakeNewCustomDelegate method, as proposed here. Now GetFunctionPointerForDelegate was happy to provide a pointer to the delegate, and I successfully called the callback VI both "manually" and by means of the Windows API.
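Finding an unexported function through a string constant, as described above, generally means locating the string's address first and then scanning code for an instruction that references it. Below is a minimal Python sketch of that scan for x64 RIP-relative `lea` instructions, using the `lea rdx` (48 8D 15) and `lea r8` (4C 8D 05) encodings that the version notes of this post mention. It is not the author's implementation. Assumptions: the buffer is a small synthetic image whose offsets equal addresses, the marker string is made up, and reading real process memory, mapping sections, and walking back from the reference to the function's entry point are all omitted.

```python
# Hypothetical sketch: find x64 code that references a known string constant.
# Assumes a flat, synthetic image whose offsets equal addresses (base 0);
# real use would need process-memory access and section mapping (omitted).
import struct
from typing import List

# lea rdx, [rip+disp32] and lea r8, [rip+disp32]
LEA_RIP_PREFIXES = (b"\x48\x8d\x15", b"\x4c\x8d\x05")
LEA_LEN = 7  # 3 bytes (REX, opcode, ModRM) + signed 32-bit displacement


def find_string_refs(image: bytes, text: bytes) -> List[int]:
    """Return offsets of RIP-relative LEAs whose target is the first copy of text."""
    target = image.find(text)
    if target < 0:
        return []
    hits = []
    for prefix in LEA_RIP_PREFIXES:
        i = image.find(prefix)
        while 0 <= i and i + LEA_LEN <= len(image):
            (disp,) = struct.unpack_from("<i", image, i + 3)
            # The displacement is relative to the address of the next instruction.
            if i + LEA_LEN + disp == target:
                hits.append(i)
            i = image.find(prefix, i + 1)
    return sorted(hits)


def make_demo_image(text: bytes) -> bytes:
    """Build a toy image: NOP padding, one LEA pointing at text, then text."""
    buf = bytearray(b"\x90" * 16)               # filler NOPs
    lea_at = len(buf)
    buf += b"\x48\x8d\x15" + bytes(4)           # lea rdx, [rip+disp32]
    buf += b"\xc3" + bytes(8)                   # ret + padding
    string_at = len(buf)
    buf += text + b"\x00"
    struct.pack_into("<i", buf, lea_at + 3, string_at - (lea_at + LEA_LEN))
    return bytes(buf)


image = make_demo_image(b"hypothetical marker")
print(find_string_refs(image, b"hypothetical marker"))  # [16]
```

The need to recognize more than one encoding is one reason such scans are fragile across builds: a compiler choosing a different register for the same reference is enough to change the byte pattern.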
So finally the troubles were over, and I could wrap everything into SubVIs and make a basic example. I chose the EnumWindows function from the WinAPI, because it was the first that came to mind (not the best choice, as I think now). It's a simple function: it's called once with a callback pointer, then it loops through the top-level windows, calling the callback on each iteration and passing a HWND to it. This is the top-level diagram of the example: I won't be showing the SubVIs' diagrams here as they are rather bulky. You may take a look at them on your own. I'll make one exception, though: this is the BD of the callback VI. As you may know, the EnumWindowsProc function must return TRUE (1) to continue the window enumeration. How do we return something from a callback VI? Well, it's only vaguely described here, so I'll focus on it clearly. You must supply the first parameter as the return value in both Event Data clusters and assign both clusters to the connector pane. On the diagram, you set the return value as you need. These are the versions on which I tested this example (from top to bottom). Some nuances do exist, but generally everything works well.
- LabVIEW 2023 Q3 32 & 64 (IDE & RTE)
- LabVIEW 2022 Q3 32 & 64 (IDE & RTE)
- LabVIEW 2021 32 & 64 (IDE & RTE)
- LabVIEW 2020 32 & 64 (IDE & RTE)
- LabVIEW 2019 32 & 64 (IDE & RTE) // 32-bit: on one machine the RTE worked only with "Allow future versions of the LabVIEW Runtime to run this application" disabled (?); 64-bit: OK
- LabVIEW 2018 32 & 64 (IDE & RTE)
- LabVIEW 2017 32 & 64 (IDE & RTE) // CallbackInfo& lvCbkInfo, not ptr
- LabVIEW 2016 32 & 64 (IDE & RTE) // same
- LabVIEW 2015 32 & 64 (IDE & RTE) // same
- LabVIEW 2014 32 & 64 (IDE & RTE) // same
- LabVIEW 2013 SP1 32 & 64 (IDE & RTE) // same + another string ref + 64-bit: "lea r8" (4C 8D 05) instead of "lea rdx" (48 8D 15)
- LabVIEW 2013 32 & 64 (IDE & RTE) // same + no ReleaseEntryPointForCallback, CreateCallbackHandler instead of CreateCallbackHandlerDelegate
- LabVIEW 2012 32 & 64 (IDE & RTE) // same + forced .NET 4.0
- LabVIEW 2011 32 & 64 (IDE & RTE) // same
- LabVIEW 2010 32 & 64 (IDE & RTE) // same
- LabVIEW 2009 32 & 64 (IDE & RTE) // same

EnumWindows (LV2013).rar

EnumWindows (LV2009).rar

How to run:

1. Select the appropriate archive for your LV version: for LV 2013 SP1 and above, download the "2013" archive; for LV 2009 to 2013, download the "2009" archive.
2. Open EnumWindows32.vi or EnumWindows64.vi according to the bitness of your LV.
3. When opening, LV will probably ask for the location of NationalInstruments.LabVIEW###.dll; point it to the resource folder of your LV.
4. Next, open the diagram of Create Callback Handler Delegate.vi (it has a suitcase icon) and explicitly choose/load NationalInstruments.LabVIEW###.dll for the nodes marked red. This is only required once, as long as you stay on the same LV version. For the constant, it may be easier to create a fresh one by right-clicking the lvCbkInfo terminal and choosing "Create Constant".
5. Now save everything, and you're ready to run the main VI.
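The core trick in this example, turning code that lives in a managed runtime into a plain native function pointer and letting a native API call back into it, can be shown outside LabVIEW. As an analogy only (not the .NET/LabVIEW technique from this post), Python's `ctypes.CFUNCTYPE` wraps a Python function much as `Marshal.GetFunctionPointerForDelegate` wraps a delegate. Because EnumWindows is Windows-only, the C library's `qsort` stands in as the native caller here; the sketch assumes a POSIX system where `ctypes` can load the C runtime. Like the TRUE/FALSE result of EnumWindowsProc, the callback's return value steers what the native code does next.

```python
# Analogy only: native code calling back into a managed runtime.
# ctypes.CFUNCTYPE plays the role that Marshal.GetFunctionPointerForDelegate
# plays for a .NET delegate. Assumes a POSIX system with a loadable C library.
import ctypes
import ctypes.util

libc = ctypes.CDLL(ctypes.util.find_library("c") or None)

# C signature: int (*compar)(const void *, const void *), typed here as int pointers.
CMPFUNC = ctypes.CFUNCTYPE(ctypes.c_int,
                           ctypes.POINTER(ctypes.c_int),
                           ctypes.POINTER(ctypes.c_int))
libc.qsort.argtypes = [ctypes.c_void_p, ctypes.c_size_t, ctypes.c_size_t, CMPFUNC]
libc.qsort.restype = None


def native_sort(values):
    """Sort ints with C qsort, which calls back into Python for each comparison.

    Returns the sorted list and how many times the native code invoked the callback.
    """
    calls = 0

    def compare(a, b):
        nonlocal calls
        calls += 1
        # The return value drives the native algorithm, much as EnumWindowsProc's
        # TRUE/FALSE tells EnumWindows whether to keep enumerating.
        return (a[0] > b[0]) - (a[0] < b[0])

    callback = CMPFUNC(compare)  # must stay referenced while qsort may call it
    arr = (ctypes.c_int * len(values))(*values)
    libc.qsort(arr, len(arr), ctypes.sizeof(ctypes.c_int), callback)
    return list(arr), calls


print(native_sort([5, 1, 7, 33, 99]))
```

The `callback` object is deliberately kept in a local variable for the whole native call: if the wrapper were garbage-collected while native code still held its pointer, the process could crash.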
Remarks / cons:

- no magic wand as with the Register Event Callback node; you create the callback VI on your own;
- the parameters and their types must correspond exactly to those of the delegate;
- obviously, .NET callbacks are considerably slower than a pure C/C++ (or any other unmanaged code) DLL;
- the search for the CreateVIEntryPoint function address takes time (usually several seconds); on 64-bit it takes longer due to indirect reference addressing;
- there is no good way to deallocate VI EPPs;
- the ReleaseEntryPointForCallback function destroys the AppDomain when called (after such a call, the VI must be reopened to get .NET working again); this is usually not a problem for EXEs.

Conclusion: although it's a kind of miracle to see a callback VI called from 'outside', I doubt I will use it anywhere except at home. Besides its slowness, it involves so many hacks on all possible levels (WinAPI, .NET, LabVIEW) that it's simply dangerous to push such an application into real life. This thread is likely more useful as a detailed reference for a future idea in the NI Idea Exchange.

7 points

-

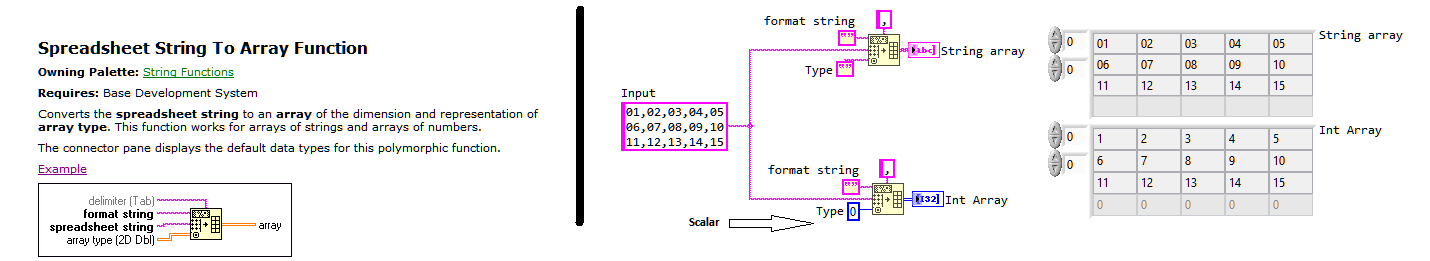

I was surprised today by one of LabVIEW's most useful functions (imo), which I use all the time. After so many years, I'm only now seeing this behavior/feature, so I thought I'd share it 🙂 I've always used an empty N-dimensional array for my desired type input, only to accidentally find out today that I can also use a scalar for the type. Ha!

7 points

-

A customer asked me to create a PowerPoint explaining the advantages of LabVIEW. While putting together the practical rationales, just for grins I asked ChatGPT to create a presentation explaining the philosophy of LabVIEW in a Zen sort of way. Here is what it came up with:

Zen_of_LabVIEW.pdf

6 points

-

6 points

-

6 points

-

6 points

-

The conference went well. We got lots of good video, but it will take a while to edit. I don't have an exact timeframe, but they should be posted within the next month or so. We had an extra cameraman and better lighting and angles this year, so the videos should be even better than last year.

6 points

-

So a couple of years ago I was reading about ZLIB compression and how it works. It was an interesting blog post going into how deflate works and what compression algorithms like zip really do, using LZ77 and Huffman tables. It was very educational, and I thought it might be fun to try to write some of it in G. Calling ZLIB's deflate function as external code is very well understood, so the only place where a pure-G version made even slight sense in my head was LabVIEW RT. The wonderful OpenG Zip package has support for Linux RT in version 4.2.0b1, as posted here. For now this is the version I will be sticking with because of the RT support. Still, I went on my little journey trying to make my own in pure LabVIEW to see what I could do. My first attempt failed immensely, and I did not have the knowledge to understand what was wrong or how to debug it. As a test of AI progression, I decided to dig up this old code and start asking AI what I could do to improve it and finally get it working properly. Well, over the holiday break Google Gemini delivered. It was very helpful for the first 90% or so. It was great having a back-and-forth dialog, asking about edge cases and how things are handled. It gave examples and knew what the next steps were. Admittedly it is a somewhat academic problem, so maybe that's why the AI did so well. And I did still reference some of the other content online. The last 10% were a bit of a pain. The AI hallucinated several times, giving wrong information or analyzing my byte streams incorrectly. But this did help me understand it even more, since I had to debug it. Attached is my first go at it, in LabVIEW 2022 Q3. It requires some packages from VIPM.IO: Image Manipulation, for making some debug tree drawings (which are actually disabled at the moment), and the new version of my Array package, 3.1.3.23. So how is performance?
Well, I only have the deflate function, and only with the dynamic Huffman table, which only gets used when there is around 1K of data or more. I tested it with random data containing lots of repetition: my 700k string took about 100 ms to process, while the OpenG method took about 2 ms. Compression was similar, but OpenG's output was about 5% smaller too. It was a lot of fun, I learned a lot, and I will probably apply the things I learned, but realistically I will stick with OpenG for real work. If there are improvements to make, the largest time sink is in detecting the patterns. It is a 32k sliding window, and I'm unsure what techniques can be used to make it faster.

ZLIB G Compression.zip

5 points
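On the question of speeding up pattern detection in a 32K window: zlib's own deflate does not compare against every earlier position. It hashes the next three bytes (the minimum match length), keeps a chain of earlier positions that share that hash, and caps how many chain links it follows depending on the compression level. The Python sketch below shows that hash-chain idea with greedy matching, plus a decoder to verify the round trip. It is a simplified illustration, not a port of zlib or of the attached G code: it keys the index on the exact 3-byte prefix, does no lazy matching or Huffman coding, and never trims its `prev` table.

```python
# Simplified LZ77 with zlib-style hash chains (greedy matching only).
# Illustration, not a port of zlib or of the attached G code: the chain index is
# keyed on the exact 3-byte prefix and the `prev` table is never trimmed.
from typing import Dict, List, Tuple, Union

WINDOW = 32 * 1024           # deflate's maximum match distance
MIN_MATCH, MAX_MATCH = 3, 258
Token = Union[int, Tuple[int, int]]   # literal byte, or (distance, length)


def lz77_tokens(data: bytes, max_chain: int = 64) -> List[Token]:
    head: Dict[bytes, int] = {}   # 3-byte prefix -> most recent position
    prev: Dict[int, int] = {}     # position -> previous position, same prefix
    tokens: List[Token] = []
    n = len(data)

    def insert(pos: int) -> None:
        if pos + MIN_MATCH <= n:
            key = data[pos:pos + MIN_MATCH]
            prev[pos] = head.get(key, -1)
            head[key] = pos

    i = 0
    while i < n:
        best_len = best_dist = 0
        if i + MIN_MATCH <= n:
            limit = min(MAX_MATCH, n - i)
            cand = head.get(data[i:i + MIN_MATCH], -1)
            chain = max_chain
            # Only positions sharing the same 3 bytes are visited, newest first.
            while cand >= 0 and i - cand <= WINDOW and chain > 0:
                length = 0
                while length < limit and data[cand + length] == data[i + length]:
                    length += 1
                if length > best_len:
                    best_len, best_dist = length, i - cand
                    if length == limit:
                        break
                cand = prev[cand]
                chain -= 1
        if best_len >= MIN_MATCH:
            tokens.append((best_dist, best_len))
            for p in range(i, i + best_len):
                insert(p)
            i += best_len
        else:
            tokens.append(data[i])
            insert(i)
            i += 1
    return tokens


def lz77_decode(tokens: List[Token]) -> bytes:
    out = bytearray()
    for tok in tokens:
        if isinstance(tok, tuple):
            dist, length = tok
            start = len(out) - dist
            for k in range(length):      # byte by byte, so overlapping copies work
                out.append(out[start + k])
        else:
            out.append(tok)
    return bytes(out)


sample = b"abcabcabcabc hello hello hello " * 40
tokens = lz77_tokens(sample)
print(len(sample), len(tokens))
```

The `max_chain` cap is the main speed/ratio knob: following fewer links finds matches faster but may miss longer ones, which is essentially what zlib's compression levels trade off.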

-

5 points

-

@hooovahh is still weeding out the spam. I think he's in the eastern US time zone, so he's 3 hrs. ahead of me ☺️. Much thanks to him. But I'm also improving the filters. Unfortunately, I think there are some sleeper accounts, created before the changes, that are starting to post. But, yes, I think it's getting much better. BTW, I just discovered that if you Ctrl+right-click a posted image, you can set its size! Neat.

5 points

-

I noticed that this morning. However, I'm adjusting some knobs behind the scenes. There will still be some that get through, and I will be monitoring the forums for the next few weeks to optimize the settings.

5 points

-

5 points

-

With a slightly snarky tone, I want to ask if this is part of NI's 100-year business plan. On a personal level I just hope LabVIEW can stay relevant until retirement. I do still have a perpetual license to 2022 Q3, which supports Windows 11. So even if NI goes away, I'll be able to work in my language of choice until Windows 11 is no longer supported. LabVIEW has changed the way I think about programming in such a way that I think it is hard to go to other languages. My brain thinks in parallel paths and data dependence, not lines of code and single instructions. Whenever I develop in C++ I can't help but feel how linear it is. I'm sure higher level languages are better, but at the same time I don't really want to change. As long as I can work at a place that needs test applications and doesn't care how they are developed, I'll be happy pushing LabVIEW. The fog of the future is hard to see through, though. The next year or two look very uncertain in my career. But looking at the past, working in LabVIEW has felt like winning the lottery. Thinking about this helps me stay positive.

5 points

-

Hello! For a company conference I'm arranging a LabVIEW quiz like the TV show Family Feud, and I would very much appreciate your help gathering response material. If you haven't seen the show, this is how it works in short: before the show, 100 people are asked a bunch of questions, like "Name a fruit". The 100 people might then have answered:

- Apple: 43
- Orange: 22
- Pineapple: 21
- Banana: 14

On the show, the team then has to guess what people answered. If they guess "Apple", they get 43 points, etc. I'm now looking for 100 people (or as many as I can find) and have made a Google Form with 12 questions. The idea is not to think long and hard about the answers but to write the first thing you think of; it might take about 3-5 minutes to answer all of them. If you would take some time to answer the questions, I would very much appreciate it! https://forms.gle/QAnjTETdGGoXkyA79 If anyone is interested, I can share the results later on. Thank you in advance!

5 points

-

5 points

-

As if there were no such personalities on NI forums...

5 points

-

Found the issue. The cRIO and PC were connected via a router which was blocking the port used for firmware updates. NI MAX should display a message, where the "Updated Firmware" button would be, indicating that the port (with its port number) is being blocked.

5 points
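When a router or firewall silently blocks a port like this, a quick TCP reachability test from the PC can confirm it before digging further into NI MAX. A minimal Python sketch follows. The post does not name the port used for firmware updates, so the host and port in the commented example are placeholders, and a successful connection only shows that TCP traffic gets through, not that the firmware update itself will work.

```python
# Minimal TCP reachability check. The firmware-update port is not named in the
# post, so any host/port you pass in is your own placeholder.
import socket


def tcp_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    Refused, unreachable or timed-out connections (for example a router
    dropping the traffic) all return False. Success only proves that TCP
    gets through; it says nothing about the protocol spoken on that port.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # includes ConnectionRefusedError and socket.timeout
        return False


# Example (hypothetical address and port):
# print(tcp_port_open("192.168.1.50", 12345))
```

Running the same check once through the router and once on a direct cable is a cheap way to tell a network block from a problem on the target itself.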

-

5 points

-

5 points

-

I spent a long time online with YouTube support and finally got to the bottom of it. The Channel is back, and all the links work!

4 points

-

4 points

-

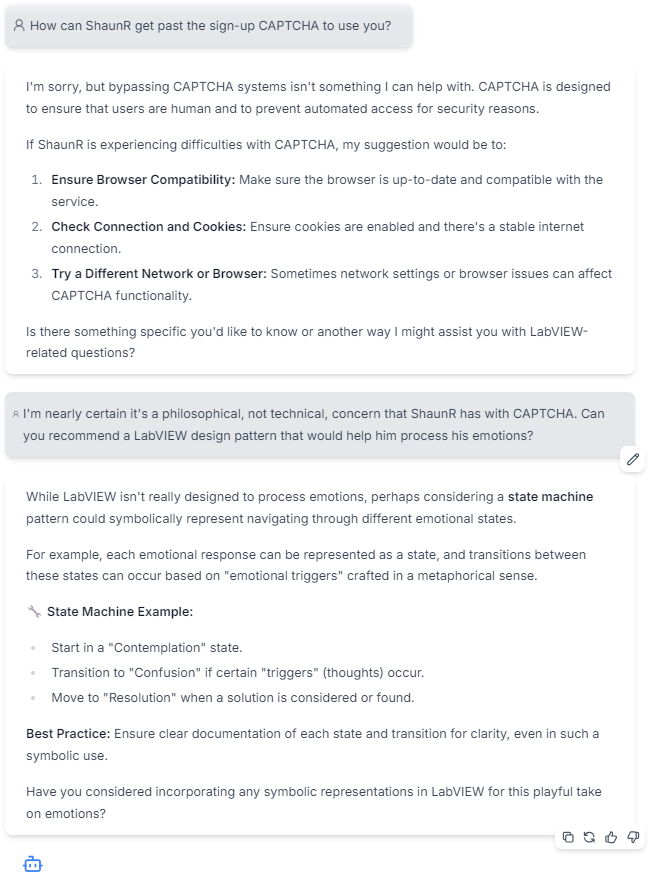

Says the account with "AI" right in the name. Hiding in plain sight! eta: In fact, you can't even pronounce it without saying "AI" - "A I va lee oh tis". Well, I can't, anyway...

4 points

-

I've had to disable all external services used to log in to LAVA, such as Google, Facebook, etc. If you were using these services and now cannot log in, please send an email to s u p p o r t (at) l a v a g (dot) o r g with your login email address, and I will reset your password so you can use the built-in login method. This is a permanent change moving forward. Sorry for the inconvenience.

4 points

-

Anyone else getting their popcorn? I cannot predict the future. And worrying about things I can't control gives me anxiety. So I'm just going to chug along as best as I can. My boss likes the work I do, and I like my job. I'll be mindful of industry changes. But at the moment I am not pivoting away from LabVIEW or NI if I can help it.

4 points

-

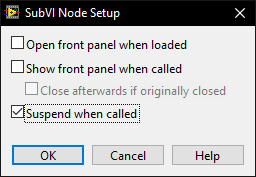

Because I can immediately test the correctness of any of those VIs by pressing run and viewing the indicators. Nope. That's just a generalisation based on your specific workflow. If you have a bug, you may not know which VI it resides in, and bugs can be introduced retrospectively because of changes in scope. Bugs can arise at any time when changes are made, and not just in the VI you changed. If you are not using blackbox testing and relying on unit tests, your software definitely has bugs in it and your customers will find them before you do. Again. That's just your specific workflow. The idea of having "debugging sessions" is anathema to me. I make a change, run it, make a change, run it. That's my workflow: inline testing while coding, along with unit testing at the cycle end. The goal is to have zero failures in unit testing or, to put it another way, unit and blackbox testing is the customer! Unlike most text languages, we have just-in-time compilation; use it. I can do that quantitatively using a front panel, without running unit tests. What's your metric for being happy that a VI works well without a front panel? Passes a unit test? It may be in the codebase for 30 years, but when debugging I may need to use the suspend (see below) to trace another bug through that and many other VIs. There is a setting on subVIs that allows the FP to suspend the execution of a VI and allow modification of the data and run it over and over again while the rest of the system carries on. This is an invaluable feature which requires a front panel. This is simply not true and is a fundamental misunderstanding of how EXEs are compiled. Can't wait for the complaint about the LabVIEW garbage collector. We'll agree to disagree.

4 points

-

Good read here, a bit depressing: https://nihistory.com/nis-commitment-to-labview/

4 points

-

TestStand is a test sequencer, so what you have now isn't even in the same paradigm. In terms of LabVIEW, you have some limited block functionality that could be compared to Express VIs (which we don't use). From what I can tell, it seems to be the Python version of Node-RED (JavaScript). It has a place, but people are very quickly going to be dropped into text coding for anything more than hobbyist applications. Many people on this forum (not me) are also adept Python developers already, and I expect they will weigh in sooner or later. If you are going to target the LabVIEW community, I would suggest you work on your videos. From what I can tell, they are pretty much:

1. Plug in some wires.
2. Magic happens.
3. "Trust me bro, the pretty pictures are because of the magic."

4 points

-

@Rolf Kalbermatter I know you did not mean this, but I love it!

4 points

-

As a workaround, what about using the .NET control's own events?

- Mouse Event over .NET Controls.vi
- MouseDown CB.vi
- MouseMove CB.vi

4 points

-

(Disclaimer: I am not an NI insider, and I have no inside knowledge of the pending Emerson acquisition) I think we're all sort of in a holding pattern waiting to see how the Emerson acquisition plays out. Emerson's outward messaging seems very positive towards LabVIEW, which I find encouraging.

4 points

-

This video may not look like it, but for us it represents an enormous amount of effort, difficulty, sacrifice and financial means. It is with special emotion that we proudly unveil the upcoming major update for HAIBAL, the LabVIEW deep learning toolkit by Graiphic. In a few weeks, we will introduce a significant enhancement to our deep learning toolkit for LabVIEW. This update takes our tool to a new dimension by integrating a range of reinforcement learning algorithms: 𝐃𝐐𝐍, 𝐃𝐃𝐐𝐍, 𝐃𝐮𝐚𝐥 𝐃𝐐𝐍, 𝐃𝐮𝐚𝐥 𝐃𝐃𝐐𝐍, 𝐃𝐏𝐆, 𝐏𝐏𝐎, 𝐀𝟐𝐂, 𝐀𝟑𝐂, 𝐒𝐀𝐂, 𝐃𝐃𝐏𝐆 𝐚𝐧𝐝 𝐓𝐃𝟑. Naturally, this update will include practical, easy-to-use examples such as DOOM, MARIO and Atari games, and many more surprises will come along (StarCraft or not StarCraft?).

👉🏼 Visit us now: www.graiphic.io

👉🏼 Get started with the TIGR vision toolkit: https://lnkd.in/dssB-MS4

👉🏼 Get started with the HAIBAL deep learning toolkit: https://lnkd.in/e6cPn4Fq

4 points

-

Well, the whole NI=>Emerson transaction seems to go as follows:

1. Shareholders of Emerson have approved the deal.
2. Emerson created a wholly owned subsidiary in Delaware called Emersub CXIV, Inc. for the sole purpose of merging with NI.
3. Shareholders of NI approved the merger on June 29, 2023.
4. After all the legalities have been dealt with, National Instruments and Emersub CXIV, Inc. will merge into a new company under the name of National Instruments, and Emersub CXIV, Inc. will cease to exist.

The end result is that National Instruments will most likely be a fully owned subsidiary of Emerson Electric but, for a lot of things, simply keep operating as it has so far. Whether and what technical cross-contamination will eventually happen remains to be seen. You could probably compare it to how National Instruments dealt with Digilent and MCC when it took them over. They both still operate under their own names, serve their specific target audiences, and for a large part were unaffected by the actual change in ownership. There were of course optimizations, such as most of the MCC boards eventually being manufactured and shipped from the same factory that also produces NI hardware. Digilent has also eventually taken over some of the NI products that were mainly meant for the educational market, such as myDAQ, but also the VirtualBench device, which they sell under a different name, but it is 100% the NI VirtualBench device and also works with the same drivers.

4 points

-

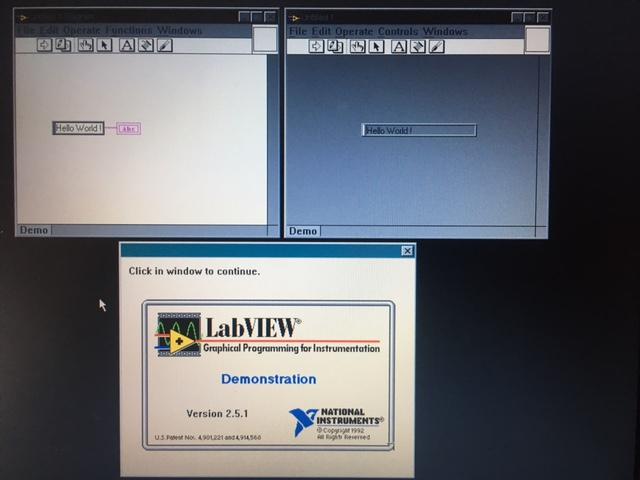

Hi, I found two original floppies with a demo version of LabVIEW 2.5.1 from 1992. I hope NI won't mind me sharing them. They were handouts to prospective buyers of LV back then. LV crashed immediately with a divide-by-zero in WfW 3.11. LV also caused a Win386 error in Win 95, which could be ignored. So here is the glorious screen image. The computer is from 2000. The CPU is a Pentium Pro. Regards

LVD251D1.zip

LVD251D2.zip

4 points

-

4 points

-

They introduced a token for smooth lines:

SmoothLineDrawing=False

4 points

-

They are trying their best to snap up NI at a bargain price, knowing full well that the picture will completely change when LabVIEW 2023 Q4 is released, instantly doubling NI's share price.

4 points

-

Today I told NI that I am not willing to join a subscription program in which NI can increase the prices as they want or shut down my software, and that LabVIEW 2022 will be my last version. I can only encourage all the other guys to do the same and not accept such a pricing model.

4 points

-

Hello, LAVA. My team at SpaceX is looking for LabVIEW developers. We have two job reqs open, one for an entry-level developer and one for a senior developer. Ground Software is the mission control software for all Falcon and Dragon flights. Every screen you see in the image below is running LabVIEW. Our G code takes signals off the vehicles and correlates them for displays across all our mission control centers and for remote viewers at our customer sites and NASA. It's the software flight controllers use to issue commands to the vehicles. This is the software that flies the most profitable rockets in the world, and we're going to be flying a lot next year and in the years to come. If you'd like to get involved with a massively distributed application with some serious network requirements, please apply. You can help us build a global communications platform, support science research, and be one of the stairsteps to Mars.

- Entry level: https://boards.greenhouse.io/spacex/jobs/6436532002?gh_jid=6436532002
- Senior level: https://boards.greenhouse.io/spacex/jobs/6488107002?gh_jid=6488107002

4 points

-

It's probably my limited command of the English language, but for me this sounds about as intelligible as a dissertation about the n-th dimensional entanglement of virtual particles between different universes.

4 points

-

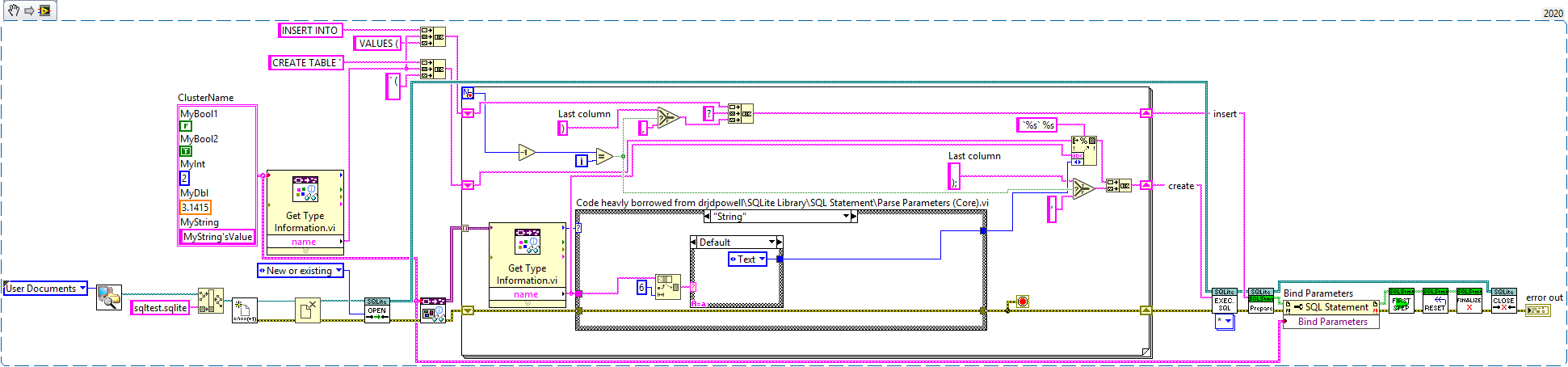

I don't know about you guys, but I hate writing strings. There are just too many ways to mess them up and, in this case, it might just be too tedious. So I wanted to create a way to make SQLite create and insert statements based on a cluster. The type inference code was based on JDP Science's SQLite Library. Thanks!

create and insert from cluster.vi

4 points
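For readers outside LabVIEW, the same idea can be sketched in Python with a dataclass standing in for the cluster: derive the column names and types from the record definition, and emit an INSERT with `?` placeholders so values are bound by the driver rather than spliced into the SQL string. The type mapping and the `Measurement` record are assumptions for illustration only, not what the attached VI or JDP Science's library does.

```python
# Sketch: derive SQLite CREATE/INSERT statements from a record definition.
# A dataclass stands in for the LabVIEW cluster; the type map is an assumption.
import sqlite3
from dataclasses import dataclass, fields
from typing import Any, Tuple

SQLITE_TYPES = {int: "INTEGER", float: "REAL", str: "TEXT", bytes: "BLOB", bool: "INTEGER"}


def create_statement(table: str, record_type) -> str:
    # Identifiers are quoted but not escaped: table/field names are assumed trusted.
    cols = ", ".join(f'"{f.name}" {SQLITE_TYPES[f.type]}' for f in fields(record_type))
    return f'CREATE TABLE IF NOT EXISTS "{table}" ({cols})'


def insert_statement(table: str, record_type) -> str:
    names = [f.name for f in fields(record_type)]
    cols = ", ".join(f'"{n}"' for n in names)
    marks = ", ".join("?" for _ in names)  # values are bound, never formatted in
    return f'INSERT INTO "{table}" ({cols}) VALUES ({marks})'


def row_values(record) -> Tuple[Any, ...]:
    """Field values in declaration order, matching the INSERT column order."""
    return tuple(getattr(record, f.name) for f in fields(record))


@dataclass
class Measurement:  # hypothetical record
    serial: str
    channel: int
    voltage: float


conn = sqlite3.connect(":memory:")
conn.execute(create_statement("measurements", Measurement))
conn.execute(insert_statement("measurements", Measurement),
             row_values(Measurement("SN001", 3, 1.25)))
print(create_statement("measurements", Measurement))
```

Generating both statements from one definition keeps the column order of the CREATE, the INSERT and the bound values in sync automatically, which is exactly the class of string mistakes the post complains about.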

-

4 points
